As Supersymmetry Fails Tests, Physicists Seek New Ideas

As a young theorist in Moscow in 1982, Mikhail Shifman became enthralled with an elegant new theory called supersymmetry that attempted to incorporate the known elementary particles into a more complete inventory of the universe.

“My papers from that time really radiate enthusiasm,” said Shifman, now a 63-year-old professor at the University of Minnesota. Over the decades, he and thousands of other physicists developed the supersymmetry hypothesis, confident that experiments would confirm it. “But nature apparently doesn’t want it,” he said. “At least not in its original simple form.”

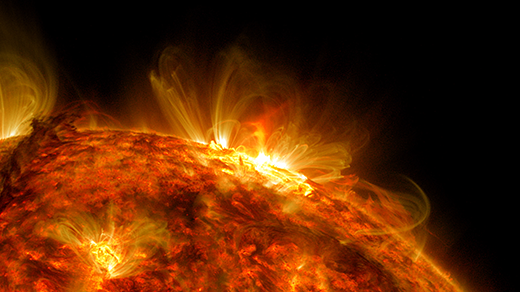

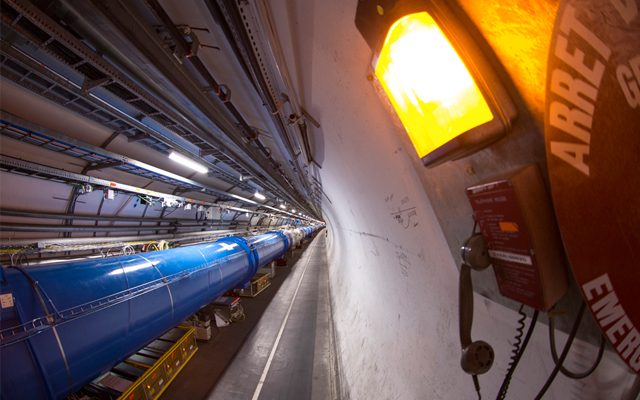

With the world’s largest supercollider unable to find any of the particles the theory says must exist, Shifman is joining a growing chorus of researchers urging their peers to change course.

In an essay posted last month on the physics website arXiv.org, Shifman called on his colleagues to abandon the path of “developing contrived baroque-like aesthetically unappealing modifications” of supersymmetry to get around the fact that more straightforward versions of the theory have failed experimental tests. The time has come, he wrote, to “start thinking and developing new ideas.”

But there is little to build on. So far, no hints of “new physics” beyond the Standard Model — the accepted set of equations describing the known elementary particles — have shown up in experiments at the Large Hadron Collider, operated by the European research laboratory CERN outside Geneva, or anywhere else. (The recently discovered Higgs boson was predicted by the Standard Model.) The latest round of proton-smashing experiments, presented last week at the Hadron Collider Physics conference in Kyoto, Japan, ruled out another broad class of supersymmetry models, as well as other theories of “new physics,” by finding nothing unexpected in the rates of several particle decays.

“Of course, it is disappointing,” Shifman said. “We’re not gods. We’re not prophets. In the absence of some guidance from experimental data, how do you guess something about nature?”

Younger particle physicists now face a tough choice: follow the decades-long trail their mentors blazed, adopting ever more contrived versions of supersymmetry, or strike out on their own, without guidance from any intriguing new data.

“It’s a difficult question that most of us are trying not to answer yet,” said Adam Falkowski, a theoretical particle physicist from the University of Paris-South in Orsay, France, who is currently working at CERN. In a blog post about last week’s results, Falkowski joked that it was time to start applying for jobs in neuroscience.

“There’s no way you can really call it encouraging,” said Stephen Martin, a high-energy particle physicist at Northern Illinois University who works on supersymmetry, or SUSY for short. “I’m certainly not someone who believes SUSY has to be right; I just can’t think of anything better.”

Supersymmetry has dominated the particle physics landscape for decades, to the exclusion of all but a few alternative theories of physics beyond the Standard Model.

“It’s hard to overstate just how much particle physicists of the past 20 to 30 years have invested in SUSY as a hypothesis, so the failure of the idea is going to have major implications for the field,” said Peter Woit, a particle theorist and mathematician at Columbia University.

The theory is alluring for three primary reasons: It predicts the existence of particles that could constitute “dark matter,” an invisible substance that permeates the outskirts of galaxies. It unifies three of the fundamental forces at high energies. And — by far the biggest motivation for studying supersymmetry — it solves a conundrum in physics known as the hierarchy problem.

The problem arises from the disparity between gravity and the weak nuclear force, which is about 100 million trillion trillion (10^32) times stronger and acts at much smaller scales to mediate interactions inside atomic nuclei. The particles that carry the weak force, called W and Z bosons, derive their masses from the Higgs field, a field of energy saturating all space. But it is unclear why the energy of the Higgs field, and therefore the masses of the W and Z bosons, isn’t far greater. Because other particles are intertwined with the Higgs field, their energies should spill into it during events known as quantum fluctuations. This should quickly drive up the energy of the Higgs field, making the W and Z bosons much more massive and rendering the weak nuclear force about as weak as gravity.

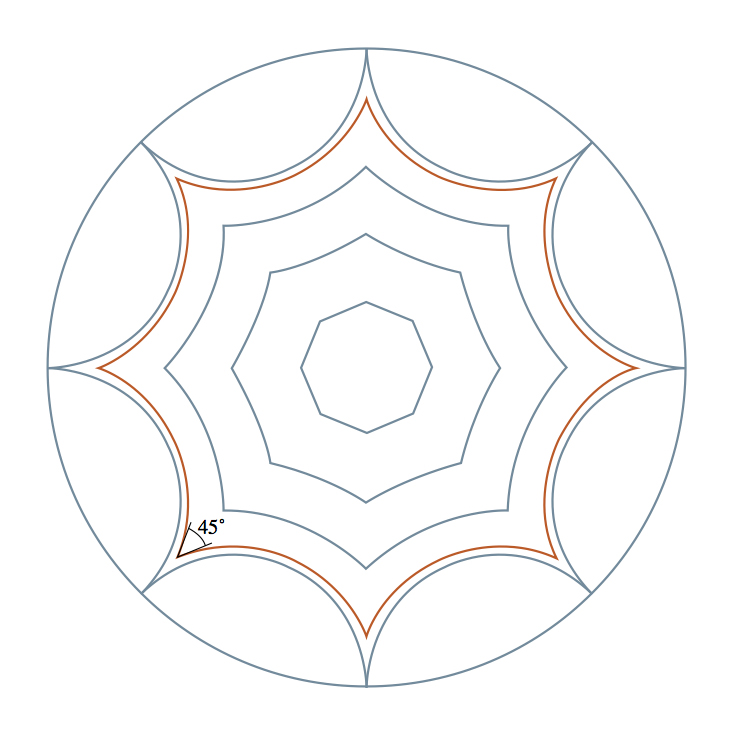

Supersymmetry solves the hierarchy problem by theorizing the existence of a “superpartner” twin for every elementary particle. According to the theory, fermions, which constitute matter, have superpartners that are bosons, which convey forces, and existing bosons have fermion superpartners. Because particles and their superpartners are of opposite types, their energy contributions to the Higgs field have opposite signs: One dials its energy up, the other dials it down. The pair’s contributions cancel out, resulting in no catastrophic effect on the Higgs field. As a bonus, one of the undiscovered superpartners could make up dark matter.
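
For readers who want to see the cancellation written out, here is a textbook-style sketch rather than anything taken from this article. It assumes the "energy contributions" described above correspond to one-loop corrections to the Higgs mass squared, $\Delta m_H^2$. The symbols are illustrative assumptions: $\lambda_f$ is the coupling of a fermion to the Higgs, $\lambda_S$ is the coupling of a scalar (boson) superpartner, and $\Lambda$ is the energy scale up to which the Standard Model is assumed to hold.

$$
\Delta m_H^2\Big|_{\text{fermion}} = -\frac{|\lambda_f|^2}{8\pi^2}\,\Lambda^2 + \dots,
\qquad
\Delta m_H^2\Big|_{\text{scalar}} = +\frac{\lambda_S}{16\pi^2}\,\Lambda^2 + \dots
$$

If each fermion is accompanied by two scalar partners whose couplings satisfy $\lambda_S = |\lambda_f|^2$, as unbroken supersymmetry would require, the dangerous $\Lambda^2$ terms sum to zero:

$$
-\frac{|\lambda_f|^2}{8\pi^2}\,\Lambda^2 + 2\cdot\frac{|\lambda_f|^2}{16\pi^2}\,\Lambda^2 = 0 .
$$

The opposite signs are the "one dials up, the other dials down" behavior described above.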

“Supersymmetry is such a beautiful structure, and in physics, we allow that kind of beauty and aesthetic quality to guide where we think the truth may be,” said Brian Greene, a theoretical physicist at Columbia University.

Over time, as the superpartners have failed to materialize, supersymmetry has grown less beautiful. According to mainstream models, to evade detection, superpartner particles would have to be much heavier than their twins, replacing an exact symmetry with something like a carnival mirror. Physicists have put forward a vast range of ideas for how the symmetry might have broken, spawning myriad versions of supersymmetry.

But the breaking of supersymmetry can pose a new problem. “The heavier you have to make some of the superpartners compared to the existing particles, the more that cancellation of their effects doesn’t quite work,” Martin explained.
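
Martin's point can be illustrated with a rough toy estimate, which is a sketch under stated assumptions and not a calculation from any specific supersymmetry model. It assumes the leftover correction to the Higgs mass squared scales like the superpartner mass squared times a logarithm of a cutoff energy. The function names, the cutoff of 10^16 GeV, the coupling of 1 and the Higgs mass of 125 GeV are all assumptions chosen for illustration.

```python
import math


def residual_correction(m_super_gev, cutoff_gev=1e16, coupling=1.0):
    """Toy leftover correction to the Higgs mass squared, in GeV^2.

    Assumed scaling (illustrative only):
        coupling / (16 pi^2) * m_super^2 * ln(cutoff / m_super)
    """
    log_term = math.log(cutoff_gev / m_super_gev)
    return coupling / (16 * math.pi**2) * m_super_gev**2 * log_term


def tuning_ratio(m_super_gev, higgs_mass_gev=125.0):
    """Ratio of the toy leftover correction to the Higgs mass squared.

    A ratio far above 1 means separate contributions would have to cancel
    to a correspondingly fine degree to leave a light Higgs.
    """
    return residual_correction(m_super_gev) / higgs_mass_gev**2


if __name__ == "__main__":
    # Hypothetical superpartner masses, in GeV.
    for mass in (200.0, 1_000.0, 10_000.0):
        print(f"m_super = {mass:>8.0f} GeV  ->  tuning ratio ~ {tuning_ratio(mass):.3g}")
```

In this toy picture the ratio grows roughly with the square of the superpartner mass, so pushing superpartners out of experimental reach makes the required cancellation correspondingly more delicate.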

Most particle physicists in the 1980s thought they would detect superpartners that are only slightly heavier than the known particles. But the Tevatron, the now-retired particle accelerator at Fermilab in Batavia, Ill., found no such evidence. As the Large Hadron Collider probes ever-higher energies without any sign of supersymmetric particles, some physicists are saying the theory is dead. “I think the LHC was a last gasp,” Woit said.

Today, most of the remaining viable versions of supersymmetry predict superpartners so heavy that they would overpower the effects of their much lighter twins if not for fine-tuned cancellations between the various superpartners. But introducing fine-tuning in order to scale back the damage and solve the hierarchy problem makes some physicists uncomfortable. “This, perhaps, shows that we should take a step back and start thinking anew on the problems for which SUSY-based phenomenology was introduced,” Shifman said.

But some theorists are forging ahead, arguing that, in contrast to the beauty of the original theory, nature could just be an ugly combination of superpartner particles with a soupçon of fine-tuning. “I think it is a mistake to focus on popular versions of supersymmetry,” said Matt Strassler, a particle physicist at Rutgers University. “Popularity contests are not reliable measures of truth.”

In some of the less popular supersymmetry models, the lightest superpartners are not the ones the Large Hadron Collider experiments have looked for. In others, the superpartners are not heavier than existing particles but merely less stable, making them more difficult to detect. These theories will continue to be tested at the Large Hadron Collider after it is upgraded to full operational power in about two years.

If nothing new turns up — an outcome casually referred to as the “nightmare scenario” — physicists will be left with the same holes that riddled their picture of the universe three decades ago, before supersymmetry neatly plugged them. And, without an even higher-energy collider to test alternative ideas, Falkowski says, the field will undergo a slow decay: “The number of jobs in particle physics will steadily decrease, and particle physicists will die out naturally.”

Greene offers a brighter outlook. “Science is this wonderfully self-correcting enterprise,” he said. “Ideas that are wrong get weeded out in time because they are not fruitful or because they are leading us to dead ends. That happens in a wonderfully internal way. People continue to work on what they find fascinating, and science meanders toward truth.”

Note: This article was updated on Nov. 26, 2012, to clarify the role of the weak nuclear force inside atomic nuclei.

This article was reprinted on ScientificAmerican.com.