Time’s (Almost) Reversible Arrow

I.

Few facts of experience are as obvious and pervasive as the distinction between past and future. We remember one, but anticipate the other. If you run a movie backwards, it doesn’t look realistic. We say there is an arrow of time, which points from past to future.

One might expect that a fact as basic as the existence of time’s arrow would be embedded in the fundamental laws of physics. But the opposite is true. If you could take a movie of subatomic events, you’d find that the backward-in-time version looks perfectly reasonable. Or, put more precisely: The fundamental laws of physics — up to some tiny, esoteric exceptions, as we’ll soon discuss — will look to be obeyed, whether we follow the flow of time forward or backward. In the fundamental laws, time’s arrow is reversible.

Quantized

A monthly column in which top researchers explore the process of discovery. This month’s columnist, Frank Wilczek, is a Nobel Prize-winning physicist at the Massachusetts Institute of Technology.

Logically speaking, the transformation that reverses the direction of time might have changed the fundamental laws. Common sense would suggest that it should. But it does not. Physicists use convenient shorthand — also called jargon — to describe that fact. They call the transformation that reverses the arrow of time “time reversal,” or simply T. And they refer to the (approximate) fact that T does not change the fundamental laws as “T invariance,” or “T symmetry.”
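For readers who like to see such things run, here is a minimal sketch of what T invariance means in practice. It is an illustration only, not anything drawn from the physics discussed in this column: it assumes a toy system (a unit mass on a unit spring) and a standard time-symmetric integrator, and the function names are hypothetical. We run the movie forward, apply T by flipping the velocity, run the same law forward again, and find that the system retraces its steps.

```python
# Toy illustration of T invariance (assumed setup: unit mass, unit spring constant).

def acceleration(x):
    """Newton's law for the assumed harmonic oscillator: a = -x."""
    return -x

def velocity_verlet(x, v, dt, steps):
    """Advance position x and velocity v with the time-symmetric velocity Verlet scheme."""
    a = acceleration(x)
    for _ in range(steps):
        x = x + v * dt + 0.5 * a * dt * dt
        a_new = acceleration(x)
        v = v + 0.5 * (a + a_new) * dt
        a = a_new
    return x, v

# Run the "movie" forward from the initial state.
x0, v0 = 1.0, 0.0
x1, v1 = velocity_verlet(x0, v0, dt=0.01, steps=1000)

# Apply T: keep the position, reverse the velocity, then evolve with the SAME law.
x2, v2 = velocity_verlet(x1, -v1, dt=0.01, steps=1000)

# The system returns to its starting position with its velocity reversed,
# up to floating-point rounding.
print(abs(x2 - x0), abs(v2 + v0))
```

The same law of motion governs both halves of the run; nothing in it singles out a direction of time.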

Everyday experience violates T invariance, while the fundamental laws respect it. That blatant mismatch raises challenging questions. How does the actual world, whose fundamental laws respect T symmetry, manage to look so asymmetric? Is it possible that someday we’ll encounter beings with the opposite flow — beings who grow younger as we grow older? Might we, through some physical process, turn around our own body’s arrow of time?

Those are great questions, and I hope to write about them in a future posting. Here, however, I want to consider a complementary question. It arises when we start from the other end, in the facts of common experience. From that perspective, the puzzle is this:

*Why should the fundamental laws have that bizarre and problem-posing property, T invariance?*

The answer we can offer today is incomparably deeper and more sophisticated than that we could offer 50 years ago. Today’s understanding emerged from a brilliant interplay of experimental discovery and theoretical analysis, which yielded several Nobel prizes. Yet our answer still contains a serious loophole. As I’ll explain, closing that loophole may well lead us, as an unexpected bonus, to identify the cosmological “dark matter.”

II.

The modern history of T invariance begins in 1956. In that year, T. D. Lee and C. N. Yang questioned a different but related feature of physical law, which until then had been taken for granted. Lee and Yang were not concerned with T itself, but with its spatial analogue, the parity transformation, “P.” Whereas T involves looking at movies run backward in time, P involves looking at movies reflected in a mirror. Parity invariance is the hypothesis that the events you see in the reflected movies follow the same laws as the originals. Lee and Yang identified circumstantial evidence against that hypothesis and suggested critical experiments to test it. Within a few months, experiments proved that P invariance fails in many circumstances. (P invariance holds for gravitational, electromagnetic and strong interactions, but generally fails in the so-called weak interactions.)

Those dramatic developments around P (non)invariance stimulated physicists to question T invariance, a kindred assumption they had also once taken for granted. But the hypothesis of T invariance survived close scrutiny for several years. It was only in 1964 that a group led by James Cronin and Val Fitch discovered a peculiar, tiny effect in the decays of K mesons that violates T invariance.

III.

The wisdom of Joni Mitchell’s insight, that “you don’t know what you’ve got ’til it’s gone,” was proven in the aftermath.

If, like small children, we keep asking, “Why?” we may get deeper answers for a while, but eventually we will hit bottom, when we arrive at a truth that we can’t explain in terms of anything simpler. At that point we must call a halt, in effect declaring victory: “That’s just the way it is.” But if we later find exceptions to our supposed truth, that answer will no longer do. We will have to keep going.

As long as T invariance appeared to be a universal truth, it wasn’t clear that our italicized question was a useful one. Why was the universe T invariant? It just was. But after Cronin and Fitch, the mystery of T invariance could not be avoided.

Many theoretical physicists struggled with the vexing challenge of understanding how T invariance could be extremely accurate, yet not quite exact. Here the work of Makoto Kobayashi and Toshihide Maskawa proved decisive. In 1973, they proposed that approximate T invariance is an accidental consequence of other, more-profound principles.

The time was ripe. Not long before, the outlines of the modern Standard Model of particle physics had emerged and with it a new level of clarity about fundamental interactions. By 1973 there was a powerful — and empirically successful! — theoretical framework, based on a few “sacred principles.” Those principles are relativity, quantum mechanics and a mathematical rule of uniformity called “gauge symmetry.”

It turns out to be quite challenging to get all those ideas to cooperate. Together, they greatly constrain the possibilities for basic interactions.

Kobayashi and Maskawa, in a few brief paragraphs, did two things. First, they showed that if physics were restricted to the particles then known (for experts: if there were just two families of quarks and leptons), then all the interactions allowed by the sacred principles also respect T invariance. If Cronin and Fitch had never made their discovery, that result would have been an unalloyed triumph. But they had, so Kobayashi and Maskawa went a crucial step further. They showed that if one introduces a very specific set of new particles (a third family), then those particles bring in new interactions that lead to a tiny violation of T invariance. It looked, on the face of it, to be just what the doctor ordered.

In subsequent years, their brilliant piece of theoretical detective work was fully vindicated. The new particles whose existence Kobayashi and Maskawa inferred have all been observed, and their interactions are just what Kobayashi and Maskawa proposed they should be.

Before ending this section, I’d like to add a philosophical coda. Are the sacred principles really sacred? Of course not. If experiments force scientists to modify those principles, they will do so. But at the moment, the sacred principles look awfully good. And evidently it’s been fruitful to take them very seriously indeed.

IV.

So far I’ve told a story of triumph. Our italicized question, one of the most striking puzzles about how the world works, has received an answer that is deep, beautiful and fruitful.

But there’s a worm in the rose.

A few years after Kobayashi and Maskawa’s work, Gerard ’t Hooft discovered a loophole in their explanation of T invariance. The sacred principles allow an additional kind of interaction. The possible new interaction is quite subtle, and ’t Hooft’s discovery was a big surprise to most theoretical physicists.

The new interaction, were it present with substantial strength, would violate T invariance in ways that are much more obvious than the effect that Cronin, Fitch and their colleagues discovered. Specifically, it would allow the spin of a neutron to generate an electric field, in addition to the magnetic field it is observed to cause. (The magnetic field of a spinning neutron is broadly analogous to that of our rotating Earth, though of course on an entirely different scale.) Experimenters have looked hard for such electric fields, but so far they’ve come up empty.
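For experts, the reason such an electric field would signal T violation can be sketched in one line. The notation here is my own shorthand rather than anything taken from the experiments: μ stands for the neutron's magnetic moment, d for a hypothetical electric dipole moment, and Ŝ for the direction of its spin. Time reversal flips the spin and the magnetic field but leaves the electric field alone.

```latex
H = -\mu\, \mathbf{B}\cdot\hat{\mathbf{S}} \;-\; d\, \mathbf{E}\cdot\hat{\mathbf{S}},
\qquad
T:\ \hat{\mathbf{S}} \to -\hat{\mathbf{S}},\quad
\mathbf{B} \to -\mathbf{B},\quad
\mathbf{E} \to +\mathbf{E}
\;\Longrightarrow\;
\mathbf{B}\cdot\hat{\mathbf{S}} \to +\mathbf{B}\cdot\hat{\mathbf{S}},\quad
\mathbf{E}\cdot\hat{\mathbf{S}} \to -\mathbf{E}\cdot\hat{\mathbf{S}}
```

The magnetic term looks the same in the backward-running movie, while the electric term changes sign. A nonzero d would therefore distinguish the two directions of time.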

Nature does not choose to exploit ’t Hooft’s loophole. That is her prerogative, of course, but it raises our italicized question anew: Why does Nature enforce T invariance so accurately?

Several explanations have been put forward, but only one has stood the test of time. The central idea is due to Roberto Peccei and Helen Quinn. Their proposal, like that of Kobayashi and Maskawa, involves expanding the Standard Model in a fairly specific way. One introduces a neutralizing field, whose behavior is especially sensitive to ’t Hooft’s new interaction. Indeed, if that new interaction is present, then the neutralizing field will adjust its own value, so as to cancel that interaction’s influence. (This adjustment process is broadly similar to how negatively charged electrons in a solid will congregate around a positively charged impurity and thereby screen its influence.) The neutralizing field thereby closes our loophole.

Peccei and Quinn overlooked an important, testable consequence of their idea. The particles produced by their neutralizing field — its quanta — are predicted to have remarkable properties. Since they didn’t take note of these particles, they also didn’t name them. That gave me an opportunity to fulfill a dream of my adolescence.

A few years before, a supermarket display of brightly colored boxes of a laundry detergent named Axion had caught my eye. It occurred to me that “axion” sounded like the name of a particle and really ought to be one. So when I noticed a new particle that “cleaned up” a problem with an “axial” current, I saw my chance. (I soon learned that Steven Weinberg had also noticed this particle, independently. He had been calling it the “Higglet.” He graciously, and I think wisely, agreed to abandon that name.) Thus began a saga whose conclusion remains to be written.

In the chronicles of the Particle Data Group you will find several pages, covering dozens of experiments, describing unsuccessful axion searches.

Yet there are grounds for optimism.

The theory of axions predicts, in a general way, that axions should be very light, very long-lived particles whose interactions with ordinary matter are very feeble. But to compare theory and experiment we need to be quantitative. And here we meet ambiguity, because existing theory does not fix the value of the axion’s mass. If we know the axion’s mass we can predict all its other properties. But the mass itself can vary over a wide range. (The same basic problem arose for the charmed quark, the Higgs particle, the top quark and several others. Before each of those particles was discovered, theory predicted all of its properties except for the value of its mass.) It turns out that the strength of the axion’s interactions is proportional to its mass. So as the assumed value for axion mass decreases, the axion becomes more elusive.

In the early days physicists focused on models in which the axion is closely related to the Higgs particle. Those ideas suggested that the axion mass should be about 10 keV — that is, about one-fiftieth of an electron’s mass. Most of the experiments I alluded to earlier searched for axions of that character. By now we can be confident such axions don’t exist.
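The “one-fiftieth” figure is a quick check, assuming the standard value of about 511 keV for the electron’s rest energy (a number not quoted above) and writing m_a and m_e for the axion and electron masses:

```latex
\frac{m_a}{m_e} \approx \frac{10\ \text{keV}}{511\ \text{keV}} \approx \frac{1}{51}
```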

Attention turned, therefore, toward much smaller values of the axion mass (and in consequence feebler couplings), which are not excluded by experiment. Axions of this sort arise very naturally in models that unify the interactions of the Standard Model. They also arise in string theory.

Axions, we calculate, should have been abundantly produced during the earliest moments of the Big Bang. If axions exist at all, then an axion fluid will pervade the universe. The origin of the axion fluid is very roughly similar to the origin of the famous cosmic microwave background (CMB) radiation, but there are three major differences between those two entities. First: The microwave background has been observed, while the axion fluid is still hypothetical. Second: Because axions have mass, their fluid contributes significantly to the overall mass density of the universe. In fact, we calculate that they contribute roughly the amount of mass astronomers have identified as dark matter! Third: Because axions interact so feebly, they are much more difficult to observe than photons from the CMB.

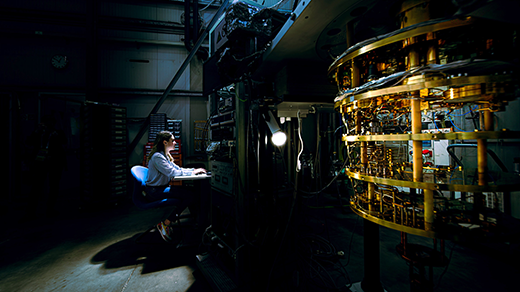

The experimental search for axions continues on several fronts. Two of the most promising experiments are aimed at detecting the axion fluid. One of them, ADMX (Axion Dark Matter eXperiment), uses specially crafted, ultrasensitive antennas to convert background axions into electromagnetic pulses. The other, CASPEr (Cosmic Axion Spin Precession Experiment), looks for tiny wiggles in the motion of nuclear spins, which would be induced by the axion fluid. Between them, these difficult experiments promise to cover almost the entire range of possible axion masses.

Do axions exist? We still don’t know for sure. Their existence would bring the story of time’s reversible arrow to a dramatic, satisfying conclusion, and very possibly solve the riddle of the dark matter, to boot. The game is afoot.

This article was reprinted on Wired.com.