Mathematicians Resurrect Hilbert’s 13th Problem

Ricardo Bessa for Quanta Magazine

Introduction

Success is rare in math. Just ask Benson Farb.

“The hard part about math is that you’re failing 90% of the time, and you have to be the kind of person who can fail 90% of the time,” Farb once said at a dinner party. When another guest, also a mathematician, expressed amazement that he succeeded 10% of the time, he quickly admitted, “No, no, no, I was exaggerating my success rate. Greatly.”

Farb, a topologist at the University of Chicago, couldn’t be happier about his latest failure — though, to be fair, it isn’t his alone. It revolves around a problem that, curiously, is both solved and unsolved, closed and open.

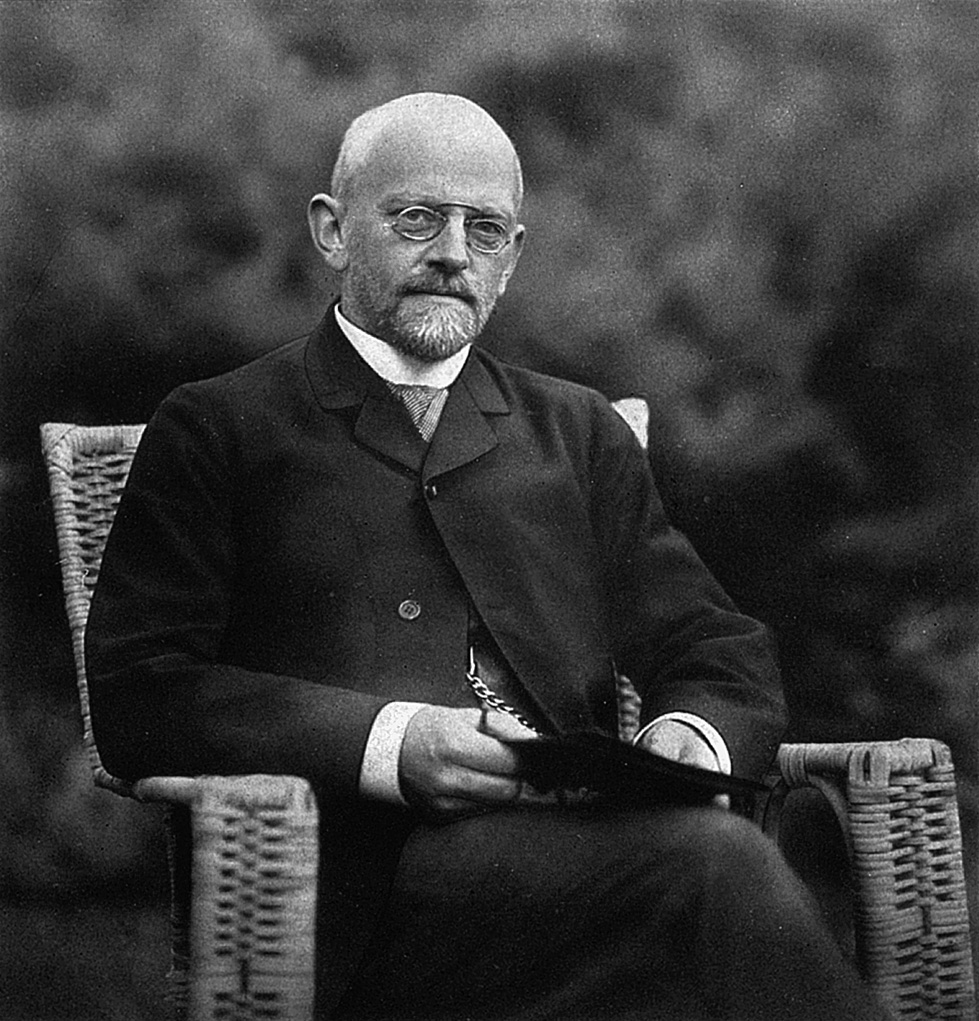

The problem was the 13th of 23 then-unsolved math problems that the German mathematician David Hilbert, at the turn of the 20th century, predicted would shape the future of the field. The problem asks a question about solving seventh-degree polynomial equations. The term “polynomial” means a string of mathematical terms — each composed of numerical coefficients and variables raised to powers — connected by means of addition and subtraction. “Seventh-degree” means that the largest exponent in the string is 7.

Mathematicians already have slick and efficient recipes for solving equations of second, third, and to an extent fourth degree. These formulas — like the familiar quadratic formula for degree 2 — involve algebraic operations, meaning only arithmetic and radicals (square roots, for example). But the higher the exponent, the thornier the equation becomes, and solving it approaches impossibility. Hilbert’s 13th problem asks whether seventh-degree equations can be solved using a composition of addition, subtraction, multiplication and division plus algebraic functions of two variables, tops.
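For concreteness, Hilbert's question is usually stated for a reduced form of the seventh-degree equation, since algebraic substitutions can bring a general seventh-degree equation down to three free coefficients. The display below is a sketch of that standard statement; the coefficient names a, b and c are labels chosen here for illustration.

```latex
\[
  x^7 + a x^3 + b x^2 + c x + 1 = 0
\]
```

The question is then whether a root x, viewed as a function of the three coefficients (a, b, c), can be built by composing finitely many algebraic functions that each take at most two inputs.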

The answer is probably no. But to Farb, the question is not just about solving a complicated type of algebraic equation. Hilbert’s 13th is one of the most fundamental open problems in math, he said, because it provokes deep questions: How complicated are polynomials, and how do we measure that? “A huge swath of modern mathematics was invented in order to understand the roots of polynomials,” Farb said.

In 1900, David Hilbert presented a list of 23 important open problems. The 13th is, in a sense, both solved and unsolved.

University of Göttingen

The problem has led him and the mathematician Jesse Wolfson at the University of California, Irvine, into a mathematical rabbit hole whose tunnels they’re still exploring. They’ve also drafted Mark Kisin, a number theorist at Harvard University and an old friend of Farb’s, to help them excavate.

They still haven’t solved Hilbert’s 13th problem and probably aren’t even close, Farb admitted. But they have unearthed mathematical strategies that had practically disappeared, and they have explored connections between the problem and a variety of fields including complex analysis, topology, number theory, representation theory and algebraic geometry. In doing so, they’ve made inroads of their own, especially in connecting polynomials to geometry and narrowing the field of possible answers to Hilbert’s question. Their work also suggests a way to classify polynomials using metrics of complexity — analogous to the complexity classes associated with the unsolved P vs. NP problem.

“They’ve really managed to extract from the question a more interesting version” than ones previously studied, said Daniel Litt, a mathematician at the University of Georgia. “They’re making the mathematics community aware of many natural and interesting questions.”

Open and Shut, and Open Again

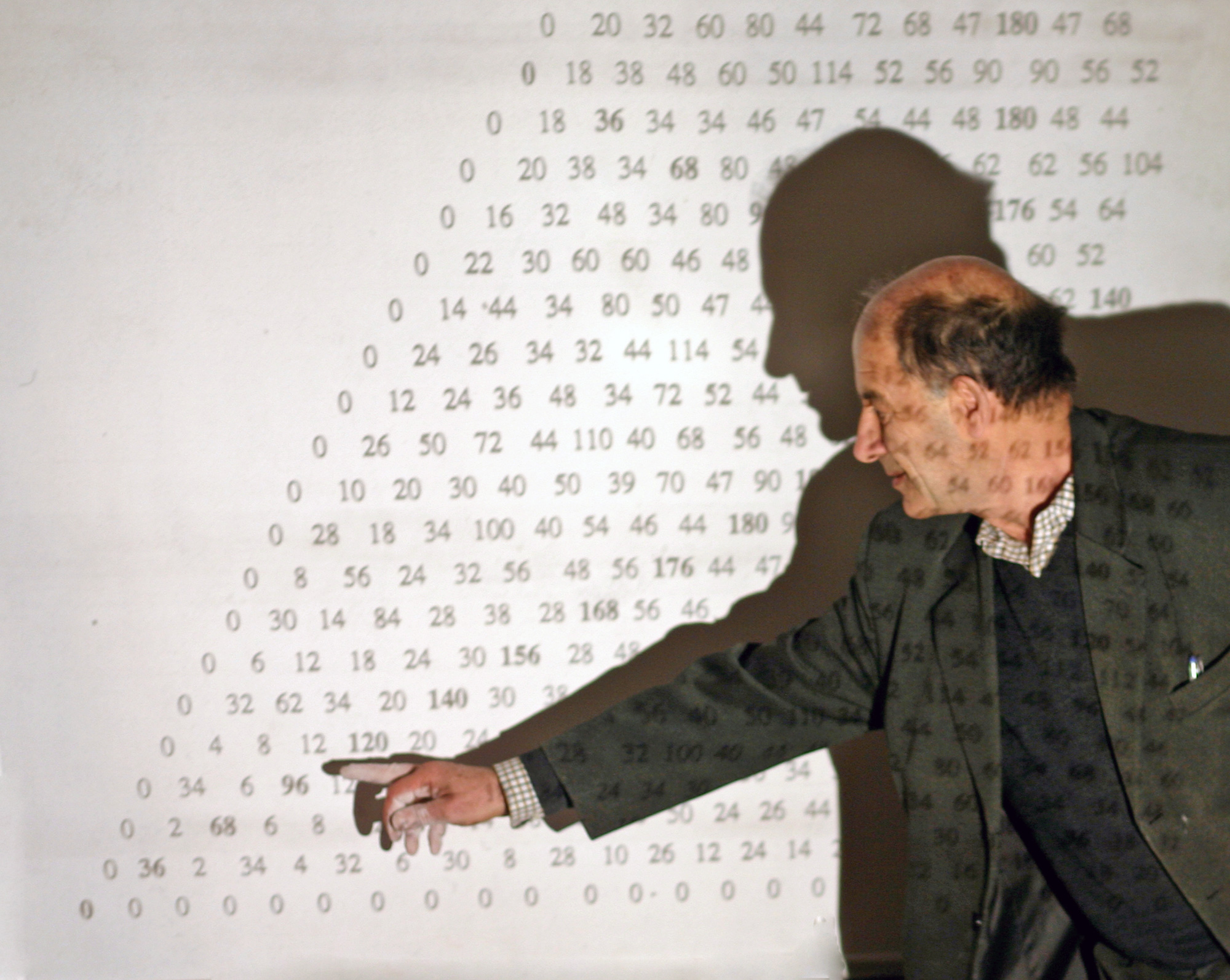

Many mathematicians already thought the problem was solved. That’s because a Soviet prodigy named Vladimir Arnold and his mentor, Andrey Nikolyevich Kolmogorov, published proofs of it in the late 1950s. For most mathematicians, the Arnold-Kolmogorov work closed the book. Even Wikipedia — not a definitive source, but a reasonable proxy for public knowledge — until recently declared the case closed.

While Vladimir Arnold and his mentor Andrey Nikolyevich Kolmogorov proved a version of Hilbert’s 13th problem in the 1950s, it likely wasn’t the variant Hilbert was interested in.

But five years ago, Farb came across a few tantalizing lines in an essay by Arnold, in which the famous mathematician reflected on his work and career. Farb was surprised to see that Arnold described Hilbert’s 13th problem as open and had actually spent four decades trying to solve the problem that he’d supposedly already conquered.

“There are all these papers that would just literally repeat that it was solved. They clearly had no understanding of the actual problem,” Farb said. He was already working with Wolfson, then a postdoctoral researcher, on a topology project, and when he shared what he’d found in Arnold’s paper, Wolfson jumped in. In 2017, during a seminar celebrating Farb’s 50th birthday, Kisin listened to Wolfson’s talk and realized with surprise that their ideas about polynomials were related to questions in his own work in number theory. He joined the collaboration.

The reason for the confusion about the problem soon became clear: Kolmogorov and Arnold had solved only a variant of the problem. Their solution involved what mathematicians call continuous functions, which are functions whose graphs have no abrupt jumps or breaks. They include familiar functions like sine, cosine and the exponential function, as well as more exotic ones.

But researchers disagree on whether Hilbert was interested in this approach. “Many mathematicians believe that Hilbert really meant algebraic functions, not continuous functions,” said Zinovy Reichstein, a mathematician at the University of British Columbia. Farb and Wolfson have been working on the problem they believe Hilbert intended ever since their discovery.

Hilbert’s 13th, Farb said, is a kaleidoscope. “You open this thing up, and the more you put into it, the more new directions and ideas you get,” he said. “It cracks open the door to a whole array, this whole beautiful web of math.”

The Roots of the Matter

Mathematicians have been probing polynomials for as long as math has been around. Clay tablets inscribed more than 3,000 years ago show that ancient Babylonian mathematicians used a formula to solve polynomials of second degree, a cuneiform forebear of the same quadratic formula that algebra students learn today. That formula, $latex{x=\frac{-b \pm \sqrt{b^2 - 4ac}}{2a}}$, tells you how to find the roots, or the values of x that make an expression equal to zero, of the second-degree polynomial $latex{ax^2 + bx + c}$.
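As a small illustration, the quadratic formula translates almost directly into code. The sketch below is one possible rendering, not a canonical implementation; the function name `quadratic_roots` and the sample coefficients are chosen here for the example.

```python
import cmath


def quadratic_roots(a, b, c):
    """Return both roots of a*x**2 + b*x + c = 0 via the quadratic formula."""
    if a == 0:
        raise ValueError("a must be nonzero for a second-degree polynomial")
    # cmath.sqrt returns a complex number, so a negative discriminant is fine.
    sqrt_disc = cmath.sqrt(b * b - 4 * a * c)
    return (-b + sqrt_disc) / (2 * a), (-b - sqrt_disc) / (2 * a)


# Example: x**2 - 5x + 6 = (x - 3)(x - 2)
r1, r2 = quadratic_roots(1, -5, 6)
print(r1, r2)  # (3+0j) (2+0j)
```

Using `cmath` rather than `math` is a deliberate choice here: it lets the same three lines handle polynomials such as x² + 1, whose roots are not real.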

Over time, mathematicians naturally wondered if such clean formulas existed for higher-degree polynomials. “The multi-millennial history of this problem is to get back to something that powerful and simple and effective,” said Wolfson.

The higher polynomials grow in degree, the more unwieldy they become. In his 1545 book Ars Magna, the Italian polymath Gerolamo Cardano published formulas for finding the roots of cubic (third-degree) and quartic (fourth-degree) polynomials.

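The roots of a cubic polynomial written $latex{ax^3 + bx^2 + cx + d = 0}$ can be found using this formula:

$latex{x = \sqrt[3]{\left(\frac{-b^3}{27a^3} + \frac{bc}{6a^2} - \frac{d}{2a}\right) + \sqrt{\left(\frac{-b^3}{27a^3} + \frac{bc}{6a^2} - \frac{d}{2a}\right)^2 + \left(\frac{c}{3a} - \frac{b^2}{9a^2}\right)^3}} + \sqrt[3]{\left(\frac{-b^3}{27a^3} + \frac{bc}{6a^2} - \frac{d}{2a}\right) - \sqrt{\left(\frac{-b^3}{27a^3} + \frac{bc}{6a^2} - \frac{d}{2a}\right)^2 + \left(\frac{c}{3a} - \frac{b^2}{9a^2}\right)^3}} - \frac{b}{3a}}$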

The quartic formula is even worse.
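To give a feel for how much more work the cubic takes, here is a rough numerical sketch of Cardano's method. It is deliberately limited: it returns a single real root and only handles the case where the quantity under the square root is non-negative (otherwise complex cube roots are needed). The helper names `real_cbrt` and `cardano_real_root` and the intermediate quantities `p` and `q` are introduced here for illustration.

```python
import math


def real_cbrt(v):
    """Real cube root that also works for negative inputs."""
    return math.copysign(abs(v) ** (1.0 / 3.0), v)


def cardano_real_root(a, b, c, d):
    """One real root of a*x**3 + b*x**2 + c*x + d = 0 (a != 0).

    Only covers the case where the quantity under the square root is
    non-negative; otherwise complex cube roots would be required.
    """
    if a == 0:
        raise ValueError("a must be nonzero for a cubic")
    # Substituting x = t - b/(3a) gives the depressed cubic t**3 + p*t + q = 0.
    p = (3 * a * c - b * b) / (3 * a * a)
    q = (2 * b**3 - 9 * a * b * c + 27 * a * a * d) / (27 * a**3)
    under_root = (q / 2) ** 2 + (p / 3) ** 3
    if under_root < 0:
        raise ValueError("three real roots: this sketch does not handle that case")
    s = math.sqrt(under_root)
    t = real_cbrt(-q / 2 + s) + real_cbrt(-q / 2 - s)
    return t - b / (3 * a)


# Example: x**3 + 6x - 20 = 0 has the real root x = 2.
root = cardano_real_root(1, 0, 6, -20)
print(root)  # approximately 2, up to floating-point rounding
```

Even this restricted version needs a change of variable, a square root and two cube roots, which is the growth in complexity the text describes.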

“As they go up in degree, they go up in complexity; they form a tower of complexities,” said Curt McMullen of Harvard. “How can we capture that tower of complexities?”

The Italian mathematician Paolo Ruffini argued in 1799 that polynomials of degree 5 or higher couldn’t be solved using arithmetic and radicals; the Norwegian Niels Henrik Abel proved it in 1824. In other words, there can be no similar “quintic formula.” Fortunately, other ideas emerged that suggested ways forward for higher-degree polynomials, which could be simplified through substitution. For example, in 1786, a Swedish lawyer named Erland Bring showed that any quintic polynomial equation of the form $latex{ax^5 + bx^4 + cx^3 + dx^2 + ex + f = 0}$ could be retooled as $latex{px^5 + qx + 1 = 0}$ (where p and q are complex numbers determined by a, b, c, d, e and f). This pointed to new ways of approaching the inherent but hidden rules of polynomials.
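The simplest instance of simplification by substitution is a shift of the variable that removes the fourth-power term. It is shown here only as an illustration of the idea; Bring's actual reduction relies on more elaborate substitutions. The new variable y is a label introduced for this example.

```latex
\[
  x = y - \frac{b}{5a}
  \quad\Longrightarrow\quad
  a x^5 + b x^4 + \cdots
  = a y^5 + \Bigl(5a \cdot \bigl(-\tfrac{b}{5a}\bigr) + b\Bigr) y^4 + \cdots
  = a y^5 + 0 \cdot y^4 + \cdots
\]
```

Only the two highest-degree terms of the original polynomial contribute to the coefficient of y⁴, and the shift is chosen so that their contributions cancel.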

In the 19th century, William Rowan Hamilton picked up where Bring and others had left off. He showed, among other things, that to find the roots of any sixth-degree polynomial equation, you only need the usual arithmetic operations, some square and cube roots, and an algebraic formula that depends on only two parameters.

In 1975, the American algebraist Richard Brauer at Harvard introduced the idea of “resolvent degree,” which describes the smallest number of parameters needed in the formulas that solve polynomials of a given degree. (Less than a year later, Arnold and Japanese number theorist Goro Shimura introduced nearly the same definition in another paper.)

In Brauer’s framework, which represented the first attempt to codify the rules of such substitutions, Hilbert’s 13th problem asks whether seventh-degree polynomials can have a resolvent degree of less than 3; Hilbert later made similar conjectures about sixth- and eighth-degree polynomials.

But these questions also invoke a broader one: What’s the smallest number of parameters you need to find the roots of any polynomial? How low can you go?
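Writing RD(n) for the resolvent degree of the general polynomial of degree n (a shorthand assumed here for compactness), the statements in this section can be summarized roughly as follows.

```latex
\[
  \mathrm{RD}(6) \le 2 \quad \text{(Hamilton)},
  \qquad
  \text{Hilbert's 13th problem: is } \mathrm{RD}(7) < 3\,?
\]
```

The expected answer to the second question, as noted earlier, is no.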

Thinking Visually

A natural way to approach this question is to think about what polynomials look like. A polynomial can be written as a function — $latex{f(x)=x^2 -3x + 1}$, for example — and that function can be graphed. Then finding the roots becomes a matter of recognizing that where the function has value 0, the curve crosses the x-axis.
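The graphical picture suggests a simple numerical procedure: look for an interval where the function changes sign and keep halving it until the crossing point is pinned down. The sketch below applies this bisection idea to the example polynomial; the function name `bisect_root`, the tolerance and the starting intervals are choices made here for illustration.

```python
def f(x):
    """The example polynomial f(x) = x^2 - 3x + 1."""
    return x * x - 3 * x + 1


def bisect_root(func, lo, hi, tol=1e-12):
    """Find a root of func in [lo, hi], assuming func changes sign there."""
    f_lo, f_hi = func(lo), func(hi)
    if f_lo * f_hi > 0:
        raise ValueError("func must have opposite signs at lo and hi")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        f_mid = func(mid)
        if f_lo * f_mid <= 0:
            hi = mid  # the crossing lies in the left half
        else:
            lo, f_lo = mid, f_mid  # the crossing lies in the right half
    return (lo + hi) / 2


left = bisect_root(f, 0.0, 1.0)   # f(0) = 1 > 0 and f(1) = -1 < 0
right = bisect_root(f, 2.0, 3.0)  # f(2) = -1 < 0 and f(3) = 1 > 0
print(left, right)  # close to (3 - sqrt(5))/2 and (3 + sqrt(5))/2
```

Unlike a closed formula, this approach only approximates the roots, but it works for any continuous function once a sign change has been located.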

Higher-degree polynomials give rise to more complicated figures. Third-degree polynomial functions with three variables, for example, produce smooth but twisty surfaces embedded in three dimensions. And again, by knowing where to look on these figures, mathematicians can learn more about their underlying polynomial structure.

As a result, many efforts to understand polynomials borrow from algebraic geometry and topology, mathematical fields that focus on what happens when shapes and figures are projected, deformed, squashed, stretched or otherwise transformed without breaking. “Henri Poincaré basically invented the field of topology, and he explicitly said he was doing it in order to understand algebraic functions,” said Farb. “At the time, people were really wrestling with these fundamental connections.”

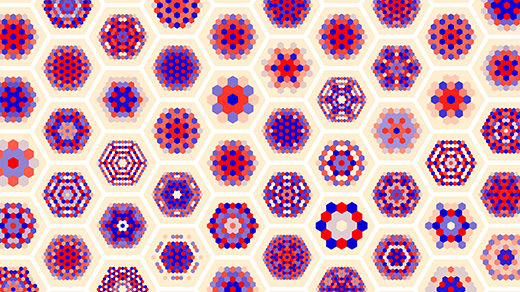

Hilbert himself unearthed a particularly remarkable connection by applying geometry to the problem. By the time he enumerated his problems in 1900, mathematicians had a vast array of tricks to reduce polynomials, but they still couldn’t make progress. In 1927, however, Hilbert described a new trick. He began by identifying all the possible ways to simplify ninth-degree polynomials, and he found within them a family of special cubic surfaces.

Hilbert already knew that every smooth cubic surface — a twisty shape defined by third-degree polynomials — contains exactly 27 straight lines, no matter how tangled it appears. (Those lines shift as the coefficients of the polynomials change.) He realized that if he knew one of those lines, he could simplify the ninth-degree polynomial to find its roots. The formula required only four parameters; in modern terms, that means the resolvent degree is at most 4.
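The 27 lines can be made concrete on one particular surface. The sketch below uses the Fermat cubic x³ + y³ + z³ + w³ = 0, which is an example chosen here and not the family Hilbert worked with, and it works over the complex numbers in homogeneous coordinates. It writes down 27 lines explicitly and checks numerically that sample points on each one satisfy the equation. It counts labels rather than proving the lines are distinct, and all names (`OMEGA`, `PAIRINGS`, `point_on_line`) are hypothetical.

```python
import cmath
import itertools
import random

# Assumption for illustration: the Fermat cubic x^3 + y^3 + z^3 + w^3 = 0,
# a smooth cubic surface whose lines can be written down explicitly.
OMEGA = cmath.exp(2j * cmath.pi / 3)  # primitive cube root of unity


def fermat(point):
    """Evaluate x^3 + y^3 + z^3 + w^3 at a point with four coordinates."""
    return sum(coord ** 3 for coord in point)


# Split the four coordinates into two pairs (3 ways). Within a pair (u, v),
# setting v = -OMEGA**k * u forces u^3 + v^3 = 0 (3 choices of k per pair).
PAIRINGS = [((0, 1), (2, 3)), ((0, 2), (1, 3)), ((0, 3), (1, 2))]


def point_on_line(pairing, i, j, s, t):
    """Point with parameters (s, t) on the line labeled (pairing, i, j)."""
    (p0, p1), (q0, q1) = pairing
    point = [0j] * 4
    point[p0], point[p1] = s, -(OMEGA ** i) * s
    point[q0], point[q1] = t, -(OMEGA ** j) * t
    return point


lines = list(itertools.product(PAIRINGS, range(3), range(3)))
random.seed(0)
for pairing, i, j in lines:
    for _ in range(5):
        s = complex(random.uniform(-1, 1), random.uniform(-1, 1))
        t = complex(random.uniform(-1, 1), random.uniform(-1, 1))
        # Every sampled point on the line should satisfy the cubic equation.
        assert abs(fermat(point_on_line(pairing, i, j, s, t))) < 1e-9

print(len(lines))  # 3 pairings * 3 * 3 = 27
```

On a general smooth cubic surface the lines have no such tidy description, which is part of why knowing even one of them is valuable.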

“Hilbert’s amazing insight was that this miracle of geometry — from a completely different world — could be leveraged to reduce the [resolvent degree] to 4,” Farb said.

Toward a Web of Connections

As Kisin helped Farb and Wolfson connect the dots, they realized that the widespread assumption that Hilbert’s 13th was solved had essentially closed off interest in a geometric approach to resolvent degree. In January 2020, Wolfson published a paper reviving the idea by extending Hilbert’s geometric work on ninth-degree polynomials to a more general theory.

Hilbert had focused on cubic surfaces to solve ninth-degree polynomials in one variable. But what about higher-degree polynomials? To solve those in a similar way, Wolfson thought, you could replace that cubic surface with some higher-dimensional “hypersurface” formed by those higher-degree polynomials in many variables. The geometry of these is less well understood, but in the last few decades mathematicians have been able to prove that, in some cases, such hypersurfaces always contain lines.

Every smooth cubic surface, no matter how twisty or furled, contains exactly 27 straight lines. Hilbert used this fact of geometry to construct a formula for the roots of a ninth-degree polynomial. Jesse Wolfson has taken this idea further, using lines on higher-dimensional “hypersurfaces” to create formulas for more complicated polynomials.

Hilbert’s idea of using a line on a cubic surface to solve a ninth-degree polynomial can be extended to lines on these higher-dimensional hypersurfaces. Wolfson used this method to find new, simpler formulas for polynomials of certain degrees. That means that even if you can’t visualize it, you can solve a degree-100 polynomial “simply” by finding a plane on a multidimensional cubic hypersurface (47 dimensions, in this case).

With this new method, Wolfson confirmed Hilbert’s value of the resolvent degree for ninth-degree polynomials. And for other degrees of polynomials — especially those above degree 9 — his method narrows down the possible values for the resolvent degree.

Thus, this isn’t a direct attack on Hilbert’s 13th, but rather on polynomials in general. “They kind of found some adjacent questions and made progress on those, some of them long-standing, in the hopes that that will shed light on the original question,” McMullen said. And their work points to new ways of thinking about these mathematical constructions.

This general theory of resolvent degree also shows that Hilbert’s conjectures about sixth-degree, seventh-degree and eighth-degree equations are equivalent to problems in other, seemingly unrelated fields of math. Resolvent degree, Farb said, offers a way to categorize these problems by a kind of algebraic complexity, rather like grouping optimization problems in complexity classes.

Even though the theory began with Hilbert’s 13th, mathematicians are skeptical that it can actually settle the open question about seventh-degree polynomials. It speaks to big, unexplored mathematical landscapes in unimaginable dimensions, but it hits a brick wall at the lower degrees, where it can’t determine the resolvent degree.

For McMullen, the lack of headway — despite these signs of progress — is itself interesting, as it suggests that the problem holds secrets that modern math simply can’t comprehend. “We haven’t been able to address this fundamental problem; that means there’s some dark area we haven’t pushed into,” he said.

“Solving it would require entirely new ideas,” said Reichstein, who has developed his own new ideas about simplifying polynomials using a concept he calls essential dimension. “There is no way of knowing where they will come from.”

But the trio is undeterred. “I’m not going to give up on this,” Farb said. “It’s definitely become kind of the white whale. What keeps me going is this web of connections, the mathematics surrounding it.”