Evolution’s Random Paths Lead to One Place

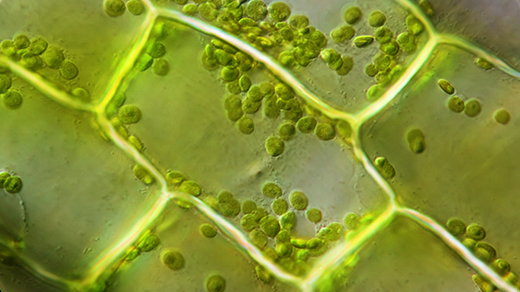

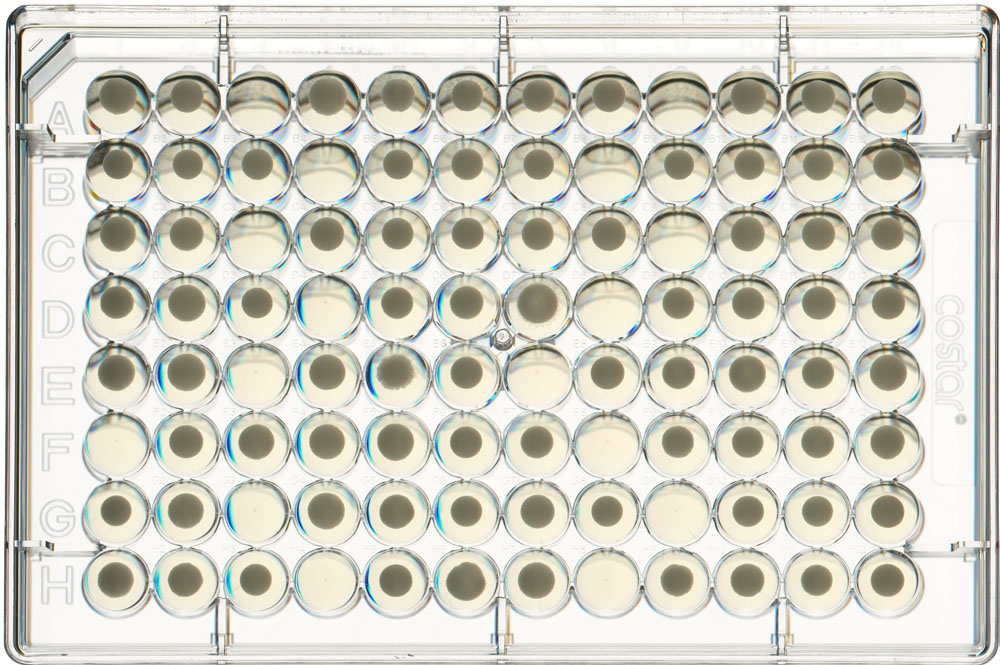

In his fourth-floor lab at Harvard University, Michael Desai has created hundreds of identical worlds in order to watch evolution at work. Each of his meticulously controlled environments is home to a separate strain of baker’s yeast. Every 12 hours, Desai’s robot assistants pluck out the fastest-growing yeast in each world — selecting the fittest to live on — and discard the rest. Desai then monitors the strains as they evolve over the course of 500 generations. His experiment, which other scientists say is unprecedented in scale, seeks to gain insight into a question that has long bedeviled biologists: If we could start the world over again, would life evolve the same way?

Many biologists argue that it would not, that chance mutations early in the evolutionary journey of a species will profoundly influence its fate. “If you replay the tape of life, you might have one initial mutation that takes you in a totally different direction,” Desai said, paraphrasing an idea first put forth by the biologist Stephen Jay Gould in the 1980s.

Desai’s yeast cells call this belief into question. According to results published in Science in June, all of Desai’s yeast varieties arrived at roughly the same evolutionary endpoint (as measured by their ability to grow under specific lab conditions) regardless of which precise genetic path each strain took. It’s as if 100 New York City taxis agreed to take separate highways in a race to the Pacific Ocean, and 50 hours later they all converged at the Santa Monica pier.

The findings also suggest a disconnect between evolution at the genetic level and at the level of the whole organism. Genetic mutations occur mostly at random, yet the sum of these aimless changes somehow creates a predictable pattern. The distinction could prove valuable, as much genetics research has focused on the impact of mutations in individual genes. For example, researchers often ask how a single mutation might affect a microbe’s tolerance for toxins, or a human’s risk for a disease. But if Desai’s findings hold true in other organisms, they could suggest that it’s equally important to examine how large numbers of individual genetic changes work in concert over time.

“There’s a kind of tension in evolutionary biology between thinking about individual genes and the potential for evolution to change the whole organism,” said Michael Travisano, a biologist at the University of Minnesota. “All of biology has been focused on the importance of individual genes for the last 30 years, but the big take-home message of this study is that’s not necessarily important.”

The key strength of Desai’s experiment is its unprecedented size, which others in the field have described as “audacious.” The experiment’s design is rooted in its creator’s background; Desai trained as a physicist, and since launching his lab four years ago, he has applied a statistical perspective to biology. He devised ways to use robots to precisely manipulate hundreds of lines of yeast so that he could run large-scale evolutionary experiments in a quantitative way. Scientists have long studied the genetic evolution of microbes, but until recently, it was possible to examine only a few strains at a time. Desai’s team, in contrast, analyzed 640 lines of yeast that had all evolved from a single parent cell. With so many replicate lines, the team could analyze evolutionary outcomes statistically.

“This is the physicist’s approach to evolution, stripping down everything to the simplest possible conditions,” said Joshua Plotkin, an evolutionary biologist at the University of Pennsylvania who was not involved in the research but has worked with one of the authors. “They could partition how much of evolution is attributable to chance, how much to the starting point, and how much to measurement noise.”
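The kind of partition Plotkin describes can be illustrated with a toy calculation. The sketch below is not the team's analysis: it simulates a hypothetical nested design (several founders, several replicate lines per founder, several fitness measurements per line) and splits the variance in final fitness into three components with a simple method-of-moments estimate. Every name and number in it is an assumption chosen for illustration.

```python
import random
from statistics import mean, variance


def simulate(n_founders=20, n_lines=10, n_measurements=3,
             sd_start=0.02, sd_chance=0.01, sd_noise=0.005, seed=0):
    """Hypothetical data: data[founder][line] is a list of repeated fitness readings."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_founders):
        start_effect = rng.gauss(0, sd_start)        # shared by all lines of a founder
        lines = []
        for _ in range(n_lines):
            chance_effect = rng.gauss(0, sd_chance)  # luck of this particular line
            true_fitness = start_effect + chance_effect
            lines.append([true_fitness + rng.gauss(0, sd_noise)
                          for _ in range(n_measurements)])
        data.append(lines)
    return data


def partition(data):
    """Estimate variance due to starting point, chance and measurement noise."""
    n_lines = len(data[0])
    n_measurements = len(data[0][0])
    # Noise: average variance among repeated measurements of the same line.
    noise = mean(variance(m) for lines in data for m in lines)
    # Variance among line means within a founder = chance + noise / n_measurements.
    line_means = [[mean(m) for m in lines] for lines in data]
    among_lines = mean(variance(lm) for lm in line_means)
    chance = max(among_lines - noise / n_measurements, 0.0)
    # Variance among founder means = starting point + among_lines / n_lines.
    founder_means = [mean(lm) for lm in line_means]
    start = max(variance(founder_means) - among_lines / n_lines, 0.0)
    return {"starting point": start, "chance": chance, "noise": noise}


components = partition(simulate())
total = sum(components.values())
for name, value in components.items():
    print(f"{name}: {value / total:.0%} of fitness variance")
```

The estimate only works because each level of the design is replicated: repeated measurements isolate noise, and replicate lines from the same founder isolate chance.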

Desai’s plan was to track the yeast strains as they grew under identical conditions and then compare their final fitness levels, which were determined by how quickly they grew in comparison to their original ancestral strain. The team employed specially designed robot arms to transfer yeast colonies to a new home every 12 hours. The colonies that had grown the most in that period advanced to the next round, and the process repeated for 500 generations. Sergey Kryazhimskiy, a postdoctoral researcher in Desai’s lab, sometimes spent the night in the lab, analyzing the fitness of each of the 640 strains at three different points in time. The researchers could then compare how much fitness varied among strains, and find out whether a strain’s initial capabilities affected its final standing. They also sequenced the genomes of 104 of the strains to figure out whether early mutations changed the ultimate performance.

Previous studies have indicated that small changes early in the evolutionary journey can lead to big differences later on, an idea known as historical contingency. Long-term evolution studies in E. coli bacteria, for example, found that the microbes can sometimes evolve to eat a new type of food, but that such substantial changes only happen when certain enabling mutations happen first. These early mutations don’t have a big effect on their own, but they lay the necessary groundwork for later mutations that do.

Diminishing Returns

Desai’s study isn’t the first to suggest that the law of diminishing returns applies to evolution. A famous decades-long experiment from Richard Lenski’s lab at Michigan State University, which has tracked E. coli for thousands of generations, found that fitness converged over time. But because of limitations in genomics technology in the 1990s, that study didn’t identify the mutations underlying those changes. “The 36 populations we had then would have been much more expensive to sequence than the hundred they did here,” said Michael Travisano of the University of Minnesota, who worked on the Michigan State study.

More recently, two papers published in Science in 2011 mixed and matched a handful of beneficial mutations in different types of bacteria. When the researchers engineered those mutations into different strains of bacteria, they found that the fitter strains enjoyed a smaller benefit. Desai’s study examined a much broader combination of possible mutations, showing that the rule is much more general.

But because earlier studies of historical contingency, such as the E. coli work, were small in scale, it wasn’t clear to Desai whether these cases were the exception or the rule. “Do you typically get big differences in evolutionary potential that arise in the natural course of evolution, or for the most part is evolution predictable?” he said. “To answer this we needed the large scale of our experiment.”

As in previous studies, Desai found that early mutations influence future evolution, shaping the path the yeast takes. But in Desai’s experiment, that path didn’t affect the final destination. “This particular kind of contingency actually makes fitness evolution more predictable, not less,” Desai said.

Desai found that just as a single trip to the gym benefits a couch potato more than an athlete, microbes that started off growing slowly gained a lot more from beneficial mutations than their fitter counterparts that shot out of the gate. “If you lag behind at the beginning because of bad luck, you’ll tend to do better in the future,” Desai said. He compares this phenomenon to the economic principle of diminishing returns — after a certain point, each added unit of effort helps less and less.
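A toy simulation shows how diminishing returns can pull unequal starters toward the same endpoint. It is a minimal sketch under an assumed rule, not the model from Desai's paper: each beneficial mutation closes a fixed fraction of the remaining gap to a hypothetical fitness optimum, so a lagging line gains more per mutation than a leading one. The function names, mutation rate and effect size are all illustrative.

```python
import random


def evolve(start_fitness, generations=500, optimum=1.0,
           mutation_rate=0.05, effect=0.2, rng=None):
    """Toy model: every beneficial mutation closes a fixed fraction of the
    remaining gap to a hypothetical fitness optimum."""
    rng = rng or random.Random()
    fitness = start_fitness
    for _ in range(generations):
        if rng.random() < mutation_rate:  # a beneficial mutation arises by chance
            # Diminishing returns: the gain shrinks as fitness nears the optimum.
            fitness += effect * (optimum - fitness)
    return fitness


def spread(values):
    """Gap between the fittest and the least fit line."""
    return max(values) - min(values)


rng = random.Random(42)
starts = [rng.uniform(0.0, 0.5) for _ in range(100)]  # 100 lines, unequal starts
finals = [evolve(s, rng=rng) for s in starts]

print(f"spread at start:  {spread(starts):.3f}")
print(f"spread at finish: {spread(finals):.3f}")
```

Each line receives its mutations at random times, so no two take the same path, yet the spread in fitness narrows because the lines that lag gain the most from each mutation.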

Scientists don’t know why all genetic roads in yeast seem to arrive at the same endpoint, a question that Desai and others in the field find particularly intriguing. The yeast developed mutations in many different genes, and scientists found no obvious link among them, so it’s unclear how these genes interact in the cell, if they do at all. “Perhaps there is another layer of metabolism that no one has a handle on,” said Vaughn Cooper, a biologist at the University of New Hampshire who was not involved in the study.

It’s also not yet clear whether Desai’s carefully controlled results are applicable to more complex organisms or to the chaotic real world, where both the organism and its environment are constantly changing. “In the real world, organisms get good at different things, partitioning the environment,” Travisano said. He predicts that populations within those ecological niches would still be subject to diminishing returns, particularly as they undergo adaptation. But it remains an open question, he said.

Nevertheless, there are hints that complex organisms can also quickly evolve to become more alike. A study published in May analyzed groups of genetically distinct fruit flies as they adapted to a new environment. Despite traveling along different evolutionary trajectories, the groups developed similarities in attributes such as fecundity and body size after just 22 generations. “I think many people think about one gene for one trait, a deterministic way of evolution solving problems,” said David Reznick, a biologist at the University of California, Riverside. “This says that’s not true; you can evolve to be better suited to the environment in many ways.”

This article was reprinted on Wired.com.