How Life (and Death) Spring From Disorder

Olena Shmahalo/Quanta Magazine

Introduction

What’s the difference between physics and biology? Take a golf ball and a cannonball and drop them off the Tower of Pisa. The laws of physics allow you to predict their trajectories pretty much as accurately as you could wish for.

Now do the same experiment again, but replace the cannonball with a pigeon.

Biological systems don’t defy physical laws, of course, but neither do they seem to be predicted by them. Instead, they are goal-directed: they act to survive and reproduce. We can say that they have a purpose (what philosophers have traditionally called a teleology) that guides their behavior.

By the same token, physics now lets us predict, starting from the state of the universe a billionth of a second after the Big Bang, what it looks like today. But no one imagines that the appearance of the first primitive cells on Earth led predictably to the human race. Laws do not, it seems, dictate the course of evolution.

The teleology and historical contingency of biology, said the evolutionary biologist Ernst Mayr, make it unique among the sciences. Both of these features stem from perhaps biology’s only general guiding principle: evolution. It depends on chance and randomness, but natural selection gives it the appearance of intention and purpose. Animals are drawn to water not by some magnetic attraction, but because of their instinct, their intention, to survive. Legs serve the purpose of, among other things, taking us to the water.

Mayr claimed that these features make biology exceptional — a law unto itself. But recent developments in nonequilibrium physics, complex systems science and information theory are challenging that view.

Once we regard living things as agents performing a computation, collecting and storing information about an unpredictable environment, capacities and considerations such as replication, adaptation, agency, purpose and meaning can be understood not as products of evolutionary improvisation, but as inevitable corollaries of physical laws. In other words, there appears to be a kind of physics of things doing stuff, and evolving to do stuff. Meaning and intention, thought to be the defining characteristics of living systems, may then emerge naturally through the laws of thermodynamics and statistical mechanics.

This past November, physicists, mathematicians and computer scientists came together with evolutionary and molecular biologists to talk — and sometimes argue — about these ideas at a workshop at the Santa Fe Institute in New Mexico, the mecca for the science of “complex systems.” They asked: Just how special (or not) is biology?

It’s hardly surprising that there was no consensus. But one message that emerged very clearly was that, if there’s a kind of physics behind biological teleology and agency, it has something to do with the same concept that seems to have become installed at the heart of fundamental physics itself: information.

Disorder and Demons

The first attempt to bring information and intention into the laws of thermodynamics came in the middle of the 19th century, when statistical mechanics was being invented by the Scottish scientist James Clerk Maxwell. Maxwell showed how introducing these two ingredients seemed to make it possible to do things that thermodynamics proclaimed impossible.

Maxwell had already shown how the predictable and reliable mathematical relationships between the properties of a gas — pressure, volume and temperature — could be derived from the random and unknowable motions of countless molecules jiggling frantically with thermal energy. In other words, thermodynamics — the new science of heat flow, which united large-scale properties of matter like pressure and temperature — was the outcome of statistical mechanics on the microscopic scale of molecules and atoms.

According to thermodynamics, the capacity to extract useful work from the energy resources of the universe is always diminishing. Pockets of energy are declining, concentrations of heat are being smoothed away. In every physical process, some energy is inevitably dissipated as useless heat, lost among the random motions of molecules. This randomness is equated with the thermodynamic quantity called entropy — a measurement of disorder — which is always increasing. That is the second law of thermodynamics. Eventually all the universe will be reduced to a uniform, boring jumble: a state of equilibrium, wherein entropy is maximized and nothing meaningful will ever happen again.

Are we really doomed to that dreary fate? Maxwell was reluctant to believe it, and in 1867 he set out to, as he put it, “pick a hole” in the second law. His aim was to start with a disordered box of randomly jiggling molecules, then separate the fast molecules from the slow ones, reducing entropy in the process.

Imagine some little creature (the physicist William Thomson later called it, rather to Maxwell’s dismay, a demon) that can see each individual molecule in the box. The demon divides the box into two compartments, with a sliding door in the wall between them. Every time it sees a particularly energetic molecule approaching the door from the right-hand compartment, it opens the door to let the molecule through. And every time a slow, “cold” molecule approaches from the left, it lets that one through, too. Eventually, the demon has a compartment of cold gas on the right and hot gas on the left: a heat reservoir that can be tapped to do work.
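The sorting rule can be tried out in a few lines of Python. This is only a toy sketch under loud assumptions: the function name `run_demon`, the exponential spread of energies, and the use of the median energy as the line between "fast" and "slow" are all illustrative choices, and molecules never exchange energy through collisions.

```python
import random

def run_demon(n_molecules=2000, n_events=20000, seed=0):
    """Toy Maxwell's demon: sort molecules by energy between two compartments."""
    rng = random.Random(seed)
    # Assumption: each molecule keeps a fixed kinetic energy (arbitrary units).
    energies = [rng.expovariate(1.0) for _ in range(n_molecules)]
    sides = [rng.choice("LR") for _ in range(n_molecules)]
    # Assumption: "fast" means above the median energy.
    threshold = sorted(energies)[n_molecules // 2]

    for _ in range(n_events):
        i = rng.randrange(n_molecules)  # a random molecule reaches the door
        fast = energies[i] > threshold
        if sides[i] == "R" and fast:
            sides[i] = "L"  # fast molecule from the right: let it through
        elif sides[i] == "L" and not fast:
            sides[i] = "R"  # slow molecule from the left: let it through
        # in every other case the door stays shut

    def mean_energy(side):
        values = [e for e, s in zip(energies, sides) if s == side]
        return sum(values) / len(values)

    return mean_energy("L"), mean_energy("R")

hot_left, cold_right = run_demon()
print(f"left (hot): {hot_left:.2f}, right (cold): {cold_right:.2f}")
```

Starting from a well-mixed box, the left compartment ends up with a higher mean energy than the right. Nothing in the sketch charges the demon for looking at each molecule or remembering what it saw.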

This is only possible for two reasons. First, the demon has more information than we do: It can see all of the molecules individually, rather than just statistical averages. And second, it has intention: a plan to separate the hot from the cold. By exploiting its knowledge with intent, it can defy the laws of thermodynamics.

At least, so it seemed. It took a hundred years to understand why Maxwell’s demon can’t in fact defeat the second law and avert the inexorable slide toward deathly, universal equilibrium. And the reason shows that there is a deep connection between thermodynamics and the processing of information — or in other words, computation. The German-American physicist Rolf Landauer showed that even if the demon can gather information and move the (frictionless) door at no energy cost, a penalty must eventually be paid. Because it can’t have unlimited memory of every molecular motion, it must occasionally wipe its memory clean — forget what it has seen and start again — before it can continue harvesting energy. This act of information erasure has an unavoidable price: It dissipates energy, and therefore increases entropy. All the gains against the second law made by the demon’s nifty handiwork are canceled by “Landauer’s limit”: the finite cost of information erasure (or more generally, of converting information from one form to another).

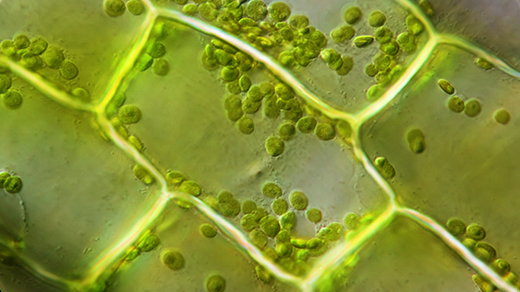

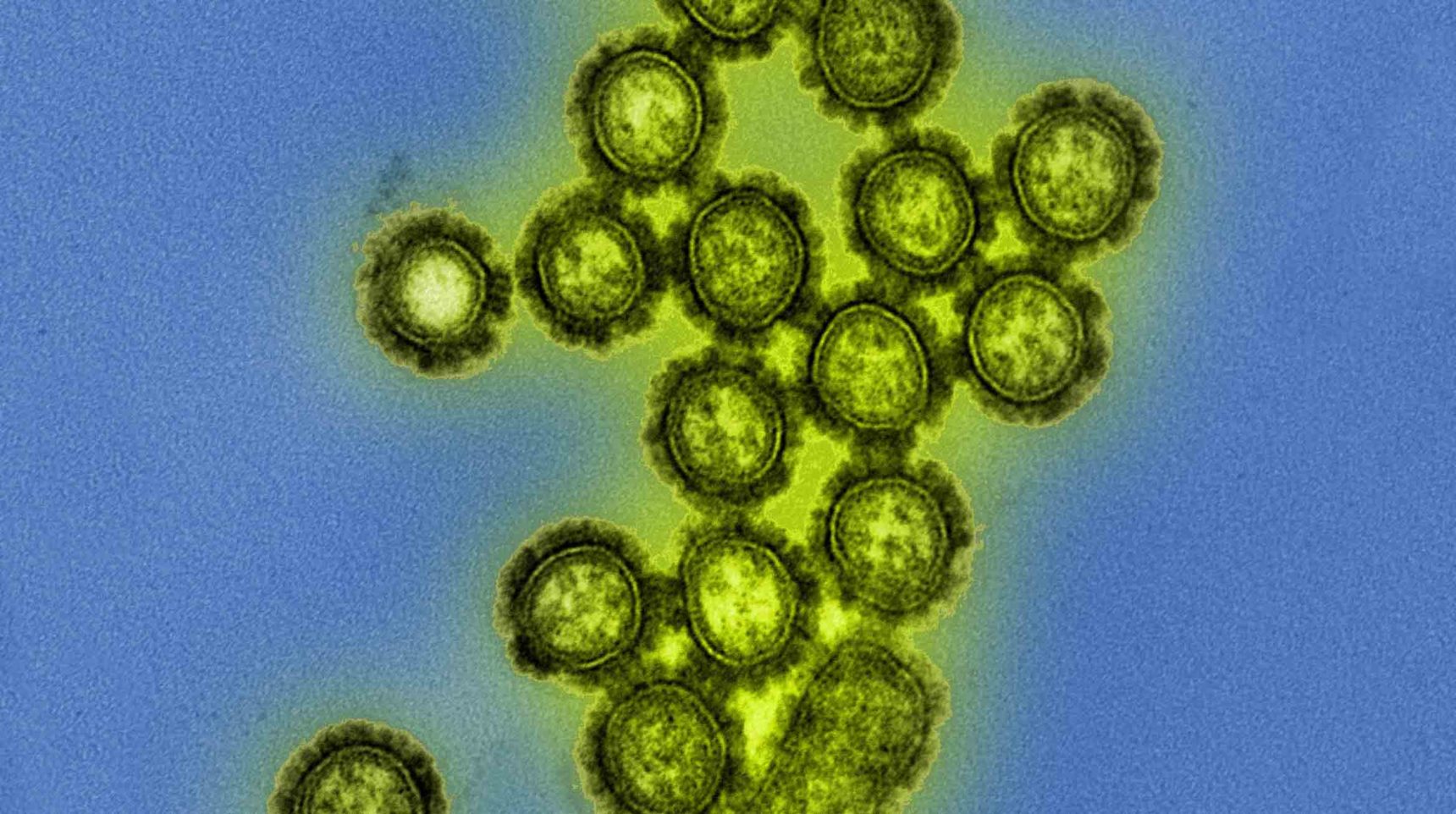

Living organisms seem rather like Maxwell’s demon. Whereas a beaker full of reacting chemicals will eventually expend its energy and fall into boring stasis and equilibrium, living systems have collectively been avoiding the lifeless equilibrium state since the origin of life about three and a half billion years ago. They harvest energy from their surroundings to sustain this nonequilibrium state, and they do it with “intention.” Even simple bacteria move with “purpose” toward sources of heat and nutrition. In his 1944 book What is Life?, the physicist Erwin Schrödinger expressed this by saying that living organisms feed on “negative entropy.”

They achieve it, Schrödinger said, by capturing and storing information. Some of that information is encoded in their genes and passed on from one generation to the next: a set of instructions for reaping negative entropy. Schrödinger didn’t know where the information is kept or how it is encoded, but his intuition that it is written into what he called an “aperiodic crystal” inspired Francis Crick, himself trained as a physicist, and James Watson when in 1953 they figured out how genetic information can be encoded in the molecular structure of the DNA molecule.

A genome, then, is at least in part a record of the useful knowledge that has enabled an organism’s ancestors, right back to the distant past, to survive on our planet. According to David Wolpert, a mathematician and physicist at the Santa Fe Institute who convened the recent workshop, and his colleague Artemy Kolchinsky, the key point is that well-adapted organisms are correlated with their environment. If a bacterium swims dependably toward the left or the right when there is a food source in that direction, it is better adapted, and will flourish more, than one that swims in random directions and so finds the food only by chance. A correlation between the state of the organism and that of its environment implies that the two share information. Wolpert and Kolchinsky say that it’s this information that helps the organism stay out of equilibrium because, like Maxwell’s demon, it can then tailor its behavior to extract work from fluctuations in its surroundings. If it did not acquire this information, the organism would gradually revert to equilibrium: It would die.
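One standard way to put a number on "shared information" is mutual information, measured in bits. The sketch below applies it to the swimming bacterium. The 50/50 food placement and the labels are hypothetical, chosen only to make the contrast visible, and nothing here is taken from Wolpert and Kolchinsky's own formalism.

```python
from math import log2

def mutual_information(joint):
    """Mutual information in bits of a joint distribution {(x, y): p}."""
    px, py = {}, {}
    for (x, y), p in joint.items():
        px[x] = px.get(x, 0.0) + p  # marginal over environment states
        py[y] = py.get(y, 0.0) + p  # marginal over organism states
    return sum(p * log2(p / (px[x] * py[y]))
               for (x, y), p in joint.items() if p > 0)

# Assumption: food is on the left or the right with equal probability.
dependable = {("food_left", "swim_left"): 0.5,
              ("food_right", "swim_right"): 0.5}
random_swimmer = {(food, swim): 0.25
                  for food in ("food_left", "food_right")
                  for swim in ("swim_left", "swim_right")}

print(mutual_information(dependable))      # 1.0
print(mutual_information(random_swimmer))  # 0.0
```

The dependable swimmer shares one full bit with its environment. The random swimmer shares none, which is the sense in which it finds food only by chance.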

Looked at this way, life can be considered as a computation that aims to optimize the storage and use of meaningful information. And life turns out to be extremely good at it. Landauer’s resolution of the conundrum of Maxwell’s demon set an absolute lower limit on the amount of energy a finite-memory computation requires: namely, the energetic cost of forgetting. The best computers today are far, far more wasteful of energy than that, typically consuming and dissipating more than a million times more. But according to Wolpert, “a very conservative estimate of the thermodynamic efficiency of the total computation done by a cell is that it is only 10 or so times more than the Landauer limit.”
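To give a sense of scale, Landauer's bound on erasing one bit is k_B T ln 2, where k_B is Boltzmann's constant and T is the temperature. The sketch below evaluates it and scales it by the two multiples quoted above. The temperature of 310 K (roughly body temperature) is an assumption made for illustration, and the variable names are hypothetical.

```python
from math import log

BOLTZMANN = 1.380649e-23  # J/K, exact value in the SI

def landauer_limit(temperature_kelvin):
    """Minimum energy in joules dissipated when one bit is erased."""
    return BOLTZMANN * temperature_kelvin * log(2)

T_BODY = 310.0  # K, assumed for illustration
limit = landauer_limit(T_BODY)
cell_cost = 10 * limit       # "10 or so times" the limit, per Wolpert's estimate
computer_cost = 1e6 * limit  # "more than a million times": a lower bound only

print(f"Landauer limit at {T_BODY:.0f} K: {limit:.2e} J per bit")
print(f"cell, about 10x:          {cell_cost:.2e} J per bit")
print(f"computer, at least 1e6x:  {computer_cost:.2e} J per bit")
```

At this temperature the limit comes to roughly 3 × 10⁻²¹ joules per erased bit, which is why the gap between a cell and a computer spans about five orders of magnitude.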

The implication, he said, is that “natural selection has been hugely concerned with minimizing the thermodynamic cost of computation. It will do all it can to reduce the total amount of computation a cell must perform.” In other words, biology (possibly excepting ourselves) seems to take great care not to overthink the problem of survival. This issue of the costs and benefits of computing one’s way through life, he said, has been largely overlooked in biology so far.

Inanimate Darwinism

So living organisms can be regarded as entities that attune to their environment by using information to harvest energy and evade equilibrium. Sure, it’s a bit of a mouthful. But notice that it says nothing about genes and evolution, on which Mayr, like many biologists, assumed that biological intention and purpose depend.

How far can this picture then take us? Genes honed by natural selection are undoubtedly central to biology. But could it be that evolution by natural selection is itself just a particular case of a more general imperative toward function and apparent purpose that exists in the purely physical universe? It is starting to look that way.

Adaptation has long been seen as the hallmark of Darwinian evolution. But Jeremy England at the Massachusetts Institute of Technology has argued that adaptation to the environment can happen even in complex nonliving systems.

Adaptation here has a more specific meaning than the usual Darwinian picture of an organism well equipped for survival. One difficulty with the Darwinian view is that there’s no way of defining a well-adapted organism except in retrospect. The “fittest” are those that turned out to be better at survival and replication, but you can’t predict in advance what fitness will entail. Whales and plankton are well adapted to marine life, but in ways that bear little obvious relation to one another.

England’s definition of “adaptation” is closer to Schrödinger’s, and indeed to Maxwell’s: A well-adapted entity can absorb energy efficiently from an unpredictable, fluctuating environment. It is like the person who keeps her footing on a pitching ship while others fall over because she’s better at adjusting to the fluctuations of the deck. Using the concepts and methods of statistical mechanics in a nonequilibrium setting, England and his colleagues argue that these well-adapted systems are the ones that absorb and dissipate the energy of the environment, generating entropy in the process.

Complex systems tend to settle into these well-adapted states with surprising ease, said England: “Thermally fluctuating matter often gets spontaneously beaten into shapes that are good at absorbing work from the time-varying environment.”

There is nothing in this process that involves the gradual accommodation to the surroundings through the Darwinian mechanisms of replication, mutation and inheritance of traits. There’s no replication at all. “What is exciting about this is that it means that when we give a physical account of the origins of some of the adapted-looking structures we see, they don’t necessarily have to have had parents in the usual biological sense,” said England. “You can explain evolutionary adaptation using thermodynamics, even in intriguing cases where there are no self-replicators and Darwinian logic breaks down” — so long as the system in question is complex, versatile and sensitive enough to respond to fluctuations in its environment.

But neither is there any conflict between physical and Darwinian adaptation. In fact, the latter can be seen as a particular case of the former. If replication is present, then natural selection becomes the route by which systems acquire the ability to absorb work — Schrödinger’s negative entropy — from the environment. Self-replication is, in fact, an especially good mechanism for stabilizing complex systems, and so it’s no surprise that this is what biology uses. But in the nonliving world where replication doesn’t usually happen, the well-adapted dissipative structures tend to be ones that are highly organized, like sand ripples and dunes crystallizing from the random dance of windblown sand. Looked at this way, Darwinian evolution can be regarded as a specific instance of a more general physical principle governing nonequilibrium systems.

Prediction Machines

This picture of complex structures adapting to a fluctuating environment allows us also to deduce something about how these structures store information. In short, so long as such structures — whether living or not — are compelled to use the available energy efficiently, they are likely to become “prediction machines.”

It’s almost a defining characteristic of life that biological systems change their state in response to some driving signal from the environment. Something happens; you respond. Plants grow toward the light; they produce toxins in response to pathogens. These environmental signals are typically unpredictable, but living systems learn from experience, storing up information about their environment and using it to guide future behavior. (Genes, in this picture, just give you the basic, general-purpose essentials.)

Prediction isn’t optional, though. According to the work of Susanne Still at the University of Hawaii, Gavin Crooks, formerly at the Lawrence Berkeley National Laboratory in California, and their colleagues, predicting the future seems to be essential for any energy-efficient system in a random, fluctuating environment.

There’s a thermodynamic cost to storing information about the past that has no predictive value for the future, Still and colleagues show. To be maximally efficient, a system has to be selective. If it indiscriminately remembers everything that happened, it incurs a large energy cost. On the other hand, if it doesn’t bother storing any information about its environment at all, it will be constantly struggling to cope with the unexpected. “A thermodynamically optimal machine must balance memory against prediction by minimizing its nostalgia, the useless information about the past,” said a co-author, David Sivak, now at Simon Fraser University in Burnaby, British Columbia. In short, it must become good at harvesting meaningful information: that which is likely to be useful for future survival.
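The balance between memory and prediction can be illustrated with a toy calculation. The sketch assumes that "nostalgia" can be read as the information a system holds about the present minus the part of it that predicts the next moment. That reading, the two-state environment and the function names are all assumptions made here, not details taken from Still and colleagues' paper.

```python
from math import log2

def binary_entropy(p):
    """Entropy in bits of a two-outcome variable with probabilities p, 1 - p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * log2(p) - (1 - p) * log2(1 - p)

def nostalgia_bits(persistence):
    """Memory minus predictive information for a perfect recorder.

    Assumed environment: a symmetric two-state Markov chain that keeps its
    current state with probability `persistence`. The system stores the
    current state exactly, so it holds 1 bit of memory, but only
    1 - H(persistence) bits of that say anything about the next state.
    """
    memory = 1.0
    predictive = 1.0 - binary_entropy(persistence)
    return memory - predictive

for p in (0.5, 0.9, 0.99):
    print(f"persistence={p}: nostalgia={nostalgia_bits(p):.3f} bits")
```

When the environment is a coin flip (persistence 0.5), everything the system remembers is useless for prediction. As the environment becomes more regular, the same one-bit memory carries less dead weight.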

You’d expect natural selection to favor organisms that use energy efficiently. But even individual biomolecular devices like the pumps and motors in our cells should, in some important way, learn from the past to anticipate the future. To acquire their remarkable efficiency, Still said, these devices must “implicitly construct concise representations of the world they have encountered so far, enabling them to anticipate what’s to come.”

The Thermodynamics of Death

Even if some of these basic information-processing features of living systems are already prompted, in the absence of evolution or replication, by nonequilibrium thermodynamics, you might imagine that more complex traits — tool use, say, or social cooperation — must be supplied by evolution.

Well, don’t count on it. These behaviors, commonly thought to be the exclusive domain of the highly advanced evolutionary niche that includes primates and birds, can be mimicked in a simple model consisting of a system of interacting particles. The trick is that the system is guided by a constraint: It acts in a way that maximizes the amount of entropy (in this case, defined in terms of the different possible paths the particles could take) it generates within a given timespan.

Entropy maximization has long been thought to be a trait of nonequilibrium systems. But the system in this model obeys a rule that lets it maximize entropy over a fixed time window that stretches into the future. In other words, it has foresight. In effect, the model looks at all the paths the particles could take and compels them to adopt the path that produces the greatest entropy. Crudely speaking, this tends to be the path that keeps open the largest number of options for how the particles might move subsequently.
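The idea of keeping options open can be sketched with a much cruder stand-in than the researchers' model. Here a single walker on a short line picks, at each step, the move that leaves the largest number of legal future paths within a fixed horizon. The one-dimensional box, the move set and the function names are hypothetical, and this is not the published algorithm.

```python
from math import log

MOVES = (-1, +1)

def count_paths(position, steps, size):
    """Number of legal move sequences of length `steps` inside [0, size)."""
    if steps == 0:
        return 1
    return sum(count_paths(position + m, steps - 1, size)
               for m in MOVES if 0 <= position + m < size)

def best_move(position, horizon, size):
    """Pick the move whose successor keeps the most future paths open.

    Assumes size >= 2, so at least one move is always legal.
    """
    legal = [m for m in MOVES if 0 <= position + m < size]
    # log(path count) is the entropy of a uniform distribution over paths
    return max(legal,
               key=lambda m: log(count_paths(position + m, horizon - 1, size)))

position, size, horizon = 1, 11, 4
trajectory = [position]
for _ in range(6):
    position += best_move(position, horizon, size)
    trajectory.append(position)
print(trajectory)
```

A walker that starts next to a wall moves away from it, because paths that run into the wall are cut off and so count for less. Once no wall lies within the horizon, every move scores the same and the rule has no preference.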

You might say that the system of particles experiences a kind of urge to preserve freedom of future action, and that this urge guides its behavior at any moment. The researchers who developed the model — Alexander Wissner-Gross at Harvard University and Cameron Freer, a mathematician at the Massachusetts Institute of Technology — call this a “causal entropic force.” In computer simulations of configurations of disk-shaped particles moving around in particular settings, this force creates outcomes that are eerily suggestive of intelligence.

In one case, a large disk was able to “use” a small disk to extract a second small disk from a narrow tube — a process that looked like tool use. Freeing the disk increased the entropy of the system. In another example, two disks in separate compartments synchronized their behavior to pull a larger disk down so that they could interact with it, giving the appearance of social cooperation.

Of course, these simple interacting agents get the benefit of a glimpse into the future. Life, as a general rule, does not. So how relevant is this for biology? That’s not clear, although Wissner-Gross said that he is now working to establish “a practical, biologically plausible, mechanism for causal entropic forces.” In the meantime, he thinks that the approach could have practical spinoffs, offering a shortcut to artificial intelligence. “I predict that a faster way to achieve it will be to discover such behavior first and then work backward from the physical principles and constraints, rather than working forward from particular calculation or prediction techniques,” he said. In other words, first find a system that does what you want it to do and then figure out how it does it.

Aging, too, has conventionally been seen as a trait dictated by evolution. Organisms have a lifespan that creates opportunities to reproduce, the story goes, without the parents sticking around too long, competing for resources and so harming the survival prospects of their offspring. That is surely part of the story, but Hildegard Meyer-Ortmanns, a physicist at Jacobs University in Bremen, Germany, thinks that ultimately aging is a physical process, not a biological one, governed by the thermodynamics of information.

It’s certainly not simply a matter of things wearing out. “Most of the soft material we are made of is renewed before it has the chance to age,” Meyer-Ortmanns said. But this renewal process isn’t perfect. The thermodynamics of information copying dictates that there must be a trade-off between precision and energy. An organism has a finite supply of energy, so errors necessarily accumulate over time. The organism then has to spend an increasingly large amount of energy to repair these errors. The renewal process eventually yields copies too flawed to function properly; death follows.

Empirical evidence seems to bear that out. It has long been known that cultured human cells seem able to replicate no more than 40 to 60 times (a ceiling called the Hayflick limit) before they stop and become senescent. And recent observations of human longevity have suggested that there may be some fundamental reason why humans can’t survive much beyond age 100.

There’s a corollary to this apparent urge for energy-efficient, organized, predictive systems to appear in a fluctuating nonequilibrium environment. We ourselves are such a system, as are all our ancestors back to the first primitive cell. And nonequilibrium thermodynamics seems to be telling us that this is just what matter does under such circumstances. In other words, the appearance of life on a planet like the early Earth, imbued with energy sources such as sunlight and volcanic activity that keep things churning out of equilibrium, starts to seem not an extremely unlikely event, as many scientists have assumed, but virtually inevitable. In 2006, Eric Smith and the late Harold Morowitz at the Santa Fe Institute argued that the thermodynamics of nonequilibrium systems makes the emergence of organized, complex systems much more likely on a prebiotic Earth far from equilibrium than it would be if the raw chemical ingredients were just sitting in a “warm little pond” (as Charles Darwin put it) stewing gently.

In the decade since that argument was first made, researchers have added detail and insight to the analysis. Those qualities that Ernst Mayr thought essential to biology — meaning and intention — may emerge as a natural consequence of statistics and thermodynamics. And those general properties may in turn lead naturally to something like life.

At the same time, astronomers have shown us just how many worlds there are — by some estimates stretching into the billions — orbiting other stars in our galaxy. Many are far from equilibrium, and at least a few are Earth-like. And the same rules are surely playing out there, too.

This article was reprinted on Wired.com.