Computer Scientists Inch Closer to Major Algorithmic Goal

Kiki Ljung for Quanta Magazine

Introduction

If someone asks you to determine whether two objects are the same, it might seem like a trivial request. In most everyday cases, a quick glance is enough for you to render an accurate judgment.

But in computer science, it’s a far more involved question. In fact, it’s one that cuts to the unresolved heart of what computers are capable of. Depending on what the objects are, and how you define sameness, we still don’t know whether computers can answer the question quickly, or whether a slow and laborious approach is essentially the best they can manage.

Over the last decade, there have been some important results demonstrating that computers can do at least a little better than that. One of the biggest recent results in computer science was a faster algorithm for determining when two graphs are the same. The 2015 work, by László Babai of the University of Chicago, broke one important computational speed barrier but fell short of another.

Now, a paper by Xiaorui Sun of the University of Illinois, Chicago has presented a new, faster algorithm for a related question called the group isomorphism problem, which is about knowing when two mathematical objects called groups are the same. The work, posted online this past March, takes a small step toward clarifying the underlying computational complexity involved in comparing objects.

Sun’s work breaks a long-standing speed limit for a particular class of groups — the one regarded as the hardest instance of the group isomorphism problem to solve. If an algorithm can quickly compare groups of this sort, the hope is that it can quickly compare groups of any type.

“We don’t know such a theorem, but we have reason to believe something like that should be true,” said Josh Grochow of the University Colorado, Boulder.

Comparing Comparisons

To determine whether two things are the same in a precise way, you first need to define “the same.” Two strings of numbers could be considered the same if they merely contain the same digits, or they might need to have the same digits in the same order.

Isomorphism is a particular kind of sameness that comes up a lot in mathematics. When two objects are isomorphic to each other, it roughly means that they contain the same elements, and that those elements are in the same relationship with each other.

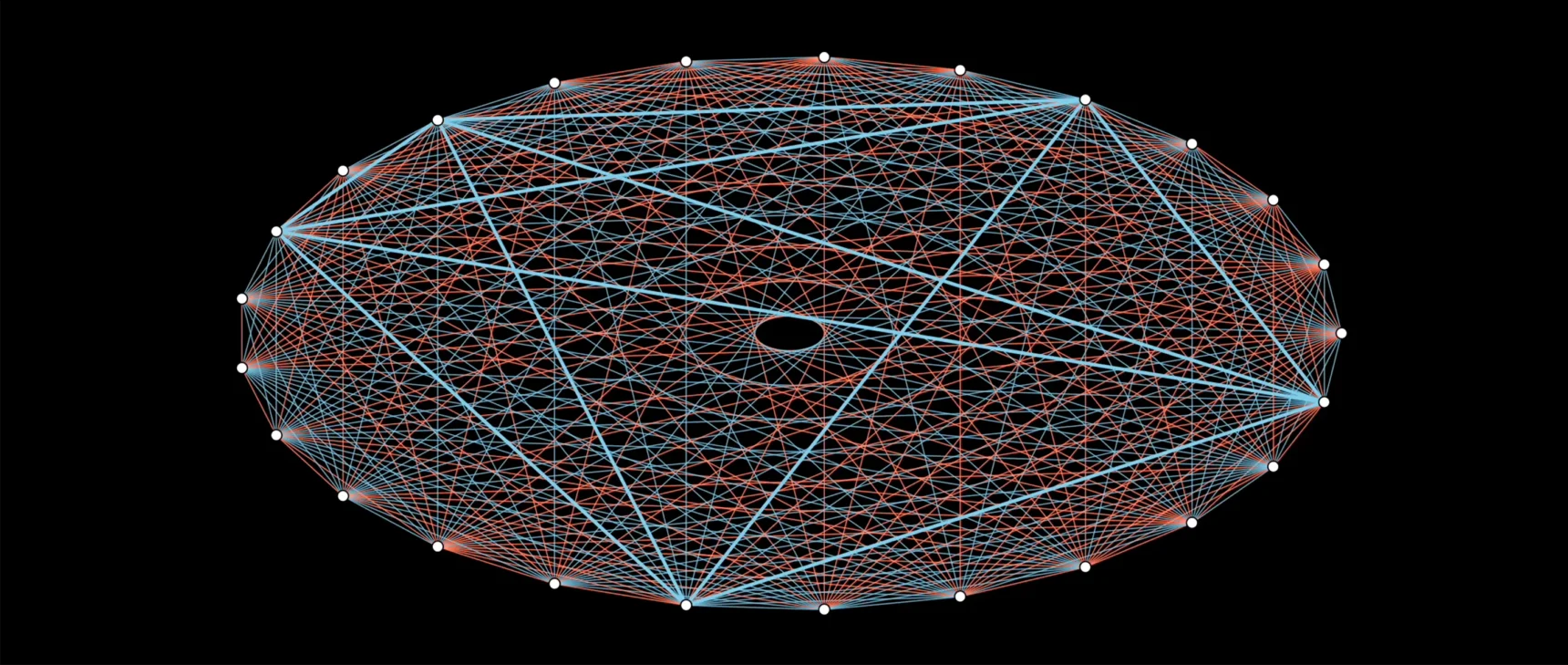

Graphs — collections of vertices (dots) linked by edges (lines) — provide an accessible, visual way to see what isomorphism can look like. Two graphs are isomorphic if you can match vertices in one graph with vertices in the other, such that vertices that are connected by an edge in the first graph are connected by an edge in the second. Isomorphic graphs can look different on the surface, but they share an underlying structure.

These two graphs look different, but they’re isomorphic because the relationships between vertices and edges are preserved (as shown by the different colors).

Groups are more abstract than graphs, but they’re still amenable to comparison by isomorphism. A group is a collection of elements — such as numbers — that can be combined with each other according to some operation so that the result is also in the collection. For example, you can have a group whose elements are the integers — all the positive and negative whole numbers, plus zero — and whose operation is addition: Add any two integers, and the result is always another integer.

Two groups are isomorphic if you can pair each element in one group with an element in the other, so that the result of operating on two elements in the first group is consistent with the result of operating on the equivalent values of those elements in the second group.

Here’s a simple example of two groups, each with two elements, that are isomorphic to each other. The first group consists of the numbers 0 and 1, and the second group consists of the letters a and b. Both groups contain an operation for combining the elements of the group in a specific way, with the results laid out in the tables below.

The groups are isomorphic because 0 pairs with a, 1 pairs with b, and the structural relationship produced by combining elements is the same in both groups.

“We say two groups are isomorphic if they are basically equivalent,” Sun said.

Unbalanced Progress

Isomorphism is an important concept in mathematics — where graphs and groups feature extensively — because it allows mathematicians to look past superficial differences and focus on the ways in which related objects may actually be the same. But it’s fundamental in computer science, too; researchers not only look for algorithms that determine whether two objects are isomorphic, but also measure how fast those algorithms can run.

That measurement can be tricky. An algorithm’s speed is based on how its runtime changes with the size of the objects it’s working on. Imagine, for example, that you have two pairs of groups. In one pair, each group contains five elements. In the other, each group contains 10 elements.

You’d expect it to take an algorithm more time to determine whether the groups with more elements are isomorphic. But how much more time? Will it take twice as long? 52 longer? 25 longer? Those questions correspond to important broad classifications of algorithmic speed: They can run in linear time (which means in this case that it takes twice as long), polynomial time (52 longer) and exponential time (25 longer).

Computer scientists know which speed category most computing questions fall into, but not all.

“Most are either the easiest or the hardest [kind of question], but there are still several exceptions which are unknown,” Sun said. Graph and group isomorphism are among those exceptions, which is what makes them so appealing to study.

In the 1970s, Robert Tarjan of Princeton University realized that there is an algorithm that can determine whether any two groups are isomorphic with a runtime of $latex n^{{(log\,n)}}$, where n is the number of elements in each group. This is called a quasi-polynomial-time algorithm, and in the hierarchy of runtimes it’s better than exponential time (2n) but worse than polynomial time (n2). This is approximately the same speed as Babai’s graph isomorphism algorithm, and it’s still the best we can do for groups almost 50 years later.

“It roughly means there’s been no progress for half a century,” Sun said.

At the time of Tarjan’s result, the group isomorphism problem was more widely studied than the graph version. That’s flipped today, in part because graph isomorphism has spurred exciting innovations while group isomorphism has stalled.

“All of our tools have been really slow for years, and it’s been hard to exploit what we know about the algebra [of groups],” said James Wilson of Colorado State University.

But despite this disparity in progress, the two problems have a deeper connection than the similarity of their names: The group isomorphism problem (at least in this formulation) reduces to the graph isomorphism problem. This means that any algorithm that can solve the graph problem can also solve the group problem in a similar amount of time. The reverse is not true — progress on group doesn’t imply progress on graph. But the lack of advances on the group problem has weighed on mathematicians seeking commensurate gains on the graph problem. How can you accomplish a harder thing if you can’t first accomplish something that is closely related and seems even easier?

Xiaorui Sun found a new algorithm that can determine more quickly than ever before whether two groups of a particular type are isomorphic, or roughly equivalent.

Maryann Xue

“In other words,” Sun said, “in order to further improve graph isomorphism, group isomorphism is a big bottleneck.”

A Problem Transformed

When progress on a problem stalls as long as it did for group isomorphism, invention is usually necessary to get unstuck. “When you have a big advance, that should be some indication that there’s a new idea,” Grochow said.

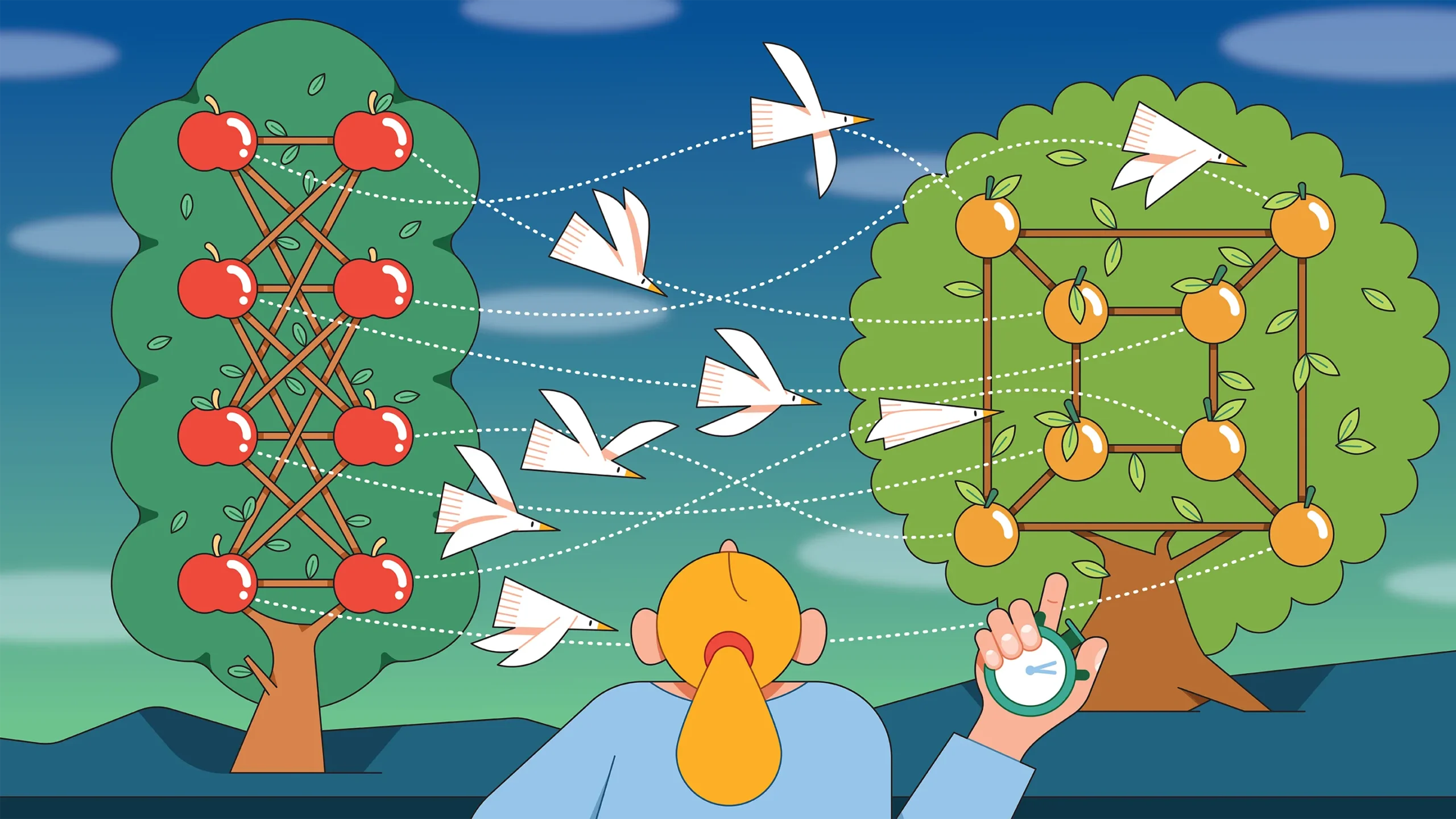

Sun’s work contains a few ideas that involve targeting an important type of group and finding a clever way to break those groups into pieces in order to compare them.

The groups Sun’s algorithm works for are called p-groups of class 2 and exponent p. They are similar to groups in which the product of two elements is another element and the product remains the same regardless of the order in which you multiply them. But what really matters is what they represent for the overall group isomorphism problem. These groups have a very simple structure, which means they should be easy to compare. But despite this simplicity, researchers hadn’t figured out a way to speed up the algorithm. Until they could, it felt hopeless to make improvements for the general question of group isomorphism.

Sun began by switching the setting from groups to matrices, arrays of numbers that serve as foundational objects in linear algebra. This was possible due to a theorem from the 1930s called the Baer correspondence, which transforms this version of the group isomorphism question into a perfectly analogous problem about matrices. In particular, Sun worked with matrix spaces, which are collections of matrices with a special property: The (linear) combination of any two matrices in the space equals another matrix in the space.

In other words, these spaces are structured a lot like groups. So instead of trying to understand when two groups are isomorphic, Sun could just try to understand when two matrix spaces are isometric — a notion of isomorphism of matrix spaces that corresponds to that of groups.

Sun was not the first researcher to adopt this approach, but he was the first to introduce an additional step: splitting a matrix space into two pieces. One piece is the core of the space, in which all the matrices are simple. The other piece is the space surrounding that core, in which all the matrices are particularly complex. This move corresponds to splitting a group into subgroups that contain only some of the total elements.

Sun then applied different algorithmic methods to each of these pieces. The core has a simple structure, so he used a characterization of the structure to represent it in a more organized way. The outer layer is more complex, so there’s no obviously fast way to compare it to another. Instead, Sun’s method takes an approach called individualization and refinement to rule out most of the possible ways of mapping one outer layer onto the other and then uses a computer to work through all remaining possible ways to determine whether an isomorphic matching exists.

The method is similar in spirit to how you might solve a sudoku puzzle. There are some squares whose potential values are constrained by what you already know (the core of the matrix space), allowing you to fill them in quickly. Then there are others (the outer layer) which have fewer constraints, but which you can figure out just by trying all possible values — and as long as there aren’t too many of these kinds of squares, you can still solve the puzzle in a reasonable amount of time.

“I fill in all the things I can quickly tell are constrainable, and now I might go back in and try my heart out on the missing boxes,” Wilson said. “If you’ve narrowed the scope, now it’s a good time to switch gears and use the computer to search for the right values.”

Sun’s breakthrough was showing that it’s always possible to do this splitting for the matrix spaces corresponding to p-groups of class 2 and exponent p. He then proved that after such a split, a combination of algorithmic techniques makes it possible to determine whether two spaces are isomorphic in $latex n^{{(log\,n)}^{5/6}}$ time, a value that’s slightly lower than Tarjan’s $latex n^{{(log\,n)}}$ method. (Both algorithms also include constant terms, which don’t have a big effect on the runtime, and which we’ve left out for clarity.)

The result doesn’t determine which speed category group isomorphism falls into; it’s still somewhere between exponential and polynomial time. But Sun has nudged it slightly closer to the polynomial side of things, and there’s reason to believe more than that should be possible. After all, his work provides computer scientists with a faster group isomorphism algorithm for the hardest kind of groups, raising the possibility that a similar speedup could be within reach for groups of all kinds.

“If you can solve it for p-groups, probably you can solve the whole thing,” Grochow said. “Maybe.”