Mathematicians Discover Novel Way to Predict Structure in Graphs

Introduction

It has been an exhilarating year in combinatorics research. In early 2023, mathematicians were stunned when two of the biggest problems in the field were solved in as many months. Now, a third major question has fallen with a 14-page proof “that has absolutely all the right ideas,” said Mehtaab Sawhney of the Massachusetts Institute of Technology, who added: “It’s completely shocking.”

That question deals with so-called Ramsey numbers — fundamental quantities that reflect the limits of possible disorder. These numbers measure the size that collections of vertices and edges, called graphs, can attain before they inevitably give rise to pattern and structure.

Mathematicians have been studying Ramsey numbers, which are notoriously difficult to pin down, for nearly a century. In doing so, they’ve developed techniques that have led to advances in a variety of disciplines beyond graph theory, including number theory and cryptography.

But the new proof, posted online earlier this month, marks a departure from those techniques. It not only solves a problem that has resisted progress for more than 40 years, but also presents a novel road map for how mathematicians might tackle Ramsey problems going forward.

Party Planning Meets Graph Theory

To understand what a Ramsey number is, imagine you’re hosting a party.

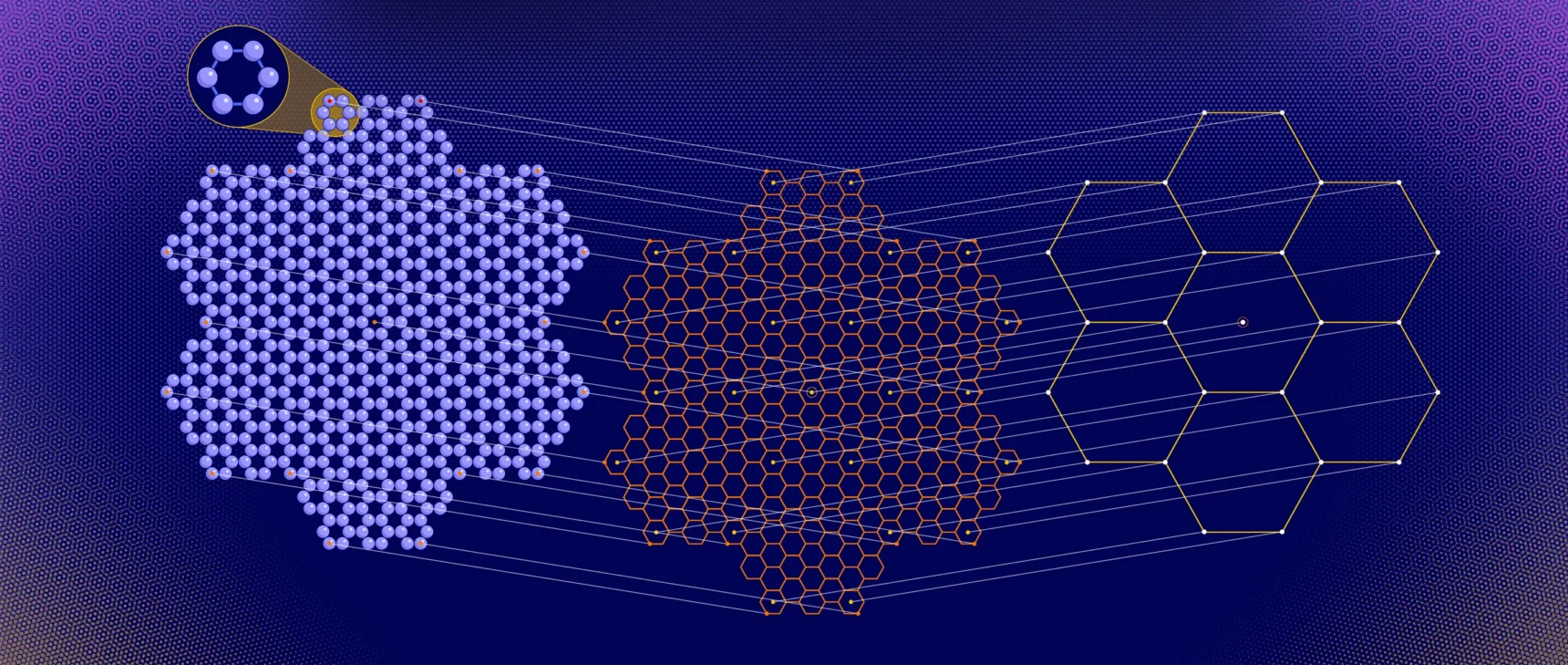

How many people would you need to invite to guarantee that there will be a group of people who all know one another, or a group who are all strangers? You can encode this question in the language of graphs. Assign a vertex to each person. For n people, you get n vertices. Connect every pair of vertices with an edge. Color the edge red if the people in question know each other, and blue if they are strangers.

Merrill Sherman/Quanta Magazine

A group of mutual acquaintances or strangers is represented by a structure called a clique: a set of vertices connected by edges of the same color. The Ramsey number r(s, t) is the minimum number of people you must invite to make it impossible to avoid including a group of s acquaintances or t strangers — in the language of graph theory, a red clique of size s or a blue clique of size t.

For example, we know that r(4, 5) = 25. So you can host a party with 24 people, some of whom know each other, without including a group of four mutual acquaintances or five strangers. But add one more person, and you can’t avoid creating at least one of these structures.

One of this year’s earlier breakthroughs in combinatorics gave a tighter upper bound for “symmetric” Ramsey numbers, where the red and blue cliques are the same size. With asymmetric Ramsey numbers — the subject of the new result — mathematicians fix the size of the red clique and ask what happens as the size of the blue clique gets arbitrarily large.

Mathematicians have only been able to exactly compute a handful of the smallest Ramsey numbers. They proved that r(4, 5) = 25 in 1995. But nobody knows the value of r(4, 6). Similarly, in the early 1980s, they showed that r(3, 9) = 36, but r(3, 10) remains an open problem. (The symmetric case is just as difficult: r(4) = 18, but the value of r(5) is not known.)

And so mathematicians instead try to estimate Ramsey numbers — coming up with upper and lower bounds on their values.

In the 1990s, they used techniques for randomly generating graphs to prove that if the red clique is fixed at 3, and the blue one becomes bigger and bigger, the size of the Ramsey number grows as the square of the size of the blue clique. In other words, r(3, t) is approximately t2.

The new proof asks what happens when the size of the red clique is set at 4, rather than 3. In the 1930s, it was established that r(4, t) grows no faster than around t3. But the best lower bound, found in the 1970s, is about t5/2 — considerably smaller.

Efforts to close the gap by raising the lower bound or lowering the upper one failed for decades, until a pair of mathematicians added a key ingredient.

Hidden in Plain Sight

In 2019, Sam Mattheus, then a graduate student at the Free University of Brussels (VUB) was looking for inspiration. His expertise was in finite geometry, the study of arrangements of points, lines and other structures in specially defined spaces. But even though he found the work interesting, he felt constrained by how strict and exact these geometric constructions had to be.

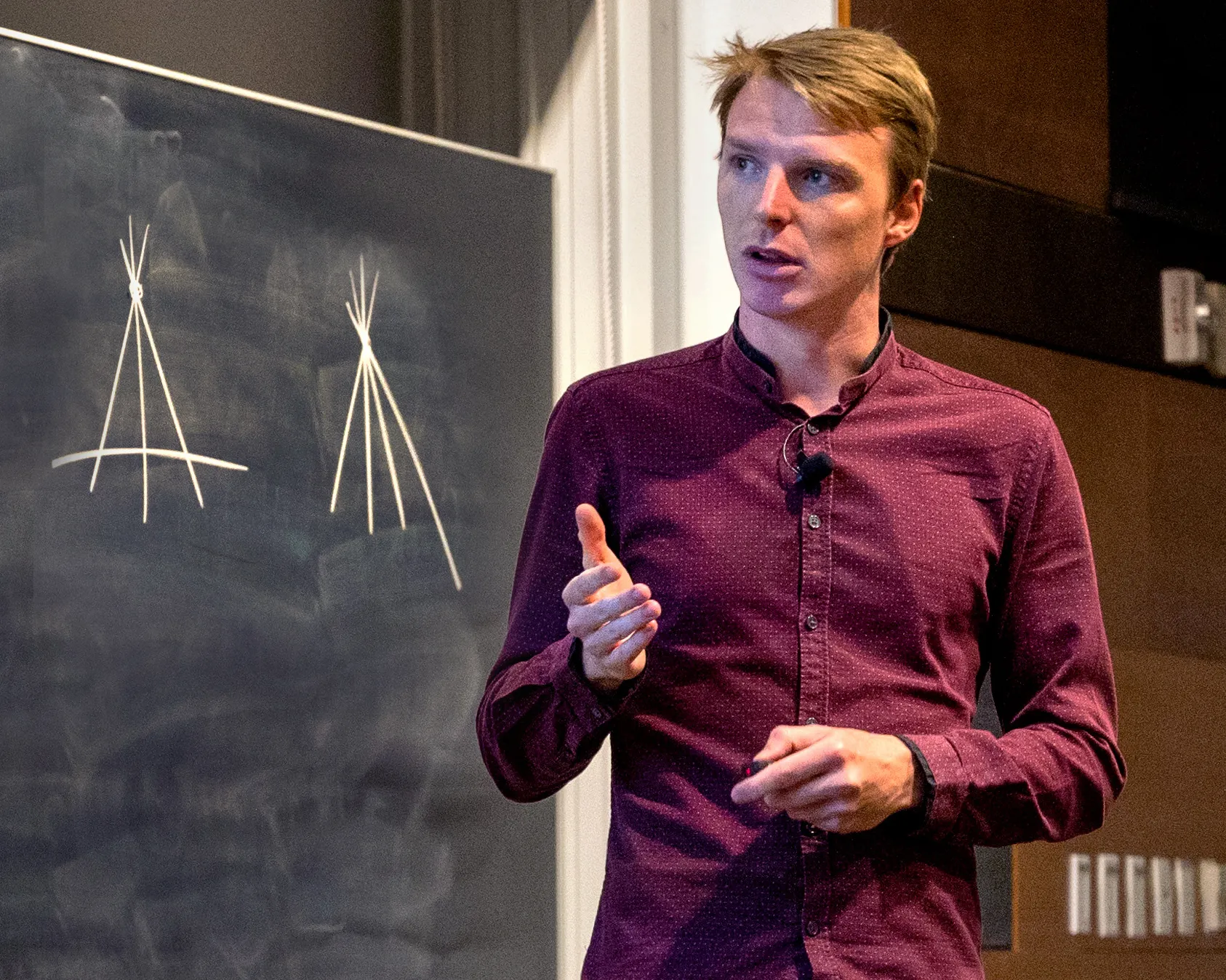

Sam Mattheus figured out how to apply constructions from finite geometry to solve a longstanding problem in Ramsey theory.

Carnegie Mellon University

Then he saw a paper by two mathematicians, Dhruv Mubayi of the University of Illinois, Chicago and Jacques Verstraete of the University of California, San Diego. They were rethinking how to approach Ramsey problems. While traditional techniques involve randomly generating graphs to get good estimates of Ramsey numbers, Mubayi and Verstraete started with “pseudorandom” constructions that look random, but aren’t.

Something clicked in Mattheus. Perhaps, he thought, his geometric perspective could help. For the next couple of years, while he finished his graduate work, he kept this idea at the back of his mind. He then applied for a Fulbright fellowship, which would allow him to pursue a postdoc with Verstraete in the U.S.

In 2022, shortly after Mattheus was awarded the Fulbright (along with another fellowship), he moved to UCSD and began working with Verstraete on r(4,t). The mathematicians wanted to raise the lower bound to meet the known upper bound. To do that, they would have to find a graph with nearly t3 vertices that had no red cliques of size 4 or blue cliques of size t.

To get their proof to work, they reformulated the problem. Imagine simply deleting every blue edge. The goal now becomes to find a graph with no red cliques of size 4, and no independent sets of size t (that is, sets of t vertices without any edges).

Mubayi and Verstraete’s 2019 work implied that if you can construct a pseudorandom graph without red cliques of size 4, then you can take random pieces of it to get smaller graphs without any large independent sets. This was precisely what Mattheus and Verstraete wanted to find. By beginning with an even larger graph, they hoped to find a graph with almost t3 vertices that met their criteria. “Inside these graphs hide better Ramsey graphs,” Verstraete said.

The problem was figuring out the right pseudorandom construction to start with.

The mathematicians had to get there in a somewhat roundabout way. They didn’t start with a pseudorandom graph. They didn’t start with a graph at all.

Instead, Mattheus remembered a strange object called a Hermitian unital, something that finite geometers tend to be very familiar with — but that a mathematician working in combinatorics was unlikely to ever encounter.

A Hermitian unital is a special set of points on a curve, along with lines that pass through those points in specific configurations. Crucially, it can also be represented as a graph that consists of many large but barely overlapping cliques.

This graph is well known, and many of its properties have been studied. But it had never been considered in the context of Ramsey problems. “It’s very specific to this finite-geometry business,” Mattheus said.

The graph might not seem useful at first glance, since it contains so many big cliques. But a key feature of the Hermitian unital is that it only contains size-4 cliques whose vertices are clustered together in an atypical way. Because of this property, it was relatively easy for the mathematicians to destroy those unwanted cliques by deleting edges at random.

These deletions gave them a new graph with no size 4 cliques — but it still contained large independent sets. Mattheus and Verstraete now needed to prove that this graph was pseudorandom. In doing so, they were finally able to use the 2019 proof as they’d hoped. They took random subgraphs with about t3 vertices, and could guarantee that those subgraphs were free of independent sets of size t.

This completed the proof. “This construction is absolutely beautiful,” Sawhney said.

The work heralds a shift in how mathematicians think about Ramsey problems. “It’s very, very natural to try to use randomness to try to push things through and get as good a bound as you can,” said David Conlon of the California Institute of Technology. “But what this really shows is that randomness only gets you so far.”