‘Functional Fingerprint’ May Identify Brains Over a Lifetime

Researchers studying the functional connections among parts of the brain are finding that the “fingerprint” of these patterns can be used to identify individuals over many years, and to distinguish their relatives from strangers.

JR Bee for Quanta Magazine

Introduction

Michaela Cordova, a research associate and lab manager at Oregon Health and Science University, begins by “de-metaling”: removing rings, watches, gadgets and other sources of metal, double-checking her pockets for overlooked objects that could, in her words, “fly in.” Then she enters the scanning room, raises and lowers the bed, and waves a head coil in the general direction of the viewing window and the iPad camera that’s enabling this virtual lab tour (I’m watching from thousands of miles away in Massachusetts). Her voice is mildly distorted by the microphone embedded in the MRI scanner, which from my slightly blurry vantage point looks less like an industrial cannoli than a beast with a glowing blue mouth. I can’t help but think that eerie description might resonate with her usual clientele.

Cordova works with children, assuaging their fears, easing them in and out of the scanner while coaxing them with soft words, Pixar movies and promises of snacks to minimize wiggling. These kids are enrolled in research aimed at mapping the brain’s neural connections.

The physical links between brain regions, collectively known as the “connectome,” are part of what distinguishes humans cognitively from other species. But they also differentiate us from one another. Scientists are now combining neuroimaging approaches with machine learning to understand the commonalities and differences in brain structure and function across individuals, with the goal of predicting how a given brain will change over time because of genetic and environmental influences.

The lab where Cordova works, headed by associate professor Damien Fair, is concerned with the functional connectome, the map of brain regions that coordinate to carry out specific tasks and to influence behavior. Fair has a special name for a person’s distinct neural connections: the functional fingerprint. Like the fingerprints on the tips of our digits, a functional fingerprint is specific to each of us and can serve as a unique identifier.

“I could take a fingerprint from my five-year-old, and I’d still be able to know that fingerprint is hers when she’s 25,” Fair said. Even though her finger might get bigger and go through other changes with age and experience, “still the core features are all there.” In the same way, work from Fair’s lab and others hints that the essence of someone’s functional connectome might be identifiably fixed and that normal changes over a lifetime are largely predictable.

Identifying, tracking and modeling the functional connectome could expose how brain signatures lead to variations in behavior and, in some cases, confer a higher risk of developing certain neuropsychiatric conditions. To this end, Fair and his team systematically search their data for patterns in brain connectivity across scans, studies and, ultimately, clinical populations.

Characterizing the Connectome

Traditional techniques for mapping the functional connectome focus on just two brain regions at a time, using MRI data to correlate how the activity of each changes in relation to the other. Brain regions with signals that vary in unison are assigned a score of 1. If one increases while the other decreases, that merits a –1. If there is no observable relationship between the two, that’s a 0.
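The 1, –1 and 0 scores described here behave like a correlation coefficient. As a rough illustration only, the Python sketch below computes a Pearson correlation between made-up activity series for two regions. The function name `pearson` and the toy numbers are assumptions for this example, not part of any lab's actual pipeline.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length activity series."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # How the two series move together around their own means.
    co = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    # Spread of each series on its own.
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return co / (spread_x * spread_y)

# Toy, made-up signals for illustration (not real MRI data).
region_a = [1.0, 2.0, 3.0, 4.0, 5.0]
in_unison = [2.0, 4.0, 6.0, 8.0, 10.0]   # rises whenever region_a rises
opposed = [5.0, 4.0, 3.0, 2.0, 1.0]      # falls whenever region_a rises
unrelated = [2.0, 1.0, 4.0, 1.0, 2.0]    # no linear relationship with region_a

print(pearson(region_a, in_unison))
print(pearson(region_a, opposed))
print(pearson(region_a, unrelated))
```

The three printed values land very close to 1, –1 and 0 respectively. Real signals are noisy, so actual scores fall somewhere in between.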

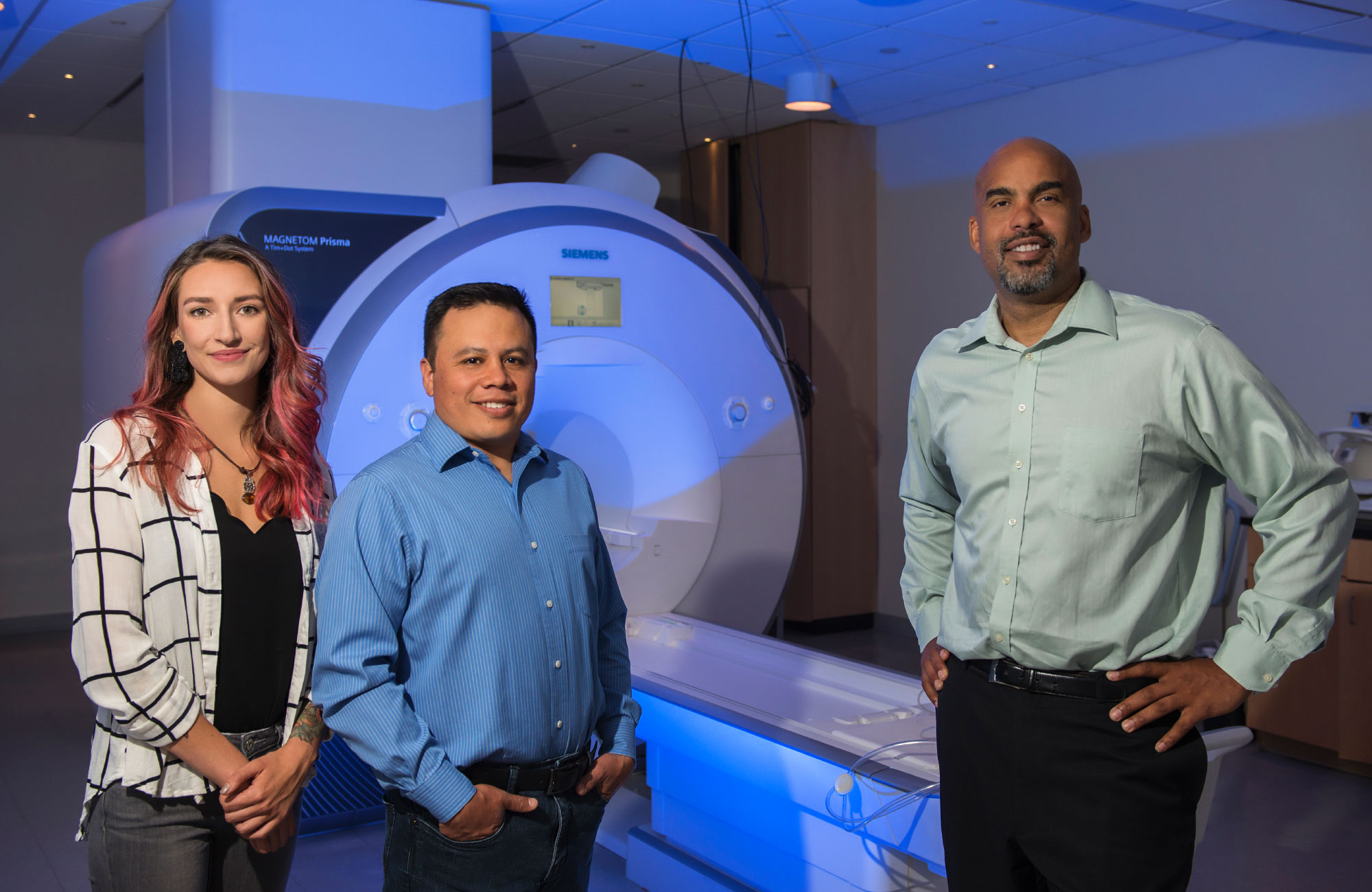

Damien Fair (at right), an associate professor of neuroscience and psychiatry at Oregon Health and Science University, heads a lab that maps how brain areas work together during tasks and behaviors. With colleagues such as assistant professor Oscar Miranda-Dominguez (at center) and research associate Michaela Cordova (at left), Fair turns MRI data from human subjects into profiles of the functional “connectome.”

Jordan Sleeth/OHSU

This approach, however, has limitations. For instance, it considers these pairs of regions independently of the rest of the brain, even though each is likely to also be influenced by inputs from neighboring areas, and those extra inputs might mask the true functional connection of any pair. Overcoming such assumptions required looking at cross talk throughout the whole brain, not just a subset, and revealing more widespread, informative patterns in connectivity that might have otherwise gone unnoticed.

In 2010, Fair co-authored a paper in Science that described using machine learning and MRI scans to take into account every pair of correlations simultaneously, in order to estimate the maturity (or “age”) of a given brain. Although this collaboration wasn’t the only one analyzing patterns across multiple connections at once, it generated a buzz throughout the research community because it was the first to use those patterns to predict the brain age of a given individual.

Four years later, in a paper that coined the phrase “functional fingerprinting,” Fair’s team devised their own method of mapping the functional connectome and predicting the activity of single brain regions based on the signals coming from not one but all the regions in combination with one another.

In their simple linear model, the activity of a single region is equal to the summed contributions of all the other areas, each of which is weighted, since some lines of communication between regions are stronger than others. The relative contributions of each area are what make a functional fingerprint unique. The researchers needed just 2.5 minutes of high-quality MRI data per participant to generate the linear model.
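To make the idea of a weighted sum concrete, here is a minimal Python sketch that fits weights by ordinary least squares, so that one region's activity is approximated by a weighted sum of the others. Everything in it is an assumption for illustration: the helper names (`solve`, `fit_weights`, `predict`), the toy signals, and the choice of plain least squares, which may differ from the fitting procedure the researchers used.

```python
def solve(a, b):
    """Solve the square system a * w = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Move the row with the largest entry in this column into pivot position.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution from the last row upward.
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][c] * w[c] for c in range(r + 1, n))
        w[r] = (m[r][n] - known) / m[r][r]
    return w

def fit_weights(target, others):
    """Least-squares weights so target[t] is close to sum_j w[j] * others[j][t]."""
    k = len(others)
    gram = [[sum(p * q for p, q in zip(others[i], others[j])) for j in range(k)]
            for i in range(k)]
    rhs = [sum(p * q for p, q in zip(others[i], target)) for i in range(k)]
    return solve(gram, rhs)

def predict(weights, others):
    """Weighted sum of the other regions' activity at each time point."""
    steps = len(others[0])
    return [sum(w * series[t] for w, series in zip(weights, others))
            for t in range(steps)]

# Toy, made-up signals: region_a was built as 2 * region_b - 1 * region_c.
region_b = [1.0, 0.0, 2.0, 1.0, 3.0]
region_c = [0.0, 1.0, 1.0, 2.0, 1.0]
region_a = [2.0, -1.0, 3.0, 0.0, 5.0]

weights = fit_weights(region_a, [region_b, region_c])
print(weights)
print(predict(weights, [region_b, region_c]))
```

In this toy case the fit recovers weights close to 2 and –1. In the spirit of the article, the full set of such weights for every region would play the role of the fingerprint.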

According to their calculations, roughly 30 percent of the connectome is unique to the individual. The majority of these regions tend to govern “higher order” tasks that require more cognitive processing, such as learning, memory and attention — as compared with more basic functions like sensory, motor and visual processing.

It makes sense that those areas would be so distinctive, Fair explained, because those higher-order control regions are in essence what make us who we are. Indeed, brain areas like the frontal and parietal cortices developed later in the course of evolution, and enlarged as modern humans emerged.

“If you think about what is likely to be most similar across people, it would be the more simple stuff,” Fair said, “like how I move my fingers and how visual information is initially processed.” Those areas vary less across the human population.

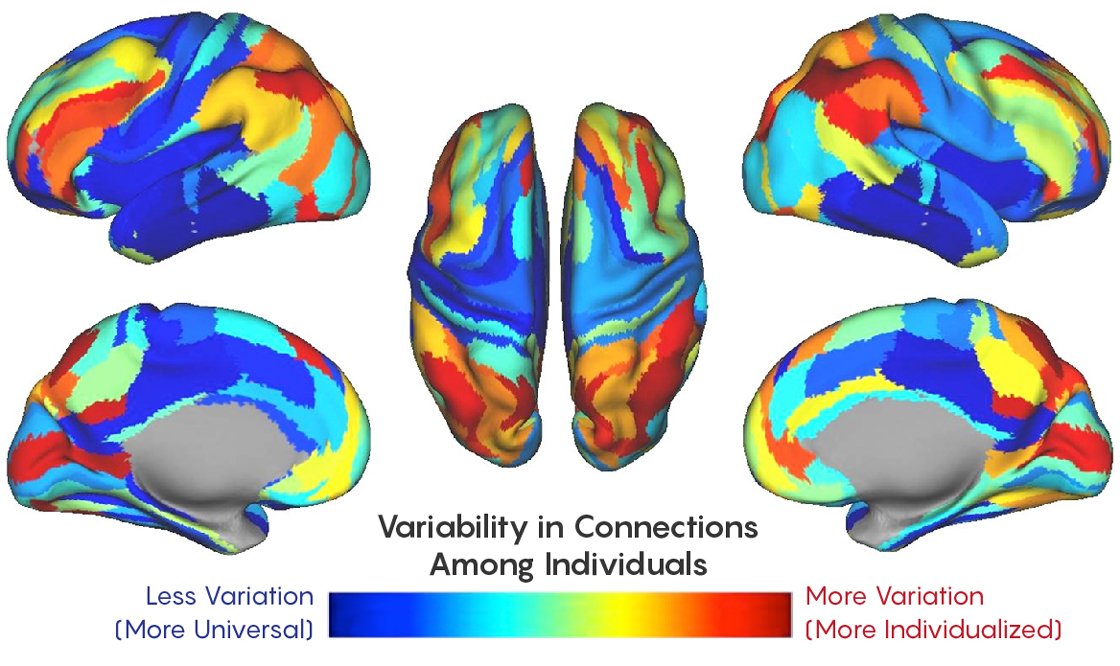

The 2014 analysis by Damien Fair and his colleagues assessed how much patterns of functional connectivity in the human brain vary across the population. About 30 percent of the connections, mostly in areas linked to greater cognitive processing, were unique to individuals.

Lucy Reading-Ikkanda/Quanta Magazine, adapted from doi.org/10.1371/journal.pone.0111048

By considering the unique activity patterns in the distinctive regions, the model could identify an individual based on new scans taken two weeks after the fact. But what’s a few weeks out of a lifetime? Fair and his team began to wonder if someone’s functional fingerprint could persist over the course of years, or even generations.
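One simple way to picture identification from a later scan is as a nearest-match lookup: compare the fingerprint from the new scan against stored ones and pick the closest. The Python sketch below does this with Euclidean distance. The matching rule, the function names and the three-number "fingerprints" are all hypothetical, and the article does not say which matching procedure the team actually used.

```python
from math import sqrt

def distance(fp_a, fp_b):
    """Euclidean distance between two fingerprints (lists of model weights)."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(fp_a, fp_b)))

def identify(new_fp, known):
    """Return the name whose stored fingerprint is closest to the new one."""
    return min(known, key=lambda name: distance(new_fp, known[name]))

# Toy, made-up fingerprints from a first scanning session.
known_fingerprints = {
    "participant_1": [0.8, -0.2, 0.5],
    "participant_2": [0.1, 0.6, -0.4],
    "participant_3": [-0.3, 0.3, 0.9],
}

# A later scan: similar to participant_1, but not identical.
rescan = [0.7, -0.1, 0.6]

print(identify(rescan, known_fingerprints))  # prints "participant_1"
```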

If the researchers could compare one person’s functional fingerprint to those of close relatives, they might be able to distinguish between the genetic and environmental forces that shape our neural circuitry.

Tracing Neural Lineage

The first step in linking genes to brain organization is determining which aspects of the connectome are shared between family members. The task is nuanced: Relatives are known to have brain structures that are similar in terms of volume, shape and white matter integrity, but that does not necessarily imply they have the same connections linking those structures. Since certain mental conditions also tend to run in families, Fair’s mission to detect heritable connections might eventually help discern the parts of the brain and genes that increase a person’s risk of developing specific disorders.

As they described in a paper posted in June, the lab set out to create a machine-learning framework to ask whether the cross talk between brain regions was more alike in relatives than in strangers.

The researchers retested their linear model on a new set of brain scans — this time including children — to ensure the connectome remained relatively stable throughout early adolescence. Indeed, the model was sensitive enough to identify individuals despite developmental modifications in their neural connections over the course of a few years.

Investigating the roles of genetics and environment in shaping brain circuits first involved using a sorting algorithm known as a classifier to divide the tested individuals into two groups, “related” and “unrelated,” based on their functional fingerprints. The model was trained on the children from Oregon, and then tested on a fresh set of children as well as another sample that included adults from the Human Connectome Project.
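The article does not specify which classifier was used, so the Python sketch below swaps in about the simplest one possible: a distance threshold learned from labeled training pairs, then applied to pairs it has not seen. The function names, the two-number fingerprints and the threshold rule are assumptions for illustration only.

```python
from math import sqrt
from statistics import mean

def distance(fp_a, fp_b):
    """Euclidean distance between two fingerprints."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(fp_a, fp_b)))

def train_threshold(pairs):
    """Learn a cutoff halfway between typical related and unrelated distances.

    pairs: list of (fingerprint_a, fingerprint_b, is_related) tuples.
    """
    related = [distance(a, b) for a, b, is_related in pairs if is_related]
    unrelated = [distance(a, b) for a, b, is_related in pairs if not is_related]
    return (mean(related) + mean(unrelated)) / 2

def classify(fp_a, fp_b, threshold):
    """Label a pair as related when their fingerprints are close enough."""
    return "related" if distance(fp_a, fp_b) < threshold else "unrelated"

# Toy, made-up training pairs (hypothetical values, not study data).
training_pairs = [
    ([0.8, -0.2], [0.7, -0.1], True),
    ([0.1, 0.6], [0.2, 0.5], True),
    ([0.8, -0.2], [0.1, 0.6], False),
    ([0.7, -0.1], [-0.3, 0.9], False),
]
threshold = train_threshold(training_pairs)

# Fresh pairs the classifier has not seen during training.
print(classify([0.5, 0.5], [0.4, 0.6], threshold))    # prints "related"
print(classify([0.5, 0.5], [-0.5, -0.4], threshold))  # prints "unrelated"
```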

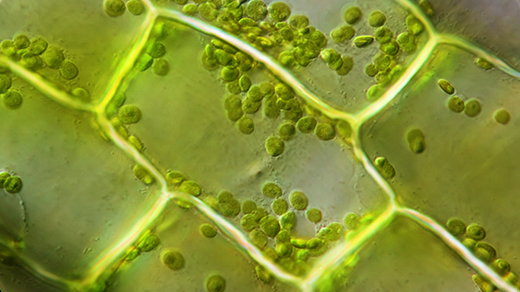

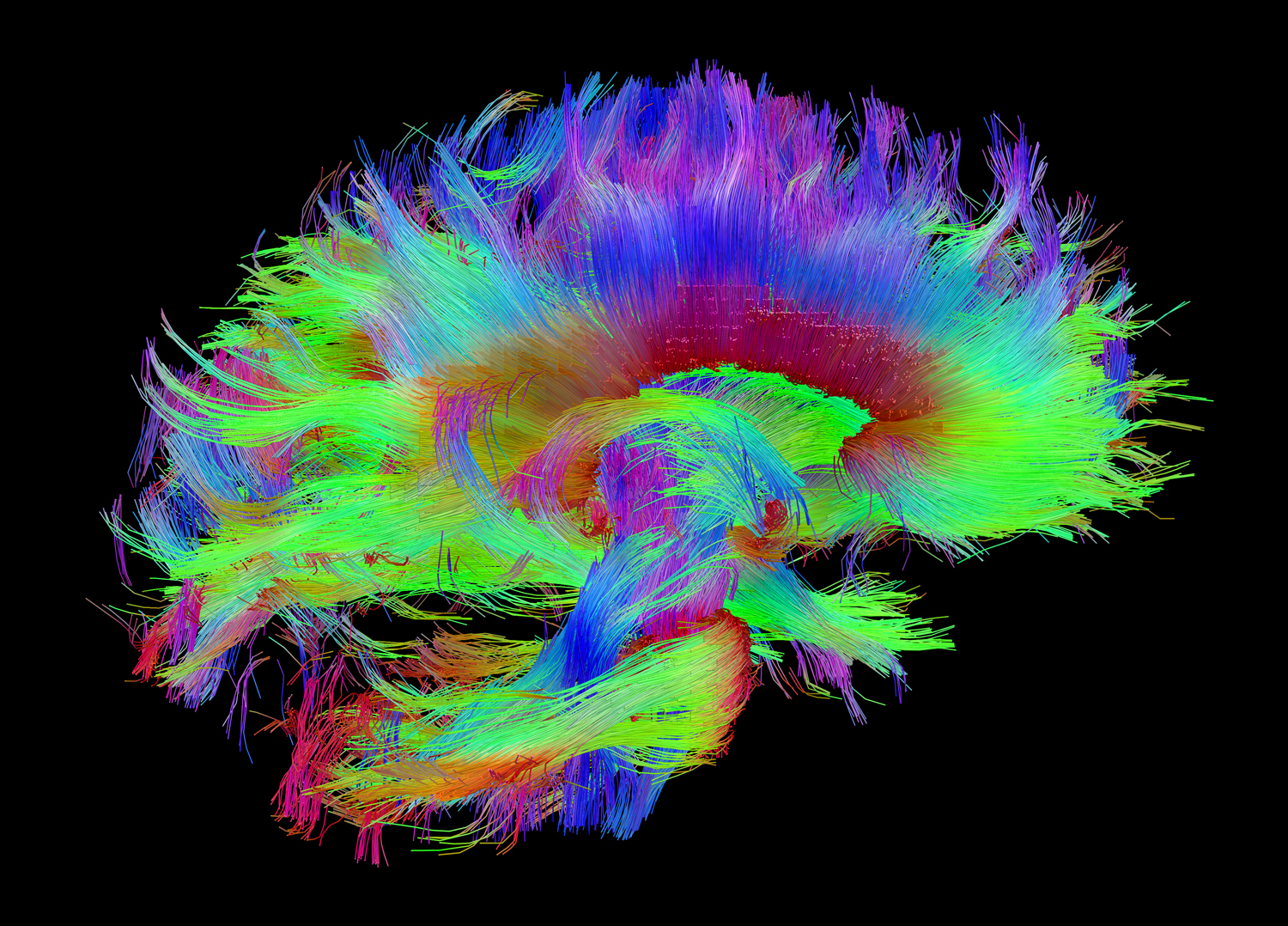

This image is one of many generated by the Human Connectome Project, which maps anatomical connections among brain areas. In contrast, the mapping effort occurring in Damien Fair’s laboratory and others is concerned with the functional connectome — information about how brain areas stimulate or suppress each other.

Courtesy of the Laboratory of Neuro Imaging and Martinos Center for Biomedical Imaging, Consortium of the Human Connectome Project — www.humanconnectomeproject.org

Much as a human observer might posit the relationships between people based on physical features like eye color, hair color and height, the classifier did the same using neural connections. Functional fingerprints appeared most similar between identical twins, followed by fraternal twins, nontwin siblings and, finally, unrelated participants.

Research assistant professor Oscar Miranda-Dominguez, a member of Fair’s lab and the first author on the study, was surprised they were able to identify adult siblings using the models trained on children. The models trained on adults could not do this, possibly because the adults’ higher-order systems had already fully matured, making their features less generalizable to young, developing brains. “A further study with larger samples and age spans might clarify the maturation aspect,” Miranda-Dominguez said.

The model’s ability to draw nuanced distinctions between family members, he added, was remarkable, because the researchers had trained the classifier to delineate only “related” and “unrelated,” rather than degrees of relatedness. (Their 2014 linear model was able to detect these subtle differences, but more traditional correlational approaches were not.)

Although their twin sample was not big enough to finely parse genetic influences from environmental ones, there’s “no question” in Fair’s mind that the latter plays a large part in shaping the functional fingerprint. Their supplemental materials described a model to differentiate shared environment from shared genetics, but the team is careful not to draw firm conclusions without a larger data set. “Most of what we’re seeing here is about the genetics and less about the environment,” Fair said, “not that the environment doesn’t have a big influence on the connectome, too.”

To dissociate the contributions of shared environments from those of shared genetics, Miranda-Dominguez said, “one way to proceed could be to find the brain features that can distinguish identical twins from nonidentical twins, since the two types of twins share the same environment but only identical twins share the same genetic contributions.”

Although all the neural circuits they examined demonstrated some level of commonality between siblings, the higher-order systems were the most heritable. These were the same areas exhibiting the most variation among individuals in the study four years prior. As Miranda-Dominguez pointed out, those regions mediate behaviors stemming from the nexus of social interaction and genetics, perhaps predicting a “family identity.” Add “distributed brain activity” to the list of traits that run in families, right after high blood pressure, arthritis and nearsightedness.

Seeking Signs of Brain-Predicted Age

While Fair and Miranda-Dominguez in Oregon characterize the genetic underpinnings of the functional connectome, at King’s College London the research fellow James Cole is hard at work using neuroimaging and machine learning to decrypt the heritability of brain age. Fair’s team defines brain age in terms of the functional connections between regions, but Cole employs it as an index of atrophy, or brain shrinkage, over time. As cells shrivel or die throughout the years, neural volume decreases but the skull remains the same size, and the extra space fills up with cerebrospinal fluid. In a sense, past a certain point in development brains age by withering.

In 2010, the same year that Fair co-authored the influential Science paper that generated excitement around harnessing functional MRI data to assign brain age, one of Cole’s colleagues led a related effort published in NeuroImage, using anatomical data, because the difference between the inferred brain age and chronological age (the “brain age gap”) might be biologically informative.

According to Cole, aging affects each person, each brain and even each cell type slightly differently. Precisely why such a “mosaic of aging” exists is a mystery, but Cole will tell you that, at some level, we still don’t know what aging is. Gene expression changes with time, as do metabolism, cell function and cell turnover. Yet organs and cells can change independently; there’s no single gene or hormone that drives the whole aging process.

Although it’s widely accepted that different people age at different rates, the notion that various facets of the same person might mature separately is slightly more controversial. As Cole explained, many methods to gauge aging exist, but not many have been combined or compared just yet. The hope is that by measuring many tissues within an individual, researchers will be able to devise a more comprehensive assessment of aging. Cole’s work is a start at doing this with images of brain tissue.

The theoretical framework behind Cole’s approach is relatively straightforward: Feed data from healthy individuals into an algorithm that learns to predict brain age from anatomical data, then test the model on a fresh sample, subtracting the participants’ chronological age from their brain age. If their brain age is greater than their chronological one, this signals an accumulation of age-related changes, possibly due to diseases like Alzheimer’s.
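That train-then-subtract recipe can be sketched in a few lines of Python. This is a deliberately simplified stand-in: it fits a straight line from a single hypothetical anatomical measure (total brain volume) to age, whereas real brain-age models draw on far richer imaging data and more flexible algorithms. The variable names and all the numbers are made up for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = slope * xs + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Toy training sample of healthy individuals (made-up numbers).
healthy_volume_ml = [1200.0, 1150.0, 1100.0, 1050.0, 1000.0]
healthy_age = [30.0, 40.0, 50.0, 60.0, 70.0]

slope, intercept = fit_line(healthy_volume_ml, healthy_age)

def brain_age(volume_ml):
    """Age the fitted model predicts from the anatomical measure."""
    return slope * volume_ml + intercept

def brain_age_gap(volume_ml, chronological_age):
    """Predicted brain age minus chronological age; positive means 'older-looking'."""
    return brain_age(volume_ml) - chronological_age

# A fresh participant: 52 years old, with a volume typical of a 60-year-old here.
gap = brain_age_gap(1050.0, 52.0)
print(round(gap, 1))
```

With these toy numbers the gap comes out at about 8 years, meaning the brain looks older than the person's chronological age.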

In 2017, Cole used algorithms called Gaussian process regressions (GPRs) to generate a brain age for each participant. This allowed him to compare his own assessment of age to other existing measures, such as which regions of the genome are turned on and off by the addition of methyl groups at various ages. Biomarkers like methylation age had been previously used to predict mortality, and Cole suspected brain age could be used to do so as well.

Indeed, individuals with brains that appeared older than their chronological age tended to be at a greater risk for poor physical and cognitive health and, ultimately, death. Cole was surprised to learn that having a high neuroimaging-derived brain age didn’t necessarily correlate with a high methylation age. However, if participants had both, their risk of mortality increased.

Later that same year, Cole and his colleagues extended this work by using digital neural networks to assess whether brain-predicted age was more similar between identical twins than fraternal twins. The data came straight off the MRI scanner, and included images of the whole head, complete with nose, ears, tongue, spinal cord and, in some cases, a bit of fat around the neck. With minimal preprocessing, they were fed into the neural network, which, after training and testing, generated its best estimates of brain age. In keeping with the genetic-influence hypothesis, the brain ages of identical twins were more similar than those of fraternal twins.

While his results indicate that brain age is likely due in part to genetics, Cole warned not to neglect environmental effects. “Even if you do have a genetic predisposition to having an older-appearing brain,” he said, “chances are if you could modify your environment, that could more than outweigh the damage that your genes might be causing.”

The help that neural networks provide to this effort to read brain age comes with trade-offs, at least for now. They can sift through MRI data to find differences between individuals, even when researchers don’t know what features might be relevant. But a general caveat of deep learning is that no one knows what features in a data set the neural net is identifying. Because the raw MRI images he used included the entire head, Cole acknowledges that perhaps we should call what they are measuring “whole-head age” rather than brain age. As someone once pointed out to him, he said, people’s noses change over time, so what’s to say the algorithm wasn’t tracking that instead?

Cole is confident this isn’t the case, however, because his neural networks performed similarly on both raw data and data processed to remove head structures outside the brain. The real payoff from eventually understanding what the neural networks are paying attention to, he expects, will be clues about what specific parts of the brain figure most in the age assessment.

Tobias Kaufmann, a researcher at the Norwegian Centre for Mental Disorders Research at the University of Oslo, suggested the machine learning techniques used to predict brain age almost don’t matter if the model is properly trained and tuned. The results from different algorithms will typically converge, as Cole found when he compared his GPRs to the neural network.

The difference, according to Kaufmann, is that Cole’s deep learning method reduces the need for tedious, time-consuming preprocessing of MRI data. Shortening this step could someday speed up diagnoses in clinics, but for now, it also protects scientists from accidentally imposing biases on the raw data.

Richer data sets might also permit more complex predictions, like identifying patterns indicative of mental health. Having all the information in the data set, without transforming or reducing it, might therefore help the science, Kaufmann said. “I think that’s the big advantage of the deep learning method.”

Kaufmann is the lead author on a paper currently under review, constituting the largest brain-imaging study on brain age to date. The researchers employed machine learning on structural MRI data to reveal which brain regions showed the strongest aging patterns in people with mental disorders. Next, they took their inquiry one step further, probing which genes underlie brain aging patterns in healthy people. They were intrigued to note that many of the same genes that affected brain age were also involved in common brain disorders, perhaps indicating similar biological pathways.

The next goal, he said, is to go beyond heritability to unravel the specific pathways and genes involved in brain anatomy and signaling.

Although Kaufmann’s approach to decrypting brain age, like Cole’s, focuses on anatomy, he underscored the importance of gauging brain age in terms of connectivity as well. “I think both of these approaches are extremely important to take,” he said. “We need to understand the heritability and the underlying genetic architecture of both brain structure and function.”

Cole, for one, has no shortage of further research endeavors in mind. There is something compelling about the need for artificial intelligence to understand our own, underscored by advances that illuminate the connection between genes, brains, behaviors and ancestry. Unless, of course, he finds he’s been studying nose age all along.

This article was reprinted on Wired.com.