Researchers Identify ‘Master Problem’ Underlying All Cryptography

Samantha Mash for Quanta Magazine

Introduction

In 1868, the mathematician Charles Dodgson (better known as Lewis Carroll) proclaimed that an encryption scheme called the Vigenère cipher was “unbreakable.” He had no proof, but he had compelling reasons for his belief, since mathematicians had been trying unsuccessfully to break the cipher for more than three centuries.

There was just one small problem: A German infantry officer named Friedrich Kasiski had, in fact, broken it five years earlier, in a book that garnered little notice at the time.

Cryptographers have been playing this game of cat and mouse, creating and breaking ciphers, for as long as people have been sending secret information. “For thousands of years, people [have been] trying to figure out, ‘Can we break the cycle?’” said Rafael Pass, a cryptographer at Cornell Tech and Cornell University.

Five decades ago, cryptographers took a huge step in this direction. They showed that it’s possible to create provably secure ciphers if you have access to a single ingredient: a “one-way function,” something that’s easy to carry out but hard to reverse. Since then, researchers have devised a wide array of candidate one-way functions, from simple operations based on multiplication to more complicated geometric or logarithmic procedures.

Today, the internet protocols for tasks like transmitting credit card numbers and digital signatures depend on these functions. “Most of the crypto that is used in the real world is something that can be based on one-way functions,” said Yuval Ishai, a cryptographer at the Technion in Haifa, Israel.

But this advance has not ended the cat-and-mouse game — it has only sharpened its focus. Now, instead of having to worry about the security of every aspect of an encryption scheme, cryptographers need only concern themselves with the function at its core. Yet none of the functions currently in use has ever been definitively proved to be a one-way function — we don’t even know for sure that true one-way functions exist. If they do not, cryptographers have shown, then secure cryptography is impossible.

In the absence of proofs, cryptographers simply hope that the functions that have survived attacks really are secure. Researchers don’t have a unified approach to studying the security of these functions because each function “comes from a different domain, from a different set of experts,” Ishai said.

Cryptographers have long wondered whether there is a less ad hoc approach. “Does there exist some problem, just one master problem, that tells us whether cryptography is possible?” Pass asked.

Now he and Yanyi Liu, a graduate student at Cornell, have shown that the answer is yes. The existence of true one-way functions, they proved, depends on one of the oldest and most central problems in another area of computer science called complexity theory, or computational complexity. This problem, known as Kolmogorov complexity, concerns how hard it is to tell the difference between random strings of numbers and strings that contain some information.

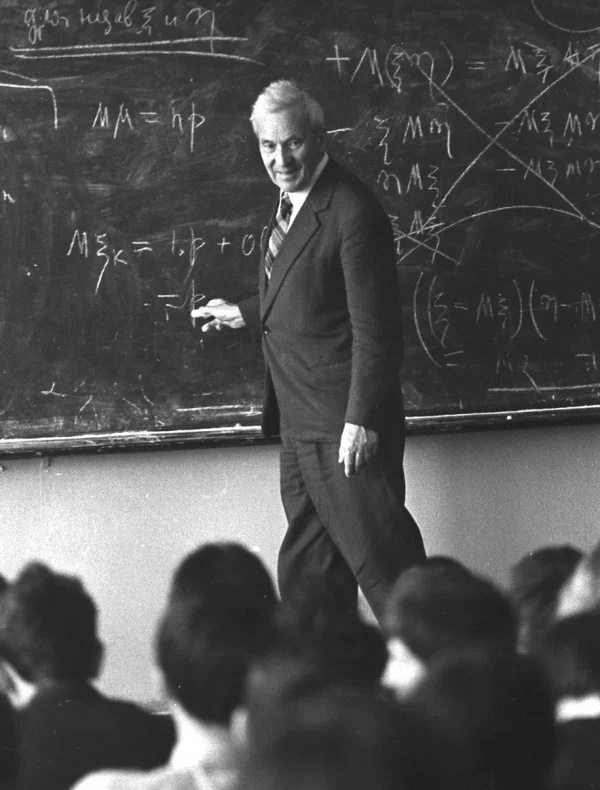

Rafael Pass, a cryptographer at Cornell Tech and Cornell University, helped find a question that could reveal whether one-way functions — and thus modern cryptography — truly exist.

Hugh Gordon

Liu and Pass proved that if a certain version of Kolmogorov complexity is hard to compute, in a specific sense, then true one-way functions do exist, and there’s a clear-cut way to build one. Conversely, if this version of Kolmogorov complexity is easy to compute, then one-way functions cannot exist. “This problem, [which] came before people introduced one-way functions, actually turns out to fully characterize it,” Pass said.

The finding suggests that instead of looking far and wide for candidate one-way functions, cryptographers could just concentrate their efforts on understanding Kolmogorov complexity. “It all hinges on this problem,” Ishai said. The proof is “breakthrough work on the foundations of cryptography.”

The paper has prompted cryptographers and complexity theorists to work together more closely, spurring a burst of activity uniting their approaches. “Multiple research groups are working to get to the bottom of things,” said Ryan Williams, a computer scientist at the Massachusetts Institute of Technology.

Leveraging Hardness

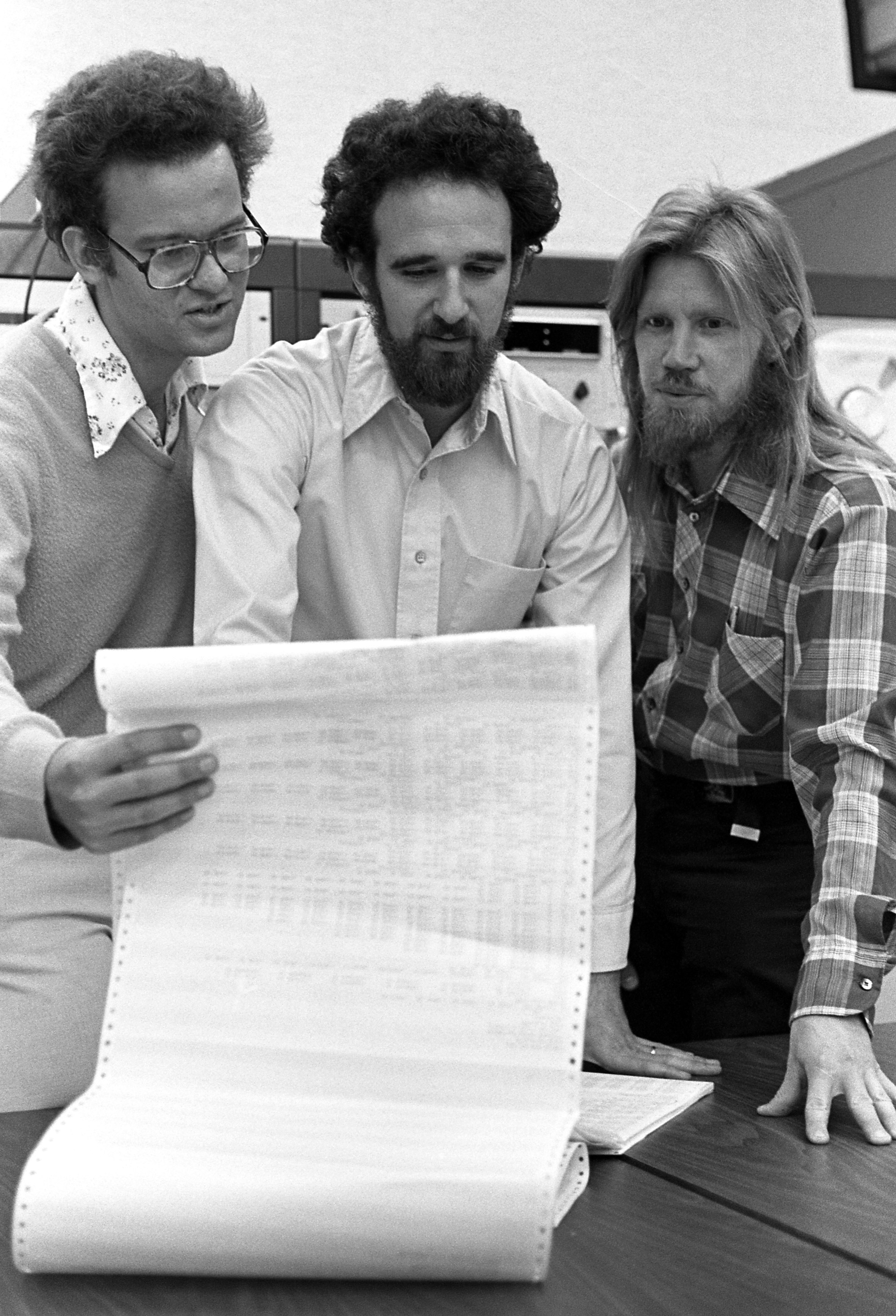

Usually, a hard problem is an obstacle. But in cryptography, where you can deploy it against your adversaries, it’s a boon. In 1976, Whitfield Diffie and Martin Hellman wrote a groundbreaking paper in which they argued that the particular hardness of one-way functions was precisely what cryptographers needed to meet the demands of the dawning computer age. “We stand today on the brink of a revolution in cryptography,” they wrote.

Martin Hellman (center) and Whitfield Diffie (right), shown here in a 1977 photo with Ralph Merkle, wrote a seminal paper that linked one-way functions to cryptography.

Chuck Painter / Stanford News Service

In the decades that followed, researchers figured out how to build a wide variety of cryptographic tools out of one-way functions, including private key encryption, digital signatures, pseudorandom number generators and zero-knowledge proofs (in which one person can convince another that a statement is true without revealing the proof). Diffie and Hellman’s paper was “almost like a prophecy,” Pass said. From the single building block of one-way functions, cryptographers managed to build “these super-complex and beautiful creatures,” he said.

To get a feel for how one-way functions work, imagine someone asked you to multiply two large prime numbers, say 6,547 and 7,079. Arriving at the answer of 46,346,213 might take some work, but it is eminently doable. However, if someone instead handed you the number 46,346,213 and asked for its prime factors, you might be at a loss. In fact, for numbers whose prime factors are all large, there is no efficient way (that we know of) to find those factors. This makes multiplication a promising candidate for a one-way function: As long as you start with large enough prime numbers, the process seems easy to do, but hard to undo. But we don’t know for sure that this is the case. Someone could find a fast way to factor numbers at any moment.
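
The asymmetry can be made concrete with a short Python sketch. The function names are illustrative, and trial division stands in for factoring only because it is the simplest method to write down, not because it is the best one known.

```python
def multiply(p: int, q: int) -> int:
    """The easy direction: one multiplication."""
    return p * q


def factor_by_trial_division(n: int) -> tuple[int, int]:
    """The hard direction, done naively: try divisors 2, 3, 4, ... in turn.

    Returns (d, n // d) for the smallest divisor d > 1, or (n, 1) if n is prime.
    """
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 1
    return n, 1


product = multiply(6547, 7079)
print(product)                            # 46346213
print(factor_by_trial_division(product))  # (6547, 7079)
```

Multiplying takes a single operation, while the loop tries thousands of candidate divisors before it reaches 6,547. For an eight-digit product that is still instantaneous, but the number of candidates grows roughly with the square root of the product, so every two extra digits multiply the work by about ten. Faster factoring methods exist, but none is known to be efficient on ordinary computers.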

Cryptographers have gleaned an assortment of potential one-way functions from different areas of mathematics, but no single function has a higher claim than another. If, say, multiplication were toppled as a one-way function tomorrow, that wouldn’t say anything about the validity of the other candidate one-way functions. Cryptographers have long asked whether there is some quintessential one-way function — one which, if broken, would pull all the other candidates down with it.

In 1985, Leonid Levin, a computer scientist at Boston University, answered this question in a formal sense, demonstrating a “universal” one-way function that is guaranteed to be a one-way function if anything is. But his construction was “very artificial,” said Eric Allender, a computer scientist at Rutgers University. It is “not something anybody would have studied for any reason other than to get a result like that.”

What cryptographers were really after was a universal one-way function that stemmed from some natural problem — one that would give real insight into whether one-way functions exist. Researchers long had a particular problem in mind: Kolmogorov complexity, a measure of randomness that originated in the 1960s. But its connection with one-way functions was subtle and elusive.

Pass became fascinated with that connection as a graduate student in 2004. Over the years he toyed with the problem, without much success. But he felt sure there was something there, and a burst of activity in Kolmogorov complexity over the past five years only heightened his interest.

Pass tried to persuade several graduate students to explore the question with him, but none were willing to take on what might turn out to be a fruitless project. Then Yanyi Liu started graduate school at Cornell. “Yanyi was fearless,” Pass wrote in an email. Together, they plunged in.

What Is Random?

The concept of randomness is, by its nature, tricky to pin down. There’s a Dilbert comic strip in which an office tour guide shows Dilbert the accounting department’s “random number generator” — which turns out to be a monster who just keeps repeating the number 9. “Are you sure that’s random?” Dilbert asks. “That’s the problem with randomness,” his guide answers, “you can never be sure.”

Yanyi Liu worked with Pass to meaningfully connect one-way functions and Kolmogorov complexity.

Changzhi Xie

If someone shows you the number strings 99999999999999999999 and 03729563829603547134 and says they were chosen randomly, you can’t exactly debunk that claim: Both strings have the same probability of being created when you pick digits randomly. Yet the second string certainly feels more random.

“We think that we know what we mean when we say, ‘That thing is random,’” Allender said. “But it wasn’t really until the notion of Kolmogorov complexity was defined that that was shown to have a mathematically meaningful definition.”

To get at the notion of a random string of numbers, Andrey Kolmogorov decided in the 1960s to focus not on the process by which the string was generated, but on the ease with which it can be described. The string 99999999999999999999 can be concisely described as “20 9s,” but the string 03729563829603547134 might not have any description shorter than the string itself.

Kolmogorov defined the complexity of a string as the length of the shortest possible program that produces the string as an output. If we’re dealing with, say, thousand-digit strings, some have very short programs, such as “print a thousand 9s” or “print the number 2^3319” or “print the first thousand digits of π using the following formula….” Other strings are impossible to describe succinctly and have no program shorter than one that writes out the entire string and just tells the computer to print it. And some strings have programs whose length falls somewhere in the middle.
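
A rough feel for "length of the shortest description" can be had from an ordinary compressor. The sketch below is an assumption-laden stand-in: the size of a zlib-compressed string is only a crude upper bound on description length, not Kolmogorov complexity itself, and the helper name `compressed_length` is invented for this example.

```python
import random
import zlib


def compressed_length(s: str) -> int:
    """Bytes zlib needs to encode s: a crude upper bound, NOT Kolmogorov complexity."""
    return len(zlib.compress(s.encode("ascii"), 9))


rng = random.Random(0)  # fixed seed so the run is repeatable
all_nines = "9" * 1000
random_digits = "".join(rng.choice("0123456789") for _ in range(1000))
power_of_two = str(2 ** 3319)  # a thousand digits produced by a very short program

for name, s in [
    ("a thousand 9s", all_nines),
    ("random digits", random_digits),
    ("digits of 2**3319", power_of_two),
]:
    print(f"{name}: {len(s)} digits, {compressed_length(s)} bytes compressed")
```

The thousand 9s shrink to a handful of bytes, while the randomly chosen digits stay far larger. The third string shows why a compressor gives only an upper bound: a one-line program prints the digits of 2**3319, yet a general-purpose compressor has no way to notice that shortcut.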

Kolmogorov complexity quickly became one of the core concepts of computer science. The notion is so fundamental that it was independently discovered multiple times in the 1960s. It’s “a deep problem, not just about randomness [and] mathematics, but really about science in general,” Pass said.

There’s just one drawback to Kolmogorov complexity: It’s incomputable, meaning that there is no program that can calculate the complexity of every possible string. We know this because if there were such a program, we’d end up with a contradiction.

To see this, imagine we have a program that can compute Kolmogorov complexity for any string. Let’s call the program K. Now, let’s search for the smallest string of numbers — call it S — whose Kolmogorov complexity is double the length of K. To be concrete, we could imagine that K has 1 million characters, so we’re looking for a string S whose Kolmogorov complexity is 2 million (meaning that the shortest program that outputs S has 2 million characters).

With program K in our toolbox, calculating S is easy (though not necessarily quick): We can write a new program that we’ll call P. The program P essentially says, “Go through all strings in order, using program K to compute their Kolmogorov complexity, until you find the first one whose Kolmogorov complexity is 2 million.” We’ll need to use program K when building P, so altogether P will have slightly more than 1 million characters. But this program outputs S, and we defined S as a string whose shortest program has 2 million characters. There’s the contradiction.
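
The search that P performs can be sketched in a few lines of Python. Since no real K can exist, the function takes K as a parameter, and the demonstration passes in a deliberately fake one (`len`) purely so that the loop has something to run on. All names here are illustrative.

```python
from itertools import count, product
from typing import Callable, Iterator

DIGITS = "0123456789"


def all_strings_in_order() -> Iterator[str]:
    """Yield every digit string: shorter strings first, then in dictionary order."""
    for length in count(1):
        for chars in product(DIGITS, repeat=length):
            yield "".join(chars)


def first_string_with_complexity(K: Callable[[str], int], target: int) -> str:
    """What program P does: return the first string s for which K(s) == target."""
    for s in all_strings_in_order():
        if K(s) == target:
            return s


# Stand-in only: len is NOT Kolmogorov complexity. It just lets the search run.
print(first_string_with_complexity(len, 3))  # 000
```

If a genuine K existed, P would amount to K plus this short loop, so a program of a little over 1 million characters would print a string that supposedly cannot be printed by anything shorter than 2 million.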

But this contradiction evaporates if, instead of looking for the shortest program that outputs a string, we look for the shortest reasonably efficient program that outputs the string (where we get to specify what “reasonable” means). After all, the program P takes an enormous amount of time to run, since it has to check so many strings. If we forbid such slow programs, we end up with a notion called “time-bounded” Kolmogorov complexity. This version of Kolmogorov complexity is computable — we can calculate the time-bounded Kolmogorov complexity for every possible string, at least in principle. And in some ways, it is as natural a concept as the original Kolmogorov complexity. After all, Pass said, what we really care about is, “Can you actually generate the string while we live on Earth, or while the universe still exists?”
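
To see why a time limit makes the quantity computable, consider a toy version. Everything in the sketch below is invented for illustration: a made-up instruction set (digits, `D` and `L`), a made-up cost per instruction, and a brute-force search. The formal definition uses a general-purpose machine model rather than a toy like this one.

```python
from itertools import product
from typing import Optional

ALPHABET = "0123456789DL"  # toy instruction set, invented for this sketch


def run(program: str, max_steps: int) -> Optional[str]:
    """Run a toy program; return its output, or None if it exceeds max_steps.

    digit: append that digit to the output (cost 1)
    D:     double the output so far (cost: twice its current length, at least 1)
    L:     jump back to the first instruction, so the program never halts (cost 1)
    """
    out, pc, steps = "", 0, 0
    while pc < len(program):
        op = program[pc]
        steps += max(1, 2 * len(out)) if op == "D" else 1
        if steps > max_steps:
            return None  # too slow: cut it off
        if op == "L":
            pc = 0
            continue
        out = out + out if op == "D" else out + op
        pc += 1
    return out


def time_bounded_complexity(x: str, max_steps: int) -> int:
    """Length of the shortest toy program that prints x within max_steps steps."""
    for length in range(1, len(x) + 1):
        for prog in product(ALPHABET, repeat=length):
            if run("".join(prog), max_steps) == x:
                return length
    raise ValueError("no program prints x within the step budget")


print(time_bounded_complexity("99999999", 15))  # 4
print(time_bounded_complexity("99999999", 12))  # 5
```

Because every candidate program is cut off after `max_steps`, programs that would run forever (here, anything containing `L`) cannot stall the search, and the search is guaranteed to finish. The two results also show the trade-off in miniature: with 15 steps allowed, a four-instruction program suffices, but tightening the budget to 12 rules out the shortest programs and pushes the answer up to five.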

Since time-bounded Kolmogorov complexity is computable, a natural next question is how hard it is to compute. And this is the question that Liu and Pass proved holds the key to whether one-way functions exist. “It’s a lovely insight,” Allender said.

More specifically, suppose you’ve set your sights on a less lofty goal than calculating the exact time-bounded Kolmogorov complexity of every possible string — suppose you’re content to calculate it approximately, and just for most strings. If there’s an efficient way to do this, Liu and Pass showed, then true one-way functions cannot exist. In that case, all our candidate one-way functions would be instantly breakable, not just in theory but in practice. “Bye-bye to cryptography,” Pass said.

Conversely, if the approximate time-bounded Kolmogorov complexity is too hard to calculate efficiently for many strings, then Liu and Pass showed that true one-way functions must exist. If that’s the case, their paper even provides a specific way to make one. The one-way function that they describe in their paper is too complicated to use in real-world applications, but in cryptography, practical constructions often quickly follow a theoretical breakthrough, Ishai said. The impracticality of Liu and Pass’ one-way function, he said, is “not a fundamental limitation.”

Andrey Kolmogorov came up with a way to measure randomness based on how easy it was to describe the generation of a string of numbers.

Alexander Makarov/RIA Novosti

And if their function can be made practical, it should be used in preference to the candidate one-way functions based on multiplication and other mathematical operations. For if anything is a one-way function, this one is. “If we can break a scheme like that, then all other schemes out there can also be broken,” Pass said.

A Richer Theory

The paper has set off a cascade of new research at the interface of cryptography and complexity theory. While both disciplines investigate how hard computational problems are, they come at the question from different mindsets, said Rahul Santhanam, a complexity theorist at the University of Oxford. Cryptography, he said, is fast-moving, pragmatic and optimistic, while complexity theory is slow-moving and conservative. In the latter field, “there are these long-standing open questions, and once in every dozen years, something happens,” he said. But “the questions are very deep and difficult.”

Now cryptography and complexity have a shared goal, and each field offers the other a fresh perspective: Cryptographers have powerful reasons to think that one-way functions exist, and complexity theorists have different powerful reasons to think that time-bounded Kolmogorov complexity is hard. Because of the new results, the two hypotheses bolster each other.

“If you believe this [Kolmogorov complexity] problem is difficult … then you believe in one-way functions,” Williams said. And “if you believe in crypto at all, then you’ve kind of got to believe that this version of time-bounded Kolmogorov complexity must be hard.”

Cryptographers are now faced with the task of trying to make Liu and Pass’ one-way function more practical. They are also starting to explore whether any other “master problems” besides time-bounded Kolmogorov complexity might also govern the existence of one-way functions, or of more sophisticated cryptographic tools. Complexity theorists, meanwhile, are starting to dig deeper into understanding the hardness of Kolmogorov complexity.

All of this suggests that the discovery’s true legacy might be still to come. “[It’s] a seed of something that is likely to develop into a much richer theory,” Ishai said.