To Teach Computers Math, Researchers Merge AI Approaches

Valentin Tkach for Quanta Magazine

Introduction

The world has learned two things in the past few months about large language models (LLMs) — the computational engines that power programs such as ChatGPT and Dall·E. The first is that these models appear to have the intelligence and creativity of a human. They offer detailed and lucid responses to written questions, or generate beguiling images from just a few words of text.

The second thing is that they are untrustworthy. They sometimes make illogical statements, or confidently pronounce falsehoods as fact.

“They will talk about unicorns, but then forget that they have one horn, or they’ll tell you a story, then change details throughout,” said Jason Rute of IBM Research.

These are more than just bugs — they demonstrate that LLMs struggle to recognize their mistakes, which limits their performance. This problem is not inherent in artificial intelligence systems. Machine learning models based on a technique called reinforcement learning allow computers to learn from their mistakes to become prodigies at games like chess and Go. While these models are typically more limited in their ability, they represent a kind of learning that LLMs still haven’t mastered.

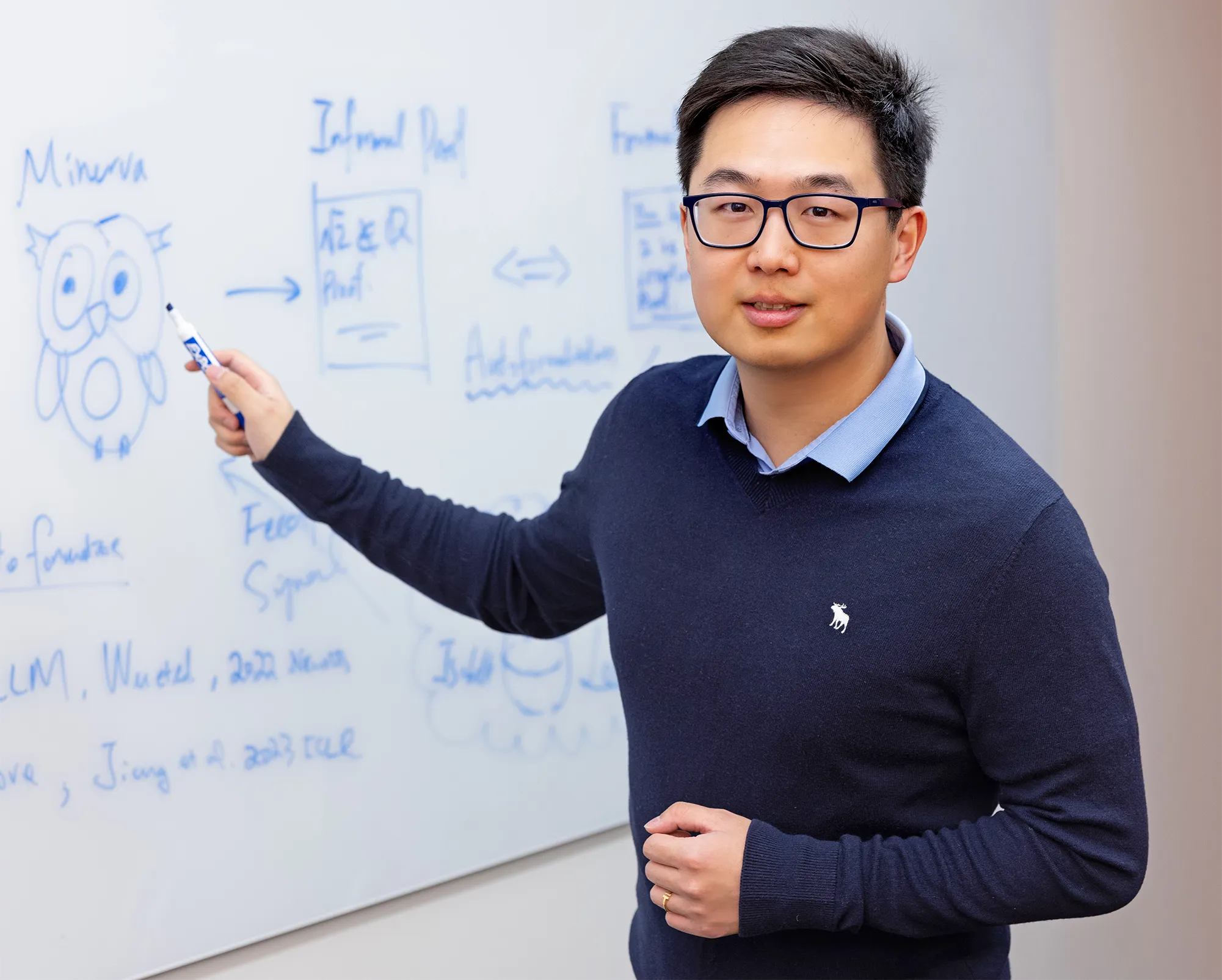

“We don’t want to create a language model that just talks like a human,” said Yuhuai (Tony) Wu of Google AI. “We want it to understand what it’s talking about.”

Wu is a co-author on two recent papers that suggest a way to achieve that. At first glance, they’re about a very specific application: training AI systems to do math. The first paper describes teaching an LLM to translate ordinary math statements into formal code that a computer can run and check. The second describes training an LLM, called Minerva, not just to understand natural-language math problems but to actually solve them.

Together, the papers suggest the shape of future AI design, where LLMs can learn to reason via mathematical thinking.

“You have things like deep learning, reinforcement learning, AlphaGo, and now language models,” said Siddhartha Gadgil, a mathematician at the Indian Institute of Science in Bangalore who works with AI math systems. “The technology is growing in many different directions, and they all can work together.”

Not-So-Simple Translations

For decades, mathematicians have been translating proofs into computer code, a process called formalization. The appeal is straightforward: If you write a proof as code, and a computer runs the code without errors, you know that the proof is correct. But formalizing a single proof can take hundreds or thousands of hours.
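
As a rough illustration of what a formalized statement looks like, here is a one-line claim and its proof written for the Lean 4 proof assistant. Lean is only a stand-in here (the autoformalization work described in this article targets Isabelle/HOL), and the theorem name `add_comm_example` is made up for this sketch. The natural-language claim is "adding two natural numbers in either order gives the same result."

```lean
-- Illustrative only: the name `add_comm_example` is hypothetical.
-- Claim: for natural numbers a and b, a + b = b + a.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b
```

If the proof checker accepts the file, the proof is valid; a proof term that did not establish the stated claim would be rejected.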

Over the last five years, AI researchers have started to teach LLMs to automatically formalize, or “autoformalize,” mathematical statements into the “formal language” of computer code. LLMs can already translate one natural language into another, such as from French to English. But translating from math to code is a harder challenge. There are far fewer example translations with which to train an LLM, for example, and formal languages don’t always contain all the vocabulary necessary.

“When you translate the word ‘cheese’ from English to French, there is a French word for cheese,” Rute said. “The problem is in mathematics, there isn’t even the right concept in the formal language.”

That’s why the seven authors of the first paper, with a mix of academic and industry affiliations, chose to autoformalize short mathematical statements rather than entire proofs. The researchers worked primarily with an LLM called Codex, which is based on GPT-3 (a predecessor of ChatGPT) but has additional training on technical material from sources like GitHub. To get Codex to understand math well enough to autoformalize, they provided it with just two examples of natural-language math problems and their formal code translations.

After that brief tutorial, they fed Codex the natural-language statements of nearly 4,000 math problems from high school competitions. Its performance at first might seem underwhelming: Codex translated them into the language of a mathematics program called Isabelle/HOL with an accuracy rate of just under 30%. When it failed, it made up terms to fill gaps in its translation lexicon.

“Sometimes it just doesn’t know the word it needs to know — what the Isabelle name for ‘prime number’ is, or the Isabelle name for ‘factorial’ is — and it just makes it up, which is the biggest problem with these models,” Rute said. “They do a lot of guessing.”

But for the researchers, the important thing was not that Codex failed 70% of the time; it was that it managed to succeed 30% of the time after seeing such a small number of examples.

“They can do all these different tasks with only a few demonstrations,” said Wenda Li, a computer scientist at the University of Cambridge and a co-author of the work.

Li and his co-authors see the result as representative of the kind of latent capacities LLMs can acquire with enough general training data. Prior to this research, Codex had never tried to translate between natural language and formal math code. But Codex was familiar with code from its training on GitHub, and with natural-language mathematics from the internet. To build on that base, the researchers only had to show it a few examples of what they wanted, and Codex could start connecting the dots.

“In many ways what’s amazing about that paper is [the authors] didn’t do much,” Rute said. “These models had this natural ability to do this.”

Researchers saw the same thing happen when they tried to teach LLMs not only how to translate math problems, but how to solve them.

Minerva’s Math

The second paper, though independent of the earlier autoformalization work, has a similar flavor. The team of researchers, based at Google, trained an LLM to answer, in detail, high school competition-level math questions such as “A line parallel to y = 4x + 6 passes through (5, 10). What is the y-coordinate of the point where this line crosses the y-axis?”
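
For reference, the sample question can be worked by hand in a few lines. The derivation is added here as a sketch of the kind of step-by-step answer involved; it is not Minerva's output. A parallel line shares the slope 4, so it has the form $y = 4x + b$, and substituting the point $(5, 10)$ fixes $b$:

$$
\begin{aligned}
y &= 4x + b \\
10 &= 4(5) + b \\
b &= 10 - 20 = -10
\end{aligned}
$$

So the line crosses the y-axis at $y = -10$.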

The authors started with an LLM called PaLM that had been trained on general natural-language content, similar to GPT-3. Then they trained it on mathematical material like arxiv.org pages and other technical material, mimicking Codex’s origins. They named this augmented model Minerva.

The researchers showed Minerva four examples of what they wanted. In this case, that meant step-by-step solutions to natural-language math problems.
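
Showing a model a handful of worked examples before the real question is often called few-shot prompting. A minimal sketch of how such a prompt might be assembled is given here; the `Problem:`/`Solution:` layout, the function name `build_few_shot_prompt`, and the example problems are all assumptions made for illustration, not the format the Minerva authors used.

```python
from typing import List, Tuple


def build_few_shot_prompt(examples: List[Tuple[str, str]], question: str) -> str:
    """Join worked (problem, solution) pairs, then append the unsolved question."""
    parts = [f"Problem: {p}\nSolution: {s}" for p, s in examples]
    # The final block is left open so the model continues with its own solution.
    parts.append(f"Problem: {question}\nSolution:")
    return "\n\n".join(parts)


# Hypothetical worked examples (two shown for brevity; the article mentions four).
examples = [
    ("What is 3 + 4 * 2?",
     "Multiply first: 4 * 2 = 8. Then add: 3 + 8 = 11. Final answer: 11"),
    ("Solve 2x = 10 for x.",
     "Divide both sides by 2: x = 10 / 2 = 5. Final answer: 5"),
]
prompt = build_few_shot_prompt(examples, "What is 15% of 80?")
print(prompt)
```

Because each example solution spells out its intermediate steps, the model is nudged to answer the last question in the same step-by-step style.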

Then they tested the model on a range of quantitative reasoning questions. Minerva’s performance varied by subject: It answered questions correctly a little better than half the time for some topics (like algebra), and a little less than half the time for others (like geometry).

One concern the authors had — a common one in many areas of AI research — was that Minerva answered questions correctly only because it had already seen them, or similar ones, in its training data. This issue is referred to as “pollution,” and it makes it hard to know whether a model is truly solving problems or merely copying someone else’s work.

“There is so much data in these models that unless you’re trying to avoid putting some data in the training set, if it’s a standard problem, it’s very likely it’s seen it,” Rute said.

To guard against this possibility, the researchers had Minerva take the 2022 National Math Exam from Poland, which came out after Minerva’s training data was set. The system got 65% of the questions right, a decent score for a real student, and a particularly good one for an LLM, Rute said. Again, the positive results after so few examples suggested an inherent ability for well-trained models to take on such tasks.

“This is a lesson we keep learning in deep learning, that scale helps surprisingly well with many tasks,” said Guy Gur-Ari, a researcher formerly at Google and a co-author of the paper.

The researchers also learned ways to boost Minerva’s performance. For example, in a technique called majority voting, Minerva solved the same problem multiple times, counted its various results, and designated its final answer as whatever had come up most often (since there’s only one right answer, but so many possible wrong ones). Doing this increased its score on certain problems from 33% to 50%.
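
The voting step itself is simple to sketch. The snippet below assumes the model's final answers have already been extracted as strings; the function name `majority_vote` and the sample answers are hypothetical, and the sampling of the model is not shown.

```python
from collections import Counter
from typing import List


def majority_vote(answers: List[str]) -> str:
    """Return the final answer that appears most often among the samples."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    return Counter(answers).most_common(1)[0][0]


# Hypothetical final answers from five independent attempts at one problem.
samples = ["-10", "6", "-10", "14", "-10"]
final = majority_vote(samples)
print(final)
```

The wrong attempts scatter across different values while the correct one tends to repeat, which is why counting can beat any single attempt.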

Also important was teaching Minerva to break its solution into a series of steps, a method called chain-of-thought prompting. This had the same benefits for Minerva that it does for students: It forced the model to slow down before producing an answer and allowed it to devote more computational time to each part of the task.

“If you ask a language model to explain step by step, the accuracy goes up immensely,” Gadgil said.

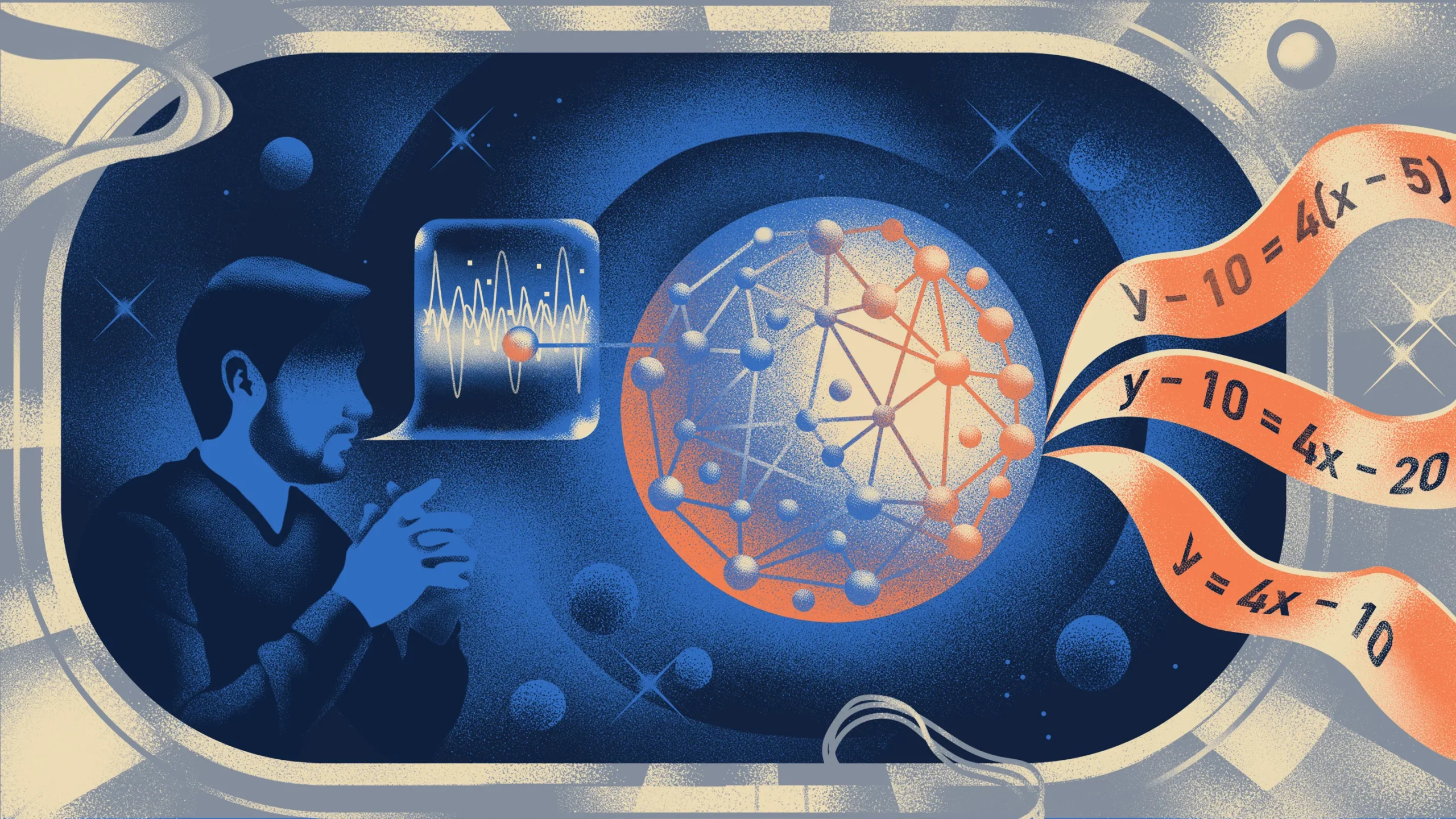

The Bridge Forms

While impressive, the Minerva work came with a substantial caveat, which the authors also noted: Minerva has no way of automatically verifying whether it has answered a question correctly. And even if it did answer a question correctly, it can’t check that the steps it followed to get there were valid.

“It sometimes has false positives, giving specious reasons for correct answers,” Gadgil said.

In other words, Minerva can show its work, but it can’t check its work, which means it needs to rely on human feedback to get better — a slow process that may put a cap on how good it can ever get.

“I really doubt that approach can scale up to complicated problems,” said Christian Szegedy, an AI researcher at Google and a co-author of the earlier paper.

Instead, the researchers behind both papers hope to begin teaching machines mathematics using the same techniques that have allowed the machines to get good at games. The world is awash in math problems, which could serve as training fodder for systems like Minerva, but such a system can’t recognize a “good” move in math the way AlphaGo knows when it has played well at Go.

“On the one side, if you work on natural language or Minerva type of reasoning, there’s a lot of data out there, the whole internet of mathematics, but essentially you can’t do reinforcement learning with it,” Wu said. On the other side, “proof assistants provide a grounded environment but have little data to train on. We need some kind of bridge to go from one side to the other.”

Autoformalization is that bridge. Improvements in autoformalization could help mathematicians automate aspects of the way they write proofs and verify that their work is correct.

By combining the advancements of the two papers, systems like Minerva could first autoformalize natural-language math problems, then solve them and check their work using a proof assistant like Isabelle/HOL. This instant check would provide the feedback necessary for reinforcement learning, allowing these programs to learn from their mistakes. Finally, they’d arrive at a provably correct answer, with an accompanying list of logical steps — effectively combining the power of LLMs and reinforcement learning.
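
The feedback loop described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: a plain Python predicate stands in for the formalized problem, a function call stands in for the proof assistant, and the names `verify`, `score_candidates`, and `intercept_check` are hypothetical. A real system would call Isabelle/HOL or a similar tool instead.

```python
from typing import Callable, List, Tuple


def verify(check: Callable[[int], bool], candidate: int) -> bool:
    """Stand-in for a proof assistant: accept a candidate only if the check passes."""
    return check(candidate)


def score_candidates(check: Callable[[int], bool],
                     candidates: List[int]) -> List[Tuple[int, float]]:
    """Attach a reward of 1.0 to verified answers and 0.0 to rejected ones."""
    return [(c, 1.0 if verify(check, c) else 0.0) for c in candidates]


def intercept_check(b: int) -> bool:
    """Toy 'formalization': the line y = 4x + b must pass through (5, 10)."""
    return 4 * 5 + b == 10


# Hypothetical answers proposed by a model for the y-intercept.
scored = score_candidates(intercept_check, [6, -10, 10])
print(scored)
```

The rewards come from the checker rather than from a human grader, which is the property that would make reinforcement learning possible in this setting.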

AI researchers have even broader goals in mind. They view mathematics as the perfect proving ground for developing AI reasoning skills, because it’s arguably the hardest reasoning task of all. If a machine can reason effectively about mathematics, the thinking goes, it should naturally acquire other skills, like the ability to write computer code or offer medical diagnoses — and maybe even to root out those inconsistent details in a story about unicorns.