The Most Important Machine That Was Never Built

Kristina Armitage/Quanta Magazine; source: Geopix/Alamy

Introduction

Computation is a familiar concept most of us understand intuitively. Take the function f(x) = x + 3. When x is three, f(3) = 3 + 3. Six. Easy. It seems obvious that this function is computable. But some functions aren’t so simple, and it’s not so easy to determine if they can be computed, meaning they may never give us a final answer.

In 1928, the German mathematicians David Hilbert and Wilhelm Ackermann proposed a question called the Entscheidungsproblem (“decision problem”). In time, their question would lead to a formal definition of computability, one that allowed mathematicians to answer a host of new problems and laid the foundation for theoretical computer science.

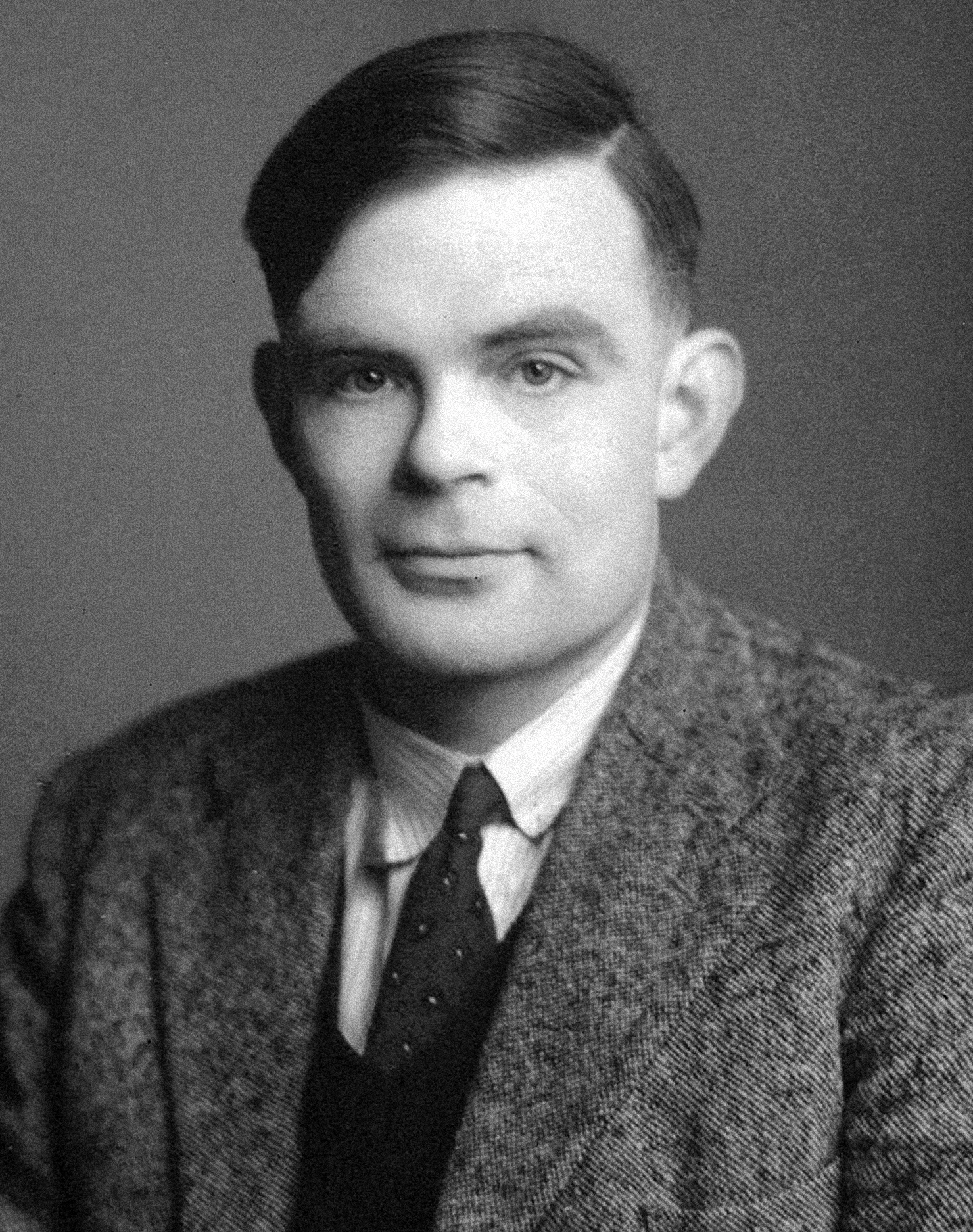

The definition came from a 23-year-old grad student named Alan Turing, who in 1936 wrote a seminal paper that not only formalized the concept of computation, but also proved a fundamental question in mathematics and created the intellectual foundation for the invention of the electronic computer. Turing’s great insight was to provide a concrete answer to the computation question in the form of an abstract machine, later named the Turing machine by his doctoral adviser, Alonzo Church. It’s abstract because it doesn’t (and can’t) physically exist as a tangible device. Instead, it’s a conceptual model of computation: If the machine can calculate a function, then the function is computable.

Here’s how it works. A Turing machine can read and alter symbols on an infinitely long tape, as dictated by a table of rules. The tape is made up of “cells,” which can each store exactly one symbol, and the machine reads and rewrites the cells’ contents with a tape head. Each rule in the table determines what the machine should do based on both its current state and the symbol it’s reading. The machine can enter a final state (“accept state” or “reject state”) upon which it halts, accepting or rejecting the input. Or it falls into an infinite loop and continues reading the tape forever.

The best way to understand a Turing machine is to consider a simple example. Let’s imagine one that’s designed to tell us whether a given input is the number zero. We’ll use the input number 0001 accompanied by blank symbols (#), so “#0001#” is the relevant part of our tape.

The machine starts in the initial state, which we’ll call q0. It reads the leftmost cell on our tape and finds a blank space. The rules say, “When in state q0, if the symbol is #, leave it as is without modification, move one cell to the right, and change the machine’s state to q1.” After this step, the machine is in state q1, and its head is reading the second symbol, 0.

Now we look for a rule that applies to these conditions. We find one that says, “Remain in state q1 and move the head one cell to the right.” This leaves us in the same position (in state q1, reading “0”), so we keep moving to the right until the head finally reads a different number, the 1.

When we consult the table again, we find a new rule: “If we encounter a 1, transition to q2, which is a ‘reject’ state.” The machine stops, answering “No” to the original question, “Is ‘0001’ zero?”

If instead the input is “#0000#,” the machine will encounter a # after all those zeros. When we consult the table, we find a rule saying that this means the machine enters state q3, an “accept” state. Now the machine answers “Yes” to the question “Is ‘0000’ zero?”

Alan Turing helped define computation, algorithms and what came to be known as the Turing machine.

GLArchive/Alamy

With his abstract machine, Turing established a model of computation to answer the Entscheidungsproblem, which formally asks: Given a set of mathematical axioms, is there a mechanical process — a set of instructions, which today we’d call an algorithm — that can always determine whether a given statement is true?

Say that we want to find an algorithm that can tell us if a certain chess position is possible. Here, the axioms are the rules of chess that govern legal moves. Can we follow a finite step-by-step sequence of procedures to arrive at that position? Though some positions may take longer than our lifetime to analyze — an algorithm may generate all possible positions and compare each of them to the input — such algorithms exist in the game of chess. As a result, we say that chess is “decidable.”

However, in 1936, Church and Turing — using different methods — independently proved that there is no general way of solving every instance of the Entscheidungsproblem. For example, some games, such as John Conway’s Game of Life, are undecidable: No algorithm can determine whether a certain pattern will appear from an initial pattern.

Turing showed that a function is computable if there exists an algorithm that can execute the desired task. At the same time, he showed that an algorithm is a process that can be defined by a Turing machine. Hence, a computable function is a function that has a Turing machine to compute it. This may seem like a circuitous way to define computability, but it’s the best we’ve got. “It’s not like you have a choice to define it some other way,” said Michael Sipser, a theoretical computer scientist at the Massachusetts Institute of Technology. “I think it is commonly accepted that the Church-Turing thesis says that the informal notion of algorithm corresponds to what any ‘reasonable’ computational model can do.” Other mathematicians have come up with different models of computation that look quite different on the surface but are actually equivalent: They can do any computation that Turing machines can do, and vice versa.

Only a few years after Kurt Gödel had proved that mathematics was incomplete, Church and Turing showed with this work that some problems in mathematics are undecidable — no algorithm, however sophisticated, can tell us whether the answer is yes or no. Both were devastating blows to Hilbert, who had hoped mathematics would have tidy, idealized answers. But it’s perhaps just as well: If a general solution to the Entscheidungsproblem existed, it would mean that all problems in mathematics could be reduced to simple mechanical calculations.

Beyond answering these fundamental questions, Turing’s machine also led directly to the development of modern computers, through a variant known as the universal Turing machine. This is a special kind of Turing machine that can simulate any other Turing machine on any input. It can read a description of other Turing machines (their rules and input tapes) and simulate their behaviors on its own input tape, producing the same output that the simulated machine would produce, just as today’s computers can read any program and execute it. In 1945, John von Neumann proposed a computer architecture — called the von Neumann architecture — that made the universal Turing machine concept possible in a real-life machine.

When Sanjeev Arora, a theoretical computer scientist at Princeton University, teaches this concept, he emphasizes a broader philosophical picture. “There’s two notions of ‘universal,’” he said. “One notion of the universal is that it can run any other Turing machine. But the other, bigger notion of ‘universal’ is that it can run any computation that you will come up with in the universe.” In the world of classical physics, any physical process can be modeled or simulated using algorithms, which, in turn, can be simulated by a Turing machine.

Another notable and increasingly useful variant is the probabilistic Turing machine. Unlike a regular Turing machine — which has a well-defined reaction to every input — a probabilistic Turing machine can have multiple reactions based on probabilities. This means it can yield different results for the same input at different times. Surprisingly, this kind of probabilistic strategy can be more efficient than a purely deterministic approach for certain problems. Ideas from probabilistic Turing machines have been shown to be practically useful in areas such as cryptography, optimization and machine learning.

These abstract machines are perhaps the best evidence that asking fundamental questions can be among the most useful things a scientist can do.