Bob Metcalfe, Ethernet Pioneer, Wins Turing Award

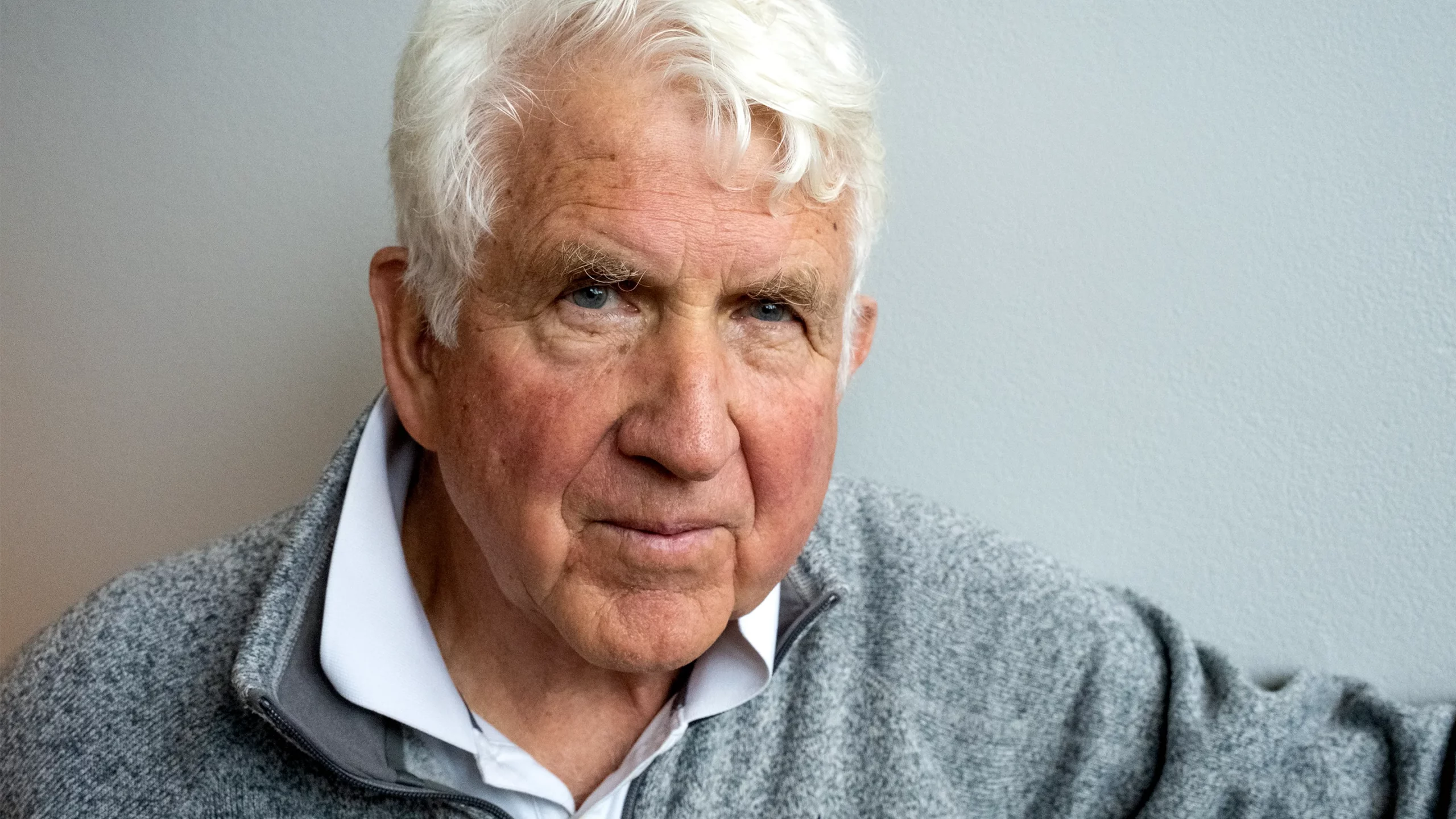

Bob Metcalfe won the Turing Award for his work developing Ethernet.

Bill McCullough for Quanta Magazine


Bob Metcalfe has always been a believer in the power of networking. In the 1980s and 1990s he helped popularize the idea that a network’s value grows in proportion to the square of its number of users, a precept now known as Metcalfe’s law. Today, with the internet ubiquitous, he thinks on a grander scale. “The most important new fact about the human condition is that we are now suddenly connected,” he said.
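Metcalfe’s law is often justified by counting possible links: among n users there are n(n − 1)/2 distinct pairs who could connect, a number that grows roughly as the square of n. Here is a minimal Python sketch of that count, assuming a network’s value tracks the number of possible pairs (the function name `possible_connections` is ours, for illustration only):

```python
def possible_connections(n: int) -> int:
    """Count the distinct pairs among n users: n(n - 1) / 2.

    Assumption for illustration: each pair of users is one potential link.
    """
    if n < 0:
        raise ValueError("number of users must be non-negative")
    return n * (n - 1) // 2


# Pairs grow roughly with the square of the number of users.
for users in (2, 10, 100, 1000):
    print(users, possible_connections(users))
```

Going from 100 to 1,000 users multiplies the number of possible pairs about a hundredfold, which is the intuition behind the law.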

Today Metcalfe was named the winner of the A.M. Turing Award, an annual prize considered the highest honor in computer science, for his part in ushering in our hyperconnected age. Fifty years ago, Metcalfe helped invent Ethernet, the local networking technology that links personal computers around the world to the global internet. He also played a central role in standardizing and commercializing his invention.

“Bob is one of the people who lived on both sides. He could see the big picture,” said Steve Crocker, a computer networking pioneer who worked with Metcalfe on a precursor to the internet known as Arpanet.

Metcalfe’s career has grown in parallel with our networking capacity. He was born in Brooklyn in 1946 and studied electrical engineering and industrial management at the Massachusetts Institute of Technology. When he moved across town to Harvard University for graduate school, the U.S. Department of Defense was just ramping up its investment in Arpanet. Metcalfe proposed building an interface connecting the network to Harvard’s mainframe computer, but the university turned him down. He turned around and made the same proposal at MIT, where he was hired as a researcher while still a Harvard graduate student. When he presented a thesis describing the work to his dissertation committee in 1972, he failed his defense — the topic wasn’t theoretical enough, they said.

As a graduate student, Metcalfe built this interface to connect MIT’s mainframe computer to Arpanet, a precursor to the modern internet.

Bill McCullough for Quanta Magazine

By then Metcalfe had already accepted a job at the Xerox Corporation’s Palo Alto Research Center, or PARC, in California. The lab’s director, Bob Taylor, told him to come anyway and finish his dissertation from Palo Alto. Once there, Metcalfe started building another Arpanet interface for a new PARC computer, while searching for a theoretical topic to satisfy Harvard.

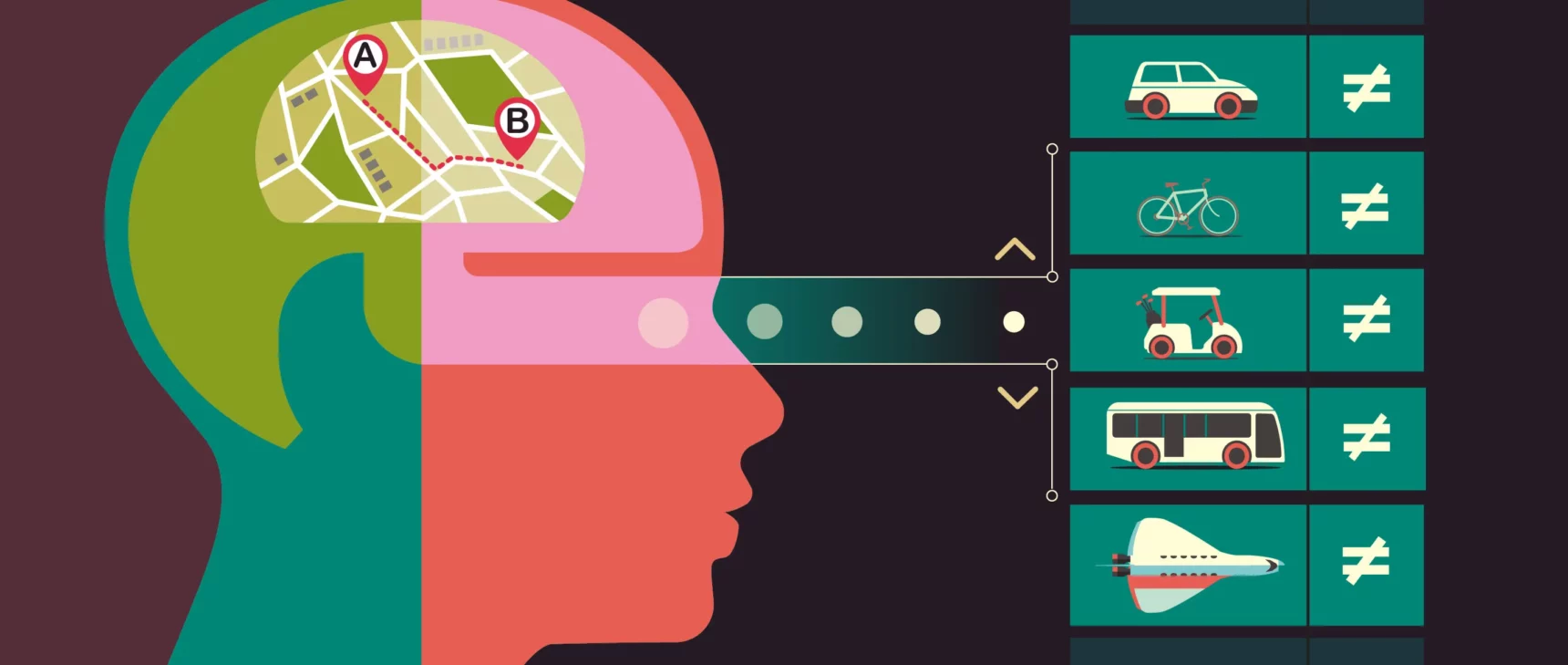

At the time, computer networking was as much a theoretical challenge as an engineering one. The fundamental problem was how to share access to a network among many users. Telephone networks dealt with this problem in the simplest possible way: a connection between two parties locked the communication channel for the duration of a call, making that channel inaccessible to other users even if it wasn’t being used to its full capacity. This inefficiency isn’t a big problem for phone conversations, which rarely lapse into silence for long. But computers communicate in short bursts, which are often separated by long stretches of dead time.

In the early 1960s, the computer scientist Leonard Kleinrock showed that queuing theory — the branch of mathematics that models traffic jams and other things that can happen while we’re waiting in line — could also describe the flow of data through a network. That model showed engineers how to substantially reduce dead time, and Arpanet demonstrated that it worked in practice. But coordinating the flow of traffic through the network was no easy task.

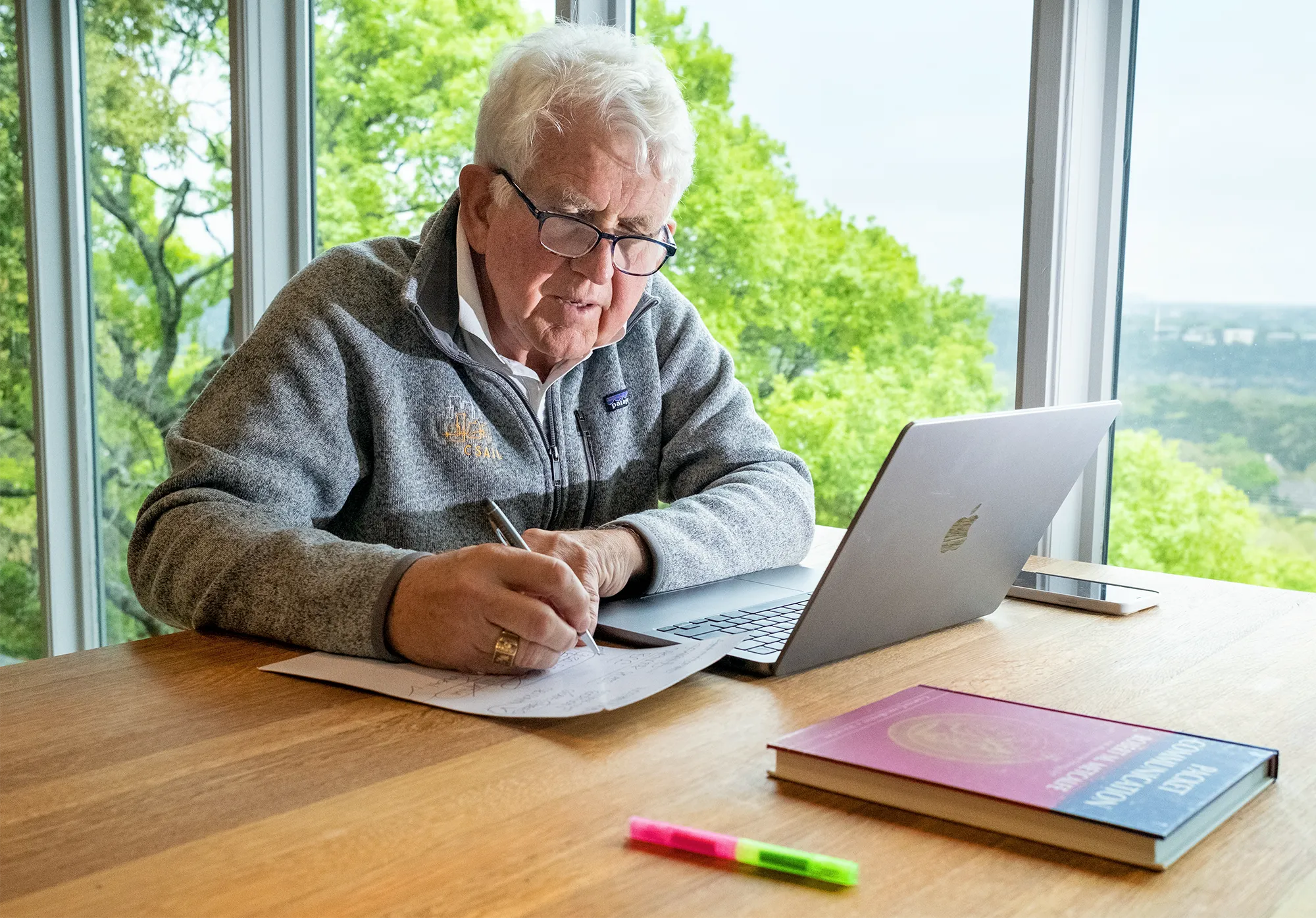

Metcalfe at his home in Austin, Texas, with a copy of his 1973 doctoral thesis.

Bill McCullough for Quanta Magazine

In 1971, the University of Hawai‘i professor Norm Abramson demonstrated a radical alternative to traffic coordination that would horrify any urban planner. He had built a radio network called ALOHAnet that, like Arpanet, transmitted data in tiny packets. But unlike Arpanet, ALOHAnet made no attempt to avoid collisions between packets. Instead, any user whose message was lost or garbled because of a collision would simply try again after a random time interval. This “randomized retransmission” is similar to the conversational etiquette of a dinner party: When two people begin speaking simultaneously, both stop and try again a moment later. The randomness of the wait time makes it likely that the situation will resolve itself after just a few tries. This strategy worked well in low-traffic situations, but when the network got crowded enough, collisions became so frequent that no messages could get through.
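The collapse at high traffic shows up even in a toy model of randomized retransmission. This sketch simplifies ALOHAnet considerably and its names and parameters are hypothetical: it assumes time is divided into equal slots (ALOHAnet’s original scheme was unslotted), that every station always has a packet waiting, and that each station retries in any given slot with a fixed probability `p`. A slot carries a message only when exactly one station transmits.

```python
import random


def slot_throughput(stations: int, p: float, slots: int = 10_000, seed: int = 1) -> float:
    """Simulate the toy model and return the fraction of successful slots.

    Assumptions: slotted time, every station always backlogged, and each
    station transmits in a slot independently with fixed probability p.
    Two or more simultaneous transmissions collide and are all lost.
    """
    rng = random.Random(seed)  # seeded so runs are repeatable
    successes = 0
    for _ in range(slots):
        senders = sum(1 for _ in range(stations) if rng.random() < p)
        if senders == 1:  # exactly one sender: the packet gets through
            successes += 1
    return successes / slots


def expected_throughput(stations: int, p: float) -> float:
    """Closed form for the same model: N * p * (1 - p) ** (N - 1)."""
    return stations * p * (1 - p) ** (stations - 1)


for n in (2, 10, 50, 200):
    print(n, round(slot_throughput(n, 0.1), 3), round(expected_throughput(n, 0.1), 3))
```

With `p = 0.1`, throughput climbs as stations are added, peaks at around ten stations, then falls toward zero as collisions dominate, because a fixed retry rate never slows down when the channel gets busy.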

Metcalfe happened on a paper by Abramson describing the queuing theory behind ALOHAnet and devised a way around the logjam. In Metcalfe’s model, users would independently adjust average wait times between transmission attempts, taking the frequency of collisions into account: They’d be quicker to try again if collisions were rare, and they’d back off if the network was crowded, making communication much more efficient overall. That model gave Metcalfe’s dissertation enough theoretical heft to pass muster at Harvard, and he quickly realized he could put it into practice at his new job.
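Metcalfe’s dissertation model adapted wait times to how often collisions occurred. The rule later written into the IEEE 802.3 Ethernet standard is a related but distinct mechanism, truncated binary exponential backoff, in which a station widens its random waiting window each time the same frame collides. The sketch below implements that standardized rule, not the model from the dissertation; the function and constant names are ours.

```python
import random

BACKOFF_LIMIT = 10  # the waiting window stops doubling after 10 collisions
ATTEMPT_LIMIT = 16  # the frame is discarded after 16 failed attempts


def backoff_slots(collisions: int, rng: random.Random) -> int:
    """Truncated binary exponential backoff, as standardized in IEEE 802.3.

    After the n-th collision on a frame, wait a random whole number of slot
    times drawn uniformly from 0 .. 2**min(n, 10) - 1.
    """
    if not 1 <= collisions < ATTEMPT_LIMIT:
        raise ValueError("collisions must be between 1 and 15")
    k = min(collisions, BACKOFF_LIMIT)
    return rng.randint(0, 2 ** k - 1)  # randint includes both endpoints


rng = random.Random(42)
for n in (1, 2, 3, 10, 15):
    window = 2 ** min(n, BACKOFF_LIMIT)
    print(f"after collision {n}: wait 0..{window - 1} slots, drew {backoff_slots(n, rng)}")
```

A lightly loaded station rarely collides and so retries almost immediately, while repeated collisions push its waits further out. For two stations that have just collided n times, the chance they pick the same slot again is 1/2**min(n, 10), so repeat collisions die out quickly.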

That was because the lab was pursuing an unusual approach to computer networking at the time. Arpanet had been conceived as a way to allow researchers to share mainframe computers — powerful but expensive machines. ALOHAnet, too, connected many access points to a central hub. At PARC, Taylor imagined a local network of many computers in the same building, and his new hire Metcalfe soon started to design it.

Metcalfe laid out his vision for a local network in a May 1973 memo. The proposal combined Abramson’s randomized retransmission system, Metcalfe’s adjustments to the timing, and other refinements to the ALOHAnet model that mitigated the effects of collisions. Some of these theoretical innovations had been developed by other researchers, but Metcalfe was the first to integrate them into a practical local network design.

Metcalfe’s plan also dispensed with ALOHAnet’s central hub. Instead, computers would connect via some passive medium. He had a specific kind of cable in mind with attractive properties for a practical implementation. But he noted that other cables or wireless networks would work just as well in theory, and might work better in practice as technology improved.

To avoid emphasizing specific hardware, Metcalfe dubbed his brainchild the Ether Network, later shortened to Ethernet. He took the name from the luminiferous ether, the hypothetical medium through which 19th-century physicists (wrongly) assumed electromagnetic waves travel. “The term was up for grabs, so we grabbed it,” Metcalfe said.

Metcalfe holds a prototype Ethernet adapter. It connected early personal computers to the first local network he helped develop in 1973.

Bill McCullough for Quanta Magazine

By November 1973, Metcalfe and his colleagues had their first network up and running. He continued to develop the design further, hoping to expand it beyond Xerox, but executives were slow to commercialize the new technology. By 1979, Metcalfe had had enough. He left PARC and founded his own company, 3Com, to do what Xerox wouldn’t. “Humble was not a word that you associated with Bob,” said Vint Cerf, an internet pioneer now at Google. “He took this idea and ran with it.”

Not long after striking out on his own, Metcalfe convinced representatives from Xerox, Intel and the now-defunct Digital Equipment Corporation to adopt Ethernet as an open industry standard for local networking. Other companies promoted their own technologies, but Ethernet eventually won out, due in large part to its simplicity and Metcalfe’s early push for standardization.

In 1990, Metcalfe left 3Com and became a pundit and tech columnist. It was the second time he had grown restless after about a decade in one career, and it wouldn’t be the last: he went on to work as a venture capitalist and later as a professor at the University of Texas at Austin. Metcalfe has a theory for what drives him to make such dramatic changes. “You start out not knowing anything, and then you go up the learning curve, and then you know everything,” he said, tracing out a curve with his finger. He pointed at the middle of the curve and added, “I have discovered through experience that the fun part to be on is right here.”

Ethernet has also adapted over the years, and few of the original technical details remain. But it has continued to play an indispensable role as the domestic plumbing for the networks of personal computers we now take for granted. “It was Ethernet that made that possible,” Cerf said. “It really was a hugely enabling technology.”

Less than a year ago, Metcalfe made yet another career change at the age of 76. He’s now a research affiliate at MIT, studying the application of supercomputers to complex problems in energy and other fields. “I’m still at the early part of the learning curve,” he said. “I don’t know much, but I’m working to fix that.”