Physicists Observe ‘Unobservable’ Quantum Phase Transition

Introduction

In 1935, Albert Einstein and Erwin Schrödinger, two of the most prominent physicists of the day, got into a dispute over the nature of reality.

Einstein had done the math and knew that the universe must be local, meaning that no event in one location could instantly affect a distant location. But Schrödinger had done his own math, and he knew that at the heart of quantum mechanics lay a strange connection he dubbed “entanglement,” which appeared to strike at Einstein’s commonsense assumption of locality.

When two particles become entangled, which can happen when they collide, their fates become linked. Measure the orientation of one particle, for instance, and you may learn that its entangled partner (if and when it is measured) points in the opposite direction, no matter its location. Thus, a measurement in Beijing could appear to instantly affect an experiment in Brooklyn, apparently violating Einstein’s edict that no influence can travel faster than light.

Einstein disliked the reach of entanglement (which he would later refer to as “spooky”) and criticized the then-nascent theory of quantum mechanics as necessarily incomplete. Schrödinger in turn defended the theory, which he had helped pioneer. But he sympathized with Einstein’s distaste for entanglement. He conceded that the way it seemingly allowed one experimenter to “steer” an otherwise inaccessible experiment was “rather discomforting.”

Physicists have since largely shed that discomfort. They now understand what Einstein, and perhaps Schrödinger himself, had overlooked — that entanglement has no remote influence. It has no power to bring about a specific outcome at a distance; it can distribute only the knowledge of that outcome. Entanglement experiments, such as those that won the 2022 Nobel Prize, have now grown routine.

Over the last few years, a flurry of theoretical and experimental research has uncovered a strange new face of the phenomenon — one that shows itself not in pairs, but in constellations of particles. Entanglement naturally spreads through a group of particles, establishing an intricate web of contingencies. But if you measure the particles frequently enough, destroying entanglement in the process, you can stop the web from forming. In 2018, three groups of theorists showed that these two states — web or no web — are reminiscent of familiar states of matter such as liquid and solid. But instead of marking a transition between different structures of matter, the shift between web and no web indicates a change in the structure of information.

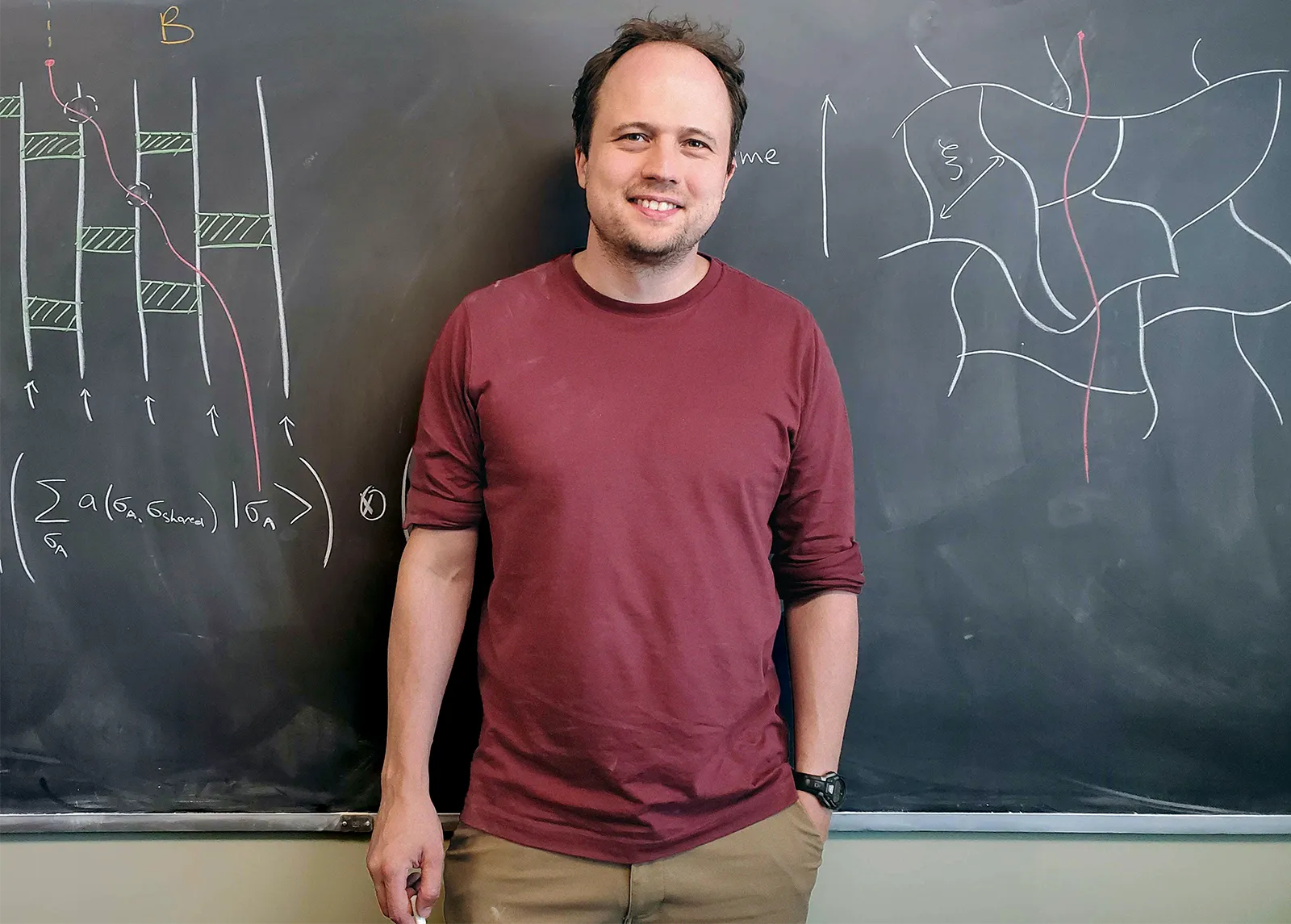

“This is a phase transition in information,” said Brian Skinner of Ohio State University, one of the physicists who first identified the phenomenon. “It’s where the properties in information — how information is shared between things — undergo a very abrupt change.”

Brian Skinner of Ohio State University and his colleagues showed that entanglement can survive the destructive effects of repeated measurements and spread throughout a system.

More recently, a separate trio of teams tried to observe that phase transition in action. They performed a series of meta-experiments to measure how measurements themselves affect the flow of information. In these experiments, they used quantum computers to confirm that a delicate balance between the competing effects of entanglement and measurement can be reached. The transition’s discovery has launched a wave of research into what might be possible when entanglement and measurement collide.

Entanglement “can have lots of different properties well beyond what we ever imagined,” said Jedediah Pixley, a condensed matter theorist at Rutgers University who has studied variations of the transition.

An Entangled Dessert

One of the collaborations that stumbled upon the entanglement transition was born over sticky toffee pudding at a restaurant in Oxford, England. In April 2018, Skinner was visiting his friend Adam Nahum, a physicist now at the École Normale Supérieure in Paris. Over the course of a sprawling conversation, they found themselves debating a fundamental question regarding entanglement and information.

First, a bit of a rewind. To understand what entanglement has to do with information, imagine a pair of particles, A and B, each with a spin that can be measured as pointing up or down. Each particle begins in a quantum superposition of up and down, meaning that a measurement produces a random outcome — either up or down. If the particles are not entangled, measuring them is like flipping two coins: Getting heads with one tells you nothing about what will happen with the other.

But if the particles are entangled, the two outcomes will be related. If you find B pointing up, for instance, a measurement of A will find it pointing down. The pair shares an “oppositeness” that resides not in either member but between them — a whiff of the nonlocality that unnerved Einstein and Schrödinger. One consequence of this oppositeness is that by measuring just one particle you learn about the other. “Measuring B first gave me some information about A,” Skinner said. “That reduces my ignorance about the state of A.”

How much a measurement of B reduces your ignorance about A is called the entanglement entropy, and like any type of information, it is counted in bits. Entanglement entropy is the main way physicists quantify the entanglement between two objects, or, equivalently, how much information about one is stored nonlocally in the other. No entanglement entropy means no entanglement; measuring B reveals nothing about A. High entanglement entropy means a lot of entanglement; measuring B teaches you a lot about A.
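As a rough illustration of that bookkeeping, the sketch below computes the entanglement entropy, in bits, of a two-particle state. It assumes the standard von Neumann definition and a normalized pure state written as four amplitudes. The function name and the two example states are illustrative choices, not taken from the research described here.

```python
import math

def entanglement_entropy_bits(amps):
    """Entropy (in bits) of particle A for a pure two-spin state.

    amps = [a_uu, a_ud, a_du, a_dd]: amplitudes for (A, B) being
    up-up, up-down, down-up, down-down. Assumed to be normalized.
    """
    a_uu, a_ud, a_du, a_dd = (complex(a) for a in amps)
    # Reduced density matrix of A after ignoring B (2x2, Hermitian).
    rho_uu = abs(a_uu) ** 2 + abs(a_ud) ** 2
    rho_dd = abs(a_du) ** 2 + abs(a_dd) ** 2
    rho_ud = a_uu * a_du.conjugate() + a_ud * a_dd.conjugate()
    # Closed-form eigenvalues of a 2x2 matrix with trace 1.
    det = rho_uu * rho_dd - abs(rho_ud) ** 2
    gap = math.sqrt(max(0.0, 1.0 - 4.0 * det))
    eigenvalues = [(1.0 + gap) / 2.0, (1.0 - gap) / 2.0]
    # Shannon entropy of the eigenvalues, skipping zero terms.
    return sum(-p * math.log2(p) for p in eigenvalues if p > 1e-12)

if __name__ == "__main__":
    s = 1 / math.sqrt(2)
    opposite_pair = [0, s, -s, 0]          # always found pointing opposite ways
    two_coins = [0.5, 0.5, 0.5, 0.5]       # independent, unentangled spins
    print(entanglement_entropy_bits(opposite_pair))  # about 1 bit
    print(entanglement_entropy_bits(two_coins))      # about 0 bits
```

The "opposite" pair carries one full bit: measuring B settles A completely. The two independent spins carry none, matching the coin-flip picture.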

Over dessert, Skinner and Nahum took this thinking two steps further. They first extended the pair of particles into a chain as long as one cared to imagine. They knew that according to Schrödinger’s eponymous equation, the quantum mechanics analog of F = ma, entanglement would jump from one particle to the next like the flu. They also knew that they could calculate how fully entanglement had taken hold in the same way: Label one half of the chain A and the other half B; if entanglement entropy is high, then the two halves are highly entangled. Measuring half the spins will give you a good idea of what to expect when you measure the other half.

Next, they moved measurement from the end of the process — when the particle chain had already reached a particular quantum state — to the middle of the action, while entanglement was spreading. Doing so created a conflict because measurement is the mortal enemy of entanglement. Left untouched, the quantum state of a group of particles reflects all the possible combinations of ups and downs you might get when you measure those particles. But measurement collapses a quantum state and destroys any entanglement it contains. You get what you get, and any alternate possibilities vanish.

Nahum asked Skinner the following question: What if, while entanglement was in the process of spreading, you measured some of the spins here and there? Constantly measuring them all would snuff out all the entanglement in a boring way. But if you sporadically measured just a few spins, which phenomenon would emerge victorious? Entanglement or measurement?

Skinner, ad-libbing, reasoned that measurement would crush entanglement. Entanglement spreads lethargically from neighbor to neighbor, so it grows by at most a few particles at a time. But one round of measurement could hit many particles throughout the lengthy chain simultaneously, snuffing out entanglement at a multitude of sites. Had they considered the strange scenario, many physicists would likely have agreed that entanglement would be no match for measurement.

“There was some kind of folklore,” said Ehud Altman, a condensed matter physicist at the University of California, Berkeley, that “states that are very entangled are very fragile.”

But Nahum, who had been mulling over this question since the previous year, believed otherwise. He envisioned the chain extending into the future, moment by moment, to form a sort of chain-link fence. The nodes were the particles, and the connections between them represented links across which entanglement might form. Measurements clipped links in random locations. Snip enough links, and the fence falls apart. Entanglement can’t spread. But until that point, Nahum argued, even a somewhat tattered fence should allow entanglement to spread far and wide.

Nahum had managed to turn a problem about an ephemeral quantum occurrence into a concrete question about a chain-link fence. That happened to be a well-studied problem in certain circles — the “vandalized resistor grid” — and one that Skinner had studied in his first undergraduate physics class, when his professor had introduced it during a digression.

“That’s when I got really excited,” Skinner said. “There’s no way to make a physicist happier than to show that a problem that looks hard is actually equivalent to a problem you already know how to solve.”
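The chain-link fence picture can be played with directly. The sketch below is a simplified classical stand-in, not the researchers' calculation: it builds a square grid of nodes, snips each link with a chosen probability, and checks whether any connected path still runs from the top edge to the bottom edge. The grid size, the top-to-bottom test and all function names are assumptions made for illustration.

```python
import random

def find(parent, x):
    # Union-find lookup with path halving.
    while parent[x] != x:
        parent[x] = parent[parent[x]]
        x = parent[x]
    return x

def fence_spans(width, height, cut_prob, rng):
    """Return True if the snipped fence still connects top to bottom."""
    n = width * height
    top, bottom = n, n + 1          # two virtual nodes for the edges
    parent = list(range(n + 2))

    def union(a, b):
        ra, rb = find(parent, a), find(parent, b)
        if ra != rb:
            parent[ra] = rb

    for row in range(height):
        for col in range(width):
            node = row * width + col
            if row == 0:
                union(node, top)
            if row == height - 1:
                union(node, bottom)
            # Each link survives unless it is snipped with probability cut_prob.
            if col + 1 < width and rng.random() >= cut_prob:
                union(node, node + 1)
            if row + 1 < height and rng.random() >= cut_prob:
                union(node, node + width)
    return find(parent, top) == find(parent, bottom)

def spanning_fraction(cut_prob, width=20, height=20, trials=50, seed=0):
    """Fraction of random fences that remain connected top to bottom."""
    rng = random.Random(seed)
    hits = sum(fence_spans(width, height, cut_prob, rng) for _ in range(trials))
    return hits / trials

if __name__ == "__main__":
    for cut_prob in (0.1, 0.3, 0.5, 0.7, 0.9):
        print(cut_prob, spanning_fraction(cut_prob))
```

For an idealized, infinitely large square fence, the known tipping point for this kind of link-snipping is when half the links are cut. On a small grid like this one the change is gradual rather than sharp.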

Tracking Entanglement

But their dessert banter was just that: banter. To rigorously test and develop these ideas, Skinner and Nahum joined forces with a third collaborator, Jonathan Ruhman at Bar-Ilan University in Israel. The team digitally simulated the effects of snipping links at different speeds in chain-link fences. They then compared these simulations of classical nets with more accurate but more challenging simulations of real quantum particles to make sure the analogy held. They were making leisurely but steady progress.

Then, in the summer of 2018, they learned that they weren’t the only group thinking about measurements and entanglement.

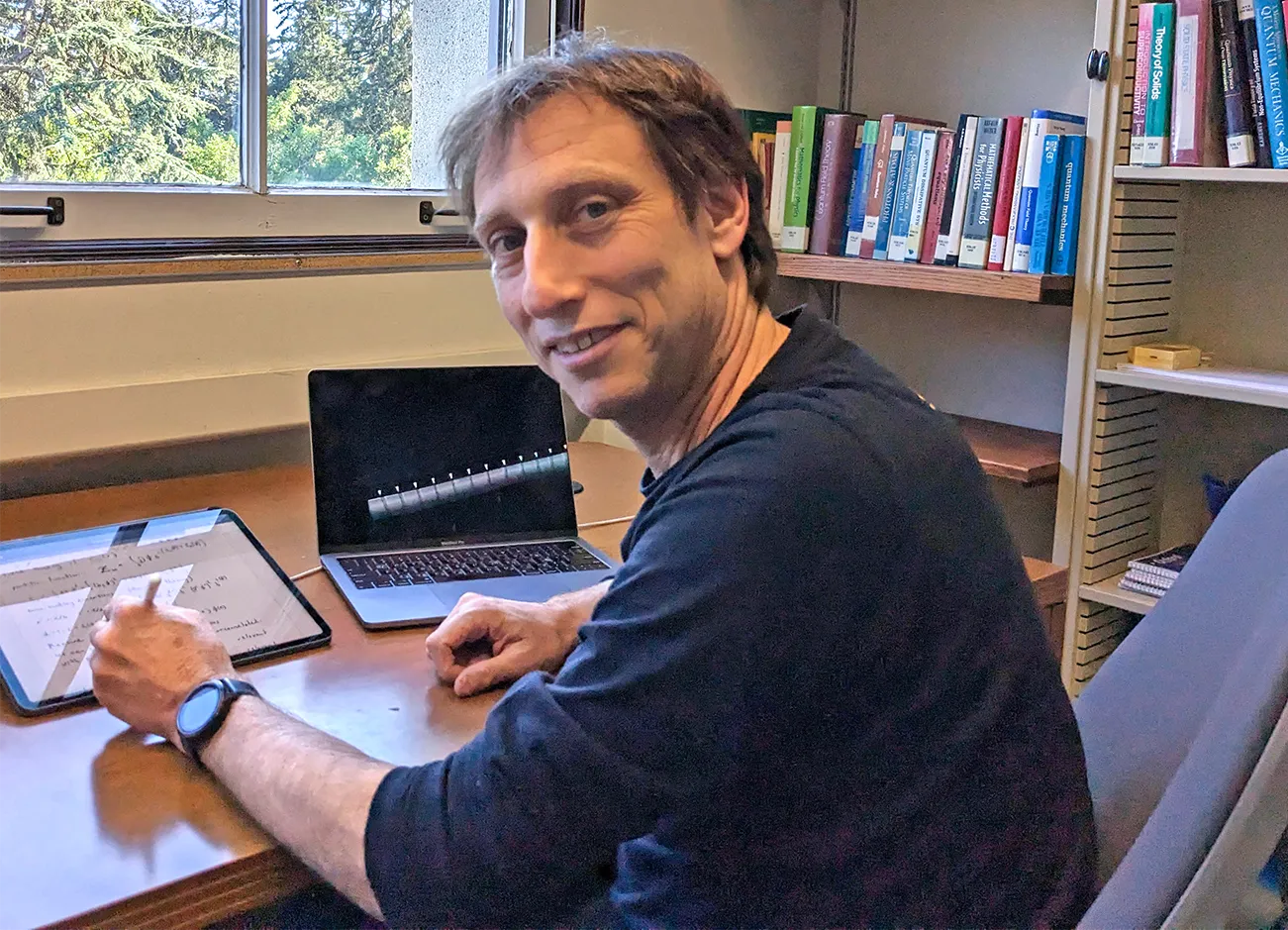

Matthew Fisher, a prominent condensed matter physicist at the University of California, Santa Barbara, had been wondering whether entanglement between molecules in the brain might play a role in how we think. In the model he and his collaborators were developing, certain molecules occasionally bind together in a way that acts like a measurement and kills entanglement. Then the bound molecules change shape in a way that could create entanglement. Fisher needed to know whether entanglement could thrive under the pressure of intermittent measurements — the same question Nahum had been considering.

“It was new,” Fisher said. “Nobody had looked at this before 2018.”

Matthew Fisher, a condensed matter physicist at the University of California, Santa Barbara, started studying the interplay of measurement and entanglement because he suspects that both phenomena could play a role in human cognition.

In a display of academic cooperation, the two groups coordinated their research publications with each other and with a third team studying the same problem, led by Graeme Smith of the University of Colorado, Boulder.

“It kicked into a feverish pitch of us all working in parallel to post our papers at the same time,” Skinner said.

That August, all three groups unveiled their results. Smith’s team was initially at odds with the other two, which both supported Nahum’s fence-inspired reasoning: At first, entanglement outpaced modest rates of measurement to spread across a chain of particles, yielding high entanglement entropy. Then, as the researchers cranked up measurements beyond a “critical” rate, entanglement would halt — entanglement entropy would drop.
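The competition described above can be reproduced qualitatively in a toy simulation. The sketch below is an assumption-laden miniature, not any group's actual code: it tracks six qubits, applies random two-qubit gates to neighbors in alternating layers, measures each qubit with probability `p` after every layer, and reports how entangled the two halves of the chain end up. To stay within the standard library it uses the second Rényi entropy, a close cousin of the entanglement entropy that needs no eigenvalue solver.

```python
import math
import random

def random_unitary_4(rng):
    # Random 4x4 unitary: Gram-Schmidt on complex Gaussian rows.
    rows = []
    for _ in range(4):
        v = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(4)]
        for u in rows:
            overlap = sum(a.conjugate() * b for a, b in zip(u, v))
            v = [b - overlap * a for a, b in zip(u, v)]
        norm = math.sqrt(sum(abs(x) ** 2 for x in v))
        rows.append([x / norm for x in v])
    return rows

def apply_gate(state, gate, q):
    # Act on qubits q and q+1; qubit k is bit k of the amplitude index.
    lo, hi = 1 << q, 1 << (q + 1)
    new = state[:]
    for base in range(len(state)):
        if base & (lo | hi):
            continue
        idx = [base, base | lo, base | hi, base | lo | hi]
        amps = [state[i] for i in idx]
        for r in range(4):
            new[idx[r]] = sum(gate[r][c] * amps[c] for c in range(4))
    return new

def measure(state, q, rng):
    # Projective measurement of qubit q, then renormalization.
    bit = 1 << q
    p_one = sum(abs(a) ** 2 for i, a in enumerate(state) if i & bit)
    outcome = rng.random() < p_one
    norm = math.sqrt(p_one if outcome else 1.0 - p_one)
    return [a / norm if bool(i & bit) == outcome else 0j
            for i, a in enumerate(state)]

def renyi2_half_chain(state, n):
    # S2 = -log2 Tr(rho_A^2), where A is the first half of the chain.
    dim_a, dim_b = 1 << (n // 2), 1 << (n - n // 2)
    purity = 0.0
    for a1 in range(dim_a):
        for a2 in range(dim_a):
            rho = sum(state[a1 + dim_a * b] * state[a2 + dim_a * b].conjugate()
                      for b in range(dim_b))
            purity += abs(rho) ** 2
    return max(0.0, -math.log2(purity))

def run_monitored_circuit(n, depth, p, seed):
    rng = random.Random(seed)
    state = [0j] * (1 << n)
    state[0] = 1 + 0j                        # start with every qubit in 0
    for t in range(depth):
        for q in range(t % 2, n - 1, 2):     # alternating layers of gates
            state = apply_gate(state, random_unitary_4(rng), q)
        for q in range(n):                   # sporadic measurements
            if rng.random() < p:
                state = measure(state, q, rng)
    return renyi2_half_chain(state, n)

def average_entropy(p, n=6, depth=12, samples=5, seed=0):
    return sum(run_monitored_circuit(n, depth, p, seed + s)
               for s in range(samples)) / samples

if __name__ == "__main__":
    for p in (0.0, 0.1, 0.3, 1.0):
        print(p, round(average_entropy(p), 3))
```

With no measurements the two halves become strongly entangled, and measuring every qubit after every layer leaves no entanglement at all. Six qubits are far too few to show a sharp critical rate, so this only hints at the trend in between.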

The transition appeared to exist, but it wasn’t entirely clear to everyone where the intuitive argument — that the neighbor-to-neighbor creep of entanglement should get wiped out by the widespread lightning strikes of measurement — had gone wrong.

In the following months, Altman and his collaborators at Berkeley found a subtle flaw in the reasoning. “It doesn’t take into account [the spread of] information,” Altman said.

Altman’s group pointed out that not all measurements are highly informative, and therefore highly effective at destroying entanglement. This is because random interactions between the chain’s particles do more than just entangle. They also immensely complicate the state of the chain as time goes on, effectively spreading out its information “like a cloud,” Altman said. Eventually, each particle knows about the whole chain, but the amount of information it has is minuscule. And so, he said, “the amount of entanglement you can destroy [with each measurement] is ridiculously small.”

In March 2019, Altman’s group posted a preprint detailing how the chain effectively hid information from measurements and allowed much of the chain’s entanglement to escape devastation. Around the same time, Smith’s group updated their findings, bringing all four groups into agreement.

Ehud Altman, a physicist at the University of California, Berkeley, used an argument based on quantum information theory to help clarify why entanglement can overcome measurements.

The answer to Nahum’s question was clear. A “measurement-induced phase transition” was, theoretically, possible. But unlike a tangible phase transition, such as water hardening into ice, this was a transition between information phases — one where information remains safely spread out among the particles and one in which it is destroyed through repeated measurements.

That’s kind of what you dream of doing in condensed matter, Skinner said — finding a transition between different states. “Now you’re left pondering,” he continued, “how do you see it?”

Over the next four years, three groups of experimenters would detect signs of the distinct flow of information.

Three Ways to See the Invisible

Even the simplest experiment that could pick up on the intangible transition is extremely tough. “At the practical level, it seems impossible,” Altman said.

The goal is to set a certain measurement rate (think rare, medium or frequent), let those measurements duke it out with entanglement for a bit, and see how much entanglement entropy you end up with in the final state. Then rinse and repeat with other measurement rates and see how the amount of entanglement changes. It’s a bit like raising the temperature to see how the structure of an ice cube changes.

But the punishing math of exponentially proliferating possibilities makes this experiment almost unthinkably difficult to pull off.

Entanglement entropy is not, strictly speaking, something you can observe. It’s a number you infer through repetition, the way you might eventually map out the weighting of a loaded die. Rolling a single 3 tells you nothing. But after tossing the die hundreds of times, you can learn the likelihood of getting each number. Similarly, finding one particle to point up and another to point down doesn’t mean they’re entangled. You’d have to get the opposite outcome many times to be sure.

Deducing the entanglement entropy for a chain of particles being measured is much, much harder. The final state of the chain depends on its experimental history: whether each intermediate measurement came out spin up or spin down. Those are twists of fate beyond the experimenter’s control, so to amass multiple copies of the same state, the experimenter needs to repeat the experiment over and over until they get the same sequence of intermediate measurements, like flipping a coin repeatedly until you get a bunch of heads in a row. Each additional measurement makes the effort twice as hard. If you make 10 measurements while preparing a string of particles, for instance, you’ll need to run another 2^10, or 1,024, experiments to get the same final state a second time (and you might need 1,000 more copies of that state to nail down its entanglement entropy). Then you’ll have to change the measurement rate and start again.
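The arithmetic behind that example can be written out as a short sketch. It assumes, as the coin-flip analogy does, that every intermediate measurement has two equally likely outcomes, so a given measurement history recurs about once in every 2^m runs. The function names are illustrative.

```python
def runs_per_matching_copy(num_measurements):
    # Each measurement doubles the number of possible histories.
    return 2 ** num_measurements

def total_runs(num_measurements, copies_needed):
    # Expected runs to collect the requested number of identical states.
    return copies_needed * runs_per_matching_copy(num_measurements)

if __name__ == "__main__":
    print(runs_per_matching_copy(10))   # 1024
    print(total_runs(10, 1000))         # 1024000
    print(runs_per_matching_copy(20))   # 1048576
```

Doubling the number of measurements from 10 to 20 raises the cost per matching copy from about a thousand runs to about a million, which is why the approach stalls so quickly as chains get longer.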

The extreme difficulty of sensing the phase transition caused some physicists to wonder whether it was, in any meaningful sense, real.

“You’re relying on something that’s exponentially unlikely in order to see it,” said Crystal Noel, a physicist at Duke University. “So it raises the question of what does it mean physically?”

Noel spent almost two years thinking about measurement-induced phases. She was part of a team working on a new trapped-ion quantum computer at the University of Maryland. The processor contained qubits, quantum objects that act like particles. They could be programmed to create entanglement via random interactions. And the device could measure its qubits.

Crystal Noel of Duke University was part of the first team that used a quantum computer to realize a version of the information phases.

In 2019, Noel and her colleagues began collaborating with two theorists who had come up with an easier way of doing the experiment. They had worked out a way of setting aside one qubit that, like a canary in a coal mine, could serve as a bellwether for the state of the entire chain.

The group also used a second trick to reduce the number of repetitions — a technical procedure that amounted to digitally simulating the experiment in parallel with actually doing it. That way, they knew what to expect. It was like being told in advance how the loaded die was weighted, and it reduced the number of experimental runs needed to work out the invisible entanglement structure.

With those two tricks, they could detect the entanglement transition in chains that were 13 qubits long, and they posted their results in the summer of 2021.

“We were amazed,” Nahum said. “Certainly I didn’t think it would have happened so soon.”

Unbeknownst to Nahum or Noel, a full execution of the original, exponentially more difficult version of the experiment — with no tricks or caveats — was already underway.

By running more than 1.5 million trials on IBM’s quantum processors, Austin Minnich of the California Institute of Technology detected signs of the information transition.

Around that time, IBM had just upgraded its quantum computers, giving them the ability to make relatively quick and reliable measurements of qubits on the fly. And Jin Ming Koh, an undergraduate student at the time at the California Institute of Technology, had given an internal presentation to IBM researchers and convinced them to assist with a project that would push the new feature to its limits. Under the supervision of Austin Minnich, an applied physicist at Caltech, the team set out to directly detect the phase transition in an effort Skinner calls “heroic.”

After consulting Noel’s team for advice, the group simply rolled the metaphorical dice enough times to determine the entanglement structure of every possible measurement history for chains of up to 14 qubits. They found that when measurements were rare, entanglement entropy doubled when they doubled the number of qubits — a clear signature of entanglement filling the chain. The longest chains (which involved more measurements) required more than 1.5 million runs on IBM’s devices, and altogether, the company’s processors ran for seven months. It was one of the most computationally intensive tasks ever completed using quantum computers.

Minnich’s group posted their realization of the two phases in March 2022, and it quieted any lingering doubts that the phenomenon was measurable.

“They really just brute-force did this thing,” Noel said, and proved that “for small system sizes, it’s doable.”

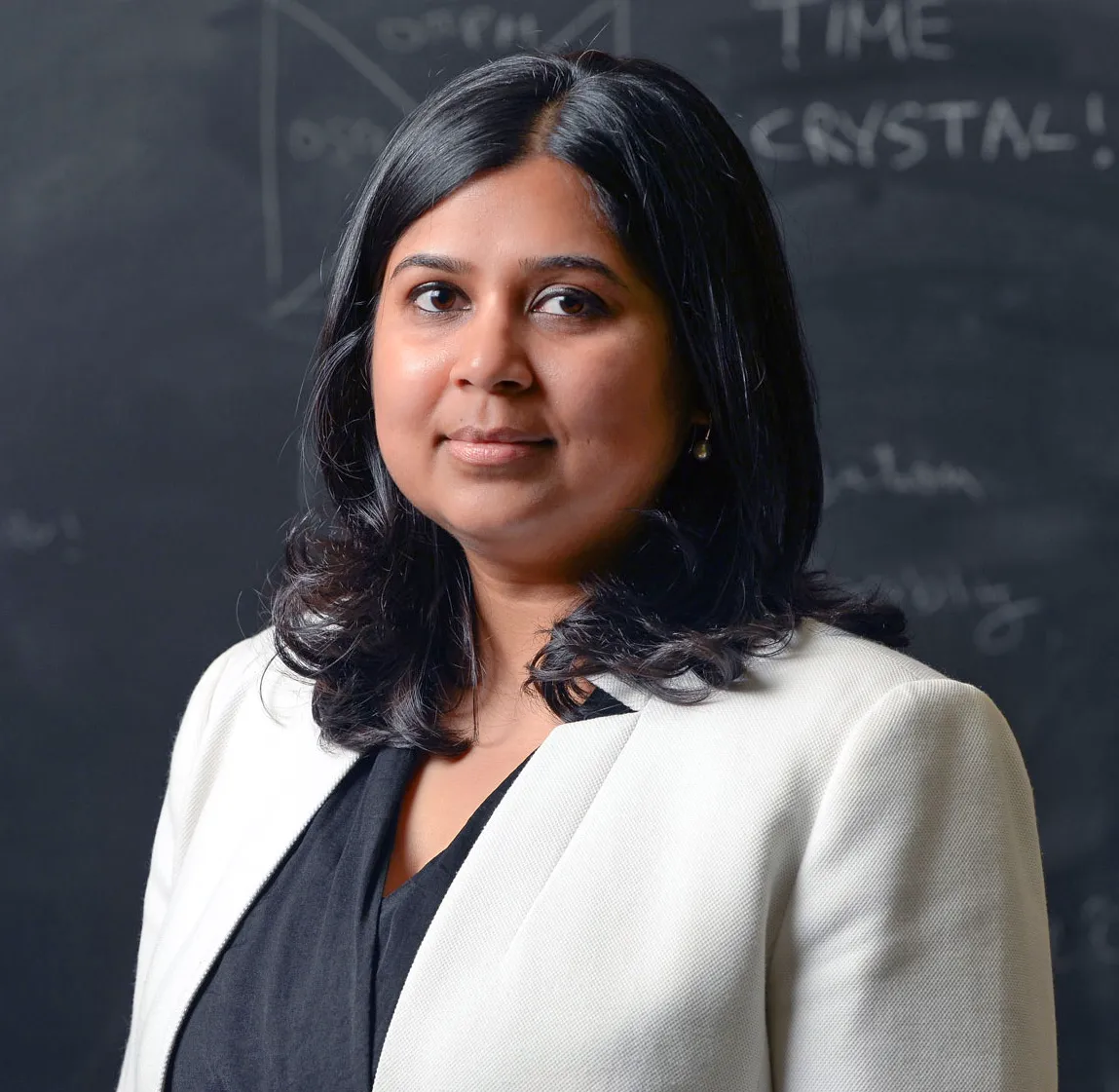

Recently, a team of physicists collaborated with Google to go even bigger, studying the equivalent of a chain almost twice as long as the previous two. Vedika Khemani of Stanford University and Matteo Ippoliti, now at the University of Texas, Austin, had already used Google’s quantum processor in 2021 to create a time crystal, which, like phases of spreading entanglement, is an exotic phase existing in a changing system.

Working with a large team of researchers, the pair took the two tricks developed by Noel’s group and added one new ingredient: time. The Schrödinger equation links a particle’s past with its future, but measurement severs that connection. Or, as Khemani put it, “once you put in measurements into a system, this arrow of time is completely destroyed.”

Without a clear arrow of time, the group was able to re-orient Nahum’s chain-link fence to let them access different qubits at different moments, which they used in advantageous ways. Among other results, they found a phase transition in a system equivalent to a chain of about 24 qubits, which they described in a March preprint.

Measurement Power

Skinner and Nahum’s debate over pudding, along with the work of Fisher and Smith, has spawned a new subfield among physicists interested in measurement, information and entanglement. At the heart of the various lines of investigation is a growing appreciation that measurements do more than just gather information. They are physical events that can generate genuinely new phenomena.

Along with researchers at Google, Vedika Khemani, a physicist at Stanford University, demonstrated information phases in some of the largest-qubit systems yet.

“Measurements are not something condensed matter physicists have thought about historically,” Fisher said. We make measurements to gather information at the end of an experiment, he continued, but not to actually manipulate a system.

In particular, measurements can produce unusual outcomes because they can have the same sort of everywhere-all-at-once flavor that once troubled Einstein. At the instant of measurement, the alternative possibilities contained in the quantum state vanish, never to be realized, including those that involve far-off spots in the universe. While the nonlocality of quantum mechanics doesn’t allow for faster-than-light transmissions in the way Einstein feared, it does enable other surprising feats.

“People are intrigued by what kind of new collective phenomena can be induced by these nonlocal effects of measurements,” Altman said.

Entangling a collection of many particles, for instance, has long been thought to require at least as many steps as the number of particles you hope to entangle. But last winter theorists detailed a way to pull it off in far fewer steps by using judicious measurements. Earlier this year, the same group put the idea into practice and fashioned a tapestry of entanglement hosting fabled particles that remember their pasts. Other teams are looking into other ways measurement could be used to supercharge entangled states of quantum matter.

The explosion of interest has come as a complete surprise to Skinner, who recently traveled to Beijing to receive an award for his work in the Great Hall of the People in Tiananmen Square. (Fisher’s team was also honored.) Skinner had initially believed that Nahum’s question was purely a mental exercise, but these days he isn’t so sure where it’s all heading.

“I thought it was just a fun game we were playing,” he said, “but I’d no longer be willing to put money on the idea that it’s not useful.”

Editor’s note: Jedediah Pixley receives funding from the Simons Foundation, which also funds this editorially independent magazine. Simons Foundation funding decisions have no influence on our coverage.