Experiments Spell Doom for Decades-Old Explanation of Quantum Weirdness

Theories that propose a natural trigger for the collapse of the quantum wave function appear to be collapsing themselves.

Michal Bednarski for Quanta Magazine

Introduction

How does objective reality emerge from the palette of possibilities supplied by quantum mechanics? That question — the deepest and most vexed issue posed by the theory — is still the subject of arguments a century old. Possible explanations for how observations of the world yield definite, “classical” results, drawing on different interpretations of what quantum mechanics means, have only multiplied over those hundred or so years.

But now we may be ready to eliminate at least one set of proposals. Recent experiments have mobilized the extreme sensitivity of particle physics instruments to test the idea that the “collapse” of quantum possibilities into a single classical reality is not just a mathematical convenience but a real physical process — an idea called “physical collapse.” The experiments find no evidence of the effects predicted by at least the simplest varieties of these collapse models.

It’s still too early to say definitively that physical collapse does not occur. Some researchers believe that the models could yet be modified to escape the constraints placed on them by the experiments’ null results. But while “it is always possible to rescue any model,” said Sandro Donadi, a theoretical physicist at the National Institute for Nuclear Physics (INFN) in Trieste, Italy, who led one of the experiments, he doubts that “the community will keep modifying the models [indefinitely], since there will not be too much to learn by doing that.” The noose seems to be tightening on this attempt to resolve the biggest mystery of quantum theory.

What Causes Collapse?

Physical collapse models aim to resolve a central dilemma of conventional quantum theory. In 1926 Erwin Schrödinger asserted that a quantum object is described by a mathematical entity called a wave function, which encapsulates all that can be said about the object and its properties. As the name implies, a wave function describes a kind of wave — but not a physical one. Rather, it is a “probability wave,” which allows us to predict the various possible outcomes of measurements made on the object, and the chance of observing any one of them in a given experiment.

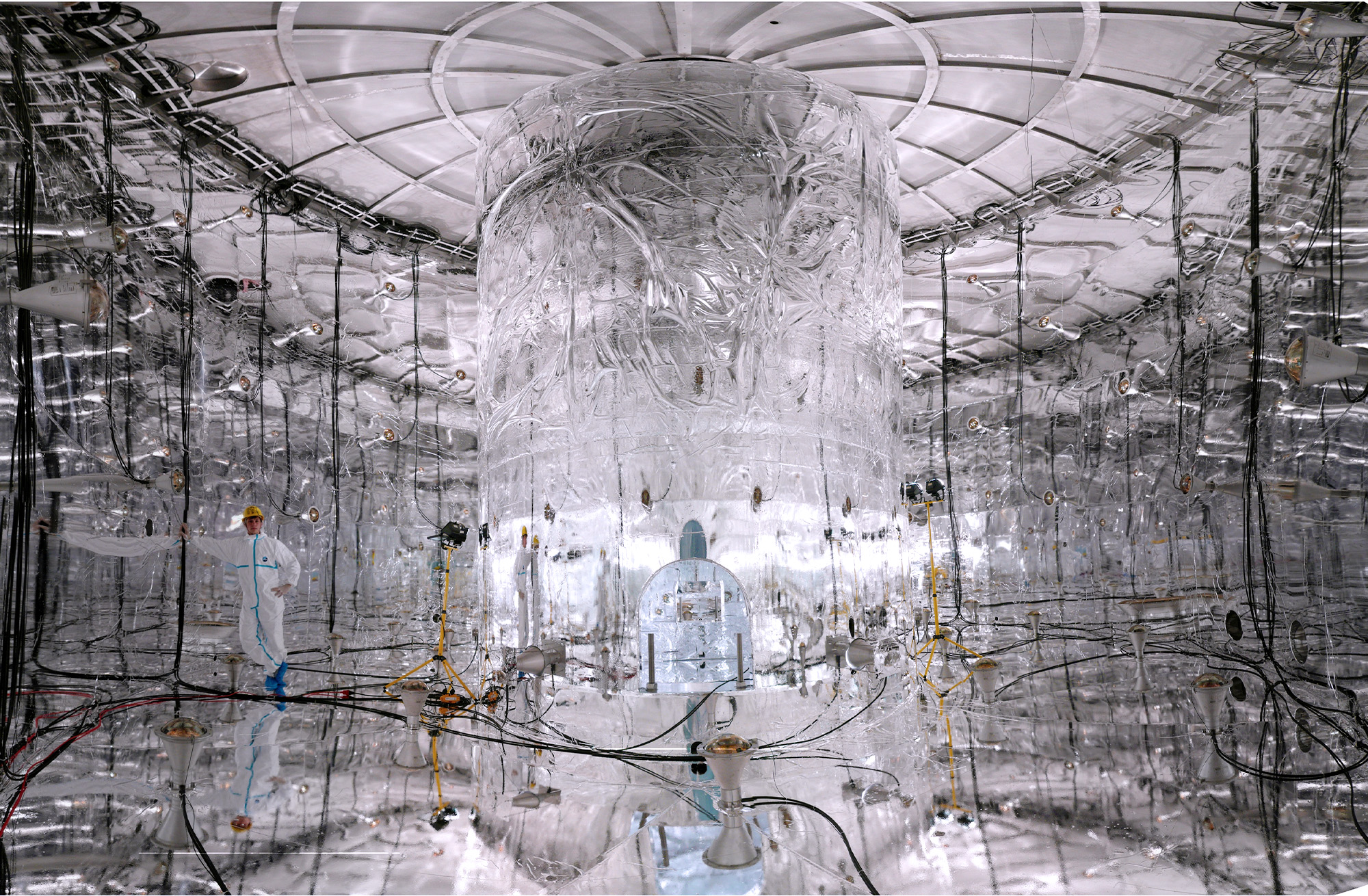

Detectors such as this one, originally designed for neutrino research, have taken on a second job testing the predictions of physical-collapse theories.

Nick Hubbard, Sanford Underground Research Facility

If many measurements are made on such objects when they’re prepared in an identical manner, the wave function always correctly predicts the statistical distribution of outcomes. But there’s no way of knowing what the outcome of any single measurement will be; quantum mechanics offers only probabilities. What determines a specific observation? In 1932, the mathematical physicist John von Neumann proposed that, when a measurement is made, the wave function is “collapsed” into one of the possible outcomes. The process is essentially random but biased by the probabilities that the wave function encodes. Quantum mechanics itself doesn’t appear to predict the collapse, which has to be added to the calculations by hand.
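To make the collapse rule concrete, here is a minimal Python sketch of a random choice weighted by the squared magnitudes of the wave function's amplitudes (the Born rule). The two-outcome state and the function name `collapse` are illustrative assumptions, not taken from any experiment described here.

```python
import random
from collections import Counter

def collapse(amplitudes, rng):
    """Pick one outcome index with probability proportional to |amplitude|^2."""
    weights = [abs(a) ** 2 for a in amplitudes]
    total = sum(weights)
    probabilities = [w / total for w in weights]  # normalize defensively
    return rng.choices(range(len(amplitudes)), weights=probabilities, k=1)[0]

# Hypothetical two-outcome state: 80% chance of outcome 0, 20% of outcome 1.
# The complex phase on the second amplitude does not affect the probabilities.
amplitudes = [0.8 ** 0.5, (0.2 ** 0.5) * 1j]

rng = random.Random(0)  # fixed seed so the run is repeatable
n_trials = 10_000
counts = Counter(collapse(amplitudes, rng) for _ in range(n_trials))
frequencies = {k: counts[k] / n_trials for k in sorted(counts)}
print(frequencies)
```

No single call to `collapse` can be predicted, but over many trials the frequencies settle near the encoded probabilities. The collapse step is put in by hand here, just as it is in the textbook rule.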

As an ad hoc mathematical trick, it works well enough. But it seemed (and continues to seem) to some researchers to be an unsatisfactory sleight of hand. Einstein famously likened it to God playing dice to decide what becomes “real” — what we actually observe in our classical world. The Danish physicist Niels Bohr, in his so-called Copenhagen interpretation, simply pronounced the issue out of bounds, saying that physicists just had to accept a fundamental distinction between the quantum and classical regimes. In contrast, in 1957 the physicist Hugh Everett asserted that wave function collapse is just an illusion and that in fact all outcomes are realized in a near-infinite number of branching universes — what physicists now call “many worlds.”

The truth is that “the fundamental cause of the wave function collapse is yet unknown,” said Inwook Kim, a physicist at the Lawrence Livermore National Laboratory in California. “Why and how does it occur?”

In 1986, the Italian physicists Giancarlo Ghirardi, Alberto Rimini and Tullio Weber suggested an answer. What if, they said, Schrödinger’s wave equation was not the whole story? They posited that a quantum system is constantly prodded by some unknown influence that can induce it to spontaneously jump into one of the system’s possible observable states, on a timescale that depends on how big the system is. A small, isolated system, such as an atom in a quantum superposition (a state in which several measurement outcomes are possible), will stay that way for a very long time. But bigger objects — a cat, say, or an atom when it interacts with a macroscopic measuring device — collapse into a well-defined classical state almost instantaneously. This so-called GRW model (after the trio’s initials) was the first physical-collapse model; a later refinement known as the continuous spontaneous localization (CSL) model involved gradual, continuous collapse rather than a sudden jump. These models are not so much interpretations of quantum mechanics as additions to it, said the physicist Magdalena Zych of the University of Queensland in Australia.
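The size dependence can be illustrated with a rough calculation. The sketch below assumes the simplest GRW-style scaling, in which each constituent particle has the same tiny spontaneous collapse rate and the rate for a whole object is that rate multiplied by the number of particles. The per-particle rate of 10^-16 per second is the value usually quoted from the original proposal; the particle counts are round illustrative numbers.

```python
# Assumption: per-particle collapse rate usually quoted from the original GRW proposal.
LAMBDA_PER_PARTICLE = 1e-16  # collapses per second per particle
SECONDS_PER_YEAR = 3.156e7

def mean_collapse_time(n_particles, rate_per_particle=LAMBDA_PER_PARTICLE):
    """Mean waiting time (seconds) before an object of n_particles localizes."""
    return 1.0 / (n_particles * rate_per_particle)

# Illustrative particle counts, chosen as round numbers.
systems = {
    "single particle": 1,
    "large molecule": 1e4,
    "dust grain": 1e15,
    "everyday object": 1e23,
}

for name, n in systems.items():
    t = mean_collapse_time(n)
    print(f"{name:>16}: {t:.1e} s  ({t / SECONDS_PER_YEAR:.1e} years)")
```

Under these assumptions a lone particle would wait hundreds of millions of years, while an everyday object localizes in a fraction of a microsecond.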

What is it that causes this spontaneous localization via wave function collapse? The GRW and CSL models don’t say; they just suggest adding a mathematical term to the Schrödinger equation to describe it. But in the 1980s and ’90s, the mathematical physicists Roger Penrose of the University of Oxford and Lajos Diósi of Eötvös Loránd University in Budapest independently proposed a possible cause of the collapse: gravity. Loosely speaking, their idea was that if a quantum object is in a superposition of locations, each position state will “feel” the others via their gravitational interaction. It is as if this attraction causes the object to measure itself, forcing a collapse. Or if you look at it from the perspective of general relativity, which describes gravity, a superposition of localities deforms the fabric of space-time in two different ways at once, a circumstance that general relativity can’t accommodate. As Penrose has put it, in a standoff between quantum mechanics and general relativity, quantum will crack first.
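Penrose's argument is often summarized by the estimate that a superposition survives for a time of roughly ħ divided by the gravitational self-energy of the difference between the two mass distributions. The sketch below is an order-of-magnitude illustration only. It approximates that energy as G·m²/R for an object of mass m and effective size R, which ignores numerical factors and the model-dependent question of how the mass is smeared out. The example masses and radii are assumed round numbers.

```python
HBAR = 1.054571817e-34  # reduced Planck constant, J*s
G = 6.67430e-11         # Newton's gravitational constant, m^3 kg^-1 s^-2

def dp_collapse_time(mass_kg, radius_m):
    """Order-of-magnitude collapse time tau ~ hbar / E_G.

    E_G is approximated as G*m^2/R, a rough stand-in for the gravitational
    self-energy of the difference between two well-separated positions.
    """
    e_gravity = G * mass_kg ** 2 / radius_m
    return HBAR / e_gravity

# Assumed, illustrative inputs: (mass in kg, effective radius in m).
examples = {
    "proton-scale object": (1.67e-27, 1e-15),
    "micron-scale dust grain": (1e-14, 1e-6),
}

for name, (mass, radius) in examples.items():
    print(f"{name}: tau ~ {dp_collapse_time(mass, radius):.1e} s")
```

Because the energy grows as the square of the mass, the estimated lifetime of a superposition falls steeply as objects get heavier.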

The Test of Truth

These ideas have always been highly speculative. But, in contrast to explanations of quantum mechanics like the Copenhagen and Everett interpretations, physical-collapse models have the virtue of making observable predictions — and thus being testable and falsifiable.

If there is indeed a background perturbation that provokes quantum collapse — whether it comes from gravitational effects or something else — then all particles will be continuously interacting with this perturbation, whether they are in a superposition or not. The consequences should in principle be detectable. The interaction should create a “permanent zigzagging of particles in space” comparable to Brownian motion, said Catalina Curceanu, a physicist at INFN.

Current physical-collapse models suggest that this diffusive motion is only very slight. Nonetheless, if the particle is electrically charged, the motion will generate electromagnetic radiation in a process called bremsstrahlung. A lump of matter should thus continuously emit a very faint stream of photons, which typical versions of the models predict to be in the X-ray range. Donadi and his colleague Angelo Bassi have shown that emission of such radiation is expected from any model of dynamical spontaneous collapse, including the Diósi-Penrose model.

Yet “while the idea is simple, in practice the test is not so easy,” said Kim. The predicted signal is extremely weak, which means that an experiment must involve an enormous number of charged particles to get a detectable signal. And the background noise, which comes from sources such as cosmic rays and radiation in the environment, must be kept low. Those conditions can be satisfied only by extremely sensitive experiments, such as those designed to detect dark matter signals or the elusive particles called neutrinos.

In 1996, Qijia Fu of Hamilton College in New York — then just an undergraduate — proposed using germanium-based neutrino experiments to detect a CSL signature of X-ray emission. (Weeks after he submitted his paper, he was struck by lightning on a hiking trip in Utah and killed.) The idea was that the protons and electrons in germanium should emit the spontaneous radiation, which ultrasensitive detectors would pick up. Yet only recently have instruments with the required sensitivity come online.

In 2020, a team in Italy, including Donadi, Bassi and Curceanu, along with Diósi in Hungary, used a germanium detector of this sort to test the Diósi-Penrose model. The detectors, created for a neutrino experiment called IGEX, are shielded from radiation by virtue of their location underneath Gran Sasso, a mountain in the Apennine range of Italy.

Catalina Curceanu, seen here at a TEDx event in Rome in 2016, used particle-physics detectors to put stringent limits on collapse theories.

TEDxRoma

After carefully subtracting the remaining background signal — mostly natural radioactivity from the rock — the physicists saw no emission at a sensitivity level that ruled out the simplest form of the Diósi-Penrose model. They also placed strong bounds on the parameters within which various CSL models might still work. The original GRW model lies just within this tight window: It survived by a whisker.

In a paper published this August, the 2020 result was confirmed and strengthened by an experiment called the Majorana Demonstrator, which was established primarily to search for hypothetical particles called Majorana neutrinos (which have the curious property of being their own antiparticles). The experiment is housed in the Sanford Underground Research Facility, which lies almost 5,000 feet underground in a former gold mine in South Dakota. It has a larger array of high-purity germanium detectors than IGEX, and they can detect X-rays down to low energies. “Our limit is much more stringent compared to the previous work,” said Kim, a member of the team.

A Messy End

Although physical-collapse models are badly ailing, they’re not quite dead. “The various models make very different assumptions about the nature and the properties of the collapse,” said Kim. Experimental tests have now excluded most of the plausible values for the parameters that describe those properties, but there’s still a small island of hope.

Continuous spontaneous localization models propose that the physical entity perturbing the wave function is some sort of “noise field,” which the current tests assume is white noise: uniform at all frequencies. That’s the simplest assumption. But it’s possible that the noise might be “colored,” for example by having some high-frequency cutoff. Curceanu said that testing these more complicated models will require measuring the emission spectrum at higher energies than has been possible so far.
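The difference between white and colored noise can be seen in a toy example. White noise has equal power at all frequencies, which is equivalent to successive samples being uncorrelated. Suppressing the high frequencies makes neighboring samples resemble one another. The sketch below uses a simple exponential moving average as a stand-in for a high-frequency cutoff. It is a generic signal-processing illustration, not the noise field of any particular CSL model.

```python
import random

def lag1_autocorrelation(samples):
    """Correlation between each sample and the next one (about 0 for white noise)."""
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]
    numerator = sum(a * b for a, b in zip(centered, centered[1:]))
    denominator = sum(c * c for c in centered)
    return numerator / denominator

def low_pass(samples, alpha):
    """Exponential moving average: a soft filter that damps high frequencies."""
    filtered = []
    y = 0.0
    for s in samples:
        y = alpha * s + (1 - alpha) * y
        filtered.append(y)
    return filtered

rng = random.Random(1)  # fixed seed so the run is repeatable
white = [rng.gauss(0.0, 1.0) for _ in range(20_000)]
colored = low_pass(white, alpha=0.2)

print("white noise  :", round(lag1_autocorrelation(white), 3))
print("colored noise:", round(lag1_autocorrelation(colored), 3))
```

The filtered series shows a strong correlation between neighboring samples, a sign that its high-frequency content has been reduced. In a collapse model, a noise field with less power at high frequencies would be expected to drive less high-energy radiation, which is why the emission spectrum matters for testing such variants.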

The Gerda experiment features a large tank that will hold the germanium detectors inside a protective layer of liquid argon. The hall surrounding the tank will be filled with purified water.

Kai Freund/LNGS-INFN

The Majorana Demonstrator experiment is now winding down, but the team is forming a new collaboration with an experiment called Gerda, based at Gran Sasso, to create another experiment probing neutrino mass. Called Legend, it will have more massive and thus more sensitive germanium detector arrays. “Legend may be able to push the limits on CSL models further,” said Kim. There are also proposals for testing these models in space-based experiments, which won’t suffer from noise produced by environmental vibrations.

Falsification is hard work, and rarely reaches a tidy end point. Even now, according to Curceanu, Roger Penrose — who was awarded the 2020 Nobel Prize in Physics for his work on general relativity — is working on a version of the Diósi-Penrose model in which there’s no spontaneous radiation at all.

All the same, some suspect that for this view of quantum mechanics, the writing is on the wall. “What we need to do is to rethink what are these models trying to achieve,” said Zych, “and see if the motivating problems may not have a better answer through a different approach.” While few would argue that the measurement problem is no longer an issue, we have also learned much, in the years since the first collapse models were proposed, about what quantum measurement entails. “I think we need to go back to the question of what these models were created for decades ago,” she said, “and take seriously what we have learned in the meantime.”