Prepping for a Flood of Heavenly Bodies

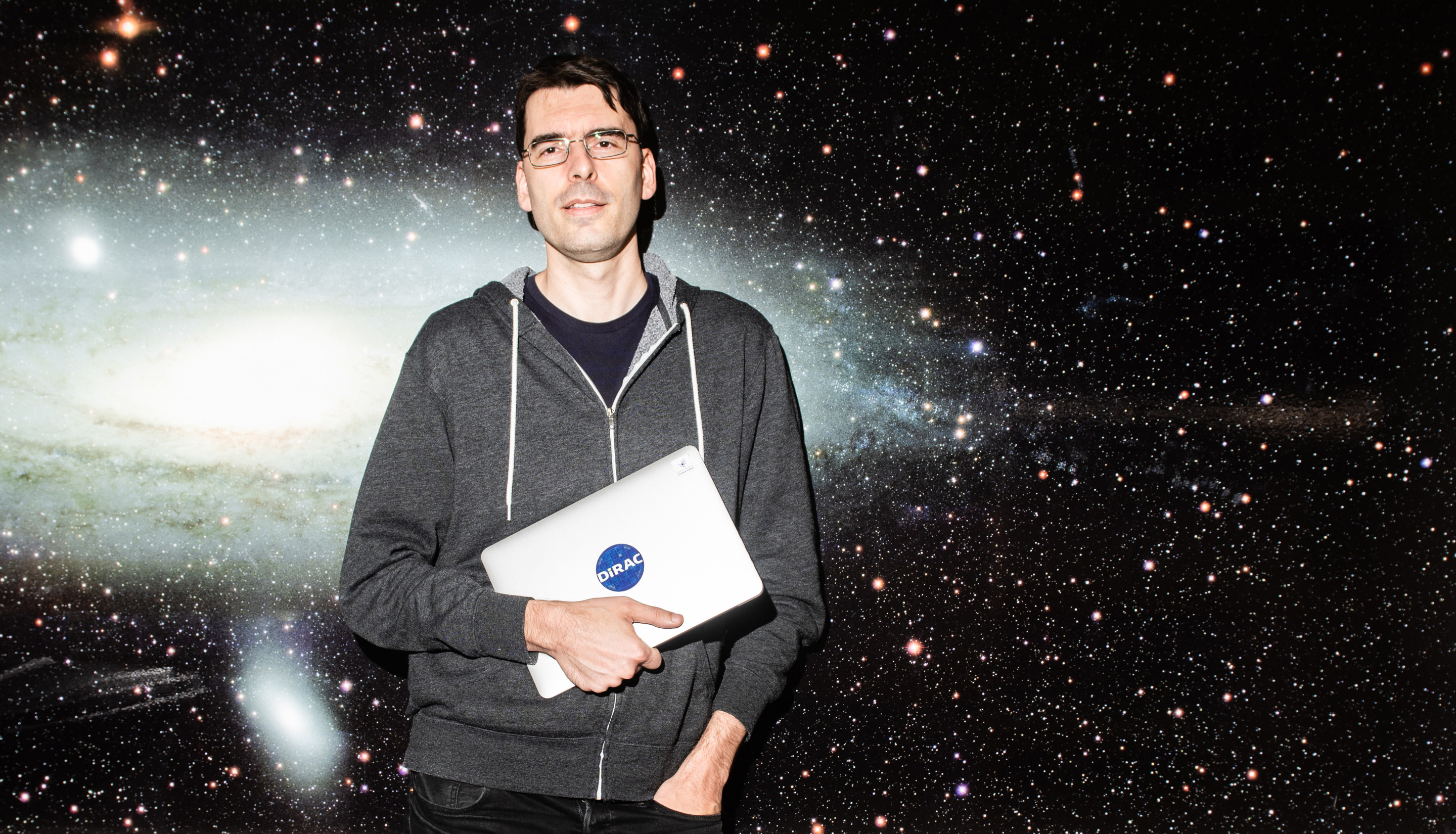

Mario Jurić at the University of Washington.

Chona Kasinger for Quanta Magazine

Introduction

This is a story of getting the good out of the bad, said Mario Jurić. As a boy in Yugoslavia, he would page through an introductory physics book that belonged to his grandfather. He learned that stars came in different colors, and that these colors signified different temperatures. So for his eighth-grade science fair project, Jurić wanted to capture that spectral light. His teacher lent him a prism. Jurić connected that prism to an old-fashioned camera using a cardboard toilet-paper tube and duct tape. He planned to keep the shutter open for a couple of minutes, let starlight pass through the prism, and capture that spread-out light on film.

But Zagreb, where he lived, was home to about a million people. Under ordinary circumstances the city’s light pollution would swamp his measurements. However, Jurić was in middle school during the brutal wars that accompanied the breakup of Yugoslavia. “We had blackouts in case of bombings,” he said. “And so the entire city was dark. My dad was, in retrospect, extremely permissive, because he’d let me go out in the backyard and set up my equipment, actually take pictures of the sky.” His science project worked, and that gave him confidence to continue studying the stars.

Good things can come from bad things, he said several times during our conversations. He went on in high school to turn a local 40-centimeter telescope into an asteroid-discovery machine. He wrote the software, and his friends worked on the hardware. Astronomy brought him to the U.S. in 2002, when he attended graduate school in physics at Princeton University. And now, as a professor at the University of Washington in Seattle, he spends much of his time figuring out how to manage the enormous amounts of data that will soon stream in from the Large Synoptic Survey Telescope (LSST), a wide-field telescope that features a 3,200-megapixel camera, the largest in the world. The telescope is expected to generate about 20 terabytes of data a night.

Quanta spoke with Jurić about how the swell of data is changing what it means to be an astronomer. An edited and condensed version of the conversations follows.

Jurić in front of the alert stream for the Zwicky Transient Facility (ZTF), an observatory that watches for objects like asteroids or supernovas that move or change in brightness. The ZTF sends out alerts to astronomers within about 20 minutes of finding something, and sends up to 1 million alerts a night. The Large Synoptic Survey Telescope will send alerts within one minute of detecting something, and is expected to produce about 10 million alerts a night.

Chona Kasinger for Quanta Magazine

How is the rise in data changing astronomy?

Our biggest challenge essentially since the beginning, since ancient Greece, was collection of data. Astronomy was always a data-limited science. Now your typical survey generates information on hundreds of millions of stars, and then repeat observations of those. And with LSST, we’re getting in the regime where we will observe about 40 billion objects. Suddenly you have this huge influx of data into an area of science that was very much information-limited before. Instead of having a couple hundred objects on which you’re basing your theories and your understanding of the universe, we’re now dealing with a couple hundred million objects. The sheer amount of data has gone up tremendously. And that, of course, creates challenges in processing.

Now we have to come up with ways to turn those into something that’s useful for constructing theories, for making inferences. If I cannot express in code what it is I need the computer to do for me, or measure for me, then I have no way to actually convert any of that data we’ve collected into a form that I can use to reason about theories.

So computation and programming become very important?

The way I think about that is, when physics branched off from philosophy, the thing that made a difference was the introduction of mathematics, the ability to write down the logic in a set of equations and with strict rules that you know would take you from point A to point B in a self-consistent way. It’s the realization that to make the next step in physics, you actually have to do it that way. At that point, physics became linked to mathematics. Mathematics is the language of physics.

We’re now going through something similar. We’re getting to the point with these big data sets that you have to do the same thing with software engineering. It’s becoming the language that we need to speak just to make inferences about the world around us. What I feel is happening now is we’re in another one of those inflection points in the development of natural sciences. Programming is really becoming as important as the ability to do math to get science done.

How do you convert that enormous amount of data into something useful?

We now have to start learning how to teach the computers to do all kinds of measurements that we used to do manually and very carefully. Something as simple as looking at an image and saying, “oh look, there’s a galaxy” — to a computer that’s not obvious at all. So we’ve gone through a few decades of figuring out how to do that. How to teach the computer to look at an astronomical image, to properly identify all the objects, and then to properly measure all the objects without any help from the humans. I think the field has transitioned into the area where now the computers can process those data and give us catalogs.

The next step in this big data evolution is to teach the computer to take the outputs of the images, go through all those catalogs, and then find us a list of interesting things, and even rank them in terms of how interesting they are.

What’s an example?

In many areas of astrophysics, objects may change brightness and so on, but they don’t move. But the solar system is unique because solar system objects do move. So you now need to come up with algorithms that will literally connect the dots. When you take an image of the sky and you find an asteroid, it looks like a star. You come back a little bit later, you take another image, you’ll notice that it moved. Now with LSST, we’re going to see something like 5,000 asteroids in every image on the ecliptic. That translates into a couple of million per night. You have a million points every night that all move, and you figure out which one goes with which. So the thing that I’m focused on right now is making sure that we know how to build that connect-the-dots algorithm properly.
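To make the connect-the-dots idea concrete, here is a minimal sketch in Python. It is not the LSST linking algorithm, which the interview does not describe. It assumes two exposures of the same field, flat (x, y) coordinates in arbitrary units, and a hypothetical `max_shift` parameter that caps how far an object may plausibly move between exposures. The function name `link_detections` and the sample coordinates are invented for illustration.

```python
import math


def link_detections(first, second, max_shift):
    """Greedily pair each detection in `first` with the nearest unclaimed
    detection in `second` that lies within `max_shift` of it.

    Returns a list of (index_in_first, index_in_second, distance) tuples.
    A toy nearest-neighbor matcher, assumed here purely for illustration.
    """
    links = []
    claimed = set()  # indices in `second` that are already paired
    for i, (x1, y1) in enumerate(first):
        best_j, best_d = None, max_shift
        for j, (x2, y2) in enumerate(second):
            if j in claimed:
                continue
            d = math.hypot(x2 - x1, y2 - y1)
            if d <= best_d:
                best_j, best_d = j, d
        if best_j is not None:
            claimed.add(best_j)
            links.append((i, best_j, best_d))
    return links


# Hypothetical detections from two exposures taken a little while apart.
first = [(10.0, 10.0), (50.0, 20.0), (80.0, 80.0)]
second = [(80.0, 80.0), (10.5, 10.2), (53.0, 24.0)]

links = link_detections(first, second, max_shift=6.0)

# A source that has not shifted behaves like a star; one that has is a
# candidate mover. The 0.1 threshold is an arbitrary assumption.
movers = [(i, j) for i, j, d in links if d > 0.1]
print(movers)
```

A greedy pairwise match like this compares every point with every other point, so it would not scale to millions of detections per night, and it can mispair objects in crowded fields. That gap between the toy and the real problem is roughly what makes the linking step hard.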

How do you find things that you don’t know are going to be there? If you haven’t programmed that thing into your algorithm, is that discovery lost?

That is one of the fears. It depends on how the algorithm’s been built. We really think hard about how is this going to behave for objects that have properties that they aren’t supposed to have, based on what we know today. We’re trying to make the algorithm as broad as possible to catch all those objects, and we try to understand where the algorithm is blind.

Where does your own interest in the intersection of astronomy and computer science come from?

I’m one of those people who could never decide whether I wanted to do computer science or astronomy. Computers are these wonderful things because it’s creation without boundaries. When you write a piece of code, it’s like building something, building a new world in that computer. It’s almost artistic. That was very appealing, but on the other hand I wanted to understand the world and I wanted to figure out how things work in the world. So I sort of flipped the coin and did physics. When I got to Princeton, where I did my Ph.D., the Sloan Digital Sky Survey was just starting. I thought, “Wow, there’s a tremendous amount of data, and people were struggling with understanding those data.” I realized at that point that my dreams came true: I don’t have to make a decision anymore whether to do something computer-related or astronomy-related because this type of environment requires both.

Does all of your astrophysics work relate to algorithms and computer programming?

I would say it’s a means to an end. I spend a lot more time focused on the algorithms themselves, but I mostly like to use those things to actually find interesting results. I’m driven by answering problems in astronomy, but I want to make sure I do it in such a way that the next person can build on what I’ve done.

You mentioned the Sloan Digital Sky Survey. How does LSST build upon that?

Sloan generated I think a total of about 10 to 20 terabytes over its history, in terms of imaging. LSST does that in a night. In terms of the number of objects, Sloan was on the order of 500 million stars, [observed] once. With LSST, it is going to be about 20 billion stars, and every one of them is likely to be seen 825 times. We’re going to be looking at time domain. It’s a huge volume. The other problem is that — whenever I say problem, just think of it as opportunity — LSST is going to be measuring dozens and potentially hundreds of things about every object.

There was a realization in the 2000s that instead of building a separate telescope to do this part of astronomy, a separate telescope for this part, what we’re going to do is we’re going to build a telescope, the telescope, to essentially download this sky. You still need to process those data into a form that will enable the solar system scientists to focus on solar system objects, and dark energy folks do their weak lensing maps. Data processing became a huge thing for LSST. It’s one of the rare projects in astronomy where the data system — for which I was responsible — is as expensive and as big as the telescope itself and as the camera itself.
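For a sense of scale, the star counts quoted in this answer can be multiplied out. The short Python sketch below uses only the approximate figures given in the interview (about 500 million stars observed once for Sloan, about 20 billion stars seen about 825 times each for LSST). The variable names are chosen here for illustration.

```python
# Approximate figures as quoted in the interview.
SLOAN_STARS = 500_000_000
SLOAN_VISITS_PER_STAR = 1
LSST_STARS = 20_000_000_000
LSST_VISITS_PER_STAR = 825

sloan_observations = SLOAN_STARS * SLOAN_VISITS_PER_STAR
lsst_observations = LSST_STARS * LSST_VISITS_PER_STAR
ratio = lsst_observations / sloan_observations

print(f"Sloan: {sloan_observations:.2e} stellar observations")
print(f"LSST:  {lsst_observations:.2e} stellar observations")
print(f"LSST / Sloan: {ratio:,.0f}x")
```

On these rough numbers, LSST would record about 16.5 trillion individual stellar observations, about 33,000 times Sloan's count.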

Something we haven’t yet touched on, but is absolutely crucial in astronomy measurements, is statistics.

When you’ve collected all the data there is to collect, the only thing that’s left is to analyze it better. We take measurements, and then how do you know what the measurement is telling you about whatever hypothesis you have? There are statistical methods that allow you to do tests, to fit the models. Statistics is, among other things, about extracting knowledge from data, quantifying your knowledge given the data you have. We use [statistics] very prescriptively, as in, here’s a statistical cookbook. You have to look at the ingredients and pick the right rule, the right recipe. If you have a data set that needs to fall in certain criteria, and if you apply that rule, good things will happen. We’re getting to a point where we’re measuring almost everything we can. The only way forward is to now do your data analysis correctly, because we cannot do approximations anymore. People think statistics is boring, but once you understand what it is, it’s a fundamental element of science, of discovering knowledge in data.

This big-data evolution, as you call it, it’s not just in astronomy, correct?

Particle physicists have been dealing with it for a little bit longer; they’re maybe five to 10 years ahead of us. Oceanography is now entering into the same region. Ecology is entering the same region. The basic tools that you need to know just to do your science are changing.