The Brain ‘Rotates’ Memories to Save Them From New Sensations

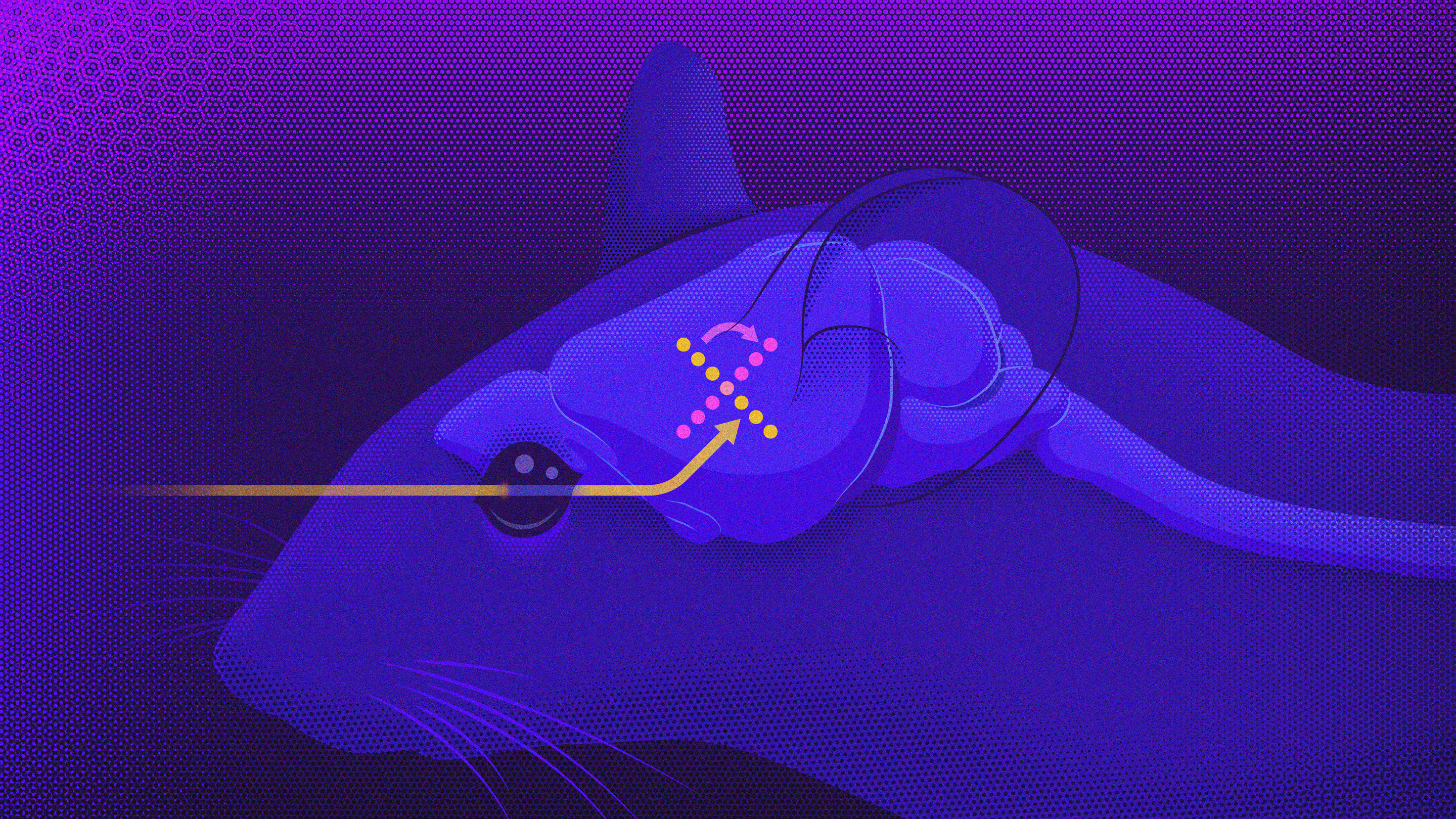

Research in mice shows that neural representations of sensory information get rotated 90 degrees to transform them into memories. In this orthogonal arrangement, the memories and sensations do not interfere with one another.

Samuel Velasco/Quanta Magazine


During every waking moment, we humans and other animals have to balance on the edge of our awareness of past and present. We must absorb new sensory information about the world around us while holding on to short-term memories of earlier observations or events. Our ability to make sense of our surroundings, to learn, to act and to think depends on constant, nimble interactions between perception and memory.

But to accomplish this, the brain has to keep the two distinct; otherwise, incoming data streams could interfere with representations of previous stimuli and cause us to overwrite or misinterpret important contextual information. Compounding that challenge, a body of research hints that the brain does not neatly partition short-term memory function exclusively into higher cognitive areas like the prefrontal cortex. Instead, the sensory regions and other lower cortical centers that detect and represent experiences may also encode and store memories of them. And yet those memories can’t be allowed to intrude on our perception of the present, or to be randomly rewritten by new experiences.

A paper published recently in Nature Neuroscience may finally explain how the brain’s protective buffer works. A pair of researchers showed that, to represent current and past stimuli simultaneously without mutual interference, the brain essentially “rotates” sensory information to encode it as a memory. The two orthogonal representations can then draw from overlapping neural activity without intruding on each other. The details of this mechanism may help to resolve several long-standing debates about memory processing.

To figure out how the brain prevents new information and short-term memories from blurring together, Timothy Buschman, a neuroscientist at Princeton University, and Alexandra Libby, a graduate student in his lab, decided to focus on auditory perception in mice. They had the animals passively listen to sequences of four chords over and over again, in what Buschman dubbed “the worst concert ever.”

These sequences allowed the mice to establish associations between certain chords, so that when they heard one initial chord versus another, they could predict what sounds would follow. Meanwhile, the researchers trained machine learning classifiers to analyze the neural activity recorded from the rodents’ auditory cortex during these listening sessions, to determine how the neurons collectively represented each stimulus in the sequence.

Buschman and Libby watched how those patterns changed as the mice built up their associations. They found that over time, the neural representations of associated chords began to resemble each other. But they also observed that new, unexpected sensory inputs, such as unfamiliar sequences of chords, could interfere with a mouse’s representations of what it was hearing — in effect, by overwriting its representation of previous inputs. The neurons retroactively changed their encoding of a past stimulus to match what the animal associated with the later stimulus — even if that was wrong.

The researchers wanted to determine how the brain must be correcting for this retroactive interference to preserve accurate memories. So they trained another classifier to identify and differentiate neural patterns that represented memories of the chords in the sequences — the way the neurons were firing, for instance, when an unexpected chord evoked a comparison to a more familiar sequence. The classifier did find intact patterns of activity from memories of the actual chords that had been heard — rather than the false “corrections” written retroactively to uphold older associations — but those memory encodings looked very different from the sensory representations.

The memory representations were organized in what neuroscientists describe as an “orthogonal” dimension to the sensory representations, all within the same population of neurons. Buschman likened it to running out of room while taking handwritten notes on a piece of paper. When that happens, “you will rotate your piece of paper 90 degrees and start writing in the margins,” he said. “And that’s basically what the brain is doing. It gets that first sensory input, it writes it down on the piece of paper, and then it rotates that piece of paper 90 degrees so that it can write in a new sensory input without interfering or literally overwriting.”

In other words, sensory data was transformed into a memory through a morphing of the neuronal firing patterns. “The information changes because it needs to be protected,” said Anastasia Kiyonaga, a cognitive neuroscientist at the University of California, San Diego who was not involved in the study.
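Why an orthogonal arrangement protects a memory can be illustrated with a toy calculation. The sketch below is not the researchers' analysis; the four-neuron population, the coding axes and the helper names (`population_activity`, `readout`) are all assumptions made for illustration. It stores a memory value along one axis of population activity while a changing sensory value rides on another, and then reads the memory back with a simple linear projection.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def population_activity(sensory, memory, sensory_axis, memory_axis):
    """Activity of each neuron when both signals share one population."""
    return [sensory * s + memory * m for s, m in zip(sensory_axis, memory_axis)]


def readout(activity, axis):
    """Linear decoder: project the activity onto one coding axis."""
    return dot(activity, axis) / dot(axis, axis)


# Hypothetical four-neuron population (illustrative numbers only).
sensory_axis = [1.0, 1.0, 1.0, 1.0]
orthogonal_memory_axis = [1.0, 1.0, -1.0, -1.0]  # dot product with sensory_axis is 0
aligned_memory_axis = [1.0, 1.0, 1.0, 1.0]       # same direction as sensory_axis

memory_value = 2.0
new_sensations = [-1.0, 0.0, 3.0]

# Decode the stored memory while different new sensations arrive.
protected = [
    readout(
        population_activity(s, memory_value, sensory_axis, orthogonal_memory_axis),
        orthogonal_memory_axis,
    )
    for s in new_sensations
]
overwritten = [
    readout(
        population_activity(s, memory_value, sensory_axis, aligned_memory_axis),
        aligned_memory_axis,
    )
    for s in new_sensations
]

print("orthogonal axes:", protected)
print("aligned axes:   ", overwritten)
```

With orthogonal axes, the decoded memory stays at the stored value no matter what the new sensation is. With aligned axes, every new sensation shifts the decoded memory, which is the kind of interference the rotation avoids.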

This use of orthogonal coding to separate and protect information in the brain has been seen before. For instance, when monkeys are preparing to move, neural activity in their motor cortex represents the potential movement but does so orthogonally to avoid interfering with signals driving actual commands to the muscles.

Still, it often hasn’t been clear how the neural activity gets transformed in this way. Buschman and Libby wanted to answer that question for what they were observing in the auditory cortex of their mice. “When I first started in the lab, it was hard for me to imagine how something like that could happen with neural firing activity,” Libby said. She wanted to “open the black box of what the neural network is doing to create this orthogonality.”

Sifting through the options experimentally, the researchers ruled out the idea that different subsets of neurons in the auditory cortex were independently handling the sensory and memory representations. Instead, they showed that the same general population of neurons was involved, and that the activity of the neurons could be divided neatly into two categories. Some were “stable” in their behavior during both the sensory and memory representations, while other “switching” neurons flipped the patterns of their responses for each use.

To the researchers’ surprise, this combination of stable and switching neurons was enough to rotate the sensory information and transform it into memory. “That’s the entire magic,” Buschman said.

In fact, he and Libby used computational modeling approaches to show that this mechanism was the most efficient way to build the orthogonal representations of sensation and memory: It required fewer neurons and less energy than the alternatives.
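The geometry of how stable and switching neurons can produce a rotation can be sketched in a few lines. This is a simplified illustration, not the computational model from the study: it assumes each neuron has a single tuning weight, that switching neurons exactly invert that weight in the memory representation, and that the stable and switching groups carry equal total tuning strength. The function names and numbers are hypothetical.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def memory_axis(sensory_axis, switching):
    """Flip the tuning of switching neurons; leave stable neurons unchanged."""
    return [-w if flips else w for w, flips in zip(sensory_axis, switching)]


def angle_degrees(a, b):
    """Angle between two population coding axes, in degrees."""
    cosine = dot(a, b) / math.sqrt(dot(a, a) * dot(b, b))
    cosine = max(-1.0, min(1.0, cosine))  # guard against rounding error
    return math.degrees(math.acos(cosine))


# Hypothetical tuning weights for a four-neuron population.
sensory_axis = [1.0, 2.0, 1.0, 2.0]

# True marks a switching neuron, False a stable one.
masks = {
    "all_stable": [False, False, False, False],
    "half_switching": [False, False, True, True],
    "all_switching": [True, True, True, True],
}

angles = {
    name: angle_degrees(sensory_axis, memory_axis(sensory_axis, mask))
    for name, mask in masks.items()
}

for name, angle in angles.items():
    print(f"{name}: {angle:.1f} degrees")
```

In this toy setup, a population of only stable neurons leaves the memory on the same axis as the sensation, and a population of only switching neurons merely inverts it along that axis. A balanced mix puts the two representations at a right angle, because the contributions of the stable and switching groups to the dot product cancel.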

Buschman and Libby’s findings feed into an emerging trend in neuroscience: that populations of neurons, even in lower sensory regions, are engaged in richer dynamic coding than was previously thought. “These parts of cortex that are lower down in the food chain are also fitted out with really interesting dynamics that maybe we haven’t really appreciated until now,” said Miguel Maravall, a neuroscientist at the University of Sussex who was not involved in the new study.

The work could help reconcile two sides of an ongoing debate about whether short-term memories are maintained through constant, persistent representations or through dynamic neural codes that change over time. Instead of coming down on one side or the other, “our results show that basically they were both right,” Buschman said, with stable neurons achieving the former and switching neurons the latter. The combination of processes is useful because “it actually helps with preventing interference and doing this orthogonal rotation.”

Buschman and Libby’s study might be relevant in contexts beyond sensory representation. They and other researchers hope to look for this mechanism of orthogonal rotation in other processes: in how the brain keeps track of multiple thoughts or goals at once; in how it engages in a task while dealing with distractions; in how it represents internal states; in how it controls cognition, including attention processes.

“I’m really excited,” Buschman said. Looking at other researchers’ work, “I just remember seeing, there’s a stable neuron, there’s a switching neuron! You see them all over the place now.”

Libby is interested in the implications of their results for artificial intelligence research, particularly in the design of architectures useful for AI networks that have to multitask. “I would want to see if people pre-allocating neurons in their neural networks to have stable and switching properties, instead of just random properties, helped their networks in some way,” she said.

All in all, “the consequences of this kind of coding of information are going to be really important and really interesting to figure out,” Maravall said.

This article was reprinted on Wired.com.