Sleeping Beauty’s Necker Cube Dilemma

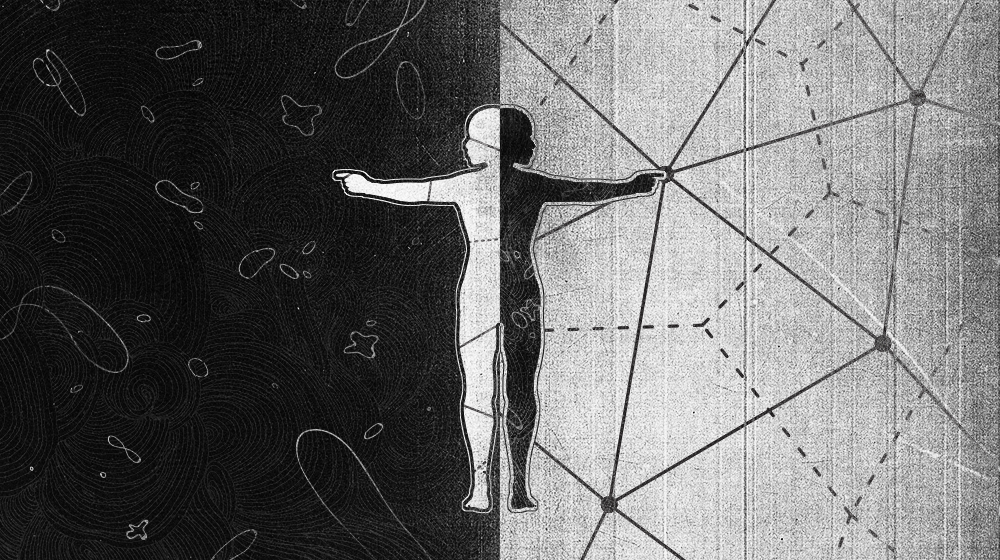

We are used to the idea that perception can be ambiguous — there are visual illusions such as the famous Necker cube that can be perceived in two completely different ways. We accept that both perceptions are equally valid and that it is fruitless to debate which one is “right.” Most of us imagine that such radically different views of the same object cannot occur in the realm of mathematics; after all, we are taught to think that every problem has a single correct answer. As we saw in “The Slippery Eel of Probability,” this is not always the case when the technique to be used in solving the problem is not given. For this month’s Insights puzzle, we consider a famous problem that has divided people across the board and generated endless debate: the Sleeping Beauty problem.

The famous fairy-tale princess Sleeping Beauty participates in an experiment that starts on Sunday. She is told that she will be put to sleep, and while she is asleep a fair coin will be tossed that will determine how the experiment will proceed. If the coin comes up heads, she will be awakened on Monday, interviewed, and put back to sleep, but she won’t remember this awakening. If the coin comes up tails, she will be awakened and interviewed on Monday and Tuesday, again without remembering either awakening. In either case, the experiment ends when she is awakened on Wednesday without being interviewed.

Whenever Sleeping Beauty is awakened and interviewed, she won’t know which day it is or whether she has been awakened before. During each awakening, she is asked: “What is your degree of certainty that the coin landed heads?” What should her answer be?

If you’re having trouble picturing the problem, Julia Galef explains it nicely in a video.

This problem has intuitively appealing solutions that are so entrenched that they have been given names: the thirder position and the halfer position. Before we review these, remember that in the Bayesian view of probability, the degree of subjective certainty is constantly updated by new knowledge. Thus, if we knew nothing about a coin toss except that the coin was fair, our subjective probability of it being heads would be one-half. But if a hundred reliable witnesses tell us that it was heads, or we see a video of the event, our subjective probability can change from one-half to one.

Let’s see how the thirders and halfers apply this notion to the above problem.

Thirders argue that in the universe of possibilities, there are three possible situations in which Sleeping Beauty could have been awakened, all indistinguishable to her: The coin could have come up heads and it is Monday, the coin could have come up tails and it is Monday, or the coin could have come up tails and it is Tuesday. Each of these is equally likely from her perspective, so the probability of each is one-third. Only one of the three involves heads, so her subjective probability that the coin came up heads is one-third.

“Not so fast!” cry the halfers. Since the coin was fair, the chance that it came up heads is one-half. Sleeping Beauty has received no new information about the result of the coin toss when she is woken up. So her subjective probability that the coin came up heads should continue to be one-half.
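One way to see what each camp is counting is a quick simulation. The Python sketch below is an illustration, not a resolution: it runs the experiment many times and reports the fraction of experiments in which the coin landed heads alongside the fraction of awakenings that follow a heads toss. The function name `simulate` and its parameters are our own, chosen for illustration.

```python
import random


def simulate(num_experiments, seed=0):
    """Run the Sleeping Beauty experiment repeatedly and tally two frequencies.

    Returns (fraction of experiments with heads,
             fraction of interview awakenings that follow heads).
    """
    rng = random.Random(seed)  # seeded so that repeated runs agree
    heads_experiments = 0
    heads_awakenings = 0
    total_awakenings = 0

    for _ in range(num_experiments):
        heads = rng.random() < 0.5       # fair coin
        awakenings = 1 if heads else 2   # heads: Monday only; tails: Monday and Tuesday
        total_awakenings += awakenings
        if heads:
            heads_experiments += 1
            heads_awakenings += 1        # the single Monday interview

    return (heads_experiments / num_experiments,
            heads_awakenings / total_awakenings)


per_experiment, per_awakening = simulate(100_000)
print(f"Heads per experiment: {per_experiment:.3f}")
print(f"Heads per awakening:  {per_awakening:.3f}")
```

Under these assumptions the first number settles near one-half and the second near one-third. The simulation does not say which of the two is the right reading of “degree of certainty”; that is exactly what halfers and thirders dispute.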

Think about both of these positions, and let us know what position the Necker cube of your mind lands on. Do add your thoughts to the animated debate on this question, which is already extensive, as a Google search will demonstrate. Below we present two variations on this classic problem. In these versions, you have to make a life-or-death decision based on what you believe.

But first, here’s a curious coincidence, the type that makes people go, “What are the chances of that?” On January 11, the Insights team decided to feature the Sleeping Beauty problem this month. The next day we discovered that January 12 is the birthday of Charles Perrault, the original author of the Sleeping Beauty story. Perrault was born in Paris on January 12, 1628. Feel free to weigh in on how often such surprising and rare coincidences have happened to you; I have expressed my views on this phenomenon in a suite of puzzles elsewhere.

OK, now it’s time to put your life on the line. Here are two new variations of the intriguing Sleeping Beauty problem, in a brilliant scenario created by Quanta reader eJ, which I felt needed to be better known and discussed. In both of these variations, you are Sleeping Beauty, and you are awakened based on the result of the coin toss and made to forget this fact, as before. However, the experiment is being performed by evil alien scientists who have kidnapped you, and they ask you to make what turns out to be a life-or-death choice. Now your subjective probability is not just a hypothetical concept: It actually affects your chances of coming out of the situation alive. The correct choice will maximize your chance of survival. Will you stick to your original conclusion, or will you let your mental Necker cube flip?

Variation 1:

Upon each awakening, Sleeping Beauty is presented with two bags of beans, marked “H” and “T.” She is instructed to reach into one bag, grab a single bean, and put it aside. At the end of the experiment, she will have to eat the bean or beans that she has pulled. She is told that the bags are filled with identical-looking jellybeans (J) or poisoned pills (K), as follows:

* If the coin came up heads, bag H has 7J, and bag T has 7K.

* If the coin came up tails, bag H has 1J and 6K, while bag T has 6J and 1K.

You are Sleeping Beauty. Which bag would you pick, and what are your chances of survival?
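If you want to check your arithmetic, here is a minimal Python sketch that computes exact survival chances for the two simplest strategies: always reaching into bag H, or always reaching into bag T. It rests on assumptions the puzzle does not spell out: that you follow the same rule at every awakening (you cannot remember earlier ones), that a bean set aside is not returned to its bag, and that you survive only if none of the beans you eat is poisoned. The names `BAGS`, `all_safe` and `survival` are hypothetical helpers, not part of eJ’s scenario.

```python
from fractions import Fraction

# Bag contents as (jellybeans, poisoned pills), keyed by coin result, then bag label.
BAGS = {
    "heads": {"H": (7, 0), "T": (0, 7)},
    "tails": {"H": (1, 6), "T": (6, 1)},
}

# Number of awakenings, and hence beans drawn, for each coin result.
AWAKENINGS = {"heads": 1, "tails": 2}


def all_safe(jelly, poison, draws):
    """Chance that `draws` beans taken without replacement are all jellybeans."""
    prob = Fraction(1)
    for _ in range(draws):
        if jelly == 0:
            return Fraction(0)  # no jellybeans left, so the next bean is poisoned
        prob *= Fraction(jelly, jelly + poison)
        jelly -= 1              # assumption: the drawn bean is not put back
    return prob


def survival(bag):
    """Overall survival chance when the same bag is chosen at every awakening."""
    total = Fraction(0)
    for coin in ("heads", "tails"):
        jelly, poison = BAGS[coin][bag]
        # The coin is fair, so each result contributes with weight one-half.
        total += Fraction(1, 2) * all_safe(jelly, poison, AWAKENINGS[coin])
    return total


for bag in ("H", "T"):
    print(f"Always pick bag {bag}: survival chance {survival(bag)}")
```

Strategies that choose a bag at random on each awakening are not covered here; they would need one extra term for each mixed pair of draws on tails.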

Variation 2:

You, as Sleeping Beauty, are told that you have to go through the original experiment (without the beans) every week for many months, and each awakening will be wiped from your memory. The evil chief scientist has determined that on your hundredth awakening in this series of experiments, you will be presented with the two bags of beans and instructed exactly as in Variation 1 above. If you pick a poisoned pill, you will die; otherwise, you will go free. Now which bag do you pick, and what are your chances of surviving?

Kudos to eJ for making the Sleeping Beauty scenario concrete in such an interesting fashion. They say the thought of death, even in imagination, focuses the mind like nothing else. Does it work that way for you? Happy puzzling, and may the insight be with you!

Editor’s note: The reader who submits the most interesting, creative or insightful solution (as judged by the columnist) in the comments section will receive a Quanta Magazine T-shirt. And if you’d like to suggest a favorite puzzle for a future Insights column, submit it as a comment below, clearly marked “NEW PUZZLE SUGGESTION” (it will not appear online, so solutions to the puzzle above should be submitted separately).

Note that we will hold comments for the first day to allow for independent contributions.

Update: The solution has been published here.