How to Tame Quantum Weirdness

Olena Shmahalo/Quanta Magazine

Introduction

Quantum mechanics is universally considered to be so weird that, as Niels Bohr quipped, “if you are not shocked by it, you don’t really understand it.” One of the most shocking phenomena predicted by quantum mechanics is quantum entanglement, which Einstein called “spooky action at a distance.” He thought a more complete theory could avoid it, but in 1964 John Bell showed that if the predictions of quantum mechanics are true, then spooky action at a distance must indeed take place, given certain reasonable assumptions. Last week, in her article “Experiment Reaffirms Quantum Weirdness,” Natalie Wolchover reported that physicists are closing the door on an intriguing loophole related to these assumptions. This “freedom of choice” loophole had offered die-hards a possible way to avoid believing in spooky action at a distance.

This month’s Insights puzzle takes on the shocking weirdness of the quantum realm as implied by Bell’s theorem. It uses familiar objects and phenomena to reason about quantum particles in an intuitive way that, in my view, gets rid of the weirdness or at least shoves it out of sight so that the results don’t seem so strange at all. Is a simple physical model of quantum mechanics possible? Perhaps! You be the judge.

But first, let’s review Bell’s theorem and introduce our puzzle:

Two students, A and B, who are polar opposites of each other, are gearing up to do a course on quantum mechanics. Thirty-seven days before the course (Day –37) they take a computer test consisting of 100 true/false questions. Every question that A answers as true, B answers as false, and vice versa — their answers are perfectly anti-correlated. At the start of the course (Day 0), the two take the same test again. Some of their answers are now different from what they were the first time, but they are still perfectly anti-correlated. Thirty-seven days later (Day +37), they take the same test for the third time. Again, some of their answers are different, but they are still perfectly anti-correlated.

You and a friend sit at separate computer terminals and compare the tests. You can bring up just one of A’s tests on your computer screen at any given time, while your friend can bring up just one of B’s. First, the two of you pull up the tests the students took on the same day, comparing A’s Day –37 test with B’s Day –37 test, and so on. Sure enough, they are all perfectly anti-correlated, with no matching answers at all. Next, you compare A’s Day 0 test with B’s Day –37 test. In this case, there are exactly 10 answers that match. Similarly, B’s Day 0 test has 10 answers that match those in A’s Day +37 test. Finally, you compare B’s Day –37 test with A’s Day +37 test. And here comes the surprise …

Question 1: What are the minimum and maximum numbers of matching answers you would expect for these two tests?

Question 2: If you found that there were 36 answers that matched, how would you explain it?

Question 3: Where do all the numbers in the above scenario (–37, 0, +37, 10 and 36) come from? (If you have no idea, read on for a hint.)

OK, what does all this have to do with Bell’s theorem? To quote Wolchover:

… when two particles interact, they can become “entangled,” shedding their individual probabilities and becoming components of a more complicated probability function that describes both particles together. This function might specify that two entangled photons are polarized in perpendicular directions, with some probability that photon A is vertically polarized and photon B is horizontally polarized, and some chance of the opposite. The two photons can travel light-years apart, but they remain linked: Measure photon A to be vertically polarized, and photon B instantaneously becomes horizontally polarized, even though B’s state was unspecified a moment earlier and no signal has had time to travel between them. This is the “spooky action” that Einstein was famously skeptical about in his arguments against the completeness of quantum mechanics in the 1930s and ’40s.

In 1964, the Northern Irish physicist John Bell found a way to put this paradoxical notion to the test. He showed that if particles have definite states even when no one is looking (a concept known as “realism”) and if indeed no signal travels faster than light (“locality”), then there is an upper limit to the amount of correlation that can be observed between the measured states of two particles. But experiments have shown time and again that entangled particles are more correlated than Bell’s upper limit, favoring the radical quantum worldview over local realism.

These experiments map directly to our puzzle. A and B’s same-day tests are the anti-correlated photons, and you and your friend are the experimenters. The days of the tests represent the angles, in degrees, of your respective polarizers. If the polarizers are at the same angle (same-day tests), the photons are 100 percent anti-correlated, just as the students are. Since the situations are isomorphic, we should be able to replicate the photon correlation results with the test correlation results — the situations should give identical numerical answers for all angles (days) under common-sense assumptions. These commonsense assumptions are: Completed tests with definite answers exist (realism), they cannot influence each other while the grading is being done (locality), and the examiners are free to compare any of A’s tests with any of B’s (freedom of choice). For polarizers at different angles, the quantum mechanical prediction, now experimentally well established, is that the correlation between them is given by the formula 1 – cos²(θ/2), where θ is the angle between the two polarizers. This innocent-looking correlation function cannot be achieved with the assumptions given above: The discrepancy is clearest if you take the value for a given angle (correlation between A and B’s tests taken a given number of days apart) and use it to calculate the maximum value for twice that angle (correlation between A and B’s tests taken twice the number of days apart) as we verified above. The correlation between the entangled photons is much higher than that possible between the students’ tests. This is an example of how quantum mechanical correlations for entangled particles breach what is known as “Bell’s inequality.”
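For readers who would like to play with the formula, here is a minimal sketch in Python. It assumes that "correlation" here means the fraction of results that match, which fits the way the puzzle counts matching answers out of 100. The function name `match_fraction` is made up for this illustration, and the sample angles are chosen only as sanity checks, not as hints.

```python
import math


def match_fraction(theta_deg: float) -> float:
    """Evaluate the article's formula 1 - cos^2(theta/2) for an angle in degrees.

    Assumption: the result is read as the fraction of matching results
    when the two polarizers differ by theta_deg.
    """
    half_angle = math.radians(theta_deg) / 2.0
    return 1.0 - math.cos(half_angle) ** 2


# Sanity checks at three easy angles.
for angle in (0, 90, 180):
    print(f"{angle:>3} degrees -> {match_fraction(angle):.2f}")
```

At 0 degrees the function returns 0, in line with the perfect anti-correlation of the same-day tests. At 90 degrees it returns one half, and at 180 degrees it returns 1. Plugging in the angles from the puzzle is left to you.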

Question 4: Using the above formula, what is the largest possible difference between the actual correlation for an angle 2θ and the maximum value calculated for 2θ from the given correlation for θ, under the three assumptions described above? At what angle between the polarizers does this largest possible difference take place?

If you have followed the above calculations diligently, you cannot escape the conclusion that the polarization of both photons (represented in the figure by a red or blue color) only takes on a unique value at the instant of, and through the act of, measurement itself. There is absolutely no way to explain the results using real-world objects, is there?

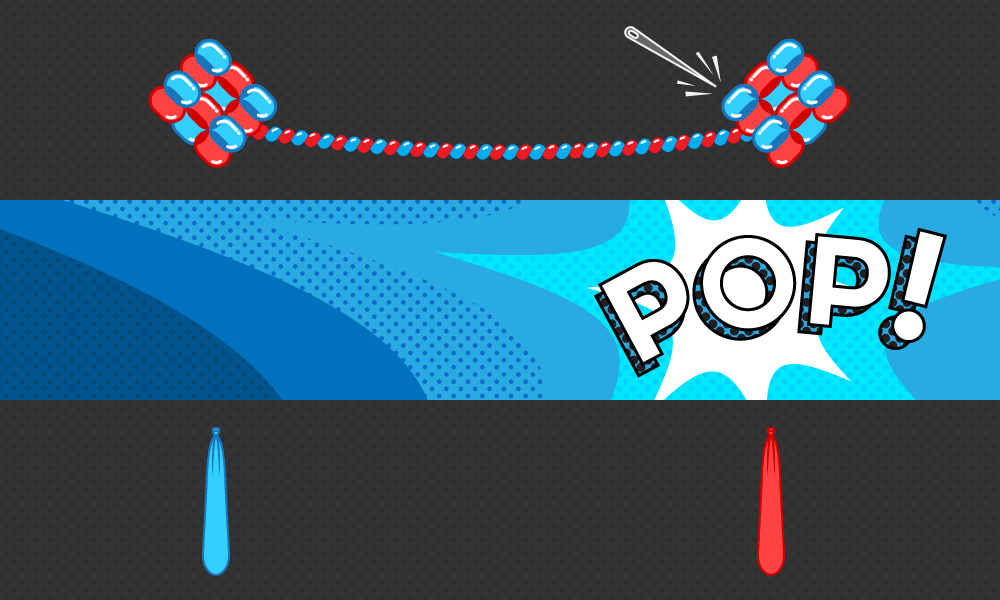

But wait a minute. Let’s consider just one qualitative aspect of the quantum weirdness — the idea that the quantum attributes of an entangled pair of quantum particles are chosen at random by the act of measurement, at the instant of measurement, at potentially widely dispersed points in space. What if you pictured the photons not as solid particles but as being similar to elongated “balloon animal” balloons as shown in the illustration at the top of the page? Imagine that the horizontally polarized photon balloon is a red balloon, and the vertically polarized photon is a blue one. In what follows, try not to focus on the mechanism of how this could be achieved with real balloons, but rather on how balloon-like objects would behave in this kind of set-up. When entangled photons supposedly rush off in opposite directions, imagine they are actually like elongating self-inflating balloons twisting tightly around each other, with each balloon projecting at the speed of light in both directions. Imagine that the balloons are rigged up (entangled) in such a way that they always deflate together and in opposite directions. Then each balloon will be accessible at both ends — if you blindly grasp one (make a measurement), you could come up with either. Imagine that when the measurement is made, it “spears” one of the two twisted balloons at random. This results in instant disentanglement and deflation of both balloons, and the non-speared one, now no longer anchored, snaps back to the opposite end (I have frequently, and painfully, experienced a similar phenomenon with balloons and rubber bands). It’s easy to see why the color of the balloon at one end turns out to be the opposite of the color at the other end. This easy-to-visualize model captures how the selection of attributes could happen only at the instant of measurement, in places widely dispersed.

What about the fact that the two measurements could potentially be carried out light-years apart? Wouldn’t there be tremendous lag between the results at either end? Well, when I say the balloons disentangle instantaneously, I mean instantaneously — the above-mentioned snapping back happens faster than the speed of light! The potentially infinite extension of the particles and their superluminal snapping back in this model, though, are not really a problem: These properties are implicit in the mathematics of quantum mechanics anyway. Quantum mechanics specifies that particles can have a finite amplitude to be everywhere in the universe, and wave function collapse (represented here by the superluminal snapping back) is internal to each particle and therefore cannot transmit information. This visualization thus hides away the weird aspects of quantum mechanics and does not break any laws.

I find elastic balloons or bubbles very useful for representing quantum particles. Anyone who has played with soap bubbles in a sink, or air bubbles trapped under a plastic sheet or a carpet, has seen how large bubbles can divide into myriads of “bubblets” that are all over the place, just like particle amplitudes. These bubblets can suddenly and unexpectedly coalesce into the original-size bubble at a completely different location, just like quantum particles. Imagine a two-slit experiment where a bubble splits into two equal-size wave-borne bubblets and goes through both slits, to suddenly coalesce, fully formed, at the instant and place where the measurement is made! It’s fully faithful to the quantum mechanical idea that each particle ultimately interferes only with itself. Maybe quantum particles are like dynamic subdividing, shape-shifting bubbles trapped within a plastic-sheet universe, taking on and revealing their individual attributes only when we probe them and force them to become whole at some location. Perhaps each particle is free to fractionate into millions of dispersed parts in its own private cosmic wormhole, until a measurement forces it to become whole at some particular location, chosen probabilistically.

For now, this idea of visualizing quantum objects by means of bubbles or elastic balloons is just a fun heuristic exercise. Can we use it to build a fully deterministic theory containing real, albeit strange, internally superluminal objects, while using completely traditional probabilities? I’d like to know what readers think. And if any of you possess the deep training and expertise in this field that would be required to create a full-fledged theory, and would like to collaborate, I’d love to hear from you. Happy puzzling!

Editor’s note: The reader who submits the most interesting, creative or insightful solution (as judged by the columnist) in the comments section will receive a Quanta Magazine T-shirt. And if you’d like to suggest a favorite puzzle for a future Insights column, submit it as a comment below, clearly marked “NEW PUZZLE SUGGESTION” (it will not appear online, so solutions to the puzzle above should be submitted separately).

Note that we may hold comments for the first day or two to allow for independent contributions by readers.

Update: The solution has been published here.