Data Compression Drives the Internet. Here’s How It Works.

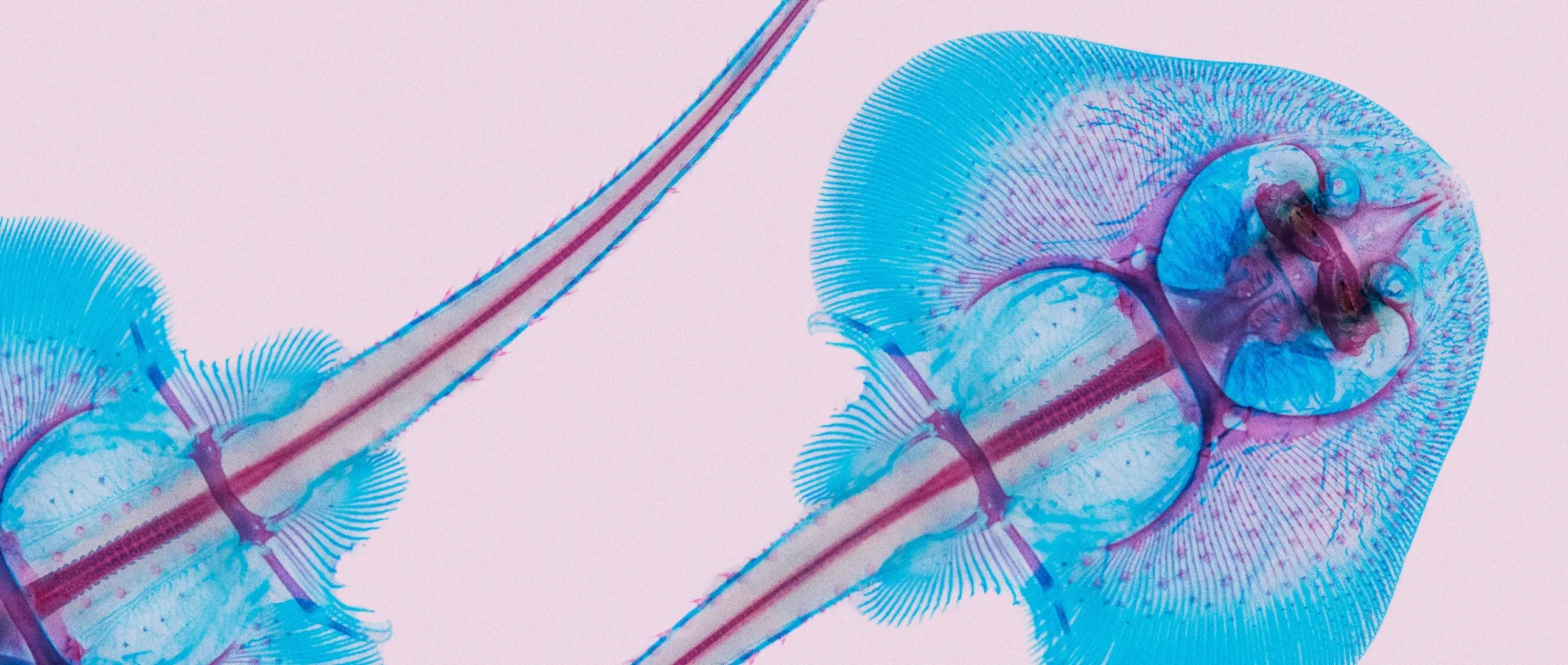

Kristina Armitage/Quanta Magazine


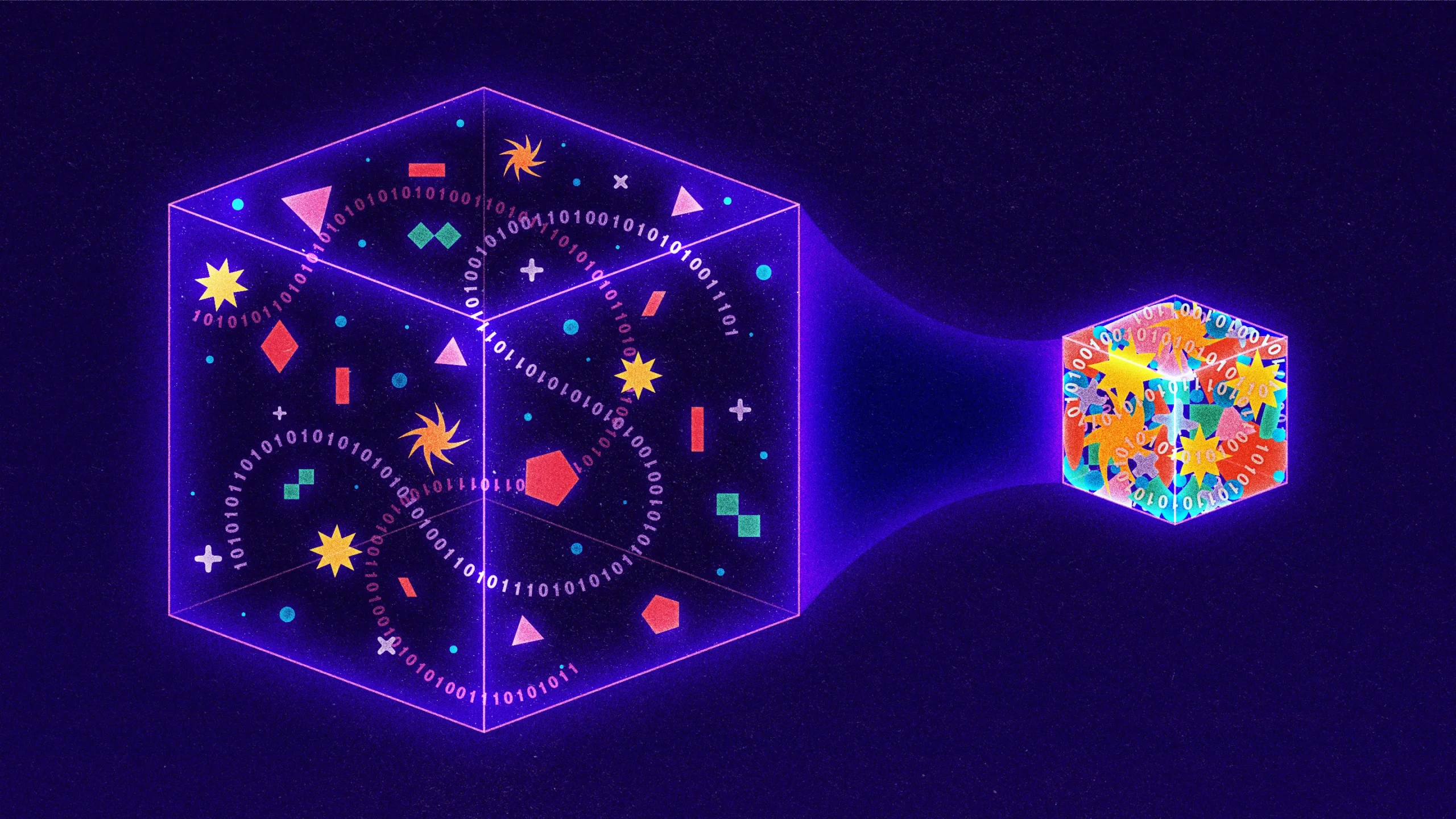

With more than 9 billion gigabytes of information traveling the internet every day, researchers are constantly looking for new ways to compress data into smaller packages. Cutting-edge techniques focus on lossy approaches, which achieve compression by intentionally “losing” information from a transmission. Google, for instance, recently unveiled a lossy strategy where the sending computer drops details from an image and the receiving computer uses artificial intelligence to guess the missing parts. Even Netflix uses a lossy approach, downgrading video quality whenever the company detects that a user is watching on a low-resolution device.

Very little research, by contrast, is currently being pursued on lossless strategies, where transmissions are made smaller, but no substance is sacrificed. The reason? Lossless approaches are already remarkably efficient. They power everything from the PNG image standard to the ubiquitous software utility PKZip. And it’s all because of a graduate student who was simply looking for a way out of a tough final exam.

Seventy years ago, a Massachusetts Institute of Technology professor named Robert Fano offered the students in his information theory class a choice: Take a traditional final exam, or improve a leading algorithm for data compression. Fano may or may not have informed his students that he was an author of that existing algorithm, or that he’d been hunting for an improvement for years. What we do know is that Fano offered his students the following challenge.

Consider a message made up of letters, numbers and punctuation. A straightforward way to encode such a message would be to assign each character a unique binary number. For instance, a computer might represent the letter A as 01000001 and an exclamation point as 00100001. This results in codes that are easy to parse — every eight digits, or bits, correspond to one unique character — but horribly inefficient, because the same number of binary digits is used for both common and uncommon entries. A better approach would be something like Morse code, where the frequent letter E is represented by just a single dot, whereas the less common Q requires the longer and more laborious dash-dash-dot-dash.
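Here is a short Python sketch of the fixed-width scheme, assuming plain ASCII input. Each character becomes its 8-bit code, and decoding just cuts the bit string into 8-bit slices. The function names are ours and are only illustrative.

```python
def fixed_width_bits(message):
    """Encode each character as its 8-bit ASCII code (assumes ASCII input)."""
    return "".join(format(ord(ch), "08b") for ch in message)

def decode_fixed_width(bits):
    """Every 8 bits is exactly one character, so no separators are needed."""
    return "".join(chr(int(bits[i:i + 8], 2)) for i in range(0, len(bits), 8))

print(fixed_width_bits("A"))           # 01000001
print(fixed_width_bits("!"))           # 00100001
print(len(fixed_width_bits("eeeee")))  # 40: a common letter costs as much as a rare one
```

Parsing is trivial, but five E's cost exactly as many bits as five Q's.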

Yet Morse code is inefficient, too. Sure, some codes are short and others are long. But because code lengths vary, messages in Morse code cannot be understood unless they include brief periods of silence between each character transmission. Indeed, without those costly pauses, recipients would have no way to distinguish the Morse message dash dot-dash-dot dot-dot dash dot (“trite”) from dash dot-dash-dot dot-dot-dash dot (“true”).
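You can check this ambiguity directly. The sketch below hard-codes Morse for only the five letters involved (a partial table, not the full alphabet). It then joins the symbols once with a separator and once without.

```python
# Partial Morse table covering only the letters in "trite" and "true".
MORSE = {"T": "-", "R": ".-.", "I": "..", "E": ".", "U": "..-"}

def to_morse(word, separator=" "):
    """Encode a word letter by letter; separator stands in for the pause."""
    return separator.join(MORSE[ch] for ch in word.upper())

print(to_morse("trite"))  # - .-. .. - .
print(to_morse("true"))   # - .-. ..- .
# Drop the pauses and the two words collapse into the same signal.
print(to_morse("trite", "") == to_morse("true", ""))  # True
```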

Fano had solved this part of the problem. He realized that he could use codes of varying lengths without needing costly spaces, as long as he never used the same pattern of digits as both a complete code and the start of another code. For instance, if the letter S was so common in a particular message that Fano assigned it the extremely short code 01, then no other letter in that message would be encoded with anything that started 01; codes like 010, 011 or 0101 would all be forbidden. As a result, the coded message could be read left to right, without any ambiguity. For example, with the letter S assigned 01, the letter A assigned 000, the letter M assigned 001, and the letter L assigned 1, suddenly the message 0100100011 can be immediately translated into the word “small” even though L is represented by one digit, S by two digits, and the other letters by three each.
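The sketch below, using the same four-letter code, shows why the prefix rule is enough. A decoder can read bits left to right and emit a letter the moment its buffer matches a complete code, with no lookahead and no pauses. The helper names are hypothetical.

```python
CODES = {"S": "01", "A": "000", "M": "001", "L": "1"}

def is_prefix_free(codes):
    """True if no code is the beginning of a different symbol's code."""
    words = list(codes.values())
    return not any(i != j and b.startswith(a)
                   for i, a in enumerate(words)
                   for j, b in enumerate(words))

def decode(bits, codes):
    lookup = {code: symbol for symbol, code in codes.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in lookup:  # a complete code: emit it immediately
            out.append(lookup[current])
            current = ""
    if current:
        raise ValueError("bits left over: " + current)
    return "".join(out)

print(is_prefix_free(CODES))         # True
print(decode("0100100011", CODES))   # SMALL
```

With a forbidden code such as 010 added alongside S = 01, `is_prefix_free` would return False. Greedy decoding would then be unsafe.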

To actually determine the codes, Fano built binary trees, placing each necessary letter at the end of a visual branch. Each letter’s code was then defined by the path from top to bottom. If the path branched to the left, Fano added a 0; right branches got a 1. The tree structure made it easy for Fano to avoid those undesirable overlaps: Once Fano placed a letter in the tree, that branch would end, meaning no future code could begin the same way.

A Fano tree for the message “encoded.” The letter D appears after a left then a right, so it’s coded as 01, while C is right-right-left, 110. Crucially, the branches all end once a letter is placed.
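Reading codes off a tree is a short recursive walk: append 0 for each left branch, append 1 for each right branch, and stop at a leaf. The nested-tuple tree below is a hypothetical reconstruction of the "encoded" figure. The caption fixes only D = 01 and C = 110, so the positions of E, N and O are our assumption.

```python
# Hypothetical "encoded" tree as (left, right) pairs; strings are leaves.
# Only D = 01 and C = 110 come from the caption; E, N, O are assumed.
TREE = (("E", "D"), ("N", ("C", "O")))

def codes_from_tree(node, path=""):
    if isinstance(node, str):          # a leaf: this branch ends here
        return {node: path}
    left, right = node
    codes = codes_from_tree(left, path + "0")          # left adds a 0
    codes.update(codes_from_tree(right, path + "1"))   # right adds a 1
    return codes

codes = codes_from_tree(TREE)
print(codes["D"], codes["C"])          # 01 110
bits = "".join(codes[ch] for ch in "ENCODED")
print(len(bits))                       # 16 bits, versus 56 at 8 bits per letter
```

Letters sit only at leaves, so no code can be the start of another. The tree shape enforces the prefix rule automatically.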

To decide which letters would go where, Fano could have exhaustively tested every possible pattern for maximum efficiency, but that would have been impractical. So instead he developed an approximation: For every message, he would organize the relevant letters by frequency and then assign letters to branches so that the letters on the left in any given branch pair were used in the message roughly the same number of times as the letters on the right. In this way, frequently used characters would end up on shorter, less dense branches. A small number of high-frequency letters would always balance out some larger number of lower-frequency ones.
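Here is a minimal sketch of this top-down balancing, in the style often called Shannon–Fano coding. It sorts letters by frequency, cuts the list where the left and right totals are closest, prefixes 0 and 1, and recurses. Breaking frequency ties alphabetically and taking the first of several equally good cuts are our assumptions. The exact codes can therefore differ from the article's figures even when the total length agrees.

```python
from collections import Counter

def fano_codes(message):
    """Top-down Fano coding: split the frequency-sorted letters into two
    groups with totals as equal as possible, prefix 0 / 1, and recurse.
    (A message with one distinct letter gets an empty code.)"""
    counts = Counter(message)
    # Most frequent first; ties broken alphabetically (our assumption).
    symbols = sorted(counts, key=lambda ch: (-counts[ch], ch))
    codes = {ch: "" for ch in symbols}

    def split(group):
        if len(group) < 2:
            return
        total = sum(counts[ch] for ch in group)
        best_index, best_gap, running = 1, total, 0
        for i in range(1, len(group)):
            running += counts[group[i - 1]]
            gap = abs(total - 2 * running)  # |left total - right total|
            if gap < best_gap:              # keep the first best cut
                best_index, best_gap = i, gap
        left, right = group[:best_index], group[best_index:]
        for ch in left:
            codes[ch] += "0"
        for ch in right:
            codes[ch] += "1"
        split(left)
        split(right)

    split(symbols)
    return codes

codes = fano_codes("bookkeeper")
print(codes["e"][0], codes["k"][0], codes["o"][0])  # 0 0 1
```

On "bookkeeper" the first cut puts E and K (5 uses) against O, B, P and R (5 uses), matching the balance described in the figure caption.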

The message “bookkeeper” has three E’s, two K’s, two O’s and one each of B, P and R. Fano’s symmetry is apparent throughout the tree. For example, the E and K together have a total frequency of 5, perfectly matching the combined frequency of the O, B, P and R.

The result was remarkably effective compression. But it was only an approximation; a better compression strategy had to exist. So Fano challenged his students to find it.

Fano had built his trees from the top down, maintaining as much symmetry as possible between paired branches. His student David Huffman flipped the process on its head, building the same types of trees but from the bottom up. Huffman’s insight was that, whatever else happens, in an efficient code the two least common characters should have the two longest codes. So Huffman identified the two least common characters, grouped them together as a branching pair, and then repeated the process, this time looking for the two least common entries from among the remaining characters and the pair he had just built.
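Huffman's bottom-up procedure fits naturally onto a priority queue. The sketch below uses Python's `heapq`. It repeatedly pops the two lightest entries, prefixes 0 to every code in one and 1 to every code in the other, and pushes the merged node back. Ties between equal weights are broken by insertion order (alphabetical here), which is our choice. Different tie-breaking yields different trees, but every Huffman tree for a message gives the same, minimal, total length.

```python
import heapq
from collections import Counter
from itertools import count

def huffman_codes(message):
    counts = Counter(message)
    tiebreak = count()  # keeps heap entries comparable when weights tie
    # Heap entries: (weight, tiebreak, {symbol: code built so far})
    heap = [(w, next(tiebreak), {ch: ""}) for ch, w in sorted(counts.items())]
    heapq.heapify(heap)
    if len(heap) == 1:  # one distinct symbol: give it a 1-bit code
        return {ch: "0" for ch in counts}
    while len(heap) > 1:
        w1, _, low = heapq.heappop(heap)   # least common entry
        w2, _, high = heapq.heappop(heap)  # next least common entry
        merged = {ch: "0" + code for ch, code in low.items()}
        merged.update({ch: "1" + code for ch, code in high.items()})
        heapq.heappush(heap, (w1 + w2, next(tiebreak), merged))
    return heap[0][2]

codes = huffman_codes("schoolroom")
print(sum(len(codes[ch]) for ch in "schoolroom"))  # 26
```

For "schoolroom" this sketch happens to pair C with H, L with M, and R with S, rather than the pairings chosen in the walkthrough. The total is still 26 bits.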

Consider a message where the Fano approach falters. In “schoolroom,” O appears four times, and S/C/H/L/R/M each appear once. Fano’s balancing approach starts by assigning the O and one other letter to the left branch, with the five total uses of those letters balancing out the five appearances of the remaining letters. The resulting message requires 27 bits.
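The 27 bits break down as follows. O and its partner sit two branches down, so each use costs 2 bits. The five once-used letters on the right must be cut two against three. The pair gets 3-bit codes, and the trio splits again into one 3-bit code and two 4-bit codes:

$$
\underbrace{4 \times 2}_{\text{O}} \;+\; \underbrace{1 \times 2}_{\text{O's partner}} \;+\; \underbrace{3 + 3 + 3 + 4 + 4}_{\text{remaining five letters}} \;=\; 8 + 2 + 17 \;=\; 27 \text{ bits}
$$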

Huffman, by contrast, starts with two of the uncommon letters — say, R and M — and groups them together, treating the pair like a single letter.

His updated frequency chart then offers him six choices: the O that appears four times, the new combined RM node that is functionally used twice, and the four single letters S, C, H and L. Huffman again picks the two least common options, matching (say) H with L.

The chart updates again: O still has a weight of 4, RM and HL now each have a weight of 2, and the letters S and C stand alone. Huffman continues from there, in each step grouping the two least frequent options and then updating both the tree and the frequency chart.
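From here the remaining merges are forced, up to ties. S and C, the last weight-1 entries, pair next. Two of the three weight-2 nodes then join into a weight-4 node. The leftover weight-2 node joins one of the two weight-4 entries to make 6, and the root joins 4 and 6. Each merge adds one bit to every occurrence of every letter beneath it, so the encoded length is simply the sum of the merged weights:

$$
\underbrace{2}_{\text{RM}} + \underbrace{2}_{\text{HL}} + \underbrace{2}_{\text{SC}} + \underbrace{4}_{\text{two pairs}} + \underbrace{6}_{\text{pair} + 4} + \underbrace{10}_{\text{root}} = 26 \text{ bits}
$$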

Ultimately, “schoolroom” becomes 11101111110000110110000101, shaving one bit off the Fano top-down approach.
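The article does not print the code table behind this string, so the table below is our reconstruction. It keeps the pairings from the walkthrough (R with M, H with L, S with C), places O one branch below the root, and decodes the 26 bits back to the word. The table and helper names are hypothetical.

```python
# Our reconstruction of a code table that reproduces the article's bit string;
# R/M, H/L and S/C are sibling pairs, and O sits one branch below the root.
SCHOOLROOM_CODES = {
    "o": "0",
    "r": "100", "m": "101",
    "h": "1100", "l": "1101",
    "s": "1110", "c": "1111",
}

def decode(bits, codes):
    lookup = {code: symbol for symbol, code in codes.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in lookup:  # prefix-free, so the first match is correct
            out.append(lookup[current])
            current = ""
    if current:
        raise ValueError("bits left over: " + current)
    return "".join(out)

bits = "11101111110000110110000101"
print(len(bits))                       # 26
print(decode(bits, SCHOOLROOM_CODES))  # schoolroom
```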

One bit may not sound like much, but even small savings grow enormously when scaled by billions of gigabytes.

Indeed, Huffman’s approach has turned out to be so powerful that, today, nearly every lossless compression strategy uses the Huffman insight in whole or in part. Need PKZip to compress a Word document? The first step involves yet another clever strategy for identifying repetition and thereby compressing message size, but the second step is to take the resulting compressed message and run it through the Huffman process.
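You can try this two-stage pipeline from Python's standard `zlib` module. It implements DEFLATE, the method ZIP archives commonly use, which pairs an LZ77 repetition-finding stage with Huffman coding. This sketch only runs the pipeline end to end. `zlib` does not expose the Huffman step on its own, and it wraps the DEFLATE data in a small header and checksum of its own.

```python
import zlib

# A deliberately repetitive message: the LZ77 stage replaces repeats with
# back-references, and the Huffman stage then codes what remains.
message = ("schoolroom bookkeeper " * 200).encode("ascii")

compressed = zlib.compress(message, 9)  # level 9 = strongest compression
restored = zlib.decompress(compressed)

print(len(message), len(compressed))
print(restored == message)  # True: lossless, every byte comes back
```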

Not bad for a project originally motivated by a graduate student’s desire to skip a final exam.

Correction: June 1, 2023

An earlier version of the story implied that the JPEG image compression standard is lossless. While the lossless Huffman algorithm is a part of the JPEG process, overall the standard is lossy.