Probability and Number Theory Collide — in a Moment

Kristina Armitage/Quanta Magazine

Introduction

Their ambitions were always high. When Will Sawin and Melanie Matchett Wood first started working together in the summer of 2020, they set out to rethink the key components of some of the most tantalizing conjectures in number theory. The subjects of their attention, class groups, are intimately related to basic questions about how arithmetic works when numbers are extended beyond the integers. Sawin, at Columbia University, and Wood, at Harvard, wanted to make predictions about structures that are even more general and mathematically intimidating than the class group.

Even before they finished formulating their predictions, in October they proved a new result that lets mathematicians apply one of the most useful tools of probability theory not only to class groups, but also to collections of numbers, networks, and many other mathematical objects.

“This is just going to be the foundational paper that everybody turns to when they start thinking about these problems,” said David Zureick-Brown, a mathematician at Emory University. “It doesn’t feel like you have to invent things by scratch anymore.”

A Class Act

A class group is an example of a structured mathematical set called a group. Groups include many familiar sets, like the integers. What makes the integers a group, rather than just a set of numbers, is that you can add its elements together and get another integer. In general, a set is a group if it comes with some operation that, like addition, combines two elements into a third element in a way that satisfies some basic requirements. For instance, there should be a version of zero, an element that doesn’t change any of the other ones.

The integers, which mathematicians usually call $latex \mathbb{Z}$, are infinite. But lots of groups have a finite number of elements. For instance, to make a group that has four elements, consider the set {0, 1, 2, 3}. Instead of performing regular addition, divide the sum of any two numbers by 4 and take the remainder. (Under these rules, 2 + 2 = 0, and 2 + 3 = 1.) This group is called $latex \mathbb{Z}/4\mathbb{Z}$.

In general, if you want to make a group with $latex n$ elements, you can take the numbers zero through n – 1 and consider the remainder when dividing by n. The resulting group is called $latex \mathbb{Z}/n\mathbb{Z}$, though this is not always the only group with n elements.

The class group appears when number theorists investigate the structure of numbers beyond the integers. To do this, they add new numbers to the integers, such as i (the square root of −1), $latex \sqrt{5}$, or even $latex \sqrt{–5}$.

“Things that we’re used to about numbers are no longer true in this context. Or at least, they’re not necessarily true,” said Jordan Ellenberg, a mathematician at the University of Wisconsin, Madison.

“That fixed list is kind of like the glasses that you put on, and the more groups that you’re willing to consider, the better your glasses are,” Melanie Matchett Wood said.

Kevin Grady/Harvard Radcliffe Institute

Specifically, factoring works differently in extensions of the integers. If you stick to just the integers, numbers can be factored into primes (numbers that can only be divided by themselves and 1) in only one way. For example, 6 is 2 × 3, and it cannot be factored into other prime numbers. This property is called unique factorization.

But if you add $latex \sqrt{–5}$ to your number system, you don’t have unique factorization anymore. You can factor 6 into primes two different ways. It’s still 2 × 3, but it’s also $latex (1 + \sqrt{–5})$ × $latex (1 – \sqrt{–5})$.

Class groups are created from such extensions to the integers. “Class groups are incredibly important,” Wood said. “And so it’s natural to wonder: What are they usually like?”

The size of the class group associated with any extension of the integers is a barometer for how much unique factorization breaks down. Though mathematicians have proved that class groups are always finite, figuring out their structure and size is complicated. That’s why in 1984, Henri Cohen and Hendrik Lenstra ventured some guesses. Their conjectures, now called the Cohen-Lenstra heuristics, concerned all the class groups that pop up when you add new square roots to the integers. If all those class groups were gathered together, Cohen and Lenstra suggested answers to questions like: What proportion of them contain the group $latex \mathbb{Z}/3\mathbb{Z}$? Or $latex \mathbb{Z}/7\mathbb{Z}$? Or some other known type of finite group?

Cohen and Lenstra nudged number theorists to consider not just isolated examples of class groups, but statistics that underlie class groups as a whole. Their predictions tapped into a vision of mathematics as a universe with patterns to be uncovered at every level.

Almost 40 years later, the Cohen-Lenstra heuristics are widely believed to be true, though nobody has come close to proving them. Their impact on mathematics has been palpable, said Nigel Boston, a professor emeritus at the University of Wisconsin, Madison. “What’s been discovered is this amazing web,” he said. “There’s this huge infrastructure of the way we think the world is put together.”

The Only Game in Town

Unable to tackle the heuristics directly, mathematicians came up with more tractable problems that they hoped would illuminate the situation. From that work, a useful set of quantities emerged that mathematicians began calling moments, after a term used in probability theory.

In probability, moments can help you work out the distributions behind random numbers. For example, consider the distribution of the daily high temperature on January 1 in New York City — the chances that on January 1 of next year, it will be 10 degrees Fahrenheit, or 40 degrees, or 70 or 120. All you have to work with is past data: a history of the daily high on January 1 every year since the beginning of recorded history.

If you calculate the average of these temperatures, you’ll learn a little, but not everything. An average high temperature of 40 degrees doesn’t tell you the chances that the temperature will be above 50 degrees or below 20.

But this changes if you’re given more information. Specifically, you might learn the average of the square of the temperature, a quantity known as the second moment of the distribution. (The average is the first moment.) Or you might learn the average of the cubes, which is known as the third moment, or the average of the fourth powers — the fourth moment.

By the 1920s, mathematicians had figured out that if moments in this series grow sufficiently slowly, then knowing all the moments lets you deduce that only one possible distribution has those moments. (Though this doesn’t necessarily let you directly calculate that distribution.)

“That’s really unintuitive,” Wood said. “If you think of a continuous distribution, it has some shape. It sort of feels like it has more than can just be captured in a sequence of numbers.”

Mathematicians interested in the Cohen-Lenstra heuristics figured out that, just as moments in probability theory could be wielded to get at a probability distribution, moments defined in a particular way for class groups can be a lens through which we can see their size and structure. Jacob Tsimerman, a mathematician at the University of Toronto, said he can’t imagine how the distribution of class group sizes could be directly calculated. Using moments, he said, is “more than easier. It’s the only game in town.”

This Magic Moment

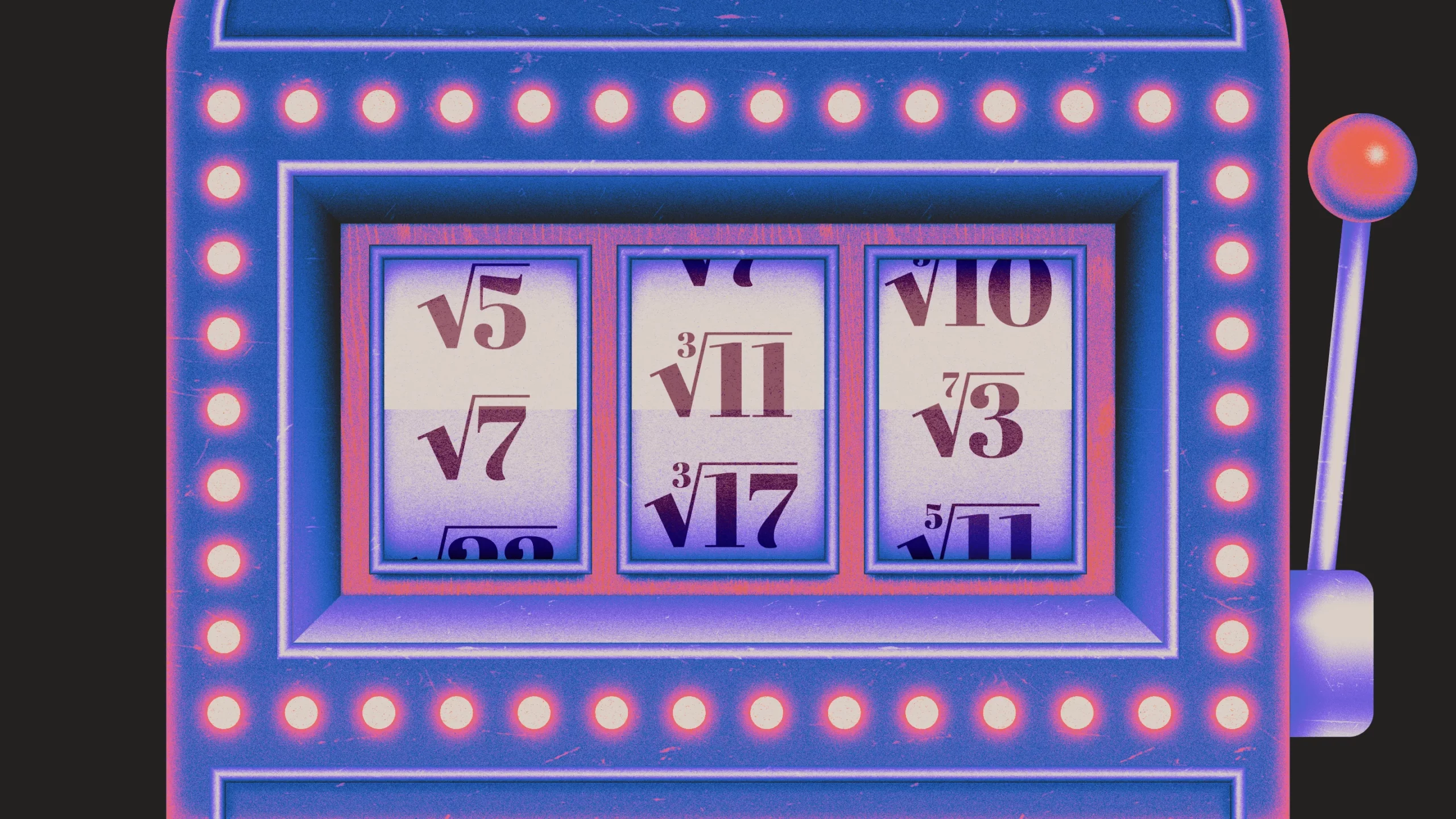

While each moment in probability is associated with an integer — the third power, the fourth power, and so on — the new quantities introduced by number theorists each correspond to a group. These new moments depend on the fact that you can often reduce a group to a smaller group by collapsing different elements together.

To calculate the moment associated with a group G, take all the possible class groups — one for each new square root you add to the integers. For each class group, count up the number of different ways you can collapse it into G. Then, take the average of those numbers. This process might seem convoluted, but it is far easier to work with than the actual distribution behind Cohen and Lenstra’s predictions. Though the Cohen-Lenstra heuristics themselves are complicated to state, the moments of the distribution they predict are all 1.

“That makes you think, wow, maybe the moments are the natural way to approach it,” Ellenberg said. “It seems more believable to be able to prove that something is equal to 1 than to prove that it’s equal to some crazy infinite product.”

“The ideas were there, but we just hadn’t found the right way to express them,” Will Sawin said.

Yihe Dong

When mathematicians study distributions over groups, (class groups or otherwise) they end up with an equation for each group G, with the probabilities now representing, say, the proportion of class groups that look like $latex \mathbb{Z}/3\mathbb{Z}$. With infinitely many equations, and infinitely many possible class groups, it’s tricky to solve for the probabilities. It’s not obvious that it even makes sense to do so.

“When you have infinite sums, things can go wrong,” Wood said.

Yet mathematicians, still unable to find other paths to studying the distributions, kept returning to the moment problem. In work published in the Annals of Mathematics in 2016, Ellenberg, along with Akshay Venkatesh and Craig Westerland, used moments to study the statistics of class groups in a slightly different setting than Cohen and Lenstra had considered. This idea was reused several times. But each time researchers used the moments, they would lean on the quirks of their particular problem to prove that the infinite set of equations had a solution. That meant their techniques were not transferable. The next mathematician who needed to use moments would have to solve the moment problem all over again.

At the start of their collaboration, Sawin and Wood also planned to go this route. They’d use moments to make predictions about how more complicated versions of class groups were distributed. But about a year into their project, they turned their focus to the moment problem itself.

Getting Sidetracked

Colleagues describe Sawin and Wood as unusually passionate about their work. “They’re both very smart. But there’s a lot of smart people,” Zureick-Brown said. “They just have this positive attitude toward doing mathematics.”

Initially, Sawin and Wood wanted to use moments to broaden the Cohen-Lenstra predictions to new settings. But they soon became dissatisfied with their moment problem argument. “We had the need to write similar arguments repeatedly,” Sawin recalled. Moreover, he added, the mathematical language they were using “didn’t seem to be getting at the heart of what the argument was doing…The ideas were there, but we just hadn’t found the right way to express them.”

Sawin and Wood dug deeper into their proof, trying to figure out what was really underneath it all. They ended up with a proof that solved the moment problem not just for their specific application, but for any distribution of groups — and for all kinds of other mathematical structures.

They split the problem up into small, manageable steps. Instead of trying to solve for the entire probability distribution in one go, they focused on only a small slice of the moments.

For example, to solve the moment problem for a probability distribution over groups, each moment would be associated with a group G. At first, Sawin and Wood would look at a system of equations that included only the moments for a restricted list of groups. They’d then slowly add groups to the list, looking at more and more moments each time. By incrementally making the problem more complex, they made each step into a solvable problem. Bit by bit, they built up to a full solution of the moment problem.

“That fixed list is kind of like the glasses that you put on, and the more groups that you’re willing to consider, the better your glasses are,” Wood explained.

When they finally dusted off the last of the extraneous details, they found themselves with an argument whose tendrils reached across mathematics. Their result worked for class groups, for groups associated with geometric shapes, for networks of dots and lines, as well as for other sets with more mathematical intricacy. In all of these situations, Sawin and Wood found a formula that takes in a set of moments and spits out the distribution that has those moments (so long as the moments don’t grow too quickly, among other requirements).

“It’s very much in Melanie’s style,” Ellenberg said. “To be like, ‘Let’s prove a very general theorem that handles a lot of different cases sort of uniformly and elegantly.’”

Sawin and Wood are now making their way back to their original goal. In early January, they shared a new paper that corrects erroneous Cohen-Lenstra predictions made in the late 1980s by Cohen and his colleague Jacques Martinet. Beyond that, they have still more results in their queue, with plans to expand the heuristics to even more new situations. “I don’t know whether this project will ever end,” Sawin said.

The moment problem that Sawin and Wood solved has been “sort of a thorn at the back of your head for a lot of different questions,” Tsimerman said. “I think a lot of mathematicians are going to breathe a sigh of relief.”