‘Magical’ Error Correction Scheme Proved Inherently Inefficient

Myriam Wares for Quanta Magazine

Introduction

If you’ve ever sent a text message, played a CD, or stored a file in the cloud, you’ve benefited from error correction. This revolutionary idea dates back to the 1940s, when researchers first realized that it’s possible to rewrite any message in a form that allows later corruption to be easily reversed.

Over the years, researchers have developed many ingenious schemes, called error-correcting codes, that encode data in different ways and use different procedures to fix errors. But to theoretical computer scientists, few are as compelling as so-called locally correctable codes. These codes have two simultaneous properties that sound almost contradictory: Any error can be corrected by reading the encoded data in just a few places, yet no attacker can foil this correction procedure by selectively tampering with the code. It’s as if you could recover any page torn out of a book by just glancing at a few others.

“It’s quite a magical phenomenon,” said Tom Gur, a computer scientist at the University of Cambridge. “A priori, it’s not obvious that such a mathematical object could exist at all.”

But this magic comes at a steep cost. The only known examples of locally correctable codes are extremely inefficient — encoding any message also makes it exponentially longer. Entire books encoded this way would be far too unwieldy.

Computer scientists have long wondered whether better locally correctable codes are possible. They’ve focused especially on codes that use only three queries to correct any error, hoping that this severe restriction might make these codes easier to understand. But even this simple case has stumped researchers for over 20 years.

Now the computer scientist Pravesh Kothari of Carnegie Mellon University and his graduate student Peter Manohar have finally proved that it’s impossible to build a three-query locally correctable code that avoids that exponential cost. It may be a negative result, but anything that clarifies the limits of error correction is exciting to researchers, especially because the mathematics of locally correctable codes crops up in areas far removed from communication.

“This result is amazing,” said Shubhangi Saraf, a computer scientist at the University of Toronto. “It’s a huge breakthrough.”

Strength in Numbers

To understand error correction, imagine the data you’d like to protect as a sequence of bits, or 0s and 1s. An error, in this model, can be any unwanted flip of a 0 into a 1 or vice versa, whether it’s due to a random fluctuation or deliberate tampering.

Suppose you want to send a message to a friend, but you’re concerned that errors might change the meaning. One simple strategy is to replace each 0 in your message with 000 and each 1 with 111. If your friend sees a part of the message that doesn’t contain three identical bits in a row, they’ll know that an error has occurred. And if errors are random and relatively rare, then any string of 110 is much more likely to be a corrupted 111 than a corrupted 000. A simple majority vote within each triplet will suffice to correct most errors.

This scheme, called the repetition code, has the virtue of simplicity, but little else to recommend it. For one thing, it requires tripling the length of every message just to deal with relatively infrequent errors, and if there’s a decent chance of two adjacent errors, we’ll need even more redundancy. Worse still, it quickly becomes useless if errors aren’t random, such as when attackers actively try to sabotage the code. In the repetition code, all the information needed to correct a given bit is stored in just a few other bits, leaving it vulnerable to a targeted attack.

Fortunately, many error-correcting codes fare better. One famous example, called the Reed-Solomon code, works by transforming messages into polynomials — mathematical expressions like x2 + 3x + 2 that consist of different terms added together, each with a variable (such as x) raised to a different power. Encoding a message using a Reed-Solomon code involves building a polynomial with one term for each character in the message, then plotting the polynomial as a curve on a graph and storing the coordinates of points that lie on the curve (taking at least one more point than the number of characters). Errors might push a few of these points off the curve, but if there aren’t too many errors, only one polynomial curve will pass through most of the points. That curve almost certainly corresponds to the true message.

Reed-Solomon codes are hyperefficient — you only need to store a few extra points to correct errors, so any encoded message is only marginally longer than the original. They’re also less vulnerable to the sort of targeted disruption that would spell disaster for the repetition code, because the information used to correct an error anywhere is distributed across the entire encoded message.

Think Globally, Act Locally

The strength of the Reed-Solomon code stems from interconnectedness. But precisely because of that interconnectedness, there’s no way to fix a single error in an encoded message without reading the whole thing. That may not sound like a problem in the context of communication: If you’re sending a message, you probably want the recipient to read all of it. But it can be a liability in data storage — another major application of error correction.

Consider a company that stores users’ emails in the cloud — that is, on a vast array of servers. You can think of the whole collection of emails as one long message. Now suppose one server crashes. With a Reed-Solomon code, you’d need to perform a massive computation involving all the encoded data to recover your emails from that one lost server. “You would have to look at everything,” said Zeev Dvir, a computer scientist at Princeton University. “That could be billions and billions of emails — it could take a really long time.”

Researchers use the term “local” to describe codes that use only a fraction of the encoded message to spot errors or correct them. The simple repetition code has something of this local character, but that’s precisely what makes it so vulnerable to tampering. A locally correctable code, by contrast, gets the best of both worlds — it can correct an error in any bit with just a few queries, all without losing the interconnectedness that makes Reed-Solomon codes so resilient.

“This is a really stringent notion,” Kothari said.

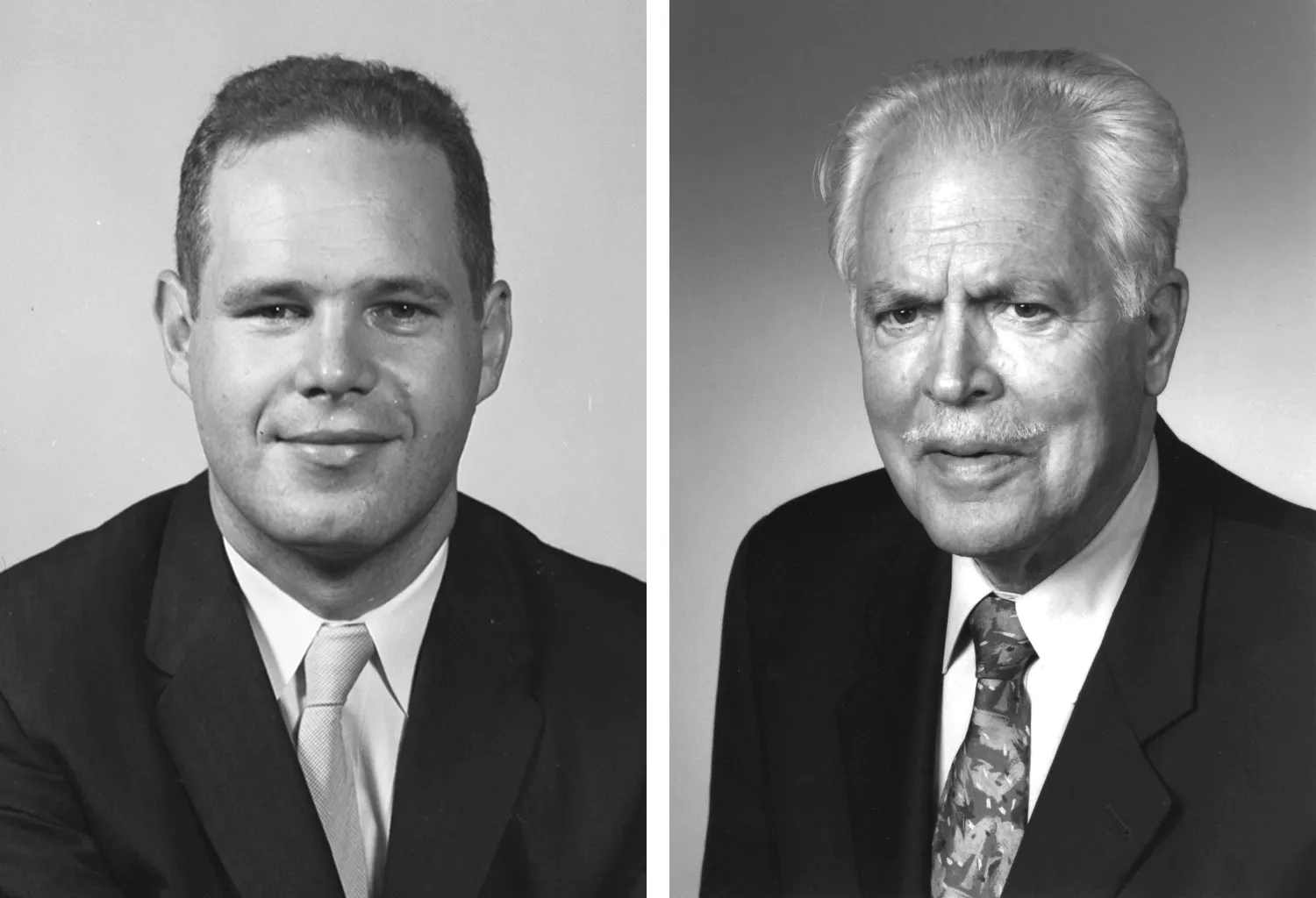

In 1954, David E. Muller (left) and Irving S. Reed invented a way to encode data that makes it possible to fix errors with very little information — at the cost of making messages much longer.

Courtesy of the University of Illinois Archives (left); Courtesy of IEEE AESS

The most famous examples of locally correctable codes are versions of a venerable error-correcting code invented in 1954 by the mathematicians David Muller and Irving Reed (who also helped develop Reed-Solomon codes). Like Reed-Solomon codes, Reed-Muller codes use polynomials with many terms added together to encode long messages.

The polynomials used in Reed-Solomon codes involve a single variable, x, so the only way to add more terms is to use higher powers of x. This results in a curve with many wiggles that can only be pinned down by looking at many points. Reed-Muller codes instead use polynomials in which each term can contain multiple variables multiplied together. More variables mean more ways to combine them, which in turn offers a way to increase the number of polynomial terms without raising any individual variable to such high powers.

Reed-Muller codes are very flexible. You can encode longer messages by increasing the highest power that appears in the polynomial, increasing the number of variables, or both. To make a Reed-Muller code locally correctable, you simply cap the maximum power of each variable at a small constant value, and handle longer messages by increasing only the number of variables.

For a three-query locally correctable code specifically, that maximum power is set at 2. Then as far as each individual variable is concerned, the polynomial encoding the message traces out a simple parabola. To determine the exact shape of that parabola, you only need to examine the curve at three points. What’s more, with many variables there are many such parabolas, any of which can be used to correct errors. That’s what makes Reed-Muller codes so resilient.

Merrill Sherman/Quanta Magazine

Unfortunately, the Reed-Muller code has a serious drawback: The number of bits required to encode a message increases exponentially with the number of variables. If you want a highly local code that corrects errors with just a handful of queries, you’ll need a lot of variables for long messages, and the Reed-Muller code will quickly become useless in practice.

“Exponential in this case is very bad,” Dvir said. But is it inevitable?

Correctable or Decodable?

As computer scientists tried and failed to find more efficient locally correctable codes, they began to suspect that such codes weren’t possible at all. In 2003, two researchers proved that there’s no way to beat the Reed-Muller code using only two queries. But that’s as far as anybody got.

“Once you go to three, our knowledge becomes very sketchy,” Kothari said.

The next breakthrough just complicated matters further. In two papers published in 2008 and 2009, the computer scientists Sergey Yekhanin and Klim Efremenko showed how to construct three-query codes that were more efficient than Reed-Muller codes, but these codes were not quite locally correctable. Instead, they had a subtly different property called local decodability.

To understand the difference, let’s again imagine a cloud storage provider that combines users’ data into one long message and protects it using an error-correcting code. Both locally correctable codes and locally decodable codes can correct an error in any bit of the original message with just a few queries.

But every error-correcting code also requires extra bits that weren’t in the original message — that’s why encoding a message makes it longer. The two types of codes differ in how they treat these additional bits. Locally decodable codes make no promises about the number of queries needed to correct errors in these bits. But in a locally correctable code, an error in any of the extra bits can be fixed in precisely the same way as an error in any bit of the original message.

“Everything that you store, whether it’s the original data of users or the redundancy and the check information — all of this can be locally corrected,” said Madhu Sudan, a computer scientist at Harvard University.

Though different in principle, local correctability and local decodability always seemed interchangeable in practice before 2008 — every known locally decodable code was also locally correctable. Yekhanin and Efremenko’s discovery raised the possibility of a fundamental difference between the two conditions. Or perhaps it was possible to modify Yekhanin and Efremenko’s codes to make them locally correctable. That would put the two conditions on equal footing once more, but it would also mean that researchers had been mistaken about how efficient three-query locally correctable codes could get. Either way, conventional wisdom would have to change.

Borrowing Logic

Kothari and Manohar finally resolved that tension by adapting a technique from a different area of computer science: the study of so-called constraint satisfaction problems. Trying to coordinate dinner plans with a group of friends is a constraint satisfaction problem of a sort. Everyone has choices they’ll accept and choices they’ll veto. Your job is to either find a plan that satisfies everybody, or, if there is no such plan, figure that out as soon as possible.

Peter Manohar realized that the new technique he and Kothari had developed would be especially well suited to studying the limits of locally correctable codes.

Sean Means

There’s an inherent asymmetry between those two possible outcomes. An acceptable solution may not be easy to find, but once you have it, it’s easy to convince anyone else that it will work. But even if you know that the problem really is “unsatisfiable,” there may not be an example that provides proof.

In 2021, Kothari and Manohar, together with Venkatesan Guruswami of the University of California, Berkeley, made a major breakthrough in the study of constraint satisfaction problems using a new theoretical technique for identifying those tricky unsatisfiable cases. They suspected that the new method would be a powerful tool for solving other problems too, and Guruswami’s graduate student Omar Alrabiah suggested that they look at three-query locally decodable codes.

“This was a nail with a hammer in our hand, so to speak,” Kothari said.

Yekhanin and Efremenko’s surprising results had shown that three-query locally decodable codes could be more efficient than Reed-Muller codes. But were their codes the best ones possible, or could three-query locally decodable codes get even more efficient? Kothari, Manohar, Guruswami and Alrabiah thought their new technique might be able to prove limits on how efficient such codes could get. Their plan was to build a logical formula encompassing the structure of all possible three-query locally decodable codes of a given size, and prove it unsatisfiable, thereby showing that no such code could exist.

The four researchers took a first step in that direction in 2022, setting a new limit on the maximum efficiency of three-query locally decodable codes. The result went well beyond what researchers had been able to achieve with other techniques, but it didn’t rule out all codes more efficient than Yekhanin and Efremenko’s.

Kothari and Manohar suspected they could go further. But progress stalled until Manohar jotted down a quick back-of-the-envelope calculation indicating that the technique might work even better for locally correctable codes than it had for locally decodable ones.

A few months later, after many more false starts that made them fear they’d been too optimistic, the technique finally delivered on its promise. Kothari and Manohar proved that as researchers had suspected, it’s impossible for any three-query locally correctable code to work appreciably better than Reed-Muller codes. That exponential scaling is a fundamental limitation. Their result was also a dramatic demonstration that local correctability and local decodability, though superficially similar, really do differ at a fundamental level: The latter is unequivocally easier to realize than the former.

Kothari and Manohar now hope to extend their techniques to study codes that are allowed to make more than three queries, as very little is known about them now. And progress in the theory of error correction often has implications in other seemingly unrelated fields. In particular, locally correctable codes make surprise appearances everywhere from the problem of private database searches in cryptography to proofs of theorems in combinatorics. It’s too early to say how Kothari and Manohar’s technique will impact these different fields, but researchers are feeling optimistic.

“There’s a really beautiful new idea here,” Dvir said. “I think there’s a lot of potential.”