‘A-Team’ of Math Proves a Critical Link Between Addition and Sets

A newly proved theorem about long strings of 0s and 1s unlocks a connection between addition and algebraic structure.

Samuel Velasco/Quanta Magazine

Introduction

In a randomly chosen set of numbers, addition can go wild.

Add together every pair from such a set, and you’ll end up with a new list that’s likely to have a lot more numbers than what you started with. Start with 10 random numbers, and this new list (called the sumset) will have about 50 elements. Start with 100 and the sumset will probably have around 5,000; 1,000 random initial numbers will make a sumset 500,000 numbers long.

But if your initial set has structure, the sumset can end up with fewer numbers than this. Consider another 10-number set: all the even numbers from 2 to 20. Because different pairs will add up to the same number — 10 + 12 is the same as 8 + 14 and 6 + 16 — the sumset has just 19 numbers, not 50. This difference becomes more and more profound as the sets get larger. A structured list of 1,000 numbers might have a sumset with just 2,000 numbers in it.

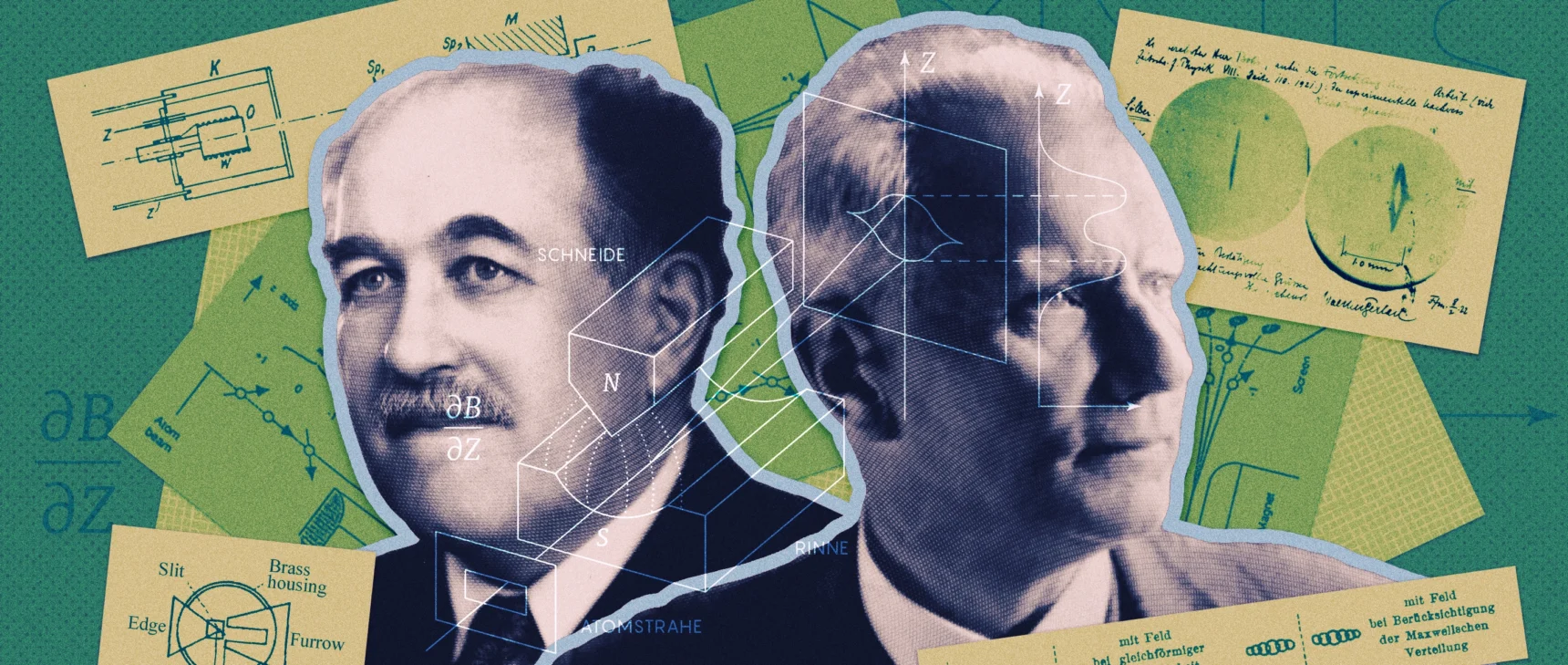

In the 1960s, a mathematician named Gregory Freiman began investigating sets with small sumsets in an effort to probe the link between addition and set structure — a crucial connection that defines the mathematical field of additive combinatorics. Freiman made impressive progress, proving that a set with a small sumset must be contained by a larger set whose elements are spaced in a highly regular pattern. But then the field stagnated. “Freiman’s original proof was extraordinarily hard to understand, to the point where nobody really was absolutely sure that it was correct. So it didn’t really have the impact that it might have had,” said Timothy Gowers, a mathematician at the Collège de France and the University of Cambridge and a Fields medalist. “But then Imre Ruzsa burst onto the scene.”

In a series of two papers in the 1990s, Ruzsa re-proved Freiman’s theorem with an elegant new argument. A few years later, Katalin Marton, an influential Hungarian mathematician who died in 2019, tweaked the question of what a small sumset implies about the structure of the original set. She replaced the types of elements that appeared in the set and the type of structure that mathematicians should look for, thinking that would allow mathematicians to extract even more information. Marton’s conjecture has links to proof systems, coding theory and cryptography, and occupies an exalted place in additive combinatorics.

Her conjecture “feels like really one of the most basic things that we didn’t understand,” said Ben Green, a mathematician at the University of Oxford. It “just sort of underpinned lots of things that I care about.”

Green joined forces with Gowers, Freddie Manners of the University of California, San Diego, and Terence Tao, a Fields medalist at the University of California, Los Angeles to form what the Israeli mathematician and blogger Gil Kalai called an “A-team” of mathematicians. They proved a version of the conjecture in a paper shared on November 9.

Nets Katz, a mathematician at Rice University who was not involved in the work, describes the proof as “beautifully straightforward” — and “more or less completely out of the blue.”

Tao then kicked off an effort to formalize the proof in Lean, a programming language that helps mathematicians verify theorems. In just a few weeks, that effort succeeded. Early Tuesday morning of December 5, Tao announced that Lean had proved the conjecture without any “sorrys” — the standard statement that appears when the computer can’t verify a certain step. This is the highest-profile use of such verification tools since 2021, and marks an inflection point in the ways mathematicians write proofs in terms a computer can understand. If these tools become easy enough for mathematicians to use, they might be able to substitute for the often prolonged and onerous peer review process, said Gowers.

The ingredients of the proof had been simmering for decades. Gowers conceived of its first steps in the early 2000s. But it took 20 years to prove what Kalai called “a holy grail” of the field.

The In-Group

To understand Marton’s conjecture, it helps to be familiar with the concept of a group, a mathematical object that consists of a set and an operation. Think of the integers — an infinite set of numbers — and the operation of addition. Every time you add two integers together, you get another integer. Addition also obeys a few other rules of group operations, like associativity, which lets you change the order of operations: 3 + (5 + 2) = (3 + 5) + 2.

Within a group, you can sometimes find smaller sets that satisfy all the group properties. For example, if you add any two even numbers, you’ll get another even number. The even numbers are a group unto themselves, making them a subgroup of the integers. The odd numbers, by contrast, are not a subgroup. If you add up two odd numbers, you get an even number — not in the original set. But you can get all the odd numbers by simply adding 1 to every even number. A shifted subgroup like this is called a coset. It doesn’t have all the properties of a subgroup, but it retains the structure of its subgroup in many ways. For example, just like the even numbers, the odd numbers are all evenly spaced.

Timothy Gowers came up with ideas about how to prove Marton’s conjecture decades ago; they became central to the new proof.

Patrick Imbert/Collège de France

Marton supposed that if you have a set that we’ll call A made up of group elements whose sumset is not all that much bigger than A itself, then there exists some subgroup — call it G — with a special property. Shift G a few times to make cosets, and those cosets will, taken together, contain the original set A. Moreover, she supposed that the number of cosets doesn’t grow much faster than the size of the sumset — she believed it should be related by a polynomial factor, as opposed to much quicker exponential growth.

This might sound like a highly technical curiosity. But because it relates a simple test — what happens when you add just two elements in the set? — to the overarching structure of a subgroup, it is very important to mathematicians and computer scientists. The same general idea shows up when computer scientists try to encrypt messages so that you can decode just a bit of the message at time. It also appears in probabilistically checkable proofs, a form of proof which computer scientists can verify by checking only a few isolated bits of information. In each of these cases, you work with just a couple of points in a structure — decoding just a few bits from a long message, or verifying a small portion of a complicated proof — and conclude something about a larger, higher-level structure.

“You can either pretend everything is a large subset of a group,” said Tom Sanders, a former student of Gowers who is now a colleague of Green’s at Oxford, or you can, “get everything you wanted from the existence of many additive coincidences. Both of these perspectives are useful.”

Ruzsa published Marton’s conjecture in 1999, giving her full credit. “She came to that conjecture independently of me and Freiman, and probably before us,” he said. “That’s why, when I talked to her, I decided to call it her conjecture.” Still, mathematicians now refer to it as the polynomial Freiman-Ruzsa conjecture, or PFR.

Zeros and Ones

Groups, like many mathematical objects, take lots of different forms. Marton supposed that her conjecture is true for all groups. This has yet to be shown. The new paper proves it for a particular kind of group, which takes as its elements lists of binary numbers like (0, 1, 1, 1, 0). Because computers work in binary, this group is crucial in computer science. But it’s also been useful in additive combinatorics. “It’s like this toy setting in which you can kind of play around and try things,” Sanders said. “The algebra is much, much nicer” than working with whole numbers, he added.

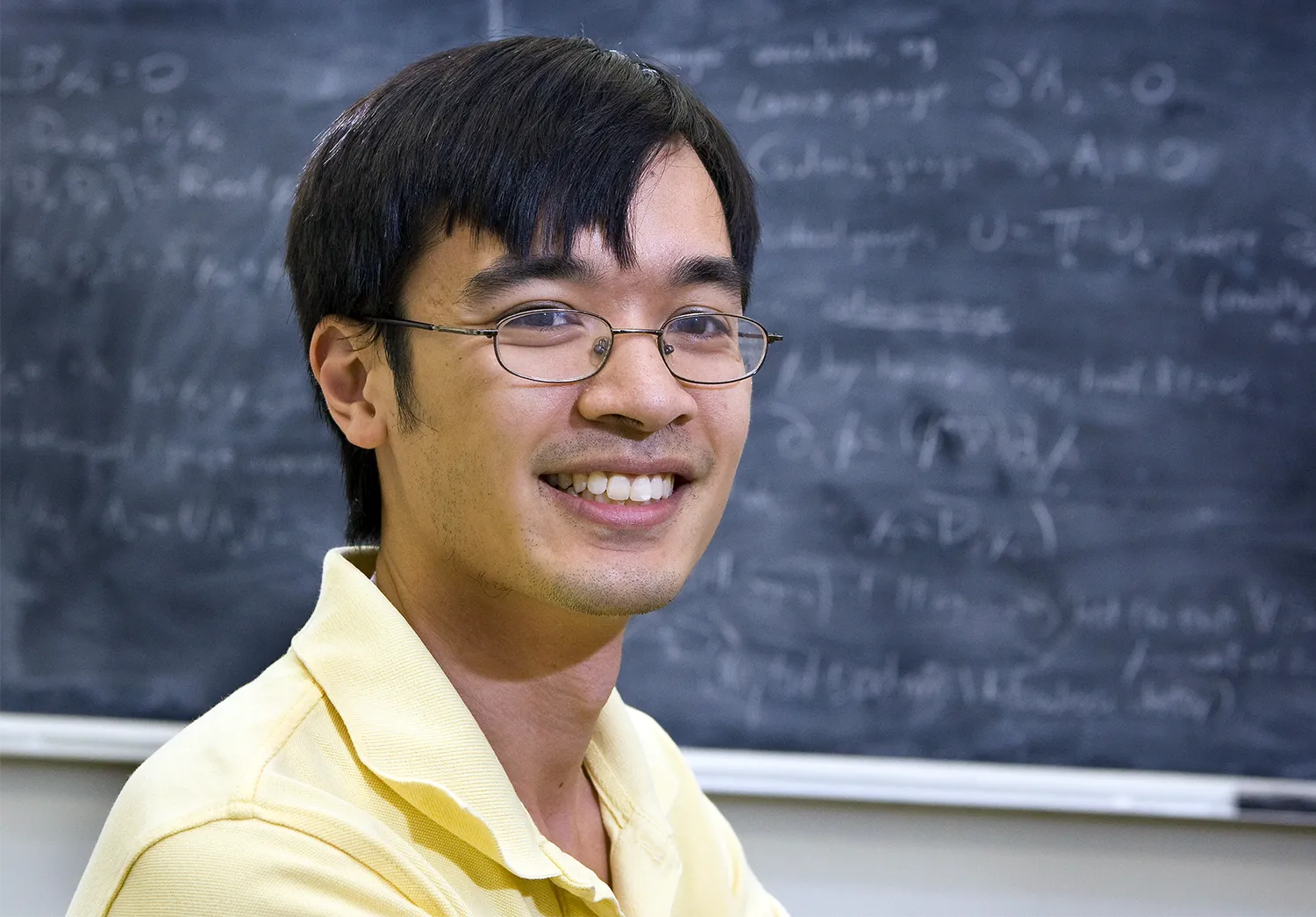

Terence Tao led a collaborative effort to formalize the proof using the Lean proof assistant. It took just three weeks.

Reed Hutchinson

The lists have fixed lengths, and each bit can be either 0 or 1. You add them together by adding each entry to its counterpart in another list, with the rule that 1 + 1 = 0. So (0, 1, 1, 1, 0) + (1, 1, 1, 1, 1) = (1, 0, 0, 0, 1). PFR is an attempt to figure out what a set can look like if it isn’t quite a subgroup but has some grouplike features.

To make PFR precise, imagine you have a set of binary lists called A. Now take every pair of elements from A and add them up. The resulting sums make up the sumset of A, called A + A. If the elements of A are chosen randomly, then most of the sums are different from each other. If there are k elements in A, that means there will be around k2/2 elements in the sumset. When k is large — say, 1,000 — k2/2 is a lot bigger than k. But if A is a subgroup, every element of A + A is in A, meaning that A + A is the same size as A itself.

PFR considers sets that are not random, but are not subgroups either. In these sets, the number of elements in A + A is somewhat small — say, 10k, or 100k. “It’s really useful when your notion of structure is much more rich than just being an exact algebraic structure,” said Shachar Lovett, a computer scientist at the University of California, San Diego.

All the sets mathematicians knew of that obeyed this property “are pretty close to actual subgroups,” Tao said. “That was the intuition, that there weren’t any other sort of fake groups lying around.” Freiman had proved a version of this statement in his original work. In 1999, Ruzsa extended Freiman’s theorem from the integers to the setting of binary lists. He proved that when the number of elements in A + A is a constant multiple of the size of A, A is contained within a subgroup.

But Ruzsa’s theorem required the subgroup to be enormous. Marton’s insight was to posit that rather than being contained in one giant subgroup, A could be contained in a polynomial number of cosets of a subgroup that is no bigger than the original set A.

‘I Know a Real Idea When I See a Real Idea’

Around the turn of the millennium, Gowers came across Ruzsa’s proofs of Freiman’s theorem while studying a different problem about sets containing strings of evenly spaced numbers. “I needed something like this, kind of getting structural information from much looser information about a certain set,” Gowers said. “I was very fortunate that not that long before, Ruzsa produced this absolutely gorgeous proof.”

Freddie Manners and his collaborators had the outlines of a proof a few months ago, but had to push to finalize it. “You want to publish this great result and tell the world, but you do actually still have to write your midterms,” he said.

UCSD

Gowers began to work out a potential proof of the polynomial version of the conjecture. His idea was to start with a set A whose sumset was relatively small, then gradually manipulate A into a subgroup. If he could prove that the resulting subgroup was similar to the original set A, he could easily conclude the conjecture was true. Gowers shared his ideas with colleagues, but no one could mold them into a full proof. Though Gowers’ strategy was successful in some cases, in others the manipulations took A further away from the desired conclusion of the polynomial Freiman-Ruzsa conjecture.

Eventually, the field moved on. In 2012, Sanders almost proved PFR. But the number of shifted subgroups he needed was above the polynomial level, though only by a little bit. “Once he did that, it meant that it became a less urgent thing, but still a really nice problem for which I have a great fondness,” Gowers said.

But Gowers’ ideas lived on in his colleagues’ memories and hard drives. “There’s a real idea there,” said Green, who had also been a student of Gowers. “I know a real idea when I see a real idea.” This summer, Green, Manners and Tao finally liberated Gowers’ ideas from their purgatory.

Green, Tao and Manners were 37 pages deep in collaboration before they thought to return to Gowers’ 20-year-old ideas. In a June 23 paper, they had successfully used a concept from probability theory called random variables to probe the structure of sets with small sumsets. By making this switch, the group could manipulate their sets with more finesse. “Dealing with random variables is somehow much less rigid than dealing with sets,” Manners said. With a random variable, “I can tweak one of the probabilities by a small amount, and that might give me a better random variable.”

Using this probabilistic perspective, Green, Manners and Tao could move from working with the number of elements in a set to a measurement of the information contained in a random variable, a quantity called entropy. Entropy was not new to additive combinatorics. In fact, Tao had attempted to popularize the concept in the late 2000s. But nobody had yet tried to use it on the polynomial Freiman-Ruzsa conjecture. Green, Manners and Tao discovered it was powerful. But they still couldn’t prove the conjecture.

Marton’s conjecture “feels like really one of the most basic things that we didn’t understand,” said Ben Green.

A. K. Purkiss

As the group chewed over their new results, they realized that they’d finally built an environment in which Gowers’ dormant ideas could flourish. If they measured the size of a set using its entropy, rather than its number of elements, the technical details might work out much better. “At some point we realized that these old ideas from Tim from 20 years ago were actually more likely to work than the ones we were trying,” Tao said. “And so we brought Tim back into the project. And then all the pieces fit together surprisingly nicely.”

Still, there were many details to figure out before a proof came together. “It was sort of foolish that all four of us were incredibly busy with other things,” Manners said. “You want to publish this great result and tell the world, but you do actually still have to write your midterms.” Eventually, the group pushed through, and on November 9, they posted their paper. They proved that if A + A is no bigger than k times the size of A, then A can be covered by no more than about k12 shifts of a subgroup that is no bigger than A. This is a potentially enormous number of shifts. But it is a polynomial, so it doesn’t grow exponentially faster as k gets bigger, as it would if k were in the exponent.

A few days later, Tao began to formalize the proof. He ran the formalization project collaboratively, using the version-control package GitHub to coordinate contributions from 25 volunteers around the world. They used a tool called Blueprint developed by Patrick Massot, a mathematician at Paris-Saclay University, to organize the efforts to translate from what Tao called “Mathematical English” into computer code. Blueprint can, among other things, create a chart depicting the various logical steps involved in the proof. Once all the bubbles were covered in what Tao called a “lovely shade of green,” the team was done. They discovered a few very minor typos in the paper — in an online message, Tao noted that “the most mathematically interesting portions of the project were relatively straightforward to formalize, but it was the technical ‘obvious’ steps that took the longest.”

Marton died just a few years before her famous conjecture was proved, but the proof echoes her life’s work on entropy and information theory. “Everything works much better when you do it in this entropy framework than in the framework I was trying to do it,” Gowers said. “To me, it still seems somewhat magical.”

Correction: December 13, 2023

A link in this story to Imre Ruzsa directed to a webpage not about the mathematician, but about a philosopher of the same name. That link has been corrected.