New Algorithm Closes Quantum Supremacy Window

In random circuit sampling, researchers take quantum bits and randomly manipulate them. A new paper explores how errors in quantum computers can multiply to thwart these efforts.

Kristina Armitage and Merrill Sherman/Quanta Magazine

Introduction

In what specific cases do quantum computers surpass their classical counterparts? That’s a hard question to answer, in part because today’s quantum computers are finicky things, plagued with errors that can pile up and spoil their calculations.

By one measure, of course, they’ve already done it. In 2019, physicists at Google announced that they used a 53-qubit machine to achieve quantum supremacy, a symbolic milestone marking the point at which a quantum computer does something beyond the reach of any practical classical algorithm. Similar demonstrations by physicists at the University of Science and Technology of China soon followed.

But rather than focus on an experimental result for one particular machine, computer scientists want to know whether classical algorithms will be able to keep up as quantum computers get bigger and bigger. “The hope is that eventually the quantum side just completely pulls away until there’s no competition anymore,” said Scott Aaronson, a computer scientist at the University of Texas, Austin.

That general question is still hard to answer, again in part because of those pesky errors. (Future quantum machines will compensate for their imperfections using a technique called quantum error correction, but that capability is still a ways off.) Is it possible to get the hoped-for runaway quantum advantage even with uncorrected errors?

Most researchers suspected the answer was no, but they couldn’t prove it for all cases. Now, in a paper posted to the preprint server arxiv.org, a team of computer scientists has taken a major step toward a comprehensive proof that error correction is necessary for a lasting quantum advantage in random circuit sampling — the bespoke problem that Google used to show quantum supremacy. They did so by developing a classical algorithm that can simulate random circuit sampling experiments when errors are present.

“It’s a beautiful theoretical result,” Aaronson said, while stressing that the new algorithm is not practically useful for simulating real experiments like Google’s.

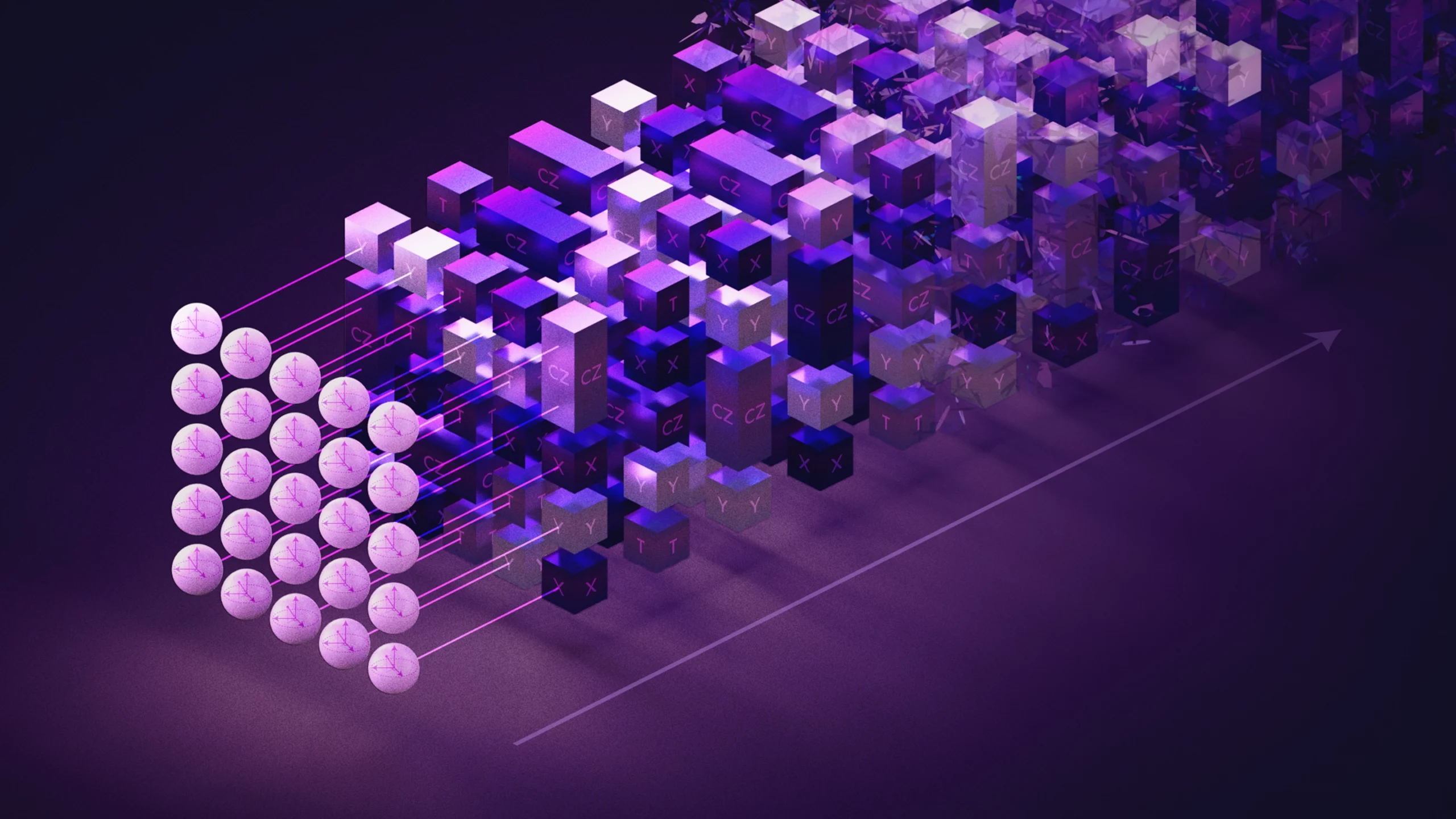

In random circuit sampling experiments, researchers start with an array of qubits, or quantum bits. They then randomly manipulate these qubits with operations called quantum gates. Some gates cause pairs of qubits to become entangled, meaning they share a quantum state and can’t be described separately. Repeated layers of gates bring the qubits into a more complicated entangled state.

To learn about that quantum state, researchers then measure all the qubits in the array. This causes their collective quantum state to collapse to a random string of ordinary bits — 0s and 1s. The number of possible outcomes grows rapidly with the number of qubits in the array: With 53 qubits, as in Google’s experiment, it’s nearly 10 quadrillion. And not all strings are equally likely. Sampling from a random circuit means repeating such measurements many times to build up a picture of the probability distribution underlying the outcomes.

The question of quantum advantage is simply this: Is it hard to mimic that probability distribution with a classical algorithm that doesn’t use any entanglement?

In 2019, researchers proved that the answer is yes for error-free quantum circuits: It is indeed hard to classically simulate a random circuit sampling experiment when there are no errors. The researchers worked within the framework of computational complexity theory, which classifies the relative difficulty of different problems. In this field, researchers don’t treat the number of qubits as a fixed number such as 53. “Think of it as n, which is some number that’s going to increase,” said Aram Harrow, a physicist at the Massachusetts Institute of Technology. “Then you want to ask: Are we doing things where the effort is exponential in n or polynomial in n?” This is the preferred way to classify an algorithm’s runtime — when n grows large enough, an algorithm that’s exponential in n lags far behind any algorithm that’s polynomial in n. When theorists speak of a problem that’s hard for classical computers but easy for quantum computers, they’re referring to this distinction: The best classical algorithm takes exponential time, while a quantum computer can solve the problem in polynomial time.

Yet that 2019 paper ignored the effects of errors caused by imperfect gates. This left open the case of a quantum advantage for random circuit sampling without error correction.

If you imagine continually increasing the number of qubits as complexity theorists do, and you also want to account for errors, you need to decide whether you’re also going to keep adding more layers of gates — increasing the circuit depth, as researchers say. Suppose you keep the circuit depth constant at, say, a relatively shallow three layers, as you increase the number of qubits. You won’t get much entanglement, and the output will still be amenable to classical simulation. On the other hand, if you increase the circuit depth to keep up with the growing number of qubits, the cumulative effects of gate errors will wash out the entanglement, and the output will again become easy to simulate classically.

But in between lies a Goldilocks zone. Before the new paper, it was still a possibility that quantum advantage could survive here, even as the number of qubits increased. In this intermediate-depth case, you increase the circuit depth extremely slowly as the number of qubits grows: Even though the output will steadily get degraded by errors, it might still be hard to simulate classically at each step.

The new paper closes this loophole. The authors derived a classical algorithm for simulating random circuit sampling and proved that its runtime is a polynomial function of the time required to run the corresponding quantum experiment. The result forges a tight theoretical connection between the speed of classical and quantum approaches to random circuit sampling.

The new algorithm works for a major class of intermediate-depth circuits, but its underlying assumptions break down for certain shallower ones, leaving a small gap where efficient classical simulation methods are unknown. But few researchers are holding out hope that random circuit sampling will prove hard to simulate classically in this remaining slim window. “I give it pretty small odds,” said Bill Fefferman, a computer scientist at the University of Chicago and one of the authors of the 2019 theory paper.

The result suggests that random circuit sampling won’t yield a quantum advantage by the rigorous standards of computational complexity theory. At the same time, it illustrates the fact that polynomial algorithms, which complexity theorists indiscriminately call efficient, aren’t necessarily fast in practice. The new classical algorithm gets progressively slower as the error rate decreases, and at the low error rates achieved in quantum supremacy experiments, it’s far too slow to be practical. With no errors it breaks down altogether, so this result doesn’t contradict anything researchers knew about how hard it is to classically simulate random circuit sampling in the ideal, error-free case. Sergio Boixo, the physicist leading Google’s quantum supremacy research, says he regards the paper “more as a nice confirmation of random circuit sampling than anything else.”

On one point, all researchers agree: The new algorithm underscores how crucial quantum error correction will be to the long-term success of quantum computing. “That’s the solution, at the end of the day,” Fefferman said.

Editor’s note: Scott Aaronson is a member of Quanta Magazine’s Advisory Board.