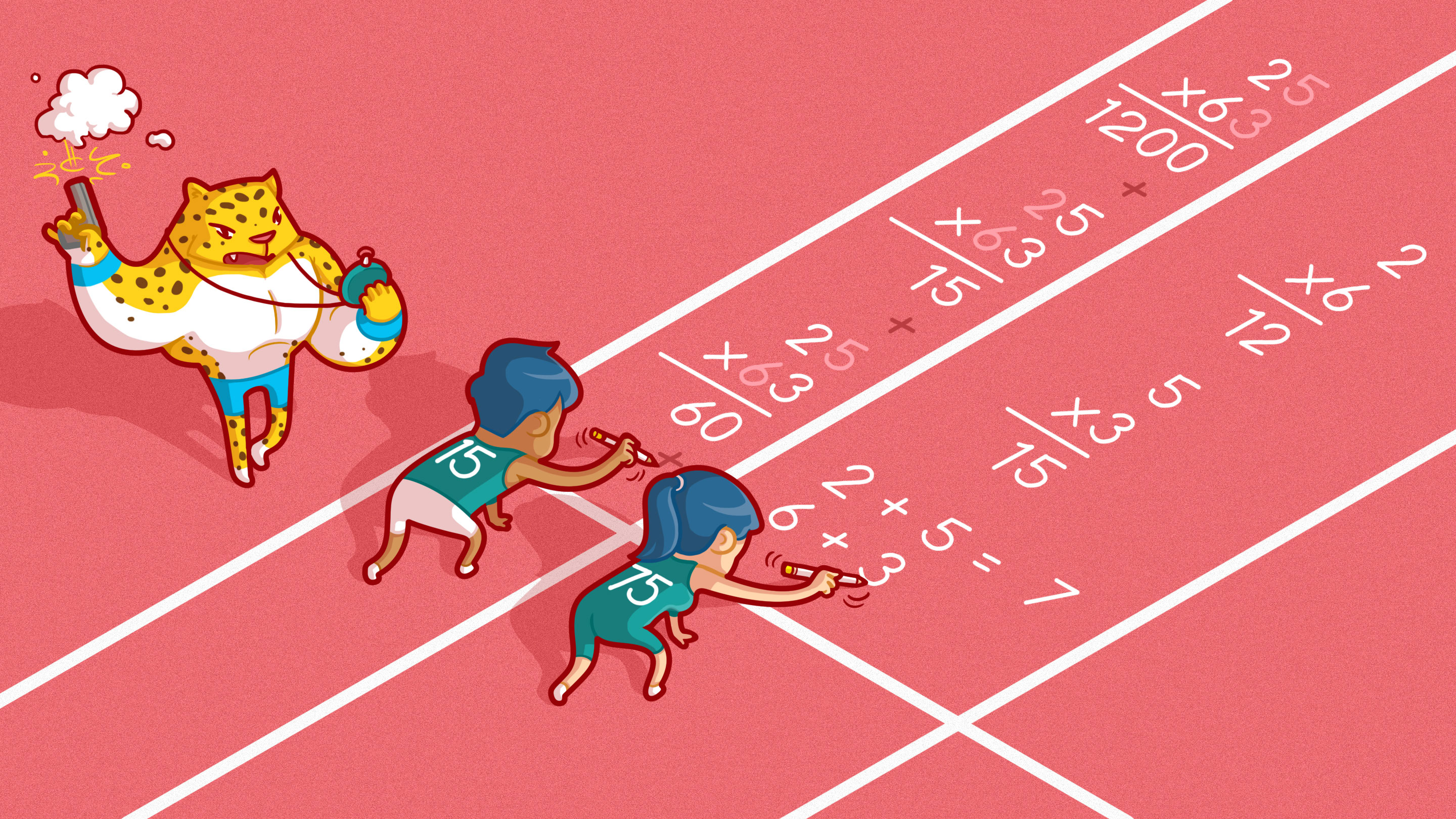

On Your Mark, Get Set, Multiply

BIG MOUTH for Quanta Magazine

Introduction

This summer, battle lines were drawn over a simple math problem: 8 ÷ 2(2 + 2) = ? If you divide 8 by 2 first, you get 16, but if you multiply 2 by (2 + 2) first, you get 1. So, which answer is right? The conflict grew so heated that it made the pages of The New York Times. And as the comments section shows, even a professional mathematician weighing in on the matter wasn’t enough to bring the two sides together.

The problem here is simply how we interpret the division symbol. Does ÷ mean divide by the one number right after it or by everything after it? This isn’t much of a concern for most mathematicians, as they don’t use this symbol very often. Ask them to solve this problem and they’ll probably just make the whole thing into a multiplication problem: Once you choose to write it as either

$latex 8 \times \frac{1}{2}(2+2)$ or $latex 8 \times \frac{1}{2(2+2)}$,

the ambiguity is gone and the answer is clear. As a multiplication question, this isn’t particularly interesting.

But one multiplication question mathematicians do find interesting may surprise you: What is the best way to multiply?

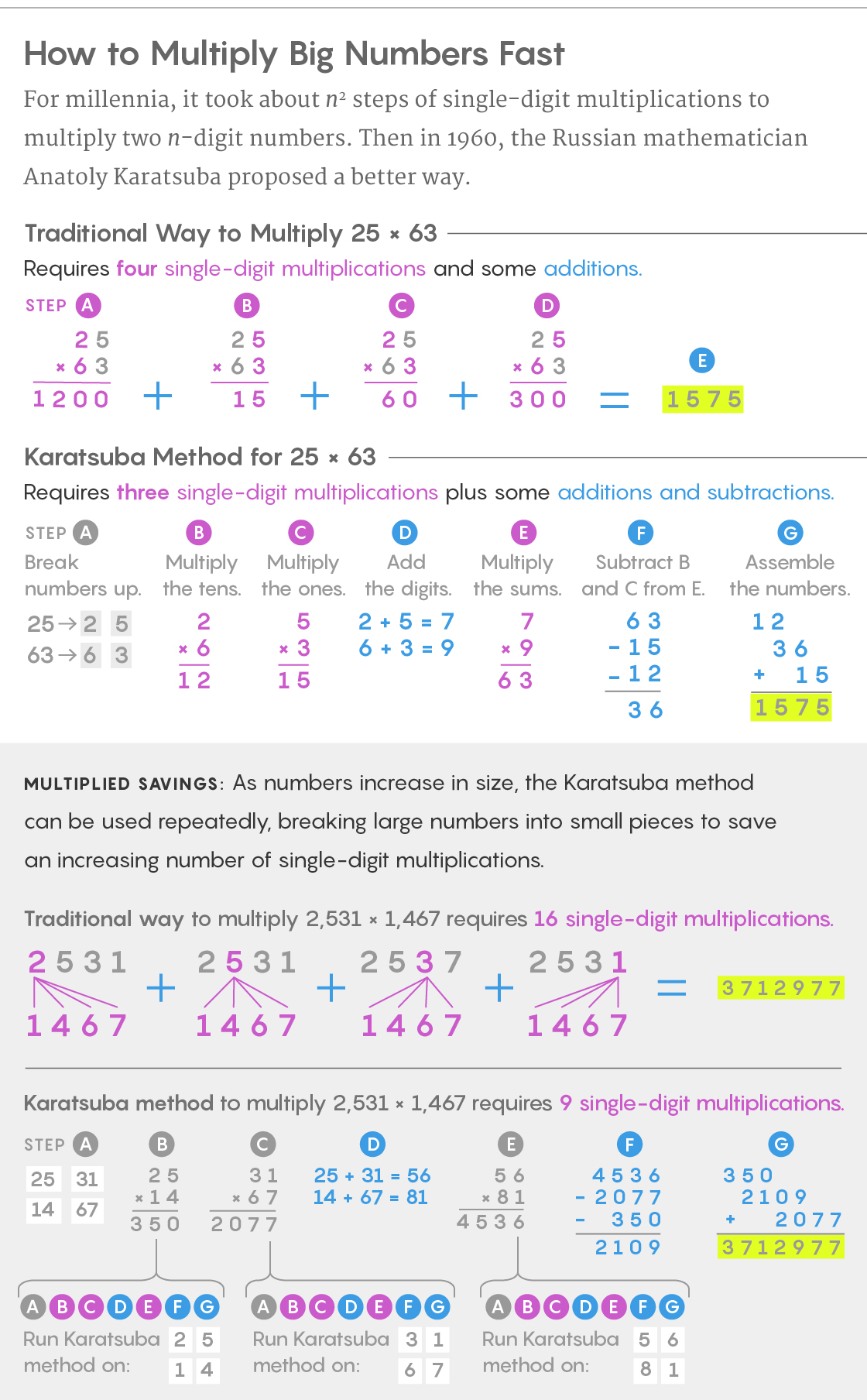

Suppose you were asked to multiply 25 and 63. If you’re like most people, you’d probably reach for a calculator. But if you couldn’t find one, you’d probably use the standard algorithm you learned in elementary school, multiplying each digit from one number with each digit from the other and then adding up the products:

$latex \begin{array}{r}{25} \\ { \times 63} \\ \hline 15 \\ {60} \\ {300} \\ {+1200} \\ \hline 1575\end{array}$

If you’re comfortable doing mental math, you might take another approach using the distributive property to make it easier to calculate the answer in your head:

25 × 63 = 25 × (60 + 3) = 25 × 60 + 25 × 3 = 1500 + 75 = 1575.

Both give us the correct answer, but is one way better than the other? Perhaps it’s just personal choice. But there is at least one way to objectively compare multiplication methods: Efficiency. While efficiency can mean different things to different people in different contexts, let’s think about it from a computer’s perspective. How long would it take a computer to perform the multiplication?

Evaluating the efficiency of computer algorithms is complex, but we will take a simple approach that relies on two assumptions. First, the multiplication of two large numbers can always be broken down into a bunch of small multiplications and additions. And second, the way most computers are designed, small additions can be performed much faster than small multiplications. So when it comes to measuring the efficiency of a multiplication algorithm, it’s the small multiplications that concern us most.

Let’s revisit our example, 25 × 63, with efficiency in mind. In order to compute 25 × 63 using the standard algorithm, we had to perform four small multiplications: 3 × 5, 3 × 2, 6 × 5 and 6 × 2. The small multiplications gave us our rows of 15, 60, 300 and 1,200, which added up to 1,575. It’s important to note that when we apply the standard algorithm, 3 × 2 is really 3 × 2 × 10 = 60, 6 × 5 is really 6 × 10 × 5 = 300, and 6 × 2 is really 6 × 10 × 2 × 10 = 1,200. However, we don’t count multiplying by 10 against the efficiency of our algorithm, as it’s just as easy for computers to multiply by 10 as it is for us — we just shift the digits over. The same is true for multiplying by 100, 1,000, and so on.

Thus, our execution of the standard algorithm to compute 25 × 63 requires four small multiplications and some additions. What about the distributive approach?

25 × 63 = 25 × (60 + 3) = 25 × 60 + 25 × 3 = 1500 + 75 = 1575.

In computing 25 × 60, we have to multiply 6 by both 2 and 5, and in computing 25 × 3, we have to multiply 3 by both 2 and 5. That’s still four small multiplications. Since each method requires four small multiplications, by our simple measure the two methods are roughly equivalent in terms of efficiency. So it shouldn’t come as a surprise that the standard algorithm is really just an application of the distributive property. Let’s see why.

Think about multiplying two arbitrary two-digit numbers ‘AB’ and ‘CD’. Here I’ll use single quotes to denote a number described by its digits: ‘AB’ is the number whose tens digit is A and whose ones digit is B. In other words, ‘AB’ is the number 10A + B. So, if ‘AB’ is the number 25, A = 2 and B = 5. And if ‘CD’ is the number 63, C = 6 and D = 3.

In order to find the product of ‘AB’ and ‘CD’, we need to multiply the two numbers 10A + B and 10C + D. We can do this using the distributive property twice:

(10A + B) × (10C + D) = 100(A × C) + 10(A × D) + 10(B × C) + B × D.

Here’s how it looks if we plug in our original numbers:

25 × 63 = (10 × 2 + 5) × (10 × 6 + 3)

= 100(2 × 6) + 10(2 × 3) + 10(5 × 6) + 5 × 3

= 1200 + 60 + 300 + 15

= 1575.

Notice that all the components of our application of the standard algorithm are there, just organized differently. And in terms of efficiency, we see exactly the same small multiplications performed in both: 3 × 5, 3 × 2, 6 × 5 and 6 × 2. These two methods are doing essentially the same thing. Either way, we have to multiply A × C, A × D, B × C and B × D. These four small multiplications appear to set a limit for how efficient multiplication can be.

But in 1960, the Russian mathematician Anatoly Karatsuba found a new limit, using his own application of the distributive law to find a more efficient way to multiply. Karatsuba noticed that all four small multiplications required to compute the product of ‘AB’ and ‘CD’ appear when we multiply the sums of their digits, A + B and C + D:

(A + B) × (C + D) = A × C + A × D + B × C + B × D.

The sum of those four small multiplications isn’t exactly what we want, but Karatsuba knew he could work with it. Let’s see what he did.

In the Karatsuba method for computing ‘AB’ × ‘CD’, we first perform the two small multiplications A × C and B × D. These are essentially the hundreds digit (A × C) and the ones digit (B × D) of our final answer. (There may be some carrying, but remember, small additions are much faster than small multiplications.) A negligible multiplication by 100 gets us two of the four terms we’re looking for:

100(A × C) + B × D.

To complete the entire multiplication problem, we want:

100(A × C) + 10(A × D) + 10(B × C) + B × D.

So we are only missing 10(A × D) + 10(B × C). Here’s where Karatsuba’s clever trick comes in. We can rearrange the product of the sums of digits

(A + B) × (C + D) = A × C + A × D + B × C + B × D

to get

(A + B) × (C + D) − A × C − B × D = A × D + B × C.

We need 10(A × D) + 10(B × C), which is the same as 10(A × D + B × C), so we can just multiply both sides of the above equation by 10 to get:

10((A + B) × (C + D) − A × C − B × D) = 10(A × D + B × C).

At first glance this doesn’t seem like an improvement. We’ve turned something fairly simple with only two small multiplications, 10(A × D + B × C), into something that has three multiplications,10((A + B) × (C + D) − A × C − B × D). But Karatsuba’s ultimate goal wasn’t to make things look nicer. It was to make multiplication more efficient. The secret to Karatsuba’s method is that we don’t need to compute A × C and B × D again: Those were the first two things we multiplied. We already know them!

We’re really replacing the two multiplications A × D and B × C with two multiplications we’ve already performed — A × C and B × D — and one new small multiplication, (A + B) × (C + D). This means we can compute ‘AB’ × ‘CD’ using only three small multiplications: A × C, B × D and (A + B) × (C + D).

Lucy Reading-Ikkanda/Quanta Magazine

Karatsuba’s method isn’t easy, and you certainly wouldn’t use it to multiply 25 by 63 just to save one small multiplication. The method requires more additions, and (A + B) × (C + D) might not even seem all that small. (Though, if you think about it, the largest value of A + B or C + D can’t be much bigger than a single-digit number). The important thing is that in the context of multiplying very large numbers, like when computers use mathematical techniques to encrypt and decrypt secret messages and sensitive data, these small trade-offs add up to big gains in speed.

For example, suppose we wanted to multiply two four-digit numbers like 1,234 and 5,678. Traditional multiplication techniques would require multiplying each digit of one number by each digit of the other, for a total of 4 × 4 = 16 small multiplications. But a simple application of Karatsuba’s method can reduce that: By thinking of 1,234 as 12 × 100 + 34 and 5,678 as 56 × 100 + 78 and using the distributive property, we see that:

1234 × 5678 = (12 × 100 + 34) × (56 × 100 + 78)

= 12 × 56 × 10000 + 12 × 78 × 100 + 34 × 56 × 100 + 34 × 78.

This requires four products of pairs of two-digit numbers, which, at four small multiplications each, give us our 16 multiplications. But by using Karatsuba’s method four times, we could reduce those four products to three small multiplications each, for a total of 12. And this 25% improvement is just the beginning. Further clever applications of Karatsuba’s method can reduce the number of multiplications even more, and the savings grow as the numbers get larger.

When multiplying two n-digit numbers together using traditional methods, we would expect to perform n × n = n2 small multiplications — each digit of the first number times each digit of the second number. But full implementations of Karatsuba’s algorithm require only around n1.58 small multiplications. This makes a huge difference as the numbers get larger. Multiplying two 10-digit numbers using traditional methods requires 10 × 10 = 102 = 100 small multiplications, but only around 101.58 ≈ 38 using Karatsuba’s method. That’s a 62% decrease. And for two 100-digit numbers, the savings are even greater: 1002 = 10,000 versus 1001.58 ≈ 1,445, an 85% difference!

You wouldn’t use this algorithm when calculating a tip, but when it comes to multiplying large numbers, Karatsuba’s method was a big advance. And once Karatsuba opened the door to faster multiplication in 1960, mathematicians have been setting new speed records ever since using advanced techniques like the Fourier transform. This method turns problems about multiplying numbers into problems about multiplying polynomials, where there are surprising shortcuts that create even faster algorithms — ones that computers still use today. These improvements culminated earlier this year when two researchers verified a nearly 50-year-old conjecture about the maximum efficiency of multiplication methods, finally settling the question about the fastest way to multiply.

But the question of how you should multiply is still open. Know your algorithms, but do what works best for you. And remember, multiplication is not a race. Unless you’re trying to set a new speed record.