Thirty Years Later, a Speed Boost for Quantum Factoring

Introduction

Peter Shor didn’t set out to break the internet. But an algorithm he developed in the mid-1990s threatened to do just that. In a landmark paper, Shor showed how a hypothetical computer that exploited the quirks of quantum physics could break large numbers into their prime factors far faster than any ordinary classical machine.

The result had implications far beyond mathematics. At the time, a vital component of internet security called public-key cryptography relied on the assumption that factoring large numbers is so computationally difficult as to be effectively impossible. That assumption still underpins some critical protocols today. Shor’s algorithm showed that it would fail spectacularly in a world with powerful quantum computers.

In the past 30 years, computer scientists have streamlined Shor’s algorithm in preparation for the day that quantum technology matures enough to run it. But a new variant, from the New York University computer scientist Oded Regev, is faster in a fundamentally new sense. It’s the first to improve the relationship between the size of the number being factored and the number of quantum operations required to factor it.

“It’s really remarkable that somebody has apparently been able to improve the complexity of this result many, many years later,” said Ashley Montanaro, a quantum computing researcher at the University of Bristol. “This is really exciting.”

Martin Ekerå, a cryptographer at the Swedish National Communications Security Authority, agreed that Regev’s paper is interesting but cautioned that beating the state of the art in practice will require further optimization. “Shor’s original algorithms are already surprisingly efficient, so it is not trivial to make major improvements,” he wrote in an email.

Regev developed his new algorithm by augmenting Shor’s algorithm with techniques from a branch of cryptography dealing with high-dimensional geometry.

“I would have thought that any algorithm that worked with this basic outline would be doomed,” said Shor, an applied mathematician now at the Massachusetts Institute of Technology. “But I was wrong.”

Internet security depends on the computational difficulty of breaking large numbers into their prime factors. In 1994, Peter Shor discovered a quantum algorithm that can factor large numbers quickly.

Christopher Harting

Finding Factors

Quantum computers derive their power from the peculiar way they process information. Classical computers use bits, each of which must always be in one of two states, labeled 0 and 1. Quantum bits, or “qubits,” can additionally be in combinations of their 0 and 1 states — a phenomenon called superposition. It’s also possible to coax multiple qubits into a collective superposition state: A two-qubit superposition has four components that can perform different computations simultaneously, and the number of such components grows exponentially as the number of qubits increases. That allows quantum computers to effectively perform exponentially many different computations in parallel.

But there’s a catch: Reading the result of a computation performed in superposition only reveals the answer to the part computed by one random component. To reap the benefits of computing in superposition, you must somehow map the end result onto a simpler state where it’s safe to read the result. That’s not possible in most cases, and at first nobody knew how to make it work for any problem. “There were very few people who even had the courage to think about quantum computations,” Regev said.

Then in 1994, Shor read a paper by the computer scientist Daniel Simon that showed how to exploit quantum superposition to solve a contrived problem. Shor figured out how to extend Simon’s result to a more general and practical problem called period finding. A mathematical function is said to be periodic when its output cycles repeatedly through the same values as the input increases; the length of a single cycle is known as the function’s period.

To find the period of a given function using a quantum computer, start by setting up a very large superposition in which each component computes the function’s output for a different input. Then use Shor’s method to convert that large superposition into a simpler state and read the result. At that point, a classical computer can take over and finish the calculation quickly. Overall, Shor’s period-finding algorithm runs exponentially faster than any classical alternative because it computes different outputs of the periodic function simultaneously using superposition.

As Shor looked for applications for his quantum period-finding algorithm, he rediscovered a previously known but obscure mathematical theorem: For every number, there exists a periodic function whose periods are related to the number’s prime factors. So if there’s a number you want to factor, you can compute the corresponding function and then solve the problem using period finding — “exactly what quantum computers are so good at,” Regev said.

On a classical computer, this would be an agonizingly slow way to factor a large number — slower even than trying every possible factor. But Shor’s method speeds up the process exponentially, making period finding an ideal way to construct a fast quantum factoring algorithm.

Shor’s algorithm was one of a few key early results that transformed quantum computing from an obscure subfield of theoretical computer science to the juggernaut it is today. But putting the algorithm into practice is a daunting task, because quantum computers are notoriously susceptible to errors: In addition to the qubits required to perform their computations, they need many others doing extra work to keep them from failing. A recent paper by Ekerå and the Google researcher Craig Gidney estimates that using Shor’s algorithm to factor a security-standard 2,048-bit number (about 600 digits long) would require a quantum computer with 20 million qubits. Today’s state-of-the-art machines have at most a few hundred.

That’s why some critical internet protocols still rely on how hard it is to factor large numbers, but researchers don’t want to get too complacent. Theoretical and technological innovations could bring the required qubit count down further, and there’s no proof that Shor’s algorithm is optimal — there might be a better quantum factoring algorithm out there that nobody’s discovered.

If so, Regev said, “we should know as early as possible, before it’s too late.”

Lost in the Trees

Regev began his academic career in the late 1990s, when cryptographers were searching for a new form of public-key cryptography that wasn’t vulnerable to Shor’s algorithm. The most promising approach, called lattice-based cryptography, relies on the apparent difficulty of computational problems involving high-dimensional arrays of points, or lattices. One such problem is akin to the task of locating the tree closest to a random point in a forest.

“If it’s a hundred-dimensional forest, then that’s much more complicated than if it’s a two-dimensional forest,” said Greg Kuperberg, a mathematician at the University of California, Davis.

Regev began studying lattice-based cryptography as a postdoc, initially as an attacker — he wanted to stress-test the new approach by finding weaknesses that a quantum computer could exploit. But he couldn’t make any progress, and he soon wondered if there was a deeper reason for that. In 2005, he found a way to parlay those failed attacks into a form of lattice-based cryptography superior to all other variants.

“Oded is absolutely brilliant with lattices,” Kuperberg said.

Over the years, as Regev taught Shor’s algorithm to successive generations of students, he found himself wondering whether the techniques he’d used for attacking lattice-based cryptography might actually prove useful in factoring algorithms. That’s because one step in the final, classical stage of Shor’s algorithm amounts to finding the nearest point in a one-dimensional lattice. That one-dimensional problem is trivially easy, but the resemblance to the analogous problem in hundreds of dimensions whose hardness underpins lattice-based cryptography was unmistakable.

“If you’re someone that does lattices like me, you think, ‘OK, there’s some lattice going on here,’” Regev said. “But it wasn’t clear to me how to make use of that.” For years he toyed with other ideas for new quantum factoring algorithms, but he never got anywhere. Then last winter he returned to the problem and resolved to pin down that tantalizing connection between factoring and lattice-based cryptography. This time, he found success.

Extra Dimensions

Regev knew he needed to start by generalizing the periodic function at the heart of Shor’s algorithm from one dimension to many dimensions. In Shor’s algorithm, that function involves repeatedly multiplying a random number, dubbed g, with itself. But the period of this function — the number of times you must multiply by g before the output of the function starts repeating — can be very large, and that means that a quantum computer must multiply large numbers in some components of the superposition it uses to compute the periodic function. Those large multiplications are the most computationally costly part of Shor’s algorithm.

The analogous two-dimensional function instead uses a pair of numbers, g1 and g2. It involves multiplying g1 with itself many times and then repeatedly multiplying by g2. The period of this function is also two-dimensional — it’s defined by the number of g1 multiplications and g2 multiplications that together make the function’s output start repeating. There are many different combinations of g1 and g2 multiplications that will do the trick.

Regev worked through the technical details to generalize the algorithm to an arbitrary number of dimensions, not just two, but his initial results weren’t encouraging. To compute the periodic function in many dimensions, the quantum computer would still have to multiply many numbers together. Each number wouldn’t need to get multiplied as many times as in the one-dimensional case, but there were more distinct numbers to multiply. The whole thing seemed to be a wash.

“You think, ‘Great, I just did everything in high dimensions, and it’s exactly the same running time as Shor’s,’” Regev said. “I was stuck with that for a while.” Then he realized he could get around the problem by changing the order of the multiplications. Instead of repeatedly tacking numbers onto a single product that would grow progressively larger over the course of the quantum computation, he started with pairs of small numbers, multiplied the resulting products together, and proceeded upward. The total number of multiplications didn’t change much, but now nearly all of them involve relatively small numbers, making the calculation faster.

“That makes all the difference in the world,” said Vinod Vaikuntanathan, a cryptographer at MIT.

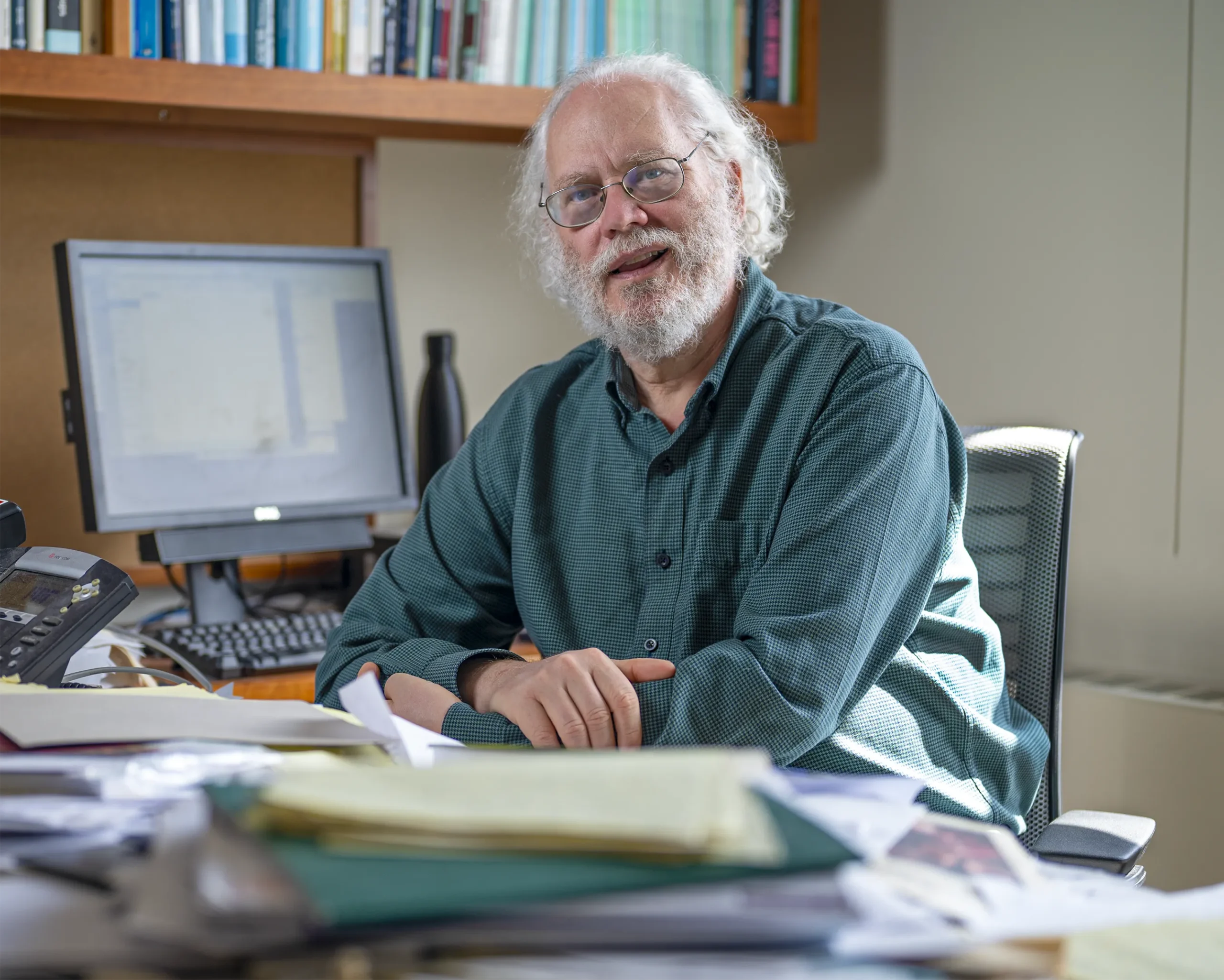

Merrill Sherman/Quanta Magazine; source: Oded Regev

At first, it looked as though Regev had just replaced one problem with another. He’d sped up the quantum computation of the periodic function by increasing the number of dimensions, but the subsequent classical computation required to extract the period was now similar to locating the nearest lattice point in a high-dimensional space — a task widely believed to be hard. The analogy to lattice-based cryptography that motivated his new approach seemed to doom it to failure.

One cold morning in March before a trip to a seminar at Princeton University, Regev found himself waiting for the colleague he was carpooling with. “I arrived early, and he was late to pick up the car,” he said. While he was sitting around waiting, the last piece of the puzzle suddenly came to him. “That’s the moment when things fell into place, but it was baking for a while.”

It all came down to the right number of dimensions. When the lattice dimension was too low, his algorithm couldn’t take full advantage of the speedup from multiplying smaller numbers. When it was too high, the quantum computation was fast, but the classical part required solving a prohibitively hard lattice problem. Regev had known from the beginning that to have any hope of success, he would have to work somewhere in between, but it wasn’t clear whether a sweet spot existed. That morning in March, he realized how he could tweak the details of the algorithm to make it run quickly in a few dozen dimensions.

Writing in the Sand

The improvement was profound. The number of elementary logical steps in the quantum part of Regev’s algorithm is proportional to n1.5 when factoring an n-bit number, rather than n2 as in Shor’s algorithm. The algorithm repeats that quantum part a few dozen times and combines the results to map out a high-dimensional lattice, from which it can deduce the period and factor the number. So the algorithm as a whole may not run faster, but speeding up the quantum part by reducing the number of required steps could make it easier to put it into practice.

Of course, the time it takes to run a quantum algorithm is just one of several considerations. Equally important is the number of qubits required, which is analogous to the memory required to store intermediate values during an ordinary classical computation. The number of qubits that Shor’s algorithm requires to factor an n-bit number is proportional to n, while Regev’s algorithm in its original form requires a number of qubits proportional to n1.5 — a big difference for 2,048-bit numbers.

In classical computing, speed is usually a more important consideration than memory, because classical bits are extremely robust: You can save a file on your computer and not worry about it randomly changing when you open it again later. Quantum computing researchers aren’t always so lucky.

“Our qubits are constantly trying to fall apart, and we’re trying to stop them from falling apart,” Gidney said. “It’s like you’re trying to write in the sand and the wind is blowing it away.” That means the extra qubits required by Regev’s algorithm could be a major drawback.

But Regev’s paper isn’t the end of the story. Two weeks ago, Vaikuntanathan and his graduate student Seyoon Ragavan found a way to reduce the algorithm’s memory use. Their variant of Regev’s algorithm, like Shor’s original algorithm, requires a number of qubits proportional to n rather than n1.5. Ekerå wrote in an email that the work “brings us a lot closer to an implementation that would be more efficient in practice.”

The broader lesson of Regev’s new algorithm, beyond the implications for factoring, is that quantum computing researchers should always be open to surprises, even in problems that have been studied for decades.

“This variant of my algorithm was undiscovered for 30 years and came out of the blue,” Shor said. “There’s still probably lots of other quantum algorithms to be found.”

Editor’s note: Oded Regev receives funding from the Simons Foundation, which also funds this editorially independent magazine. Simons Foundation funding decisions have no influence on our coverage. More details are available here.