A New Generation of Mathematicians Pushes Prime Number Barriers

Introduction

More than 2,000 years ago, the Greek mathematician Eratosthenes came up with a method for finding prime numbers that continues to reverberate through mathematics today. His idea was to identify all the primes up to a given point by gradually “sieving out” the numbers that aren’t prime. His sieve starts by crossing out all the multiples of 2 (except 2 itself), then the multiples of 3 (except 3 itself). The next number, 4, is already crossed out, so the next step is to cross out the multiples of 5, and so on. The only numbers that survive are primes — numbers whose only divisors are 1 and themselves.

Eratosthenes was focused on the full set of primes, but you can use variations on his sieve to hunt for primes with all kinds of special features. Want to find “twin primes,” which are only 2 apart, like 11 and 13 or 599 and 601? There’s a sieve for that. Want to find primes that are 1 bigger than a perfect square, like 17 or 257? There’s a sieve for that too.

Modern sieves have fueled many of the biggest advances in number theory on problems ranging from Fermat’s Last Theorem to the still unproved twin primes conjecture, which says that there are infinitely many pairs of twin primes. Sieve methods, the Hungarian mathematician Paul Erdős wrote in 1965, are “perhaps our most powerful elementary tool in number theory.”

Yet this power is constrained by mathematicians’ limited understanding of how primes are distributed along the number line. It’s simple to carry out a sieve up to some small number, like 100. But mathematicians want to understand the behavior of sieves when numbers get big. They can’t hope to list all the numbers that survive the sieve up to some extremely large stopping point. So instead, they try to estimate how many numbers are on that list.

Merrill Sherman/Quanta Magazine

For the sieve of Eratosthenes, this estimate depends on how often whole numbers are divisible by 2, or 3, or 5, and so on — comparatively easy information to obtain. But for more complicated sieves, such as the ones for twin primes, the crucial information often concerns the remainders that primes leave behind when divided by different numbers. For instance, how often do primes leave a remainder of 1 when divided by 3? Or a remainder of 8 when divided by 15?

As you move out along the number line, these remainders settle into statistically predictable patterns. In 1896, the Belgian mathematician Charles-Jean de la Vallée Poussin proved that remainders gradually even out — for example, if you drop primes into one of two buckets depending on whether their remainder is 1 or 2 when they’re divided by 3, the two buckets will eventually hold roughly the same number of primes. But to extract the full potential from sieve methods, mathematicians need to know not only that the buckets eventually even out, but how soon they do so.

That has proved challenging. After a burst of progress in the 1960s and another in the 1980s, new developments mostly petered out. A notable exception occurred in 2013, when Yitang Zhang published a landmark proof that there are infinitely many pairs of primes closer to each other than some finite bound. But the main body of work developed in the ’80s saw essentially no progress for more than three decades.

Now the subject is enjoying a renaissance, sparked by a series of three papers written by the Oxford mathematician James Maynard in 2020 (two years before he was awarded the Fields Medal, mathematics’ highest honor). Maynard analyzed a number called the “level of distribution” that captures how quickly prime remainders become evenly distributed into buckets (sometimes with reference to particular types of sieves). For many commonly used sieves, he showed that the level of distribution is at least 0.6, beating the previous record of 0.57 from the 1980s.

Maynard’s work and the follow-on studies it has prompted “are breathing new life into analytic number theory,” said John Friedlander of the University of Toronto, who played a large role in the developments in the 1980s. “It’s a real revival.”

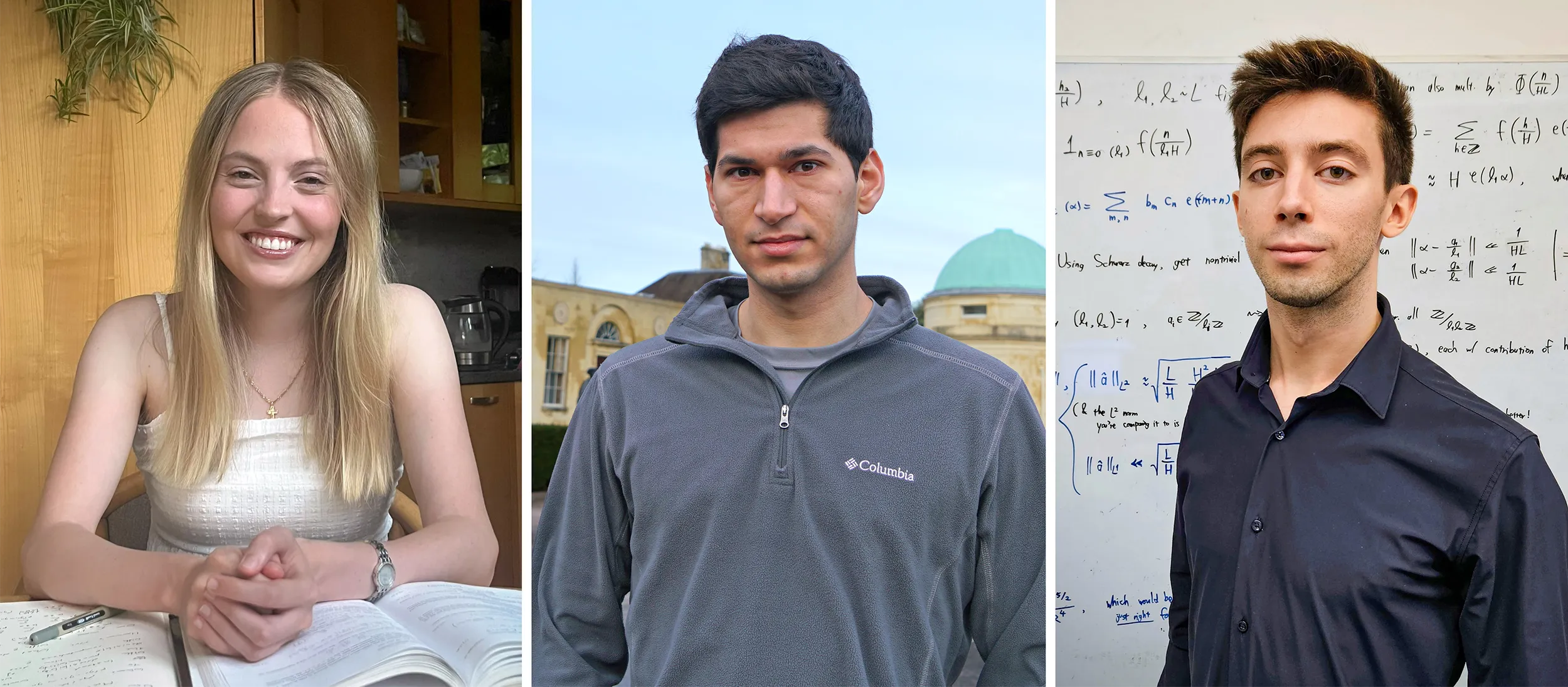

Julia Stadlmann, Jared Duker Licthman and Alexandru Pascadi (top to bottom) have all proved new results about how prime numbers are distributed.

Hannah Stadlmann (top); Ruoyi Wang; Alexa Tudose

Julia Stadlmann, Jared Duker Lichtman and Alexandru Pascadi (left to right) have all proved new results about how prime numbers are distributed.

Hannah Stadlmann (left); Ruoyi Wang; Alexa Tudose

In the past few months, three of Maynard’s graduate students have written papers extending both Maynard’s and Zhang’s results; one of these papers, by Jared Duker Lichtman (now a postdoctoral fellow at Stanford University), pushed Maynard’s level of distribution up to about 0.617. Lichtman then used that increase to calculate improved upper bounds on the number of twin primes up to a given stopping point, and the number of “Goldbach representations” — representations of even numbers as the sum of two primes.

“These younger people are following up [on] what’s really the hot topic now,” said Andrew Granville of the University of Montreal.

An increase from 0.6 to 0.617 might seem of small account to people outside number theory. But in sieve theory, Granville said, “sometimes those little wins can have devastating consequences.”

Including and Excluding

To estimate how many numbers a sieve removes up to some stopping point N, mathematicians use an approach based on something called inclusion/exclusion. To see how this works, consider the sieve of Eratosthenes. This sieve starts by removing all multiples of 2 — that’s about half the numbers up to N. Next the sieve removes all multiples of 3 — about 1/3 of the numbers up to N. So you might think that so far you’ve removed about 1/2 + 1/3 of the numbers up to N.

But this is an overcount, because you’ve double-counted numbers that are multiples of both 2 and 3 (multiples of 6). These are about 1/6 of all the numbers up to N, so to correct for counting them twice, you need to subtract 1/6, bringing the running total for what you are removing to 1/2 + 1/3 − 1/6.

Next you can move on to multiples of 5 — that’s going to add 1/5 to the count, but you have to subtract 1/10 and 1/15 to correct for overcounting numbers that are divisible by both 2 and 5, or both 3 and 5. Even then you’re not quite done — you’ve accidentally corrected twice for the numbers that are divisible by 2, 3 and 5, so to fix that you have to add 1/30 to your count, bringing the running total to 1/2 + 1/3 − 1/6 + 1/5 − 1/10 − 1/15 + 1/30.

As this process continues, the sum gains more and more terms, involving fractions with bigger and bigger denominators. To prevent the small errors in approximations like “about 1/2” and “about 1/3” from piling up too much, number theorists usually stop the addition and subtraction process before they’ve gone through the whole sieve, and content themselves with upper and lower bounds instead of an exact answer.

In theory, a similar process should work for fancier sets of primes, like twin primes. But when it comes to something like twin primes, inclusion/exclusion won’t work unless you know how evenly prime remainders are distributed into buckets.

Merrill Sherman/Quanta Magazine

To see this, think about how a twin prime sieve could work. You can start by using the sieve of Eratosthenes to find all the primes up to N. Then, do a second round of sieving that removes every prime that isn’t part of a twin prime pair. One way to do this is to sieve out a prime if the number that’s two spots to its left is not prime (or you could look two spots to the right — either sieve will work). Using the leftward sieve, you’ll keep primes such as 13, since 11 is also prime, but cross out primes like 23, since 21 is not prime.

You can think of this sieve as first shifting the set of primes two spots to the left on the number line, then crossing out the numbers in the shifted set that aren’t prime (such as 21). In the shifted set, you’ll cross out multiples of 3, then multiples of 5, and so on. (You don’t have to worry about multiples of 2, since the numbers in the shifted set are all odd, except the very first one.)

Next comes inclusion/exclusion, to estimate how many numbers you’ve crossed out. In the sieve of Eratosthenes, crossing out multiples of 3 removes about 1/3 of all numbers. But in the smaller set of shifted primes, it’s harder to predict how many will fall when we cross out multiples of 3.

Any number k in the shifted set is 2 less than some prime. So if k is a multiple of 3, then its corresponding prime, k + 2, has a remainder of 2 when divided by 3. Prime numbers have a remainder of either 1 or 2 when divided by 3 (except for 3 itself), so you might guess that half the primes up to N have a remainder of 1 and half have a remainder of 2. That would mean that in this step of the sieve you’re crossing out approximately half the numbers in the shifted set (instead of 1/3 as in the sieve of Eratosthenes). So you would write a 1/2 term in your inclusion/exclusion sum.

Thanks to de la Vallée Poussin, we know that eventually half of all primes have a remainder of 1 and half have a remainder of 2 when you divide by 3. But to do inclusion/exclusion, it’s not enough to know that the remainder buckets balance out eventually — you need to know that they balance out by N. Otherwise, you can’t have any confidence in the “1/2” in the inclusion/exclusion sum. Perhaps, mathematicians have worried for more than a century, the distribution of primes has weird quirks that undermine some of the counts needed for our inclusion/exclusion sum.

“If you don’t have distribution theorems, you can’t understand what happens when you finish your sieve,” said Terence Tao of the University of California, Los Angeles.

A Fundamental Waypoint

One prediction about how fast the buckets start to even out was available to number theorists in the form of the most celebrated unsolved problem in number theory — the generalized Riemann hypothesis. This hypothesis, if true, would imply that if we’re looking at all the primes up to some very large number N, then prime remainders are evenly distributed into buckets for any divisor up to about the square root of N. So for example, if you’re looking at the primes less than 1 trillion, you’d expect them to be distributed evenly into remainder buckets when you divide them by 120, or 7,352, or 945,328 — any divisor less than about 1 million (the square root of 1 trillion). Mathematicians say that the generalized Riemann hypothesis predicts that the primes’ level of distribution is at least 1/2, since another way to write the square root of N is as N1/2.

James Maynard and his students have been figuring out how quickly primes start adhering to statistically predictable patterns.

Tom Medwell

If this hypothesis is correct, that would mean that when you’re sieving up to 1 trillion, you can cross off multiples of 2, then 3, then 5, and keep going until the inclusion/exclusion sum starts to involve divisors over about 1 million — beyond that point, you can’t calculate the terms in your sum. In the mid-1900s, number theorists proved many sieve theorems of the form, “If the generalized Riemann hypothesis is correct, then … ”

But a lot of these results didn’t actually need the full strength of the generalized Riemann hypothesis — it would be enough to know that primes were well distributed into buckets for almost every divisor, instead of every single divisor. In the mid-1960s, Enrico Bombieri and Askold Vinogradov separately managed to prove just that: The primes have a level of distribution of at least 1/2, if we’re content with knowing that the buckets even out for almost every divisor.

The Bombieri-Vinogradov theorem, which is still widely used, instantly proved many of the results that had previously relied on the unproved generalized Riemann hypothesis. “It’s kind of the gold standard of distribution theorems,” Tao said.

But mathematicians have long suspected — and numerical evidence has suggested — that the true level of distribution of the primes is much higher. In the late 1960s, Peter Elliott and Heini Halberstam conjectured that the level of distribution of the primes is just a shade below 1 — in other words, if you’re looking at primes up to some huge number, they should be evenly distributed into buckets even for divisors very close in size to the huge number. And these large divisors matter when you’re doing inclusion/exclusion, since they come up when you’re correcting for overcounts. So the closer mathematicians can get to the level of distribution Elliott and Halberstam predicted, the more terms they can calculate in the inclusion/exclusion sum. Proving the Elliott-Halberstam conjecture, Tao said, is “the dream.”

To this day, however, no one has been able to beat the 1/2 level of distribution in the full degree of generality that the Bombieri-Vinogradov theorem achieves. Mathematicians have taken to calling this stumbling block the “square-root barrier” for prime numbers. This barrier, Lichtman said, is “a fundamental kind of waypoint in our understanding of the primes.”

New World Records

For many sieve problems, though, you can make progress even with incomplete information about how the primes divide into buckets. Take the twin primes problem: Sieving out a prime if the number two spots to its left is divisible by 3 or 5 or 7 is the same as asking whether the prime itself has a remainder of 2 when divided by 3 or 5 or 7 — in other words, whether the prime falls into the “2” bucket for any of these divisors. So you don’t need to know whether primes are evenly distributed across all the buckets for these divisors — you just need to know whether each “2” bucket holds the number of primes we expect.

In the 1980s, mathematicians started figuring out how to prove distribution theorems that focus on one particular bucket. This work culminated in a 1986 paper by Bombieri, Friedlander and Henryk Iwaniec that pushed the level of distribution up to 4/7 (about 0.57) for single buckets, not for all sieves but for a wide class of them.

As with the Bombieri-Vinogradov theorem, the body of ideas developed in the 1980s found a host of applications. Most notably, it enabled a huge leap in mathematicians’ understanding of Fermat’s Last Theorem, which says that the equation an + bn = cn has no natural-number solutions for any exponent n higher than 2. (This was later proved in 1994 using techniques that didn’t rely on distribution theorems.) After the excitement of the 1980s, however, there was little progress on the level of distribution of the primes for several decades.

Then in 2013, Zhang figured out how to get over the square-root barrier in a different direction from that of Bombieri, Friedlander and Iwaniec. He dug into old, unfashionable methods from the early 1980s to eke out the tiniest of improvements on Bombieri and Vinogradov’s 1/2 level of distribution in a context where you’re sieving only with “smooth” numbers — ones that have no large prime factors. This tiny improvement enabled Zhang to prove the long-standing conjecture that as you go out along the number line, you’ll keep encountering pairs of primes that are closer together than some fixed bound. (Subsequently, Maynard and Tao each separately came up with another proof of this theorem, by using an improved sieve rather than an improved level of distribution.)

Zhang’s result drew on a version of the Riemann hypothesis that lives in the world of algebraic geometry. The work of Bombieri, Friedlander and Iwaniec, meanwhile, relied on what Maynard calls a “somewhat magical connection” to objects called automorphic forms, which have their own version of the Riemann hypothesis. Automorphic forms are highly symmetric objects that, Tao says, belong to “the high-powered end of number theory.”

A few years ago, Maynard became convinced that it should be possible to squeeze more juice out of these two methods by combining their insights. In his series of three papers in 2020, which Granville labeled a “tour de force,” Maynard managed to push the level of distribution up to 3/5, or 0.6, in a slightly narrower context than the one Bombieri, Friedlander and Iwaniec studied.

Now, Maynard’s students are pushing these techniques further. Lichtman recently figured out how to extend Maynard’s level of distribution to about 0.617. He then parlayed this increase into new upper bounds on the counts of both twin primes and Goldbach representations of even numbers as the sum of two primes. For the latter, it’s the first time anyone has been able to use a level of distribution beyond the 1/2 from the classic Bombieri-Vinogradov theorem.

Another of Maynard’s students, Alexandru Pascadi, has matched the 0.617 figure for the level of distribution not of primes but of smooth numbers. Like primes, smooth numbers come up all over number theory, and results about their level of distribution and that of the primes often go hand in hand.

Meanwhile, a third student, Julia Stadlmann, has boosted the level of distribution of primes in the setting that Zhang studied, in which the divisors (instead of the numbers being divided) are smooth numbers. Zhang narrowly beat the square-root barrier in this context, reaching a 0.5017 level of distribution, and then an online collaboration called a Polymath project raised that number to 0.5233; Stadlmann has now raised it to 0.525.

Other mathematicians tease analytic number theorists, Tao said, for their obsession with small numerical advances. But these tiny improvements have a significance beyond the numbers in question. “It’s like the 100-meter dash or something, [where] you shave 3.96 seconds to 3.95 seconds,” he said. Each new world record is “a benchmark for how much your methods have progressed.”

Overall, “the techniques are getting more clear and more unified,” he said. “It’s becoming clear, once you have an advance on one problem, how to adapt it to another problem.”

There’s no bombshell application for these new developments yet, but the new work “definitely changes the way we think,” Granville said. “This isn’t just banging a nail in harder — this is actually getting a more upgraded hammer.”