Quantum Computing Without Qubits

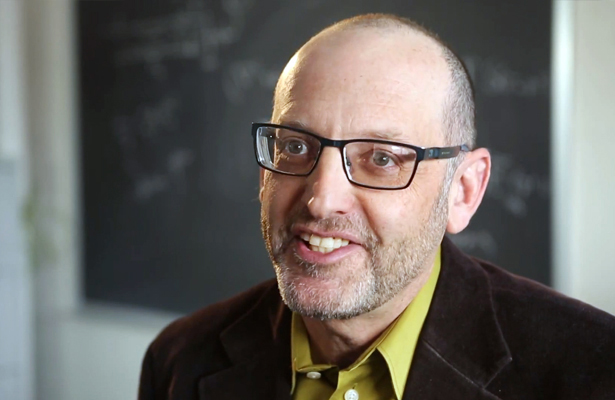

For more than 20 years, Ivan H. Deutsch has struggled to design the guts of a working quantum computer. He has not been alone. The quest to harness the computational might of quantum weirdness continues to occupy hundreds of researchers around the world. Why hasn’t there been more to show for their work? As physicists have known since quantum computing’s beginnings, the same characteristics that make quantum computing exponentially powerful also make it devilishly difficult to control. The quantum computing “nightmare” has always been that a quantum computer’s advantages in speed would be wiped out by the machine’s complexity.

Yet progress is arriving on two main fronts. First, researchers are developing unique quantum error-correction techniques that will help keep quantum processors up and running for the time needed to complete a calculation. Second, physicists are working with so-called analog quantum simulators — machines that can’t act like a general-purpose computer, but rather are designed to explore specific problems in quantum physics. A classical computer would have to run for thousands of years to compute the quantum equations of motion for just 100 atoms. A quantum simulator could do it in less than a second.

Quanta Magazine spoke with Deutsch about recent progress in the field, his hopes for the near future, and his own work at the University of New Mexico’s Center for Quantum Information and Control on scaling up binary quantum bits into base-16 digits.

QUANTA MAGAZINE: Why would a universal quantum machine be so uniquely powerful?

IVAN DEUTSCH: In a classical computer, information is stored in retrievable bits, binary-coded as 0 or 1. But in a quantum computer, elementary particles inhabit a probabilistic limbo called superposition, in which a “qubit” can be coded as both 0 and 1 at once.

Here is the magic: Each qubit can be entangled with the other qubits in the machine. The intertwining of quantum “states” exponentially increases the number of 0s and 1s that can be simultaneously processed by an array of qubits. Machines that can harness the power of quantum logic can deal with exponentially greater levels of complexity than the most powerful classical computer. Problems that would take a state-of-the-art classical computer the age of our universe to solve can, in theory, be solved by a universal quantum computer in hours.
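To make the scale of that exponential growth concrete, here is a minimal Python sketch of how much classical memory it would take just to write down a general entangled state of n qubits. The function names and the 16-bytes-per-amplitude figure (one double-precision complex number) are illustrative assumptions, not something from the interview, and the count says nothing about which problems a quantum computer actually speeds up.

```python
def num_amplitudes(n_qubits: int) -> int:
    """Complex amplitudes needed to describe a general n-qubit state."""
    return 2 ** n_qubits


def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Classical memory for the full state vector.

    Assumes one double-precision complex number (16 bytes) per amplitude.
    """
    return num_amplitudes(n_qubits) * bytes_per_amplitude


# Every added qubit doubles the size of the classical description.
for n in (10, 30, 50, 100):
    print(f"{n:>3} qubits: {num_amplitudes(n):.3e} amplitudes, "
          f"{state_vector_bytes(n):.3e} bytes")
```

Under these assumptions, 30 qubits already need 16 GiB, and 100 qubits are far beyond any conceivable classical memory.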

What is the quantum computing “nightmare”?

The same quantum effects that make a quantum computer so blazingly fast also make it incredibly difficult to operate. From the beginning, it has not been clear whether the exponential speedup provided by a quantum computer would be canceled out by the exponential complexity needed to protect the system from crashing.

Is the situation hopeless?

Not at all. We now know that a universal quantum computer will not require exponential complexity in design. But it is still very hard.

So what’s the problem, and how do we get around it?

The hardware problem is that the superposition is so fragile that the random interaction of a single qubit with the molecules composing its immediate surroundings can cause the entire network of entangled qubits to delink or collapse. The ongoing calculation is destroyed as each qubit transforms into a digitized classical bit holding a single value: 0 or 1.

In classical computers, we reduce the inevitable loss of information by designing a lot of redundancy into the system. Error-correcting algorithms compare multiple copies of the output. They select the most frequent answer and discard the rest of the data as noise. We cannot do that with a quantum computer, because trying to directly compare qubits will crash the program. But we are gradually learning how to keep systems of entangled qubits from collapsing.
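The classical redundancy scheme described above can be sketched in a few lines of Python. This is a simple repetition code with majority voting, chosen here for illustration. The function names, the number of copies, and the flip probability are all assumptions rather than details from the interview.

```python
import random
from collections import Counter


def encode(bit: int, copies: int = 3) -> list[int]:
    """Store the same bit several times (use an odd number of copies)."""
    return [bit] * copies


def noisy_channel(bits: list[int], flip_prob: float, rng: random.Random) -> list[int]:
    """Flip each stored copy independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]


def majority_vote(bits: list[int]) -> int:
    """Keep the most frequent value and discard the rest as noise."""
    return Counter(bits).most_common(1)[0][0]


rng = random.Random(0)  # seeded only so that repeated runs agree
received = noisy_channel(encode(1, copies=5), flip_prob=0.1, rng=rng)
print(received, "->", majority_vote(received))
```

The vote only works because every copy can be read and compared directly, which is exactly the step that is off limits for qubits.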

The major obstacle, to my mind, is creating error-correcting software that can keep data from being corrupted as the calculation proceeds toward the final readout. The great trick is to design and implement an algorithm that only measures the errors and not the data, thus preserving the superposition that contains the correct answer.
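One way to see how an algorithm can measure the errors and not the data is the textbook three-qubit bit-flip code, sketched below as a small classical simulation in Python. The interview does not name a specific code, so this choice, along with every function name, is an assumption for illustration. The sketch handles only a single bit-flip error, ignores phase errors, and is not fault tolerant.

```python
def encode(alpha: float, beta: float) -> dict:
    """Map alpha|0> + beta|1> to alpha|000> + beta|111>."""
    return {(0, 0, 0): alpha, (1, 1, 1): beta}


def flip(state: dict, qubit: int) -> dict:
    """Apply a bit-flip (X) to one qubit of every basis component."""
    out = {}
    for bits, amp in state.items():
        flipped = list(bits)
        flipped[qubit] ^= 1
        out[tuple(flipped)] = amp
    return out


def syndrome(state: dict) -> tuple:
    """Parities of qubit pairs (0,1) and (1,2).

    For an encoded state with at most one bit-flip, both basis components
    give the same parities, so reading them reveals where the error is
    but nothing about alpha or beta.
    """
    results = {(b[0] ^ b[1], b[1] ^ b[2]) for b in state}
    assert len(results) == 1, "state is outside the single-flip error model"
    return results.pop()


# Which qubit to flip back for each syndrome (None means no error).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(state: dict) -> dict:
    """Undo a single bit-flip using only the syndrome."""
    qubit = CORRECTION[syndrome(state)]
    return state if qubit is None else flip(state, qubit)


logical = encode(0.6, 0.8)
damaged = flip(logical, 1)
print(syndrome(damaged), correct(damaged) == logical)
```

The amplitudes 0.6 and 0.8 are never inspected by `syndrome` or `correct`, which is the point: the superposition survives the check.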

Will that end the nightmare?

It turns out that the error-correction technique itself introduces errors. One of the most wonderful advances in quantum computing was recognizing that, in theory, we can correct the new errors without requiring 100 percent precision, allowing minor background noise to pollute the calculation as it rolls along. We cannot actually do this yet. The main reason that we do not have a working universal quantum computer is that we are still experimenting with how to implant such a “fault-tolerant” algorithm into a quantum circuit. Right now we can control 10 qubits reasonably well. But there is no error-correcting technique, to my knowledge, capable of controlling the thousands of qubits needed to construct a universal machine.

Is that what you’re working on?

I study the information processing capabilities of trapped atoms. My colleague Poul Jessen at the University of Arizona and I are pushing the logical power beyond binary-based qubits. For example, what if we can control the superposition of an atom with, say, 16 different energy levels? Using base 16, we can then store what we call a “qudit” in a single atom. That would move us beyond the information processing speed obtainable by a base 2 system, the qubit.
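The arithmetic behind the base-16 idea can be sketched in Python. A d-level system has the same state-space dimension as log2(d) qubits, so one 16-level atom corresponds to four qubits in that sense. The function names are illustrative assumptions, and the comparison covers only the size of the state space, not how hard the levels are to control.

```python
import math


def bits_per_qudit(levels: int) -> float:
    """Classical bits needed to label one basis state of a d-level system."""
    return math.log2(levels)


def hilbert_dimension(levels: int, n_systems: int) -> int:
    """Dimension of the joint state space of n d-level systems."""
    return levels ** n_systems


def equivalent_qubits(levels: int, n_systems: int) -> float:
    """Number of qubits whose state space has the same dimension."""
    return n_systems * math.log2(levels)


print(bits_per_qudit(16))         # one base-16 qudit vs. one qubit
print(hilbert_dimension(16, 3))   # three 16-level atoms
print(equivalent_qubits(16, 3))   # the same space, counted in qubits
```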

What other options do we have?

There may be significant applications for non-universal machines: special-purpose analog quantum simulators designed to solve specific problems, such as how room-temperature superconductors work or how a particular protein folds.

Are these actually computers?

They are not universal machines capable of solving any type of question. But say that I want to model global climate change. One way to do this is to write down a mathematical model and then solve the equations on a digital computer. That is typically what climate scientists do. Another way is to try to simulate some aspect of the earth’s climate in a controllable experiment. I can create a simple physical system that obeys the same laws of motion as the system I’m trying to model — mixing nitrogen, oxygen, and hydrogen in a tank, for example. What goes on inside the tank is a real-world computation that tells me something about atmospheric turbulence under certain conditions.

It is the same with an analog quantum simulator — I use one controllable physical system to simulate another. For example, successfully simulating a superconductor with such a device would reveal the quantum mechanics of high-temperature superconductivity. That could lead to the manufacture of non-brittle superconducting materials for many uses, including building less-fragile quantum circuits. Hopefully, we can learn how to build a robust universal digital computer by experimenting with analog simulators.

Has anyone built a working analog quantum simulator?

In 2002, a group at the Max Planck Institute in Germany built an optical lattice — a super-chilled egg carton made of light — and controlled it by pulsing different strengths of laser beams at it. This was a fundamentally analog device designed to obey quantum mechanical equations of motion. The short story is that it successfully simulated how atoms transition between acting as superfluids or insulators. That experiment has sparked a lot of research in analog quantum computing with optical lattices and cold atom traps.

What are the main challenges for these quantum simulators?

Because the evolution of the analog simulation is not digitized, software cannot correct the tiny errors that accumulate during the calculation, the way it could correct noise on a universal machine. The analog device must keep a quantum superposition intact long enough for the simulation to run its course without resorting to digital error correction. This is a particular challenge for the analog approach to quantum simulation.

Is the D-Wave machine a quantum simulator?

The D-Wave prototype is not a universal quantum computer. It is not digital, nor error-correcting, nor fault tolerant. It is a purely analog machine designed to solve a particular optimization problem. It is unclear if it qualifies as a quantum device.

Will a scalable quantum computer be deployed during your lifetime?

We are pushing past the nightmare. Around the world, many university-based labs are working hard to remove or bypass the roadblock of fault tolerance. Academic researchers are leading the way, intellectually. For example, the groups of Rob Schoelkopf and Michel H. Devoret at Yale are taking superconducting technologies close to fault tolerance.

But constructing a working universal digital quantum computer will likely require mobilizing industrial-scale resources. To that end, IBM is exploring quantum computing with superconducting circuits, drawing its personnel largely from the Yale groups. Google is working with John Martinis’s lab at the University of California, Santa Barbara. HRL Laboratories is working on silicon-based quantum computing. Lockheed Martin is exploring ion traps. And who knows what the National Security Agency is up to.

But generally in academic labs, without these industrial-scale resources, scientists are focusing more and more on learning how to control analog quantum simulators. There is short-term fruit to be picked in that arena — both intellectually and in the currency of academics: publishable papers.

Are you willing to settle for analog?

I favor pursuing the digital approach full force. Before I die, I would love to see just one universal logical qubit that can be indefinitely error corrected. It would instantly be classified by the government, of course. But I dream on, regardless.