Scientists Find Optimal Balance of Data Storage and Time

Allison Li for Quanta Magazine

Introduction

About 70 years ago, an engineer at IBM named Hans Peter Luhn quietly changed the course of computer science. Luhn already held several patents, including one for a device that could measure a cloth’s thread count and another for a guide that determined what mixed drinks you could make from the ingredients in your kitchen. But in a 1953 internal IBM paper, he proposed a new technique for storing and retrieving information that is now built into just about all computational systems: the hash table.

Hash tables are a major class of data structures. They offer an especially convenient method for accessing and altering information in massive databases. But this technology comes with an unavoidable trade-off.

In a 1957 paper published in the IBM Journal of Research and Development, W. Wesley Peterson identified the main technical challenge that hash tables pose: They need to be fast, meaning that they can quickly retrieve the necessary information. But they also need to be compact, using as little memory as possible. These twin objectives are fundamentally at odds. Accessing and modifying a database can be done more quickly when the hash table has more memory, and operations become slower in hash tables that use less space. Ever since Peterson laid out this challenge, researchers have tried to find the best balance between time and space.

Computer scientists have now mathematically proved that they have found the optimal trade-off. The solution came from a pair of recent papers that complemented each other. “These papers resolve the long-standing open question about the best possible space-time trade-offs, yielding deeply surprising results that I expect will have a significant impact for many years to come,” said Michael Mitzenmacher, a computer scientist at Harvard University who was not involved in either study.

“I would definitely say it is a big deal,” added Rasmus Pagh, a computer scientist at the University of Copenhagen. “A lot of people have worked on this problem, trying to see how much you can squeeze space, while also having time-efficient operations. This is the one I would have loved to solve.”

Making a Hash of It

Hash tables are among the oldest, simplest, fastest and most widely used data structures today. They’re designed to perform three basic operations: insertions, which add new items to the database; queries, which access an item or check to see whether it exists; and deletions, which remove items. A hash table can be ephemeral, existing only as long as a particular program runs, or it can be a permanent part of your computer’s operating system. A web browser such as Chrome or Safari may have multiple built-in hash tables intended to keep track of different kinds of data.

Entries in a hash table are stored as pairs, with the item — the information itself — connected to a key that identifies the information. Plug a key into a hash table’s query algorithm, and it takes you directly to the item. This may not sound so extraordinary, but for enormous databases it can be a great time-saver.
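
To make the key-and-item idea concrete, here is a minimal sketch in Python of one classic design, separate chaining. It is only an illustration: the class name `ChainedHashTable`, the bucket count and the sample entries are assumptions made for this example, and real hash tables (including the ones discussed in this article) are far more sophisticated.

```python
class ChainedHashTable:
    """Toy hash table using separate chaining: each bucket is a list of (key, item) pairs."""

    def __init__(self, num_buckets=8):
        self.buckets = [[] for _ in range(num_buckets)]

    def _bucket(self, key):
        # The hash function turns the key into a bucket index.
        return self.buckets[hash(key) % len(self.buckets)]

    def insert(self, key, item):
        bucket = self._bucket(key)
        for i, (existing_key, _) in enumerate(bucket):
            if existing_key == key:
                bucket[i] = (key, item)  # overwrite an existing entry
                return
        bucket.append((key, item))

    def query(self, key):
        for existing_key, item in self._bucket(key):
            if existing_key == key:
                return item
        return None  # the key is not in the table

    def delete(self, key):
        bucket = self._bucket(key)
        for i, (existing_key, _) in enumerate(bucket):
            if existing_key == key:
                del bucket[i]
                return True
        return False  # nothing to delete


table = ChainedHashTable()
table.insert("hash", "a dish of chopped meat and potatoes")
table.insert("table", "a piece of furniture with a flat top")
found = table.query("hash")
removed = table.delete("table")
missing = table.query("table")
```

The key is hashed once to pick a bucket, so a query inspects only the handful of entries in that bucket rather than the whole collection.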

To take an extremely simplified example, consider the Oxford English Dictionary, which has definitions for more than 600,000 words. If a digital edition relies on a hash table, you can simply use a given word as a key and proceed straight to the definition. Without a hash table, the dictionary would likely rely on a much slower search mechanism, using a process of elimination to eventually converge on the requested definition. And while a hash table can find any word in a constant amount of time (usually a tiny fraction of a second), the search time for other methods can go up as the number of words in the dictionary increases. A hash table also offers another advantage: It can keep the dictionary dynamic, making it easy to insert new words and delete outdated ones.
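
The contrast between a process of elimination and a direct lookup can be sketched in a few lines of Python. The example below assumes the slower mechanism is a binary search over a sorted word list, which is one plausible choice and not something the dictionary's publishers have confirmed. The word lists are synthetic placeholders, and Python's built-in `dict` stands in for the hash table.

```python
def binary_search_probes(sorted_words, target):
    """Search by elimination; return (found, probes), where probes counts entries inspected."""
    lo, hi, probes = 0, len(sorted_words) - 1, 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1
        if sorted_words[mid] == target:
            return True, probes
        if sorted_words[mid] < target:
            lo = mid + 1  # discard the lower half
        else:
            hi = mid - 1  # discard the upper half
    return False, probes


# Synthetic, zero-padded "words" so that list order matches alphabetical order.
small = [f"word{i:06d}" for i in range(1_000)]
large = [f"word{i:06d}" for i in range(600_000)]

_, probes_small = binary_search_probes(small, "word000999")
_, probes_large = binary_search_probes(large, "word599999")

# A hash-based lookup: Python's dict is itself a hash table.
index = {word: position for position, word in enumerate(large)}
position = index["word599999"]
```

The number of probes in the search grows with the logarithm of the list's length, while the `dict` lookup takes roughly the same expected time whether it holds a thousand words or 600,000.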

Researchers have spent decades building hash tables that attempt to maximize speed and minimize memory. In the 20th century, solutions tended to offer significant gains in just one aspect, time or space. Then in 2003, researchers showed that it was theoretically possible to make a major efficiency leap in both time and space simultaneously. It would take another two decades, however, for researchers to figure out the ideal balance between the two.

The Data Shuffle

The first major step toward that goal came in 2022 at a leading computer science conference in Rome. There, a team proposed a hash table with new features that could deliver the best combination of time and space efficiency yet conceived. The first author of the paper (listed alphabetically) was Michael Bender of Stony Brook University, so it is commonly referred to as the Bender et al. hash table. While the team didn’t try to build a functioning hash table, they proved that it could, in principle, be constructed with the features they described.

To evaluate the hash table they came up with, the group produced a trade-off curve, a graph that plots the time per operation (insertion or deletion) on one axis and the space the table occupies in memory on the other. But this graph defines space in a special way: Because of how they’re built, hash tables need more memory than just the bare minimum required to store a given set of items. Computer scientists call this extra space “wasted bits,” even though they’re not really wasted and are, to some extent, necessary. The space axis on a trade-off curve measures the number of wasted bits per key.
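
As a rough illustration of what “wasted bits per key” measures, the Python sketch below assumes the usual information-theoretic baseline: the bare minimum needed to store a set of n distinct keys drawn from a universe of U possible keys is log2 of “U choose n” bits. The function name and the sample numbers (1,000 keys of 32 bits each, kept in a half-empty array of 2,000 slots) are hypothetical and chosen only to make the arithmetic visible.

```python
import math


def wasted_bits_per_key(total_bits_used, num_keys, universe_size):
    """Bits used beyond the information-theoretic minimum, averaged over the keys."""
    # Minimum bits to distinguish one set of num_keys keys among all possible sets.
    minimum_bits = math.log2(math.comb(universe_size, num_keys))
    return (total_bits_used - minimum_bits) / num_keys


# Hypothetical table: 1,000 keys of 32 bits each, stored in 2,000 slots of 32 bits.
num_keys = 1_000
universe_size = 2 ** 32
total_bits_used = 2_000 * 32

waste = wasted_bits_per_key(total_bits_used, num_keys, universe_size)
```

In this made-up case the table wastes roughly 40 bits per key, most of it in the empty slots. The designs discussed in this article aim to push that figure far lower.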

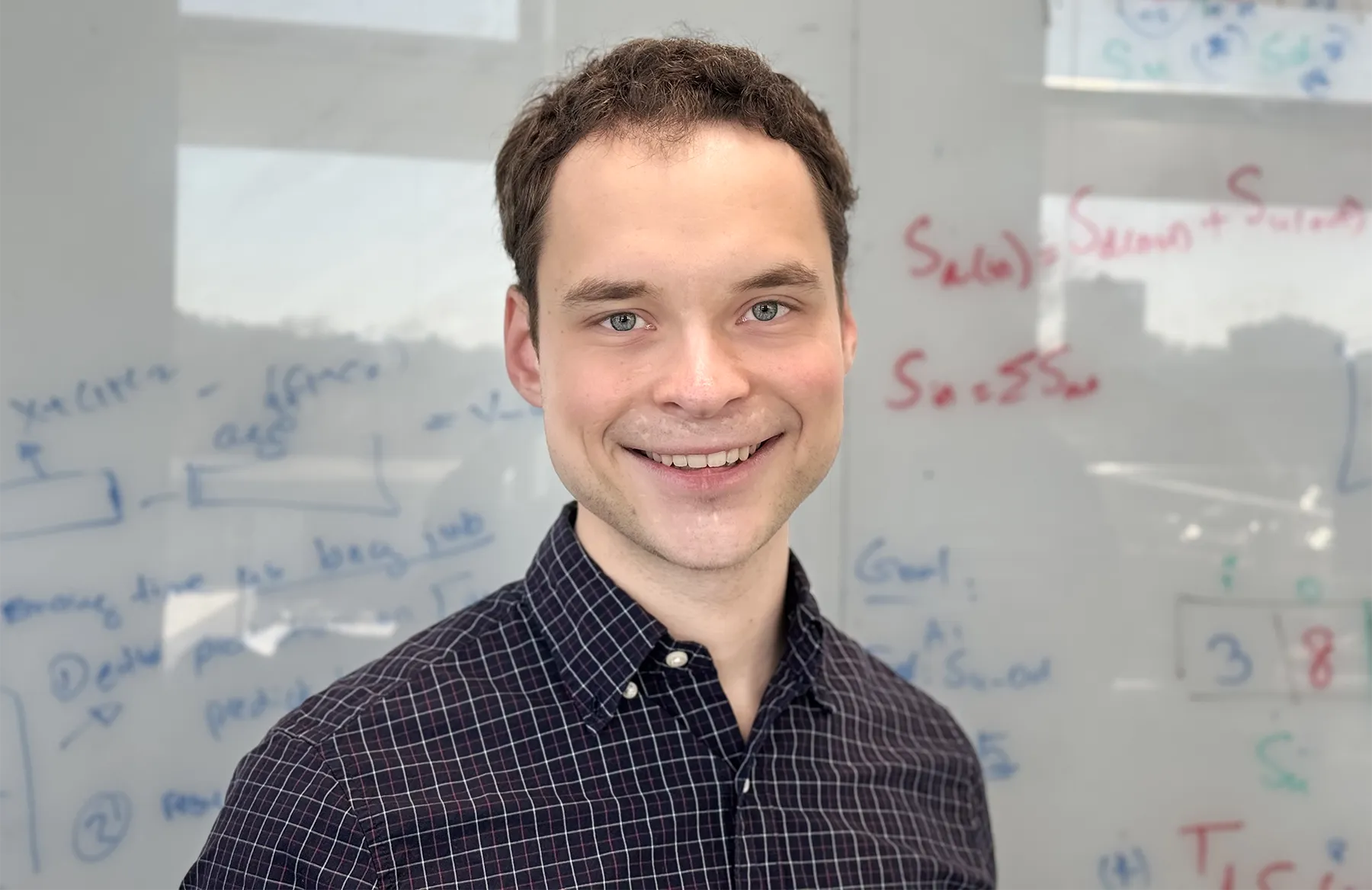

By analyzing a trade-off curve, researchers can figure out the fastest time possible for a hash table that uses a given amount of space. They can also flip the question around to figure out the smallest possible space for a given operation time. Usually, a small change in one variable will lead to a small change in the other, said William Kuszmaul, a theoretical computer scientist at Harvard and a co-author of the 2022 paper. “If you double the time, perhaps you’ll halve the number of wasted bits per key.”

But that’s not the case with the hash table they designed. “If you increase the time by a little bit, the wasted bits per key decrease exponentially,” Kuszmaul said. The trade-off curve was so steep, it was literally off the charts.
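
The difference between the two kinds of curve can be sketched numerically. The Python snippet below rests on a stated assumption: that the 2022 result, as it is usually summarized, trades a number of time steps k for wasted bits per key that shrink like the logarithm applied k times to the number of keys, with constant factors ignored. The gentle curve alongside it is a made-up baseline in which doubling the time halves the waste.

```python
import math


def iterated_log2(n, k):
    """Apply log2 to n a total of k times (constant factors ignored)."""
    value = float(n)
    for _ in range(k):
        value = math.log2(value)
    return value


n = 2 ** 64  # hypothetical number of keys, chosen so the logarithms come out evenly

# Gentle baseline (assumption): doubling the time halves the wasted bits.
gentle_curve = [(k, 64 / k) for k in (1, 2, 4)]

# Steep curve (assumed shape of the 2022 bound): each extra step takes another logarithm.
steep_curve = [(k, iterated_log2(n, k)) for k in (1, 2, 3)]
```

On the steep curve, one step of time corresponds to 64 wasted bits per key, two steps to 6, and three steps to fewer than 3, because each value is exponentially larger than the one that follows it.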

The team built their hash table in two parts. They had a primary data structure, in which the items are stored without any wasted bits at all, and a secondary data structure, which helps a query request find the item it’s looking for. While the group did not invent the notion of a secondary data structure, they did make a crucial discovery that made their hyperefficient hash table possible: The structure’s overall memory efficiency depends on how the primary structure arranges its stored items.

The basic idea is that every item in the primary structure has preferred storage locations — a best location, a second-best one, a third best and so on. If an item is in its best spot, the number 1 is affixed to it, and that number is stored in the secondary data structure. In response to a query, the secondary structure provides just the number 1, which spells out the item’s exact location in the primary structure.

If the item is in its 100th-best spot, the secondary data structure attaches the number 100. And because the system uses binary, it represents the number 100 as 1100100. It takes more memory, of course, to store the number 1100100 than 1 — the number assigned to an item when it’s in the best spot. Differences like that become significant if you’re storing, say, a million items.
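
The arithmetic behind that comparison is easy to check. The short Python sketch below uses the built-in `int.bit_length` to count binary digits. The figure of a million items comes from the paragraph above, while the assumption that every item sits in its 100th-best spot is a deliberately extreme case chosen for illustration.

```python
def bits_needed(rank):
    """Number of binary digits required to write down a preference rank."""
    return rank.bit_length()


binary_100 = format(100, "b")   # the binary spelling of 100
best_bits = bits_needed(1)      # item in its best spot
far_bits = bits_needed(100)     # item in its 100th-best spot

# Extra memory if a million items all sat in their 100th-best spots
# instead of their best spots (an extreme, illustrative case).
items = 1_000_000
extra_bits = items * (far_bits - best_bits)
```

Here the rank 1 takes a single bit and the rank 100 takes seven, so the extreme case costs six million extra bits.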

So the team realized that if you continually shift items in the primary data structure into their more preferred locations, you could significantly reduce the memory consumed by the secondary structure without having to increase query times.
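
A toy simulation in Python shows why shifting items helps. It is not the Bender team's algorithm: the slot count, the key-seeded preference orders and the simple shuffling loop are all assumptions invented for this sketch, and the rank lookup via `index` exists only to tally bits.

```python
import random

NUM_SLOTS = 64


def preferences(key):
    """Hypothetical preference order: a key-seeded shuffle of all slot numbers."""
    order = list(range(NUM_SLOTS))
    random.Random(key).shuffle(order)
    return order


def place(slots, key):
    """Store key in its most preferred slot that is still free."""
    for slot in preferences(key):
        if slots[slot] is None:
            slots[slot] = key
            return
    raise RuntimeError("table is full")


def rank_bits(slots):
    """Total bits needed to record every stored item's preference rank."""
    total = 0
    for slot, key in enumerate(slots):
        if key is not None:
            rank = preferences(key).index(slot) + 1
            total += rank.bit_length()
    return total


def shuffle_toward_best(slots):
    """Move items into better-preferred free slots until no item can improve."""
    moved = True
    while moved:
        moved = False
        for slot, key in enumerate(slots):
            if key is None:
                continue
            for better in preferences(key):
                if better == slot:
                    break  # already in the best slot available to it
                if slots[better] is None:
                    slots[better], slots[slot] = key, None
                    moved = True
                    break


slots = [None] * NUM_SLOTS
for key in range(60):
    place(slots, key)
for slot, key in enumerate(slots):
    if key is not None and key % 2 == 0:
        slots[slot] = None  # delete the even keys, freeing up good spots

bits_before = rank_bits(slots)
shuffle_toward_best(slots)
bits_after = rank_bits(slots)
```

Every move lowers an item's rank, so the total number of bits recorded for the ranks can only stay the same or shrink, and no item is lost along the way.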

“Before this work, no one had realized you could further compress the data structure by moving information around,” Pagh said. “That was the big insight of the Bender paper.”

The authors showed that their invention established a new upper bound for the most efficient hash tables, meaning that it was the best data structure yet devised in terms of both time and space efficiency. But the possibility remained that someone else might do even better.

Bound to Succeed

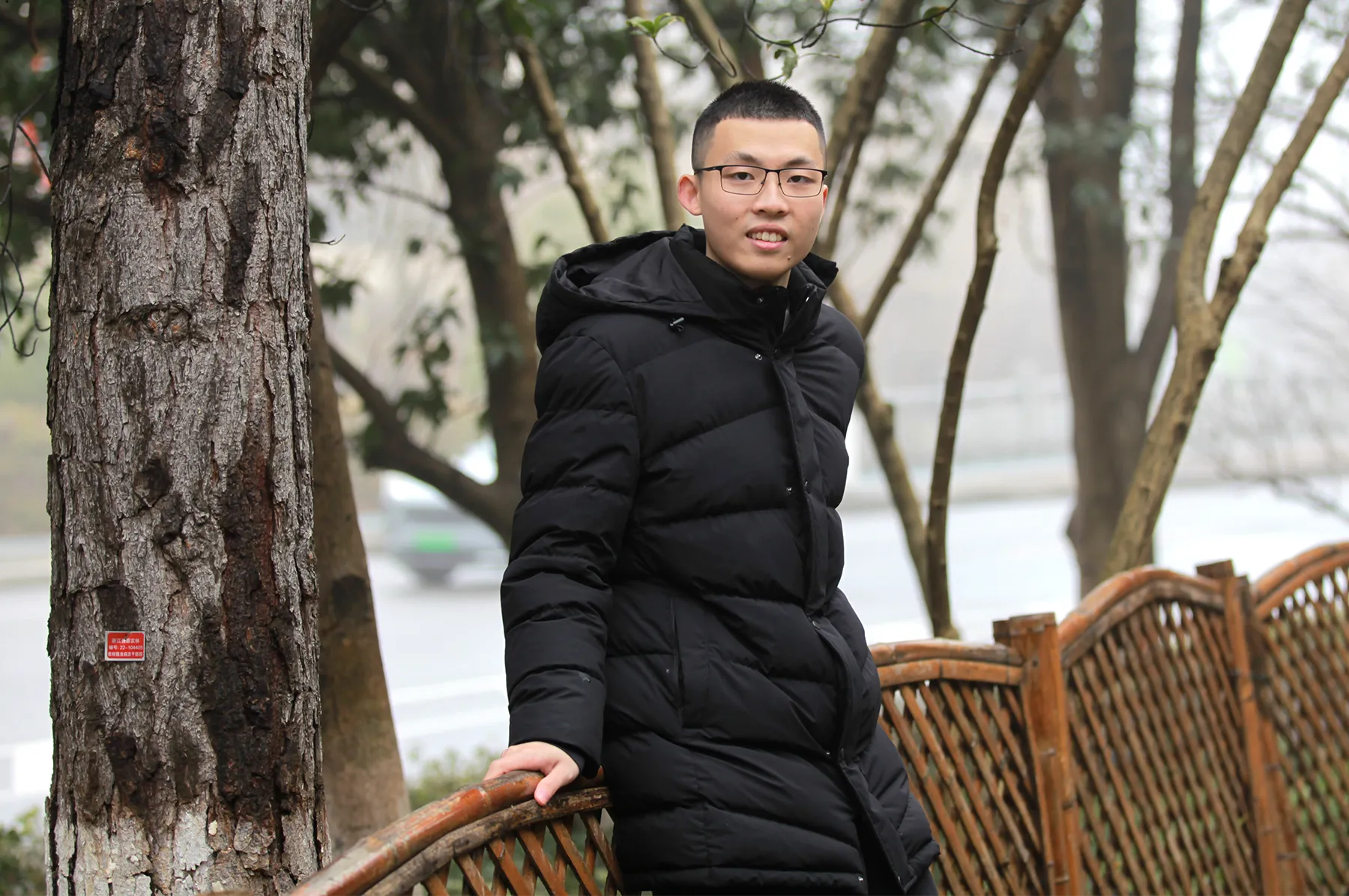

The next year, a team led by Huacheng Yu, a computer scientist at Princeton University, tried to improve the Bender team’s hash table. “We worked really hard and couldn’t do it,” said Renfei Zhou, a student at Tsinghua University in Beijing and a member of Yu’s team. “That’s when we suspected that their upper bound was [also] a lower bound” — the best that can possibly be achieved. “When the upper bound equals the lower bound, the game is over, and you have your answer.” No matter how clever you are, no hash table can do any better.

Yu’s team employed a novel strategy to find out if that hunch was correct by calculating a lower bound from first principles. First, they reasoned that to perform an insertion or a deletion, a hash table — or, really, any data structure — must access the computer’s memory some number of times. If they could figure out the minimum number of times needed for a space-efficient hash table, they could multiply that by the time required per access (a constant), giving them a lower bound on the runtime.

But if they didn’t know anything about the hash table (except that it was space-efficient), how could the researchers figure out the minimum number of times required to access the memory? They derived it purely from theory, using a seemingly unrelated field called the theory of communication complexity, which studies how many bits are required to convey information between two parties. Eventually, the team succeeded: They figured out how many times a data structure must access its memory per operation.

This was their key achievement. They were then able to establish a lower bound on the runtime for any space-efficient hash table. And they saw that it matched the Bender hash table exactly. “We thought [at first] it could be improved,” Zhou said. “It turned out we were wrong.” That, in turn, meant that Peterson’s problem had finally been solved.

Besides answering the decades-old question, Kuszmaul said, the amazing thing about the Yu proof is its generality. “Their lower bound applies to all possible data structures, including ones that have not been invented yet.” That means no method of data storage can ever beat the Bender hash table in terms of memory and speed.

Hashing Into the Future

Despite the new hash table’s unprecedented efficiency, no one is likely to try building it anytime soon. It’s just too complicated to construct. “An algorithm that is fast in theory is not necessarily fast in practice,” Zhou said.

It’s not unusual for such gaps between theory and practice to persist for a long while, Kuszmaul said, because theorists tend to ignore constant factors. The time it takes to perform an operation is typically multiplied by a number, some constant whose exact value may be immaterial from a theoretical standpoint. “But in practice, constants really matter,” he said. “In the real world, a factor of 10 is a game ender.”

Actual hash tables are still improving in material ways, even if they’re falling far short of the theoretical ideal. For example, a new hash table called IcebergHT, built by Bender, Kuszmaul and others, is far better than its predecessors. According to Kuszmaul, it’s twice as fast as the most space-efficient hash table available today, and it uses one-third the space of the fastest hash table.

Mitzenmacher hopes that the 2023 result may soon yield another kind of benefit: “Whenever you get a new lower bound — especially one that involves some new techniques — there is always hope that you could use them … for related problems.”

There’s also the intellectual satisfaction that comes from knowing you have solved a difficult and long-standing problem, said the computer scientist Piotr Indyk of the Massachusetts Institute of Technology. “Once you’re sure that certain data structures cannot be improved upon, that can help focus the research effort.” Finally, data researchers can turn their attention away from Peterson’s challenge and focus on new problems in theoretical computer science, of which there is no shortage.