Solution: ‘Randomness From Determinism’

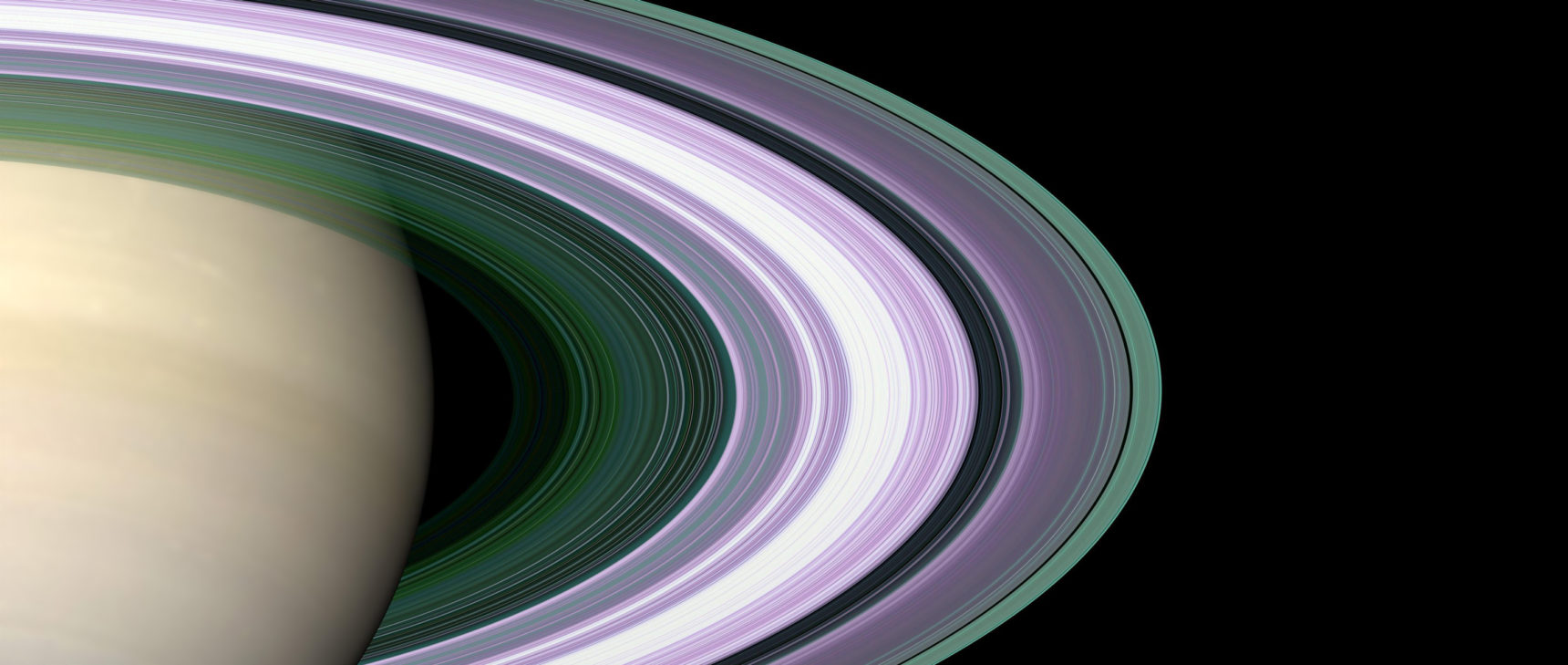

Dan Page for Quanta Magazine

Introduction

Our last Insights puzzle explored how a smooth, random distribution of objects arises in a classic, deterministic machine called a Galton board or bean machine. We examined its inner workings through a set of puzzles. I also used the Galton board’s probabilistic result to suggest that perhaps the probabilistic equations of quantum mechanics spring from underlying deterministic laws that we may not be privy to. Readers responded spiritedly to both the puzzle questions and the philosophical proposition. Let’s look at the puzzle questions first.

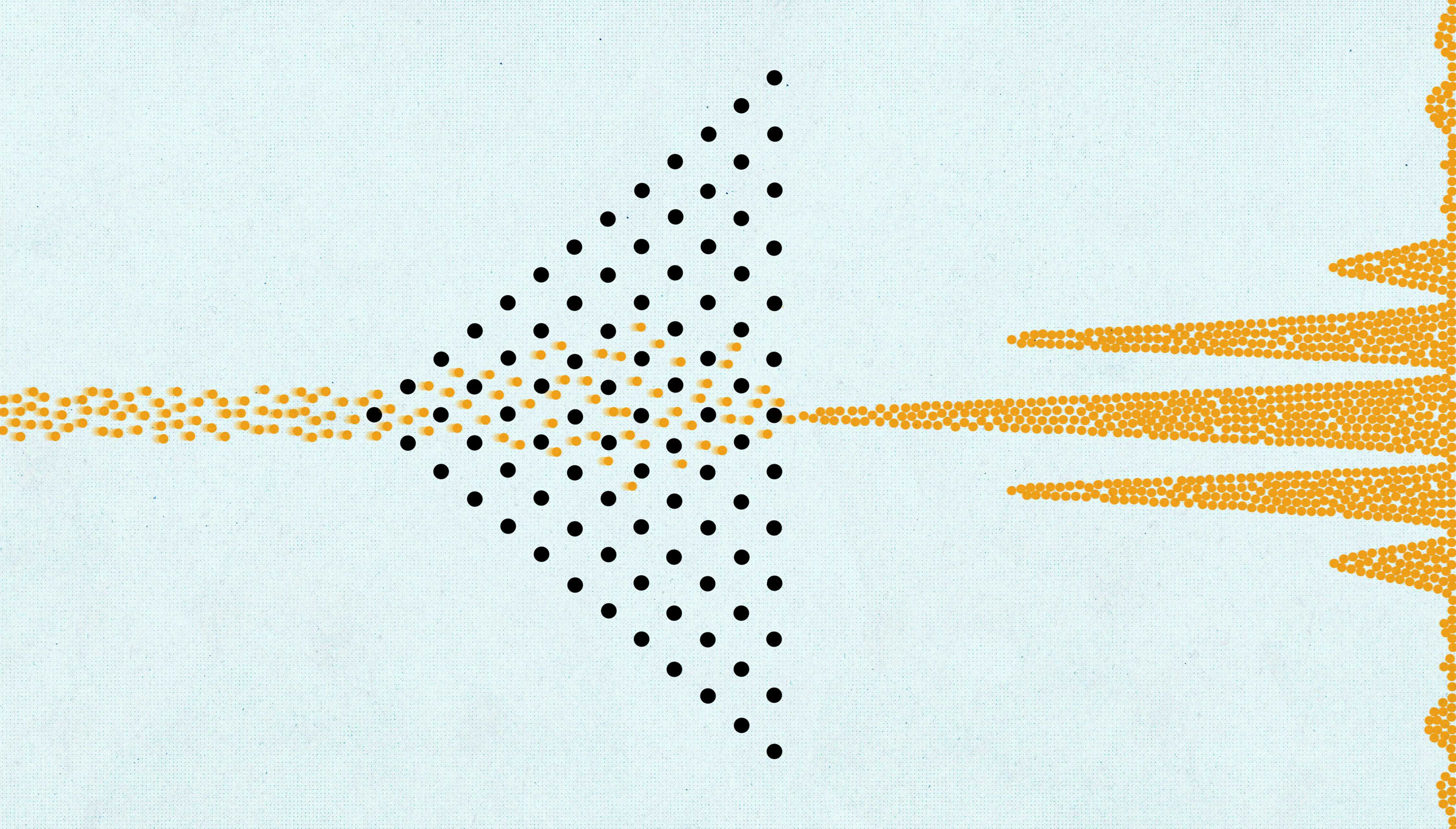

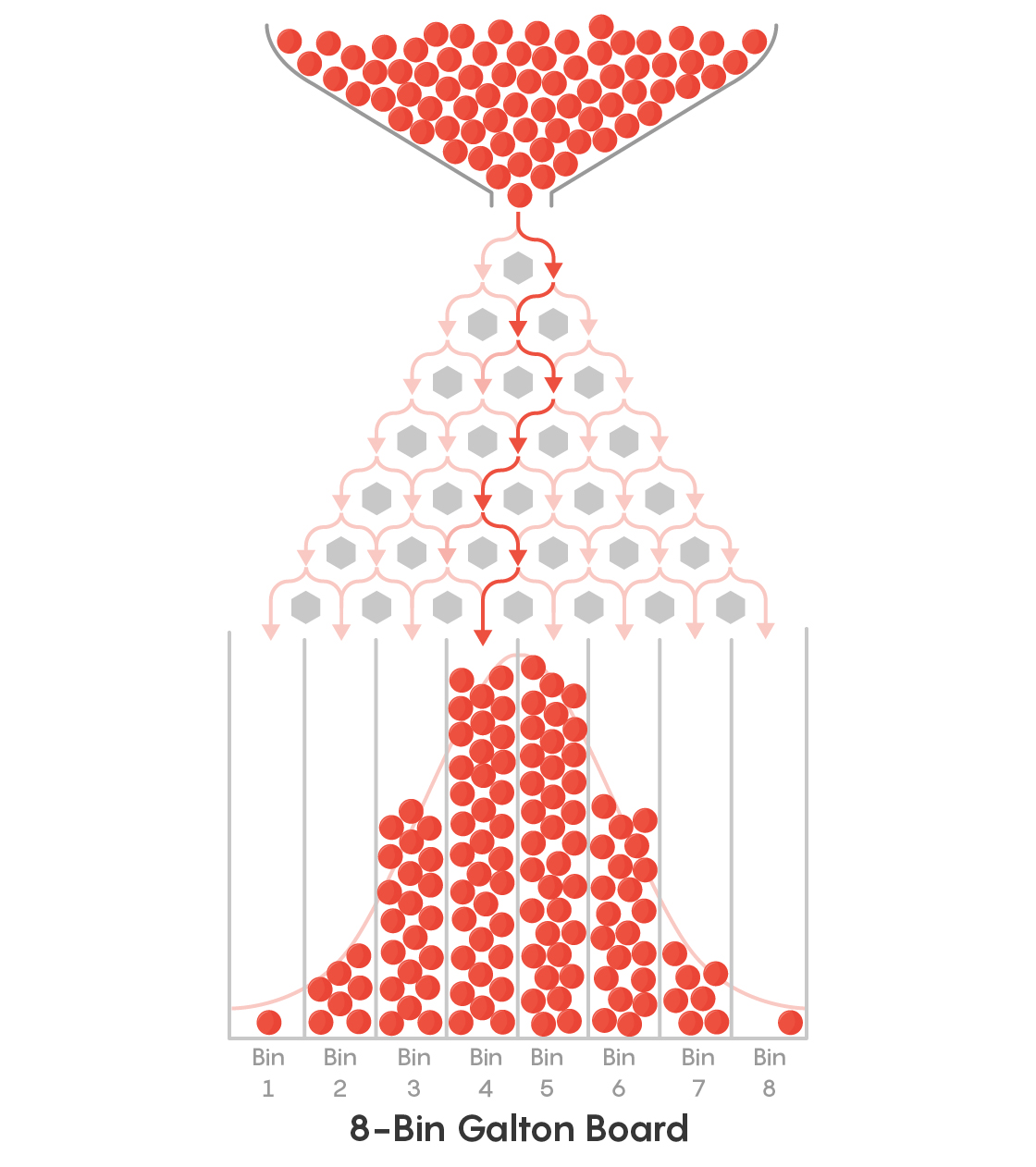

As the pictures show, the Galton board consists of an upright board with rows of pegs that create multiple paths along which marbles can roll from top to bottom. Marbles are dropped at the top and take either the rightward or leftward path with equal probability when they encounter a peg. At the bottom, the marbles accumulate in a set of bins, in proportions that follow a row of Pascal’s triangle and, for large boards, approximate a Gaussian distribution.
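The link to Pascal’s triangle can be checked with a few lines of Python. The sketch below does not simulate individual marbles. It simply splits the expected marble count at each peg, assuming seven rows of pegs feeding eight bins and 128 marbles. The function name `galton_bins` and its `left_fraction` parameter are illustrative choices, not part of the puzzle.

```python
from fractions import Fraction


def galton_bins(num_rows, marbles, left_fraction=None):
    """Expected marbles per bin after num_rows rows of pegs.

    left_fraction(row, peg) returns the share of marbles a peg sends
    to its left; by default every peg is a standard 50/50 peg.
    Rows and pegs are numbered from 0 (an assumed convention).
    """
    if left_fraction is None:
        left_fraction = lambda row, peg: Fraction(1, 2)
    counts = [Fraction(marbles)]  # everything starts at the top peg
    for row in range(num_rows):
        nxt = [Fraction(0)] * (len(counts) + 1)
        for peg, amount in enumerate(counts):
            p_left = left_fraction(row, peg)
            nxt[peg] += amount * p_left            # share going left
            nxt[peg + 1] += amount * (1 - p_left)  # share going right
        counts = nxt
    return counts


bins = galton_bins(num_rows=7, marbles=128)
print([int(b) for b in bins])  # [1, 7, 21, 35, 35, 21, 7, 1]
```

The printed counts are the seventh row of Pascal’s triangle. Passing a different `left_fraction` models nonstandard pegs, for example `lambda row, peg: 1` for pegs that send every marble left.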

Puzzle 1: The Bins Demand Equality!

Imagine that you have a Galton board of the kind shown in the picture above. This one has bins in place of the eighth row and possesses traditional equal-probability pegs. You wish to modify it so that each of the bins at the bottom will collect an equal number of marbles. You know that you will have to replace some of the traditional pegs with new ones that direct the marbles unequally to their left or right side. You can choose pegs that direct a marble entirely to their left, entirely to their right, or in any proportion between these two extremes.

What is the smallest number of pegs you will need to replace, and with what left-right ratios, in order to achieve the goal of complete equality for all bins?

As a bonus question, can you derive and justify a formula that generalizes the above result to a Galton board of any size?

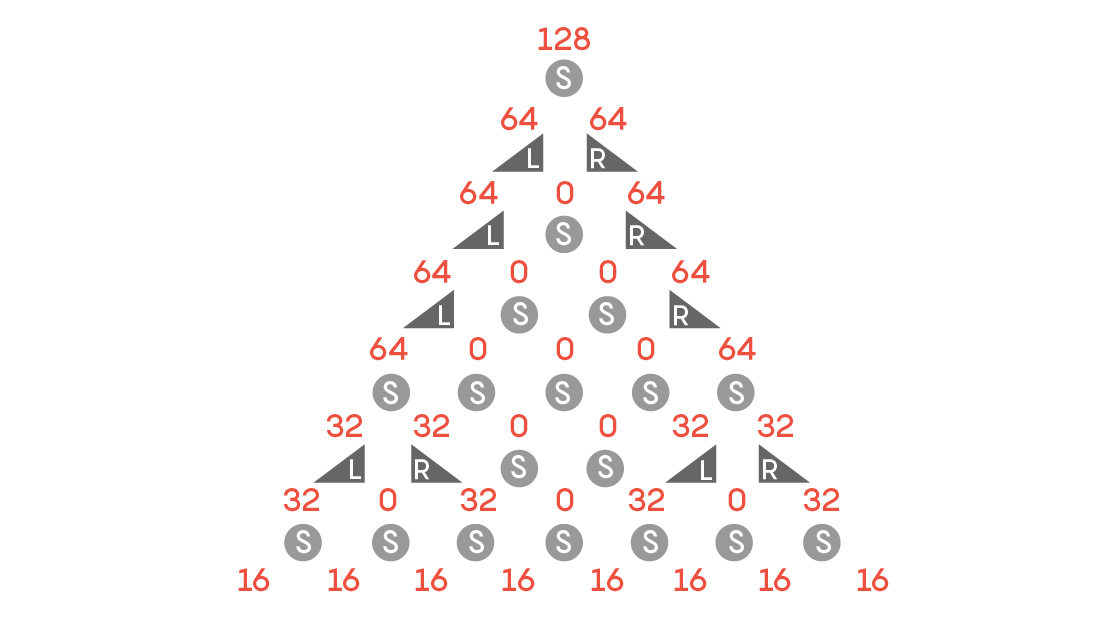

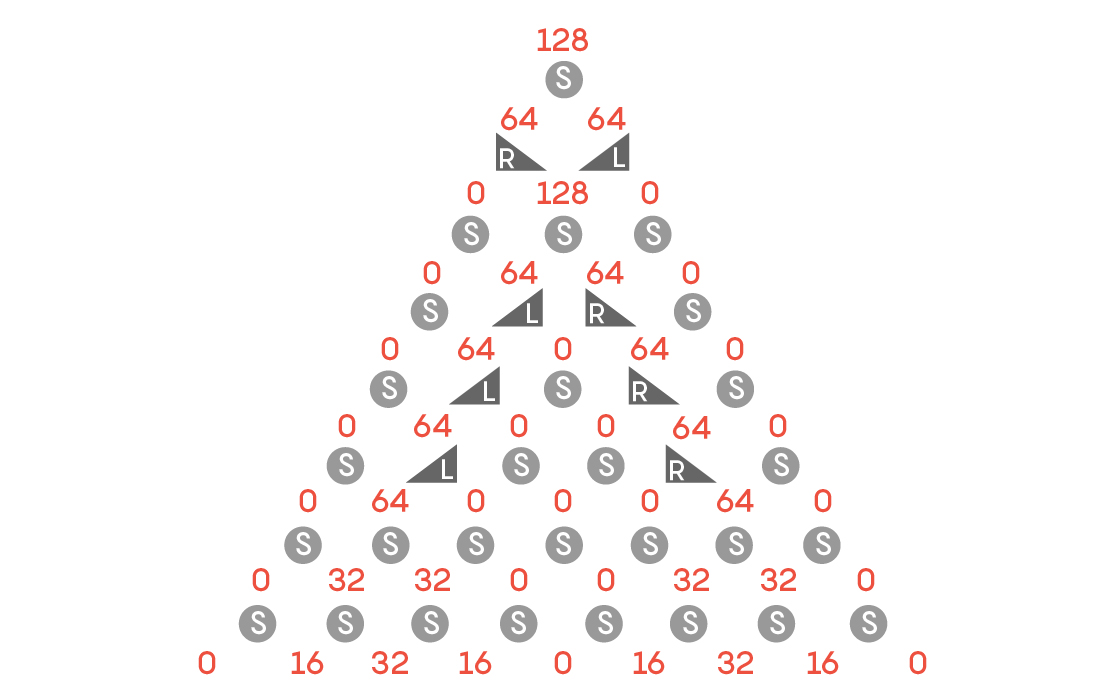

Rob Corlett correctly answered this problem, and Thana Somsirivattana submitted a formal proof. It turns out that just 10 pegs need to be replaced out of the 28. The replacement pegs are simple: They merely route any marble that strikes them to their left (L pegs) or to their right (R pegs). Following the convention of Lionel Lincoln and Rob Corlett, we can call the equal-probability pegs standard (S) pegs. Here is the solution showing how the pegs are arranged and how they route 128 marbles.

The arrangement is nice and regular, with the replacement pegs down the left and right sides.

As for the bonus question, this arrangement generalizes to other cases for which the number of bins is a power of 2. The following formula was recursively derived by Rob Corlett. Here, p(n) is the number of nonstandard pegs for a board with n bins:

p(1) = 0

p(2) = 0

p(n) = 2p(n/2) + n – 2

Thus, the number of replacements needed for a 4-bin board is 2p(2) + 4 – 2 = 2 × 0 + 2 = 2. The number for a 16-bin setup would be 2 × 10 + 16 – 2 = 34.
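The recursion is easy to check mechanically. The following sketch is a direct transcription of the formula for bin counts that are powers of 2. The function name `replaced_pegs` is only an illustrative label.

```python
def replaced_pegs(n):
    """Nonstandard pegs for an n-bin board, where n is a power of 2."""
    if n < 1 or (n & (n - 1)) != 0:
        raise ValueError("n must be a power of 2")
    if n <= 2:
        return 0  # base cases p(1) = p(2) = 0
    return 2 * replaced_pegs(n // 2) + n - 2


for n in (2, 4, 8, 16):
    print(n, replaced_pegs(n))  # 2 0, 4 2, 8 10, 16 34
```

The value for eight bins, 10, matches the count in the solution above.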

If the number of bins is not a power of 2, the problem becomes far more difficult. In the words of Rob Corlett, “This is an incredibly complex problem when you dig into the structure!” Using an algebraic approach, Corlett generalized from the power-of-2 solution and derived the following formula representing an upper bound for the minimum replacements for an n-bin board where n is not a power of 2:

p(n) = p(m) + p(n – m) + n – 1

where m is the largest power of 2 smaller than n.

It turns out that you can do better than this, as cornflower showed using linear programming for boards of different sizes. Cornflower gives examples of minimum solutions for the Galton boards with five, six or seven bins. The minimum number of pegs that need to be replaced for 5-bin boards is five, for 6-bin boards is six and for 7-bin boards is eight.

The numbers are smaller than predicted by the formula because it turns out that in the above cases you can leave the original pegs at the ends of one of the rows. In all these cases, several minimum-replacement configurations are possible, as Rob Corlett showed algebraically. Cornflower also gave a symmetrical solution for a 12-bin setup, which Corlett showed to be unique.
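To see the size of the gap, the upper-bound formula can be evaluated next to the minima reported above. This is a sketch under one assumption: when n – m is itself not a power of 2 (as happens for n = 7), the same formula is applied again to that remainder. The names `peg_bound` and `reported_minimum` are illustrative.

```python
def is_power_of_two(n):
    return n >= 1 and (n & (n - 1)) == 0


def peg_bound(n):
    """Upper bound on replaced pegs for an n-bin board."""
    if is_power_of_two(n):
        # Power-of-2 recursion with p(1) = p(2) = 0.
        return 0 if n <= 2 else 2 * peg_bound(n // 2) + n - 2
    m = 1 << (n.bit_length() - 1)  # largest power of 2 smaller than n
    # Assumption: recurse on the remainder n - m when it is not a power of 2.
    return peg_bound(m) + peg_bound(n - m) + n - 1


# Minimum replacements reported in the text for 5, 6 and 7 bins.
reported_minimum = {5: 5, 6: 6, 7: 8}
for n, minimum in reported_minimum.items():
    print(n, peg_bound(n), minimum)  # 5 6 5, 6 7 6, 7 10 8
```

Under that assumption the bound exceeds the reported minimum by one peg for five and six bins, and by two pegs for seven bins.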

I recommend reading the great work from these two contributors. Kudos!

Puzzle 2: Twin Peaks

As in Puzzle 1, start with a traditional Galton board, but this time one with nine bins at the bottom. You have to modify it by changing the minimum number of pegs so that the distribution of marbles at the bottom is as follows: 0, x, 2x, x, 0, x, 2x, x, 0, where x represents 1/8 of the total number of marbles.

Rob Corlett gives the following elegant solution, with the eight replacement pegs symmetrically arranged around the middle this time.

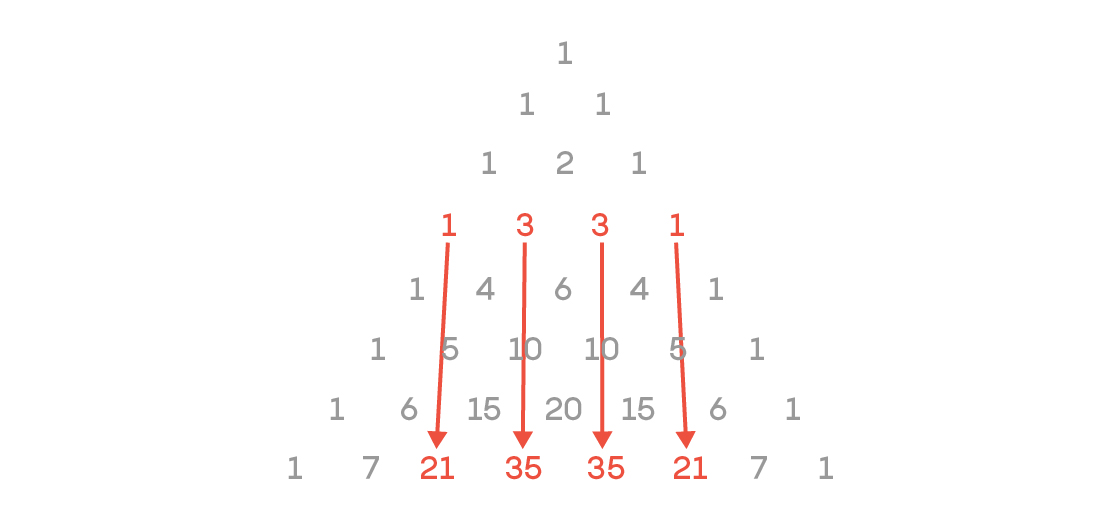

Puzzle 3: Predicting Individual Behavior

In this picture, consider a marble to have had a “drift” of 0 if it was in one of the four positions in row 4 and ended up in the corresponding position in row 8, as shown by the arrows. If it ended up in any other bin, the value of the drift is equal to the square of the distance from the expected bin. Thus, if a marble started from the leftmost position in row 4 and ended up in the bin marked 7, one bin to the left of its expected bin, its drift is 1² = 1. If it ended up in the leftmost bin in the final row (marked 1), then its drift would be 2² = 4. The mean drift for a particular Galton board is the average drift of all the marbles as they move from row 4 to row 8.

What is the average drift of:

1. The original Galton board.

2. The modified Galton board from Puzzle 1.

3. The modified Galton board from Puzzle 2.

The answers were once again correctly given by Rob Corlett. They are 1, 1.5 and 2.5. Corlett offers a clever tip that simplifies the problem: “The trick here is to notice that a ball on row 4 falling to row 8 is effectively the same as a ball coming in at row 1 and falling to row 5.”
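The first answer can be confirmed by brute force. The sketch below covers only the original board, since the modified boards depend on the peg layouts shown in the figures. It assumes that the “expected bin” is the one reached by an equal number of left and right bounces, which agrees with the example of the leftmost row-4 position. Because every peg is standard, the starting position does not matter, in line with Corlett’s tip. The function name `mean_drift_standard` is illustrative.

```python
from fractions import Fraction
from itertools import product


def mean_drift_standard(steps=4):
    """Mean squared distance from the expected bin on a standard board."""
    # Each path is a tuple of bounces: 0 = left, 1 = right, all equally likely.
    paths = list(product((0, 1), repeat=steps))
    expected_rights = Fraction(steps, 2)
    total = sum((sum(path) - expected_rights) ** 2 for path in paths)
    return total / len(paths)


print(mean_drift_standard())  # 1
```

Of the 16 equally likely paths from row 4 to row 8, six end in the expected bin, eight end one bin away and two end two bins away, giving (8 × 1 + 2 × 4) / 16 = 1.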

Now let’s discuss the philosophical questions, which elicited many interesting reader comments. Before I discuss some of these, I’d like to make two points about where I was coming from.

First, my perspective is that of a scientist interested solely in the causal chain for a given quantum event (for example, a photon striking a specific point at the far end of a double slit experiment). I know there are probabilistic formulas, but they don’t answer what John Bell noneuphoniously called the “beables” — simply, what causes what. Some antecedent must have propelled the photon to that particular point and no other, be it something interior to the photon, the environment, a complex interaction of the two or the state of the universe in the specific fraction of a yoctosecond that the experiment happened to be carried out in. From this point of view, C T Johnson’s statement that “in a team B view, the photon is in a randomizing environment” and Hank Smith’s declaration that “the quantum universe is inherently probabilistic” seem to smack of magic, or else they are logically incoherent. Surely the random choice was not made in zero time, without any antecedents. If you could examine the tiny fraction of a yoctosecond preceding the photon’s choice, surely something happened that resulted in that choice.

So, and this is my second point, I don’t see how pure random choices without antecedents are logically possible in any conceivable universe. Quantum randomness can seem perfect and inherent only because it is caused by antecedents that are orders of magnitude too small for us to ever hope to observe. But the antecedents have to exist. The randomness, like the randomness of a pair of dice and all other random phenomena, is caused by our ignorance. I agree with segrimm, who replied to another reader’s comment, “Sorry, a single event is determined too because our universe acts non-local. The determination is the result of all the previous changes ‘inside and outside’ the local phenomenon. The fact that we cannot predict the outcome doesn’t mean the outcome is not determined.”

By the way, several readers questioned why I named the two antagonistic perspectives team E (Albert Einstein) and team B (Niels Bohr). I was alluding to the debates that took place at the Solvay Conference, which brought together the world’s top physicists in 1927, soon after the birth of quantum mechanics. The leaders of the two opposing viewpoints were Einstein and Bohr, who argued furiously, raising points and counterpoints that gave each other sleepless nights. Finally, Bohr’s perspective of inherent randomness prevailed as part of the emerging Copenhagen interpretation, while Einstein’s view was encapsulated in his famous exclamation, “God does not play dice with the universe.” The prominent physicists on Bohr’s team were pragmatic ones: Werner Heisenberg, Paul Dirac, Wolfgang Pauli and most others. On Einstein’s team were de Broglie and, later, Bohm and Bell. Erwin Schrödinger, whose famous equation contributed to the official team B view, later defected and argued forcefully for team E, especially by proposing the classic Schrödinger’s cat thought experiment.

Now let’s take a look at some of the philosophical responses:

Responding to my statement that the many worlds interpretation (MWI) “assumes that the entire universe is being cloned countless times whenever a tiny particle makes a trivially different choice,” TJ_3rd suggested that I read Hugh Everett’s original thesis. Sure, that would be great to do, and thank you for the link! However, given my declared perspective above, I don’t see how that would affect this discussion. As TJ_3rd states, “Everett went into great detail about how his ‘many worlds interpretation’ does not and cannot predict individual outcomes.” But what causes the individual outcome is exactly what I’m curious about. As for my belief that MWI suggests universe “cloning” and particles making “choices,” these are not my words, but those employed by some modern followers of Everett such as David Deutsch, who seem to believe that multiple parallel universes either exist or actually come into being. I know there are other followers who don’t subscribe to this — apparently, the “other” worlds of MWI can have two different interpretations: real or unreal. But if the worlds are unreal, then the Everett procedure is merely a mathematical artifice that allows you to imagine that the quantum evolution enshrined in the Schrödinger equation is the only process that exists, and therefore saves physicists from bothering with the pesky waveform collapses. But this would make sense only if the Schrödinger equation were a true equation of motion. It is not. It is a probabilistic representation, just as a Gaussian distribution is a probabilistic representation of the outcome of a Galton board, and hence must refer to an ensemble, if you exclude the magical inherent indeterminacy that I referred to above.

Jon Richfield points to the fact that there is a difference between determinism and causality and that determinism requires infinite precision of measurement, which is impossible. I agree that, as chaos theory demonstrates, Pierre-Simon Laplace, who championed a completely deterministic world, was wrong even in the classical sense, but while he was wrong predictively, he was not wrong retrospectively. Given most uncomplicated classical results, you can infer with finite precision what the initial conditions needed to be and the paths that led to that result. If you cannot, your laws are incomplete. But I do agree that for the present argument, I am not so much interested in strict determinism as I am in causality.

Zdeněk Skoupý commented that “if we realistically solve the ‘causality’ of the movement of the balls into ever greater detail, then in principle we cannot go to infinity — the end is at the quantum level.” But balls are large enough macroscopic objects that we need not go to the quantum level to determine their behavior. I think that the randomizing physical mechanism that determines which way a ball goes in a practical, real-world Galton board can be completely explained classically — its motion, as a macroscopic object, is effectively sealed off from the quantum world.

Devin Wesley Harper’s idea that something like the Galton board operates to give the double slit results is interesting. However, it must be kept in mind that it has been convincingly demonstrated that the double slit pattern is an interference pattern caused by each photon interfering with itself. Also, the pattern occurs even in a vacuum. Devin, your theory will need to account for these two facts if it is to be successful. Let us know how it goes.

That’s all for now. Thank you to all who commented. If any of you want to continue this discussion, you are welcome to do so in the comments section here. I will certainly attempt to respond, if only to applaud.

This month’s Insights prize goes to Rob Corlett. An honorable mention goes to cornflower — thank you again for your contribution. Congratulations!