The AI Researcher Giving Her Field Its Bitter Medicine

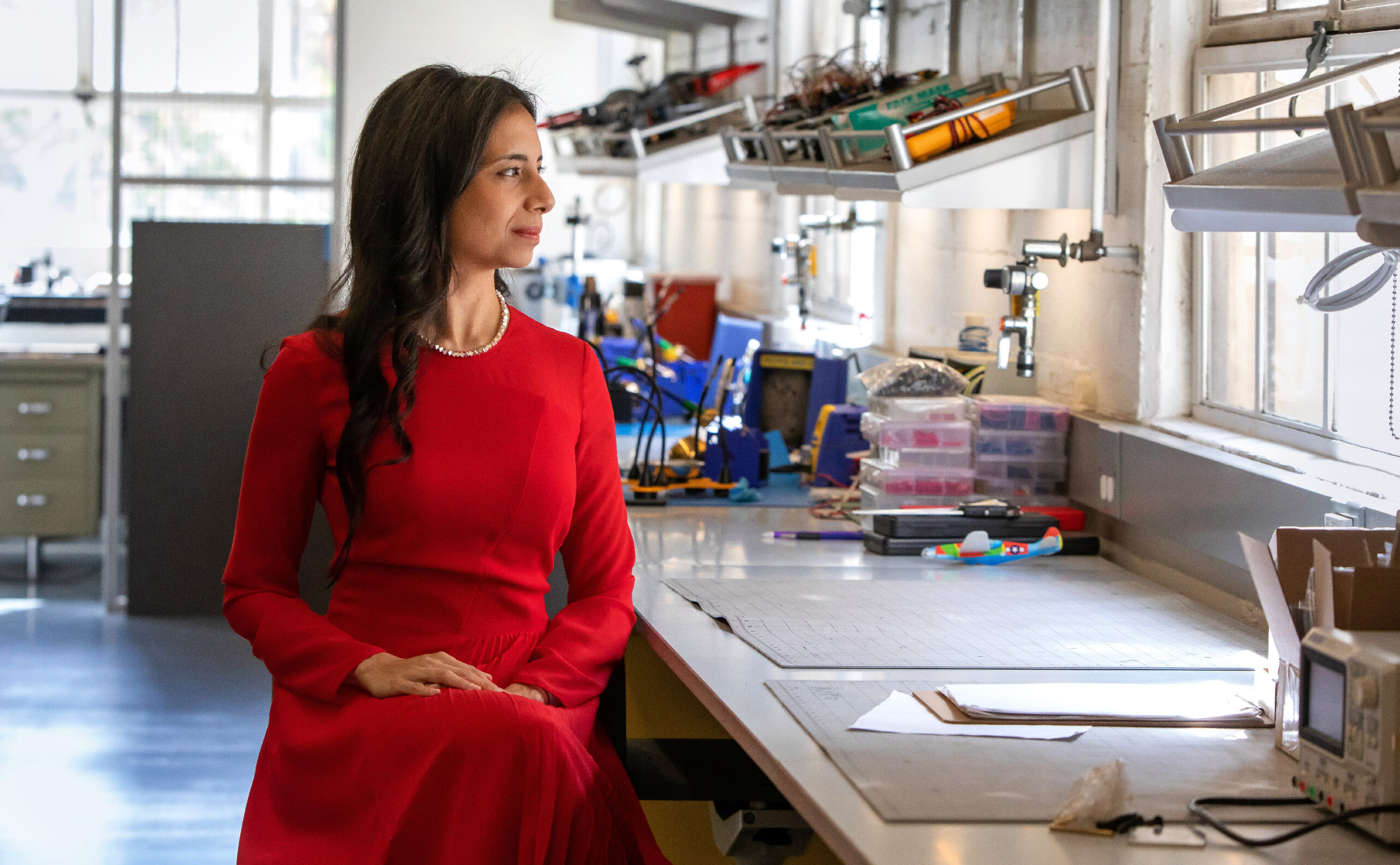

Anima Anandkumar stands in front of the CAST fan array at the California Institute of Technology, which simulates weather for drone experiments. Her work as a computer scientist helps make artificial intelligence more flexible, allowing drones to fly under more turbulent conditions.

Monica Almeida for Quanta Magazine


Anima Anandkumar, Bren Professor of computing at the California Institute of Technology and senior director of machine learning research at Nvidia, has a bone to pick with the matrix. Her misgivings are not about the sci-fi movies, but about mathematical matrices — grids of numbers or variables used throughout computer science. While researchers typically use matrices to study the relationships and patterns hiding within large sets of data, these tools are best suited for two-way relationships. Complicated processes like social dynamics, on the other hand, involve higher-order interactions.

Luckily, Anandkumar has long savored such challenges. When she recalls Ugadi, a new year’s festival she celebrated as a child in Mysore (now Mysuru), India, two flavors stand out: jaggery, an unrefined sugar representing life’s sweetness, and neem, bitter blossoms representing life’s setbacks and difficulties. “It’s one of the most bitter things you can think about,” she said.

She’d typically load up on the neem, she said. “I want challenges.”

This appetite for effort propelled her to study electrical engineering at the Indian Institute of Technology in Madras. She earned her doctorate at Cornell University and was a postdoc at the Massachusetts Institute of Technology. She then started her own group as an assistant professor at the University of California, Irvine, focusing on machine learning, a subset of artificial intelligence in which a computer can gain knowledge without explicit programming. At Irvine, Anandkumar dived into the world of “topic modeling,” a type of machine learning where a computer tries to glean important topics from data; one example would be an algorithm on Twitter that identifies hidden trends. But the connection between words is one of those higher-order interactions too subtle for matrix relationships: Words can have multiple meanings, multiple words can refer to the same topic, and language evolves so quickly that nothing stays settled for long.

This led Anandkumar to challenge AI’s reliance on matrix methods. She deduced that to keep an algorithm observant enough to learn amid such chaos, researchers must design it to grasp the algebra of higher dimensions. So she turned to what had long been an underutilized tool in algebra called the tensor. Tensors are like matrices, but they can extend to any dimension, going beyond a matrix’s two dimensions of rows and columns. As a result, tensors are more general tools, making them less susceptible to “overfitting” — when models match training data closely but can’t accommodate new data. For example, if you enjoy many music genres but only stream jazz songs, your streaming platform’s AI could learn to predict which jazz songs you’d enjoy, but its R&B predictions would be baseless. Anandkumar believes tensors make machine learning more adaptable.
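To make the matrix/tensor distinction concrete, here is a minimal sketch on an invented toy corpus where every document has exactly three words. Averaging outer products of one-hot word vectors over documents gives a two-way co-occurrence matrix (order 2) and a three-way co-occurrence tensor (order 3). Tensor-based topic-modeling methods from Anandkumar's group work with third-order moments of this kind. This is only an illustration of the data structure, though, and not her group's algorithm. The vocabulary, documents and the `one_hot` helper are hypothetical.

```python
import numpy as np

# Hypothetical toy corpus: each document is exactly three words.
vocab = ["bank", "river", "water", "money", "loan"]
docs = [["bank", "river", "water"], ["bank", "money", "loan"]]
index = {w: i for i, w in enumerate(vocab)}

def one_hot(word):
    """Return the one-hot vector for a word in the toy vocabulary."""
    v = np.zeros(len(vocab))
    v[index[word]] = 1.0
    return v

V = len(vocab)
M2 = np.zeros((V, V))      # matrix (order 2): two-way statistics
M3 = np.zeros((V, V, V))   # tensor (order 3): three-way statistics
for w1, w2, w3 in docs:
    x1, x2, x3 = one_hot(w1), one_hot(w2), one_hot(w3)
    M2 += np.outer(x1, x2)                     # counts (first word, second word) pairs
    M3 += np.einsum("i,j,k->ijk", x1, x2, x3)  # counts (first, second, third word) triples
M2 /= len(docs)  # empirical averages over documents
M3 /= len(docs)

print(M2.shape, M3.shape)  # (5, 5) (5, 5, 5)
print(M3[index["bank"], index["river"], index["water"]])  # 0.5
```

The matrix only records that "bank" was paired with "river" in one document and with "money" in another. The tensor also records which third word completed each pair. That extra axis is what "extending to any dimension" means in practice.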

It’s not the only challenge she’s embraced. Anandkumar is a mentor and an advocate for changes to the systems that push marginalized groups out of the field. In 2018, she organized a petition to change the name of her field’s annual Neural Information Processing Systems conference from a direct acronym to “NeurIPS.” The conference board rejected the petition that October. But Anandkumar and her peers refused to let up, and weeks later the board reversed course.

Quanta spoke with Anandkumar at her office in Pasadena about her upbringing, tensors and the ethical challenges facing AI. The interview has been condensed and edited for clarity.

How did your parents influence your perception of machines?

In the early 1990s they were among the first to bring programmable manufacturing machines into Mysore. At that time it was seen as something odd: “We can hire human operators to do this, so what is the need for automation?” My parents saw that there could be huge efficiencies, and that the programmable machines could do the work a lot faster than human-operated ones.

Was that your introduction to automation?

Yeah. And programming. I would see the green screen where my dad would write the program, and that would move the turret and the tools. It was just really fascinating to see — understanding geometry, understanding how the tool should move. You see the engineering side of how such a massive machine can do this.

What was your mother’s experience in engineering?

My mom was a pioneer in a sense. She was one of the first in her community and family background to take up engineering. Many other relatives advised my grandfather not to send her, saying she may not get married easily. My grandfather hesitated. That’s when my mom went on a hunger strike for three days.

As a result, I never saw it as something weird for women to be interested in engineering. My mother inculcated in us that appreciation of math and sciences early on. Having that be just a natural part of who I am from early childhood went a long way. If my mom ever saw sexism, she would point it out and say, “No, don’t accept this.” That really helped.

Anandkumar in the CAST arena lab at Caltech.

Monica Almeida for Quanta Magazine

Did anything else get you excited about math and science?

Before high school all the math that is taught is deterministic. Addition, multiplication, everything you do — there is one answer. In high school, I started learning about probability and that we can reason about things with randomness. To me it makes more sense, because there is much more to nature. There’s randomness, and even chaos.

There’s so much in our own lives that we can’t predict. But we shouldn’t “overfit” to previous experiences in a way that keeps us from adapting to new conditions in our lives. I realized that with AI, you should have flexibility to generalize to new things, learn new skills.

And that’s why you began questioning matrix operations in machine learning?

In practice, matrix methods in machine learning can’t effectively capture higher-order relationships. Essentially, you can’t learn at all. So we asked: What if we looked at higher-order [operations]? That got us into tensor algebra.

With their multiple dimensions and flexibility, tensors seem like a natural fit for higher-order problems in AI. Why hadn’t anyone used them before?

I was sure people would have thought about this. After we came up with a method, we went back to do a literature search and see. In fact, in 1927 there was a psychometrics paper suggesting that to analyze different forms of intelligence, you should do these tensor operations. So people have been proposing these ideas for a while.

But the computers of the time couldn’t handle these higher-order [operations], meaning, generally, correlations among at least three parties. We also didn’t have enough data. Timing was important. Having the latest hardware and more data will help us now to move to higher-order methods.
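A standard textbook construction (not from the interview) shows why "correlations among at least three parties" can be invisible to pairwise, matrix-style statistics. Take two independent fair coin flips x and y valued ±1, and set z = x·y. Every pair of variables is uncorrelated, yet the three together are perfectly dependent. The sketch below enumerates the four equally likely outcomes exactly instead of sampling.

```python
import itertools
import numpy as np

# The four equally likely outcomes of two independent fair +/-1 flips x and y,
# with a third variable defined as z = x * y.
samples = np.array([(x, y, x * y) for x, y in itertools.product([-1, 1], repeat=2)])
x, y, z = samples.T

# Second-order (pairwise) statistics. Each variable has mean 0,
# so these averages are the covariances.
pairwise = {"xy": np.mean(x * y), "xz": np.mean(x * z), "yz": np.mean(y * z)}

# Third-order statistic: x * y * z = (x * y)**2 = 1 for every outcome.
triple = np.mean(x * y * z)

print(pairwise)  # all three values are zero
print(triple)    # 1.0
```

A method that looks only at the pairwise table would conclude that the three variables are unrelated. The dependence shows up only in the three-way average.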

If you succeed in making AI more flexible, what happens then?

Rethinking the foundations of AI itself.

For instance, in many scientific domains I cannot force my data to be on a fixed grid. Numerical solvers are flexible: If you’re using a traditional solver, you can easily find a solution at any point in space. But standard machine learning models are not built that way. ImageNet [a database used to train AI to recognize images] has a fixed image size, or resolution. You train a network at that resolution, so you test it at the same resolution. If you now use this network but change the resolution, it completely fails. It’s not useful in real applications. Scientists want flexibility.

We’ve developed neural operators that do not have this shortcoming. That has led to significant speedups while maintaining accuracy. For instance, we can accurately predict fluid dynamics in real time and we plan to deploy this on drones that can fly under high wind conditions at a drone wind testing facility at Caltech.
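One published neural-operator design from Anandkumar's group, the Fourier neural operator, gets its resolution independence by applying learned weights to a function's low Fourier modes rather than to individual grid points. Below is a heavily stripped-down sketch of that spectral step only. It assumes a single channel and random weights standing in for trained ones, and it omits the pointwise linear term, channel mixing and nonlinearity of a real layer. `MODES`, `spectral_layer` and `sample` are hypothetical names. The point it demonstrates is that the same weights, applied on a 64-point grid and on a 256-point grid, give the same function.

```python
import numpy as np

rng = np.random.default_rng(0)
MODES = 8  # number of low Fourier modes kept (hypothetical setting)
# In a trained model these would be learned; random values stand in here.
weights = rng.normal(size=MODES) + 1j * rng.normal(size=MODES)

def spectral_layer(u):
    """Reweight the lowest Fourier modes of samples of a periodic function.

    The weights are tied to frequencies, not grid points, so the same layer
    can be evaluated on any grid with at least 2 * MODES points.
    """
    n = u.shape[-1]
    coeffs = np.fft.rfft(u)                 # Fourier coefficients of the samples
    out = np.zeros_like(coeffs)
    out[:MODES] = coeffs[:MODES] * weights  # keep and reweight only low modes
    return np.fft.irfft(out, n=n)           # back to values on the same grid

def sample(n):
    """Sample a smooth periodic test function on an n-point grid over [0, 1)."""
    x = np.arange(n) / n
    return np.sin(2 * np.pi * x) + 0.5 * np.cos(6 * np.pi * x)

coarse = spectral_layer(sample(64))
fine = spectral_layer(sample(256))
# Read at the coarse grid points, the fine-grid output matches the coarse output.
print(np.allclose(fine[::4], coarse))  # True
```

A network whose weights are tied to pixel positions has no such property. Change the grid and its weights no longer line up with the input. That is the failure Anandkumar describes for fixed-resolution image models.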

As a student, you interned at IBM, and now in addition to your job at Caltech, you work with Nvidia. Why do you mix academic theory and industrial application?

My parents are entrepreneurial. But my great-great-grandfather on my dad’s side was the scholar who rediscovered this ancient text called the Arthashastra. That was the first known book on economics, from 300 BCE. So growing up, I always wondered: How do I straddle these two worlds?

I think this is where this current era is so great. We’re seeing a lot of openness in how companies like Nvidia are investing in open research.

Anandkumar works with Caltech students (from left) Zongyi Li, Sahin Lale and Kimia Hassibi.

Monica Almeida for Quanta Magazine

You’ve mentioned wanting a sort of Hippocratic oath for AI research. Why?

It’s always important to question how our work is going to impact the world. It can be challenging, especially in a large company, because you’re building one part of this huge system. But so much of the way we teach in universities is derived from military school. Engineering came from that background, and some of it lingers. Like thinking that scientists and engineers should focus on the technical stuff and let others take care of the rest. It’s wrong. We all need humane thinking.

How do today’s inflexible algorithms contribute to these ethical problems?

Humans have been conditioned to think you can trust machines. Before, if you asked a machine to multiply, it would always be correct. Whereas humans can be wrong, and so can our data. Now, when an AI’s training data is racially biased, we’re fitting to the assumptions of the training data. We can have not just wrong answers, but wrong answers with high confidence. That is dangerous.

Then how do we move forward?

In terms of building better algorithms, we need to at least ask: Can we give the right confidence level? If another human being says, “Maybe I’m 60% sure this is the right answer,” then you take that into account.

So if I look outside my window and see a catlike animal the size of a building, I can think: “Yes, that looks like a cat, but I’m not yet certain what that thing actually is.”

Exactly. Because that’s when these models have the overconfidence issue. In the standard training, you’re incentivizing them to be very confident.
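"Giving the right confidence level" is usually called calibration. Among all the answers a model gives with 60% confidence, about 60% should turn out right. One common way to measure this, which is not necessarily what Anandkumar's group uses, is the expected calibration error. It groups predictions into confidence bins and averages the gap between stated confidence and observed accuracy. The sketch below uses invented toy data: ten predictions, six of them correct.

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """Average gap between stated confidence and observed accuracy.

    Predictions are grouped into equal-width confidence bins; each bin's
    |accuracy - mean confidence| is weighted by the fraction of predictions in it.
    """
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    # Bin index per prediction; a confidence of exactly 1.0 goes in the top bin.
    bins = np.minimum((confidences * n_bins).astype(int), n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        in_bin = bins == b
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - confidences[in_bin].mean())
            ece += in_bin.mean() * gap
    return ece

correct = [1] * 6 + [0] * 4  # toy data: six of ten answers are right
honest = expected_calibration_error([0.6] * 10, correct)         # "60% sure"
overconfident = expected_calibration_error([0.99] * 10, correct)  # "99% sure"
print(round(honest, 3))         # 0.0
print(round(overconfident, 3))  # 0.39
```

Both toy models get the same answers right. Only the second claims near-certainty while being wrong four times in ten, which is the "wrong answers with high confidence" failure described above.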

You have mentored for Caltech’s WAVE Fellows program, which brings students from underrepresented backgrounds to do research. What do you think is the role of mentorship in AI?

One of the senior women in the field once lamented to me that women feel we are like islands. We are so disconnected. We don’t know what’s going on with others. We don’t know about pay scales, or anything. It’s this feeling of disconnectedness: that you’re not part of the system. You do not feel like you belong here. I think it is so important to fix that by showing that there’s more than just affinity groups, like WIML [Women in Machine Learning] and Black in AI. There is a broader set of mentors and people who are invested in these efforts.

Is that related to your experience with the NeurIPS name change? Why was that fight so important to you?

For a lot of people, it was like, “Oh, a silly name change.” But it brought in toxicity. I didn’t expect there would be long Reddit threads making fun of us and our appearance, and all kinds of threats, all kinds of attempts to dox people. So much of this was underground. And it exposed people to what women would face in these conferences.

Ultimately, I would say it brought the community together. It brought the moderate set of people who were unaware into the fold. And that has really helped to enhance our diversity and inclusion.

Even though you’re used to challenging people, I imagine it was still hard to speak out.

It was really difficult. I’m a private person, but when I started speaking up on social media, even just posting the work we are doing or something very benign, the comments were unfiltered. There was this whole nature of “Let’s avoid talking about negative things. Let’s bury it.” But I’m of the mindset that we have to bring this out into the open and we have to hold ourselves accountable.

That people need to eat their neem?

Exactly, exactly. You have to take the bitter truth.