What's up in machine learning

Latest Articles

The AI Revolution in Math Has Arrived

AI is being used to prove new results at a rapid pace. Mathematicians think this is just the beginning.

Why Do We Tell Ourselves Scary Stories About AI?

Our tales of AI developing the will to survive, commandeer resources, and manipulate people say more about us than they do about language models.

An Arctic Road Trip Brings Vital Underground Networks into View

A vast meshwork of soil-bound fungi governs life aboveground. In Alaska, and at field sites around the world, researchers are racing to understand exactly how, with essential stores of carbon at stake.

When Coupled Volcanoes Talk, These Researchers Listen

Around the world, volcanologists are following the path of magma as it travels between connected volcanoes, in an effort that could lead to improved eruption forecasts.

Why Do Humanoid Robots Still Struggle With the Small Stuff?

The last decade has seen vast improvements in humanoid robots, but graduating to widespread use might require going back to the fundamentals.

Fed on Reams of Cell Data, AI Maps New Neighborhoods in the Brain

Machine learning is helping neuroscientists organize vast quantities of cells’ genetic data in the latest neurobiological cartography effort.

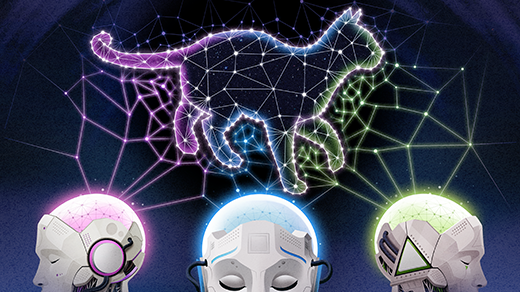

Distinct AI Models Seem To Converge On How They Encode Reality

Is the inside of a vision model at all like a language model? Researchers argue that as the models grow more powerful, they may be converging toward a singular “Platonic” way to represent the world.

Cryptographers Show That AI Protections Will Always Have Holes

Large language models such as ChatGPT come with filters to keep certain info from getting out. A new mathematical argument shows that systems like this can never be completely safe.

The Game Theory of How Algorithms Can Drive Up Prices

Recent findings reveal that even simple pricing algorithms can make things more expensive.