How Writing Changes Mathematical Thought

David E. Dunning explores how choices of notation, such as using Roman or Hindu-Arabic numerals, change what you can do with math.

Valerie Plesch for Quanta Magazine

Introduction

It’s natural to think of math as being fundamentally abstract. Whether it’s invented or discovered, its truths are so literally universal that even aliens would agree (so the thinking goes) that 2 and 2 make 4.

The actual work of mathematics, though, typically involves something utterly earthbound: “making marks on paper or blackboards,” said David E. Dunning, a historian of mathematics and curator of the history of science at the Smithsonian’s National Museum of American History. And that mark-making, or “notation” — which might include anything from notches on a stick to obscure typographical symbols on a screen — produces not only intellectual ripple effects, but practical, material, and social ones too.

“Mathematicians are doing something when they explore this realm that we experience as abstract,” Dunning said. “Ideas have developed in tandem with different ways of representing them in written form. This is where I find focusing on notation so useful — I see [it] as the way of grounding what mathematicians do in lived activity, in the physical world.”

Dunning, who studies the social effects of notation, found his calling gradually, beginning with a double major in math and English as an undergraduate. “They felt related to me,” he said. “They were both about the worlds and structures that can be summoned by writing.” After pursuing both interests separately, he realized that graduate study in the history of science could unite them. In particular, he was inspired by the sociologist David Bloor’s treatise Knowledge and Social Imagery to learn more about “how the creation of mathematical knowledge is something that people do in interaction with each other, deeply embedded in their context.”

In other words, you don’t have to believe that math itself is all relative — which, to be clear, Dunning doesn’t — in order to engage with the social contingencies of its various forms of notation. Those forms “have to be invented, we have to learn to use them, they have affordances and limitations,” he said. “It is a technology in a lot of ways.”

Quanta spoke with Dunning about how that technology has influenced mathematical thought — about why Roman numerals are hard to use, how symbols beat shapes in calculus, and why arguments about writing things down may have produced some of our most fundamental insights about logic and computation. The conversation has been condensed and edited for clarity.


Where does a system of math notation come from? How does it get started?

A notation is always a set of practices. The most fundamental type of mathematical notation is numerals — being able to write down numbers. On the level of what squiggles you make, there’s always something arbitrary about it. But notation isn’t just the specific squiggles. It’s the rules, it’s what you do with it. And different numeral systems have different strengths and weaknesses.

Like what?

The one we use, the Hindu-Arabic numerals, developed in India, traveled to the Arab world, and then spread to Europe largely through communities of merchants. In comparison with the Roman numerals that were previously widespread in Europe, the Hindu-Arabic numerals facilitate the reckoning that merchants need to do for commerce much more easily. It’s not impossible to conceptualize arithmetic in Roman numerals, but it doesn’t lend itself to arithmetical activity in the same way.

Why not?

With Roman numerals, you need a new symbol every time you reach a new order of magnitude. If you’re writing years, that’s not a problem, but it has a limit. Whereas if you understand the Hindu-Arabic numerals, then with only 10 symbols, you can represent potentially infinitely many numbers. You can, in theory, understand any natural number.
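As an illustration that is not part of the interview, the contrast can be sketched in a few lines of Python. The function names `to_roman` and `to_positional` are hypothetical, and the sketch assumes only the seven standard Roman letters (I, V, X, L, C, D, M) with the usual subtractive pairs. Historical extensions for larger values, such as overlines, are left out on purpose, because they are exactly the "new symbol" the answer describes.

```python
# Illustrative sketch only; the names and the 3,999 cutoff are assumptions
# based on the seven standard Roman letters, not anything stated in the interview.
ROMAN = [
    (1000, "M"), (900, "CM"), (500, "D"), (400, "CD"),
    (100, "C"), (90, "XC"), (50, "L"), (40, "XL"),
    (10, "X"), (9, "IX"), (5, "V"), (4, "IV"), (1, "I"),
]


def to_roman(n: int) -> str:
    """Write n with the seven standard letters; there is no symbol past 3,999."""
    if not 1 <= n <= 3999:
        raise ValueError("would need a new symbol for this order of magnitude")
    out = []
    for value, symbol in ROMAN:
        count, n = divmod(n, value)
        out.append(symbol * count)
    return "".join(out)


def to_positional(n: int) -> str:
    """Write a natural number n with only the ten digits 0-9, however large it is."""
    digits = "0123456789"
    if n == 0:
        return "0"
    out = []
    while n > 0:
        n, d = divmod(n, 10)
        out.append(digits[d])
    return "".join(reversed(out))
```

Under these assumptions, `to_roman(1994)` gives `"MCMXCIV"` and `to_roman(4000)` raises an error, while `to_positional` keeps working for a number of any size because each new order of magnitude reuses the same ten symbols in a new position.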

Physical models, such as these seen at the Smithsonian’s National Museum of American History, were long used to gain new insights into surfaces and other mathematical objects. “They used to be in every math department,” Dunning said. “There was an attitude in which part of studying math was to cultivate this physical intuition for the forms that equations represented.”


More importantly, they’re not just a system for static representation. With them come the algorithms we know for addition, multiplication, and so on: a way of calculating. We take that for granted: Schoolchildren learn how to carry digits and multiply large numbers together. But I think that brings into focus how the Hindu-Arabic numerals are an amazing technology. We are so used to a world where that technology has been widely available for so long, but it’s important to realize that historically, multiplying large numbers was a difficult thing to do, until you had a system that made it easy.

That is the power of the notation.
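The schoolbook procedures mentioned above can be written out as a short Python sketch, again as an illustration rather than anything from the interview. The helper names `add_digits` and `multiply_digits` are hypothetical. The code deliberately works on strings of digits, one column at a time, to show that the method depends only on the written positional form.

```python
def add_digits(a: str, b: str) -> str:
    """Add two non-negative decimal numerals column by column, carrying as taught in school."""
    width = max(len(a), len(b))
    a, b = a.zfill(width), b.zfill(width)
    carry = 0
    out = []
    # Work from the rightmost column to the leftmost.
    for da, db in zip(reversed(a), reversed(b)):
        carry, digit = divmod(int(da) + int(db) + carry, 10)
        out.append(str(digit))
    if carry:
        out.append(str(carry))
    return "".join(reversed(out))


def multiply_digits(a: str, b: str) -> str:
    """Long multiplication: one partial product per digit of b, shifted by its position."""
    total = "0"
    for shift, db in enumerate(reversed(b)):
        partial = "0"
        for _ in range(int(db)):  # a single-digit multiple of a, built by repeated addition
            partial = add_digits(partial, a)
        # Appending zeros shifts the partial product into the right column.
        total = add_digits(total, partial + "0" * shift)
    return total.lstrip("0") or "0"
```

For example, `add_digits("478", "964")` returns `"1442"` and `multiply_digits("123", "45")` returns `"5535"`. The shift by appended zeros has no counterpart in a numeral system without place value, which is one way to read the claim that the notation itself makes the calculation easy.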

Is writing a prerequisite? Are there other ways of having a notation for math?

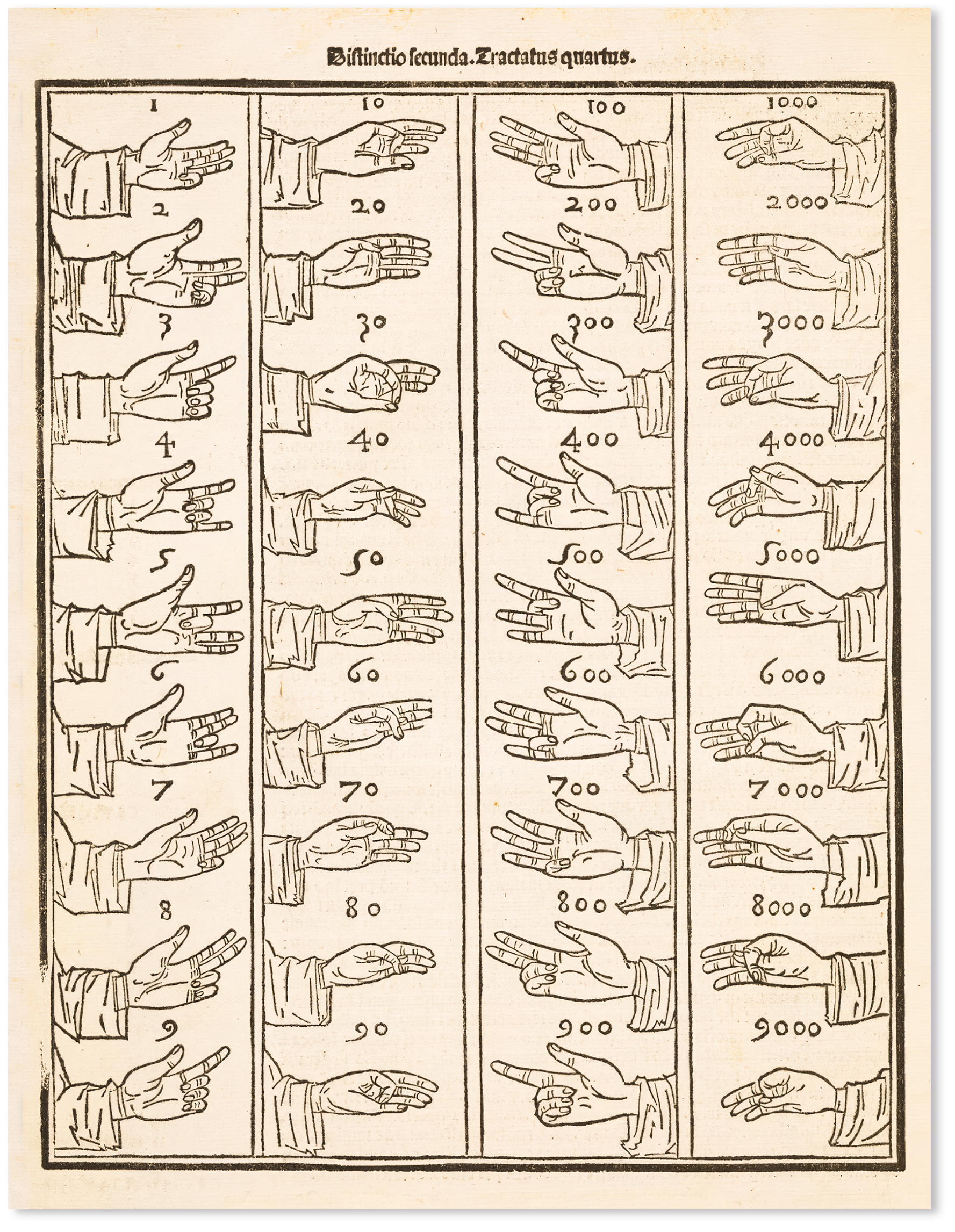

When you go further back, there are also complex systems for representing numbers that are not writing per se. I’m thinking in particular of the Incan quipus, which are systems of knotted cords that can encode sophisticated numerical information. And there’s a Roman finger-counting system, which continued to be pretty widely used through the Middle Ages in Europe, that could go up to 9,999 on two hands.

An illustration — found in the Summa de Arithmetica, an Italian treatise on algebra published in 1494 — of a Roman finger-counting system that allowed people to represent numbers up to 9,999 using two hands.

Public Domain

So why did writing win? Why don’t we teach people to do math with, say, a more pictorial or visual kind of notation?

There is some relevant history we can think about to get at this idea — in the different ways that Newton and Leibniz approached calculus and its notation.

For context, you’re referring to how Gottfried Leibniz and Isaac Newton independently invented calculus in the 17th century, using different notations, right?

That’s right. Newton understood his calculus to be much more rooted in geometry than Leibniz did. Newton’s masterpiece, the Principia, doesn’t introduce symbolic calculus — like Euclid, it opens with definitions and axioms and then has a lot of diagrams. It’s important to realize that [at the time] Euclid was still the foundational text of mathematics, while algebra was often seen as sort of a hack to get the value you wanted for a problem that was, in its “truer” form, geometric.


Leibniz wanted a much more algebraic, symbolic, notation-focused calculus. There’s this quote you see from him: “I dare say that this is the last effort of the human mind, and when this project shall have been carried out, all that men will have to do will be to be happy.” I mean, he sounds like an AI hype guy or something — this idea that our systems of writing can do the thinking for us. That’s really how Leibniz understood notation.

And his notation is the one we still use for calculus today?

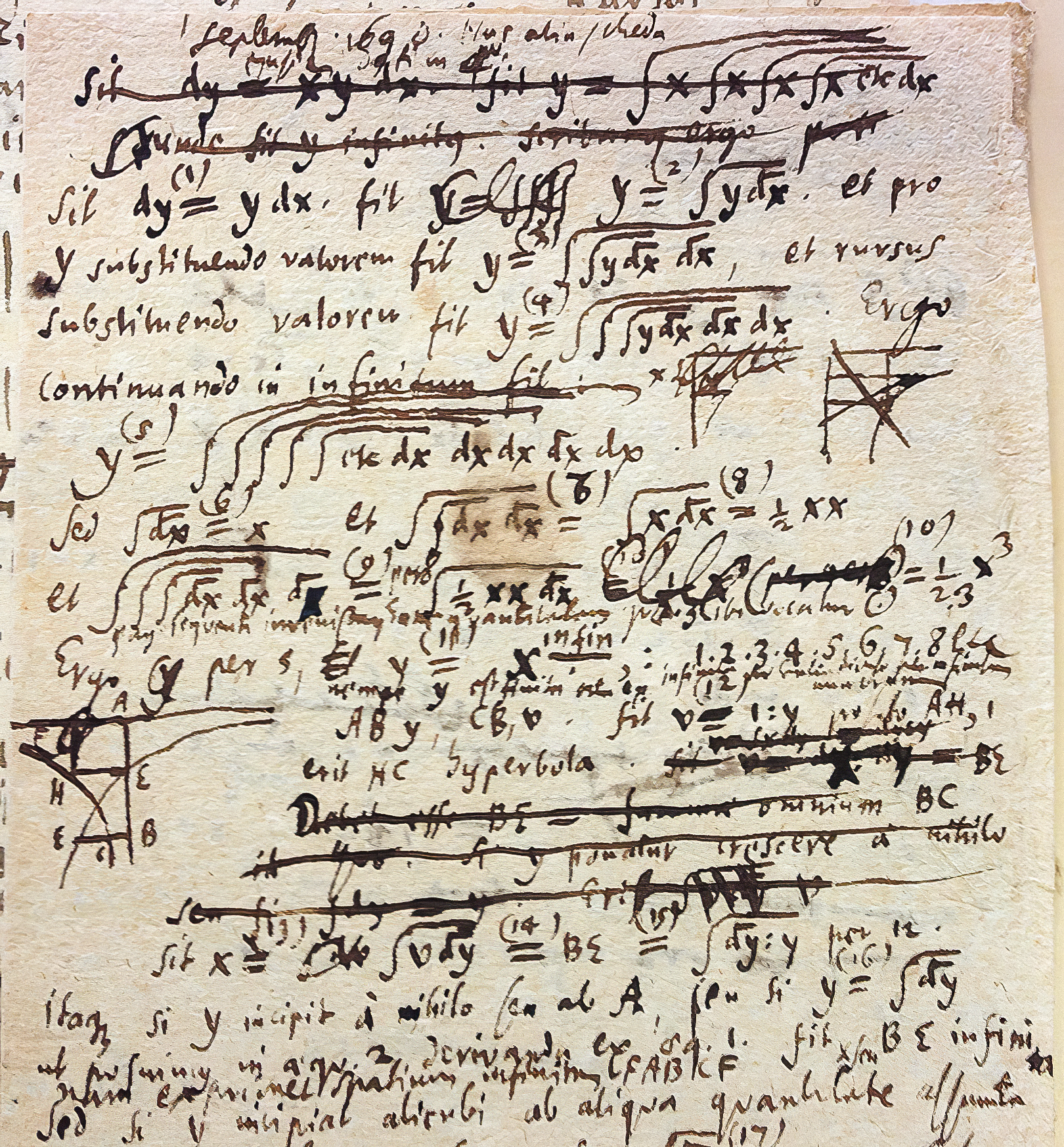

Yes — most significantly the integral sign, which is the most recognizable imagery of calculus. It’s an elongated “S,” for “sum.” Leibniz’s calculus got used a lot more in continental Europe, and it just grew and was fertile in a way that Newton’s wasn’t.

Is that because it actually made doing calculus easier, as Hindu-Arabic numerals did for arithmetic?

I do think to some degree that’s true. One thing people point to is that Leibniz’s dy/dx notation [for differentiation] invited “playing with” in ways that Newton’s doesn’t.
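A standard textbook illustration of that "playing with" (not something quoted from Dunning) is that Leibniz's symbols can be handled as if they were ordinary fractions. The manipulations below are heuristics that have to be justified by theorems such as the chain rule, and the functions g and h are placeholders introduced only for this example.

```latex
% Chain rule: the du's look as if they cancel.
\[
  \frac{dy}{dx} = \frac{dy}{du}\cdot\frac{du}{dx}
\]
% Derivative of an inverse function: the fraction looks as if it flips.
\[
  \frac{dx}{dy} = \frac{1}{\,dy/dx\,} \qquad \text{(where } dy/dx \neq 0\text{)}
\]
% Separating variables: dy and dx are moved to opposite sides, then integrated.
\[
  \frac{dy}{dx} = g(x)\,h(y)
  \quad\Longrightarrow\quad
  \int \frac{dy}{h(y)} = \int g(x)\,dx
\]
```

Newton's dot notation, in which $\dot{y}$ stands for the rate of change of $y$ with respect to time, names the same derivative but shows no numerator or denominator to rearrange.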

Notes that Gottfried Leibniz wrote about integral calculus, found in his archive in Hanover, Germany. His notation later gave rise to the notation we use in calculus today.

Gottfried Wilhelm Leibniz Bibliothek/Stephen Wolfram/Public Domain

But I don’t want to reduce it to that. Leibniz’s notation catches on because his circle of collaborators takes it and runs with it, and their successors — people like Euler and Lagrange and Laplace — develop analysis into a whole discipline in continental Europe over the next century. In England, Newton’s mathematical physics gets positioned as important, but not as the foundation of a research culture. So you get this situation where the English tradition that so venerates Newton isn’t actually keeping up with what Newtonian mechanics is like in other places.

In the early 19th century, young mathematics students in England are frustrated that they’re still being taught in Newton’s notation when all of math is happening in Leibniz’s. This is also a time when the French Revolution and the Napoleonic Wars make cultural exchange between England and Paris especially difficult. So [the notation] is not something that they can just quickly switch, and they don’t have the power to. It’s more of a gradual transition, as that generation works their way up to be in the position to set exam questions. And by the middle of the 19th century, English mathematics switches over to Leibniz’s notation, and the transformation is really complete.

Dunning works with the Smithsonian’s mathematics and computer collections.


Do notations always converge like this on something dominant?

Not always, and not always so clearly. Mathematical logic is an especially important example where you see a huge proliferation of notations — and how comfortable authors seem [to be] with it. Eventually they converge, more or less, but you also just see coexistence.

One reason is that [logicians] have very different goals, especially early on. George Boole, who publishes his first book on mathematical logic in 1847, believes that logic is mathematical, and that you can do the same logic as Aristotle more efficiently by representing syllogisms as equations. So for him, it’s very important to use existing algebraic notation: the system readers already know.
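To make "syllogisms as equations" concrete, here is a small Python sketch in the spirit of Boole's algebra. It is a modern simplification and an assumption of this illustration, not a reconstruction of Boole's text: each symbol takes only the value 1 or 0, recording whether one arbitrary individual does or does not belong to a class, and "All X are Y" is encoded as the equation x(1 - y) = 0. The function names are hypothetical.

```python
from itertools import product


def all_are(x: int, y: int) -> bool:
    """'All X are Y' written as the equation x * (1 - y) = 0, with x, y in {0, 1}."""
    return x * (1 - y) == 0


def barbara_is_valid() -> bool:
    """All X are Y, all Y are Z, therefore all X are Z."""
    return all(
        all_are(x, z)
        for x, y, z in product((0, 1), repeat=3)
        if all_are(x, y) and all_are(y, z)
    )


def shared_predicate_is_valid() -> bool:
    """All X are Y, all Z are Y, therefore all X are Z (a classic invalid form)."""
    return all(
        all_are(x, z)
        for x, y, z in product((0, 1), repeat=3)
        if all_are(x, y) and all_are(z, y)
    )
```

Checking every 0/1 assignment, `barbara_is_valid()` returns `True` and `shared_predicate_is_valid()` returns `False`. The second fails on x = 1, y = 1, z = 0. The point of the sketch is only that ordinary arithmetic on familiar symbols can carry a logical argument.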

But in 1879, Gottlob Frege, the German mathematician, publishes his Begriffsschrift, which means “concept script.” For him, the goal is the opposite: It’s to show that mathematics really is logic. To do that, his notation for logic needs to have no mathematical notation in it at all, because he’s going to eventually rebuild mathematics. And so he invents a system that looks nothing like prior mathematical notation.

For a while, this multiplicity [of notations] just becomes how logic works. Part of it is because logic doesn’t have one very clear application or one unified purpose. Different writers think it matters for different reasons, and that is reflected in what system of notation they think best serves their purposes. Keeping up with the literature meant constantly moving between these systems and thinking about what they could and couldn’t do.

This proliferation of notations isn’t unique to logic, but it uniquely matters. In the 1930s, you see this culminating period where you have Kurt Gödel and Alan Turing and Alonzo Church putting forward really important theorems [about incompleteness and computation] — where what a system of writing can do is the subject matter, is what you would prove a theorem about. And these kinds of meta-questions, I think, started by having this field where nobody writes the same way. It is not random that the context [they’re] working in is this tradition where there’s so many notations and you’re constantly paying attention to what they can do.

How is mathematical notation still evolving? Do we need to push it beyond writing?

I doubt that we are hitting the ceiling. Computers allow for all kinds of modeling, and I think we will probably see more and more areas of mathematics where the result is something dynamic: [models or simulations] of objects and processes that you just can’t print. But this is not unprecedented in the history of math.

We talked about paradigms other than writing in the more distant past, and in the late 19th century there was a real heyday of physical models. There were a lot of plaster geometric models, and we have a ton here at the [Smithsonian] museum. They used to be in every math department: You had your set of models for all sorts of different surfaces, and there was an attitude in which part of studying math was to cultivate this physical intuition for the forms that equations represented. You can see the model-making practice is actually a type of research exploration.

Analogously to that, computers open up a ton of possibilities for representations that are not typographic and will enable new questions to be asked.

A qualification we should have: We’re talking about elite mathematical discourse, and it’s a useful shorthand to call that “math,” but that actually leaves a lot out. The knowledge somebody uses in a grocery store, dealing with the prices of things and their budget, that is also mathematical. We have these notational technologies that allow us to not see this as significant math, but it is.

Has notation become widespread or familiar in other ways?

Another one that comes to mind is the use of x as a variable. It takes a long time for that practice to develop in mathematical history. And when children in grade school first arrive at the idea that we’re going to treat letters as if they’re numbers, it still hits [them] as something esoteric. But [now] it is very widely learned, and all sorts of people who would not consider themselves mathematical are comfortable using x in a sentence to stand in for the unknown. You can say, “Suppose I have x pounds of apples,” and someone who wants nothing to do with the actual manipulating of an equation is not put off by that way of speaking.

That’s what notation makes possible — the esoteric. And what counts as esoteric can change.