In New Paradox, Black Holes Appear to Evade Heat Death

Olena Shmahalo for Quanta Magazine

Introduction

Heat death held a morbid fascination for Victorian-era physicists. It was an early example of how everyday physics connects to the grandest themes in cosmology. Drop ice cubes into a glass of water, and you create a situation that is out of equilibrium. The ice melts, the liquid chills, and the system reaches a common temperature. Although motion does not cease — water molecules continue to reshuffle themselves — it loses any sense of progress, and the overall distribution of molecular speeds doesn’t change.

The 19th-century founders of thermodynamics realized that the same goes for the universe as a whole. Once the stars all burn out, whatever is left — gas, dust, stellar corpses, radiation — will come to equilibrium. “The universe from that time forward would be condemned to a state of eternal rest,” Hermann von Helmholtz wrote in 1854. Modern cosmology has not altered this basic picture.

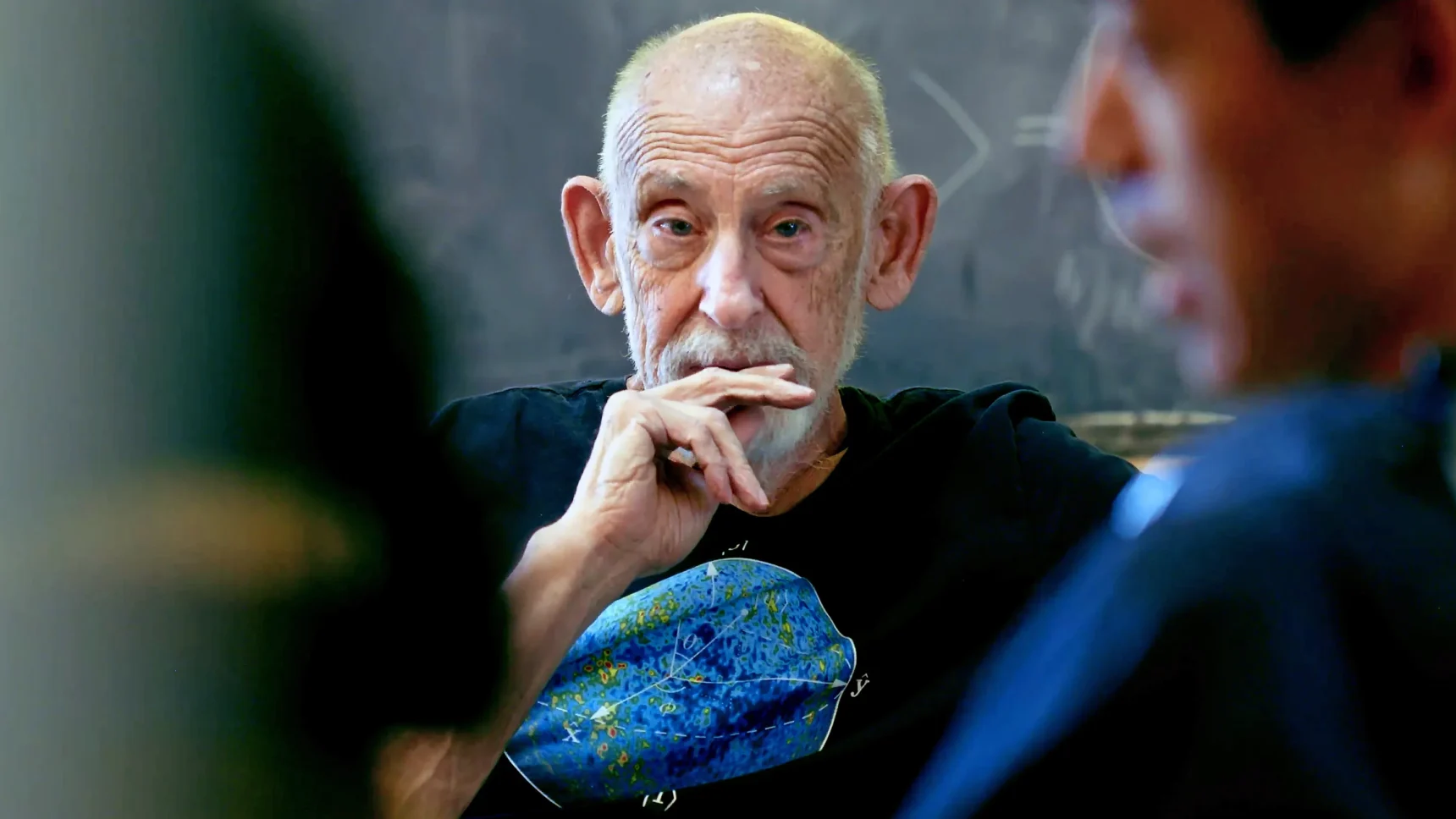

But lately physicists have been thinking that a supposedly heat-dead universe is a lot more interesting than it looks. Their story starts with a question about black holes — another riddle beyond the ones that get the most attention. According to our standard understanding of black holes, they continue to change long after they should have come to equilibrium. An investigation into why has led researchers to reconsider how things in general evolve — including the universe itself. “No one much thought about this because it’s just sort of boring: It looks like equilibrium, and nothing happens,” said Brian Swingle, a physicist at Brandeis University. “But then along came black holes.”

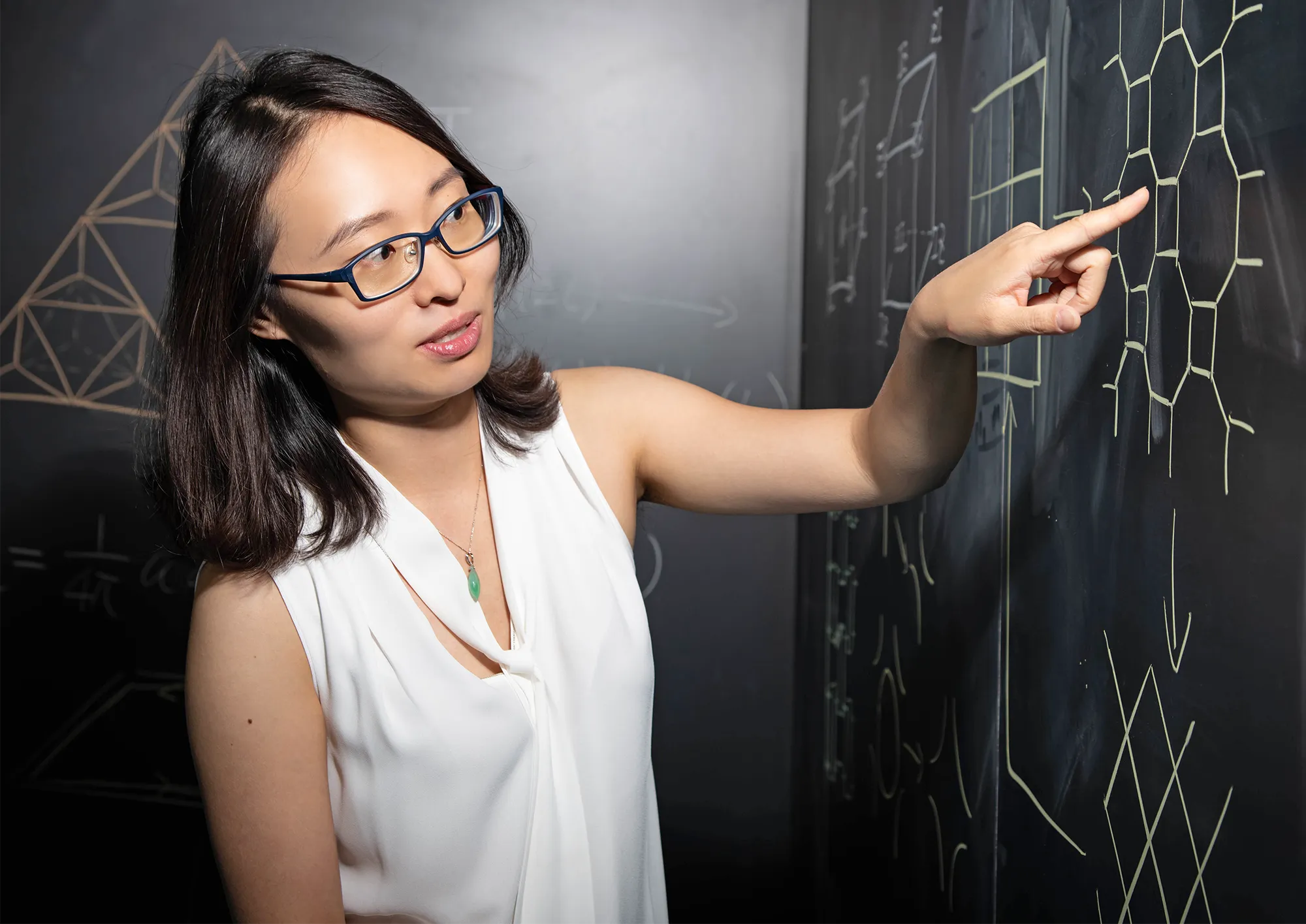

When an ice cube melts and attains equilibrium with the liquid, physicists usually say the evolution of the system has ended. But it hasn’t — there is life after heat death. Weird and wonderful things continue to happen at the quantum level. “If you really look into a quantum system, the particle distribution might have equilibrated, and the energy distribution might have equilibrated, but there’s still so much more going on beyond that,” said Xie Chen, a theoretical physicist at the California Institute of Technology.

Chen, Swingle and others think that, if an equilibrated system looks boring and blah, we’re just not looking at it in the right way. The action has moved from quantities that we can see directly to highly delocalized ones that require new measures to track. The favorite measure, at the moment, is known as circuit complexity. The concept originated in computer science and has been appropriated — misappropriated, some have grumbled — to quantify the blossoming patterns in a quantum system. The work is fascinating for the way it brings together multiple areas of science, not just black holes but also quantum chaos, topological phases of matter, cryptography, quantum computers, and the possibility of even more powerful machines.

A New Paradox of Black Holes

In the mid-20th century, black holes were mysterious because of the “singularity” at their core, a place where infalling matter becomes infinitely compacted, gravity intensifies without limit, and the known laws of physics break down. In the 1970s Stephen Hawking realized that the perimeter or “horizon” of a black hole is equally weird, creating the much-discussed information paradox. Both puzzles continue to perplex theorists, and they drive the search for a unified theory of physics.

In 2014 Leonard Susskind of Stanford University identified yet another conundrum: the black hole’s interior volume. From the outside, a black hole looks like a big black ball. According to Einstein’s general theory of relativity, the ball grows when stuff falls in, but otherwise it just sits there.

The inside looks very different, however. The spherical volume formula that you learned in grade school doesn’t apply. The problem is that spatial volume is defined at one moment in time. To calculate it, you have to slice up the space-time continuum into “space” and “time,” and inside a black hole there is no unique way to do that.

Susskind argued that the most natural choice is a slicing process that maximizes the spatial volume at every moment; by the logic of relativity, it amounts to the shortest distance across the hole. “It’s a natural volume analogue of the shortest-line rule,” said Adam Brown, a physicist at Stanford. And because the interior space-time is so warped, the volume by this measure grows with time forever. “The slice on which I measure this volume gets deformed more and more,” said Luca Iliesiu, a physicist also at Stanford.

This growth is weird because the black hole should be governed by the same laws of thermodynamics as the glass of water. If the ice and liquid eventually reach equilibrium, so should the hole. It should stabilize, not grow forever.

To formulate the paradox, Susskind applied a form of lateral thinking. The strategy, known as the AdS/CFT duality, conjectures that any situation in fundamental physics can be viewed in two mathematically equivalent ways, one with gravity, one without. The black hole is a strongly gravitating system — there are none stronger. It is mathematically equivalent to a nongravitational but strongly quantum system. In technical terms, the black hole is equivalent to a thermal state of quantum fields — essentially, a hot plasma made up of nuclear particles.

Xie Chen, a physicist at the California Institute of Technology, explores the far-reaching consequences of quantum entanglement.

Lance Hayashida

A black hole doesn’t look anything like a hot plasma, nor does a plasma seem to have anything to do with a black hole. That is what makes the duality so powerful. It relates two things that should not be related. If someone gave you such a plasma, you could measure its temperature, and that would be the temperature of the black hole. If you dropped material into the plasma, a ripple would reverberate through it, and that would be like the black hole swallowing an object. “The ripple gradually dissipates, and things return to equilibrium,” said Suvrat Raju, a theoretical physicist at the International Center for Theoretical Sciences in Bengaluru who has studied how AdS/CFT describes black holes.

The duality swaps the weirdness of gravity for the complexities of quantum theory, which for Susskind was an improvement. It let him pose the question of how the black hole should or shouldn’t evolve. A plasma reaches equilibrium quickly; its overall properties stop changing. But if it is mathematically equivalent to a black hole, whose interior volume continues to grow, something about the plasma must continue to evolve. Casting about for what that property could be, he proposed something that seems, on the face of it, to have nothing at all to do with either plasmas or black holes — or indeed, any physical system at all.

What Complexity Means

The property Susskind proposed is known as circuit complexity.

The word “circuit” has its origins in the “switching circuits” once used to route telephone calls. These circuits carry signals that are controlled by “gates,” which are electronic components that perform logical or arithmetic operations. A few basic types of gates can be strung together to implement more involved operations. All ordinary computers are built this way.

The inventors of quantum computers adopted the same framework. A quantum circuit acts on its basic units of information, qubits, using a standardized repertoire of gates. Some gates perform familiar operations such as addition, while others are quintessentially quantum. A “controlled NOT” gate, for example, can bind two qubits together into an indivisible whole, known as an entangled state.
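To make the gate picture concrete, here is a minimal sketch of a two-gate quantum circuit, written in plain Python rather than with any quantum library. It assumes the textbook matrices for the Hadamard and controlled NOT gates; the names `HADAMARD_FIRST`, `apply` and `is_entangled` are made up for this illustration.

```python
import math

S = 1 / math.sqrt(2)

# Basis order for two qubits: |00>, |01>, |10>, |11>.
# Hadamard on the first qubit, identity on the second.
HADAMARD_FIRST = [
    [S, 0, S, 0],
    [0, S, 0, S],
    [S, 0, -S, 0],
    [0, S, 0, -S],
]

# Controlled NOT: flip the second qubit only when the first qubit is 1.
CNOT = [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 1],
    [0, 0, 1, 0],
]

def apply(gate, state):
    """Multiply a gate (list of rows) into a state (list of four amplitudes)."""
    return [sum(g * a for g, a in zip(row, state)) for row in gate]

def is_entangled(state, tol=1e-9):
    """A two-qubit pure state factorizes exactly when a00*a11 - a01*a10 is zero."""
    a00, a01, a10, a11 = state
    return abs(a00 * a11 - a01 * a10) > tol

start = [1.0, 0.0, 0.0, 0.0]                   # both qubits in state 0
after_hadamard = apply(HADAMARD_FIRST, start)  # gate 1: still two independent qubits
bell = apply(CNOT, after_hadamard)             # gate 2: the qubits are now entangled

print(is_entangled(after_hadamard), is_entangled(bell))
```

The first gate alone leaves the qubits independent; only after the controlled NOT does the state stop factorizing into one description per qubit.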

Inside a quantum computer, qubits might be particles, ions or superconducting current loops. But in general, their precise physical form doesn’t matter. Any system composed of discrete units can be recast as a circuit, even a system that looks nothing like a computer. “The air molecules in a room are moving around and banging into each other, and we can think of any collision as a gate,” said Nicole Yunger Halpern, a quantum information theorist at the University of Maryland.

Though a technical concept, circuit complexity is not far from what we mean by “complexity” in daily life. When we say a job is complex, we usually mean that it involves a large number of steps. In a quantum system, complexity is the number of elementary gates (or operations) needed to replicate a particular state. Complexity according to this definition is an integer — the number of gates — but researchers have also explored using geometric concepts to define complexity as a continuous or real number.

Susskind applied this concept to the hot plasmas that, through the AdS/CFT duality, are equivalent to black holes. He suggested that, even after the plasma reaches a condition of thermal equilibrium, its quantum state does not stop evolving. It becomes ever more complex. The ripples that are reverberating through the plasma dissipate but do not entirely go away, and they are still there if you look at the plasma at the quantum level. Attempting to re-create another plasma with the same pattern of ripples would become increasingly laborious.

Susskind thus laid out his solution to the problem of the ever-growing black hole: The black hole is equivalent to a nuclear plasma; the volume of the black hole is mathematically equivalent to the circuit complexity of the plasma; and because the circuit complexity keeps growing, so must the volume.

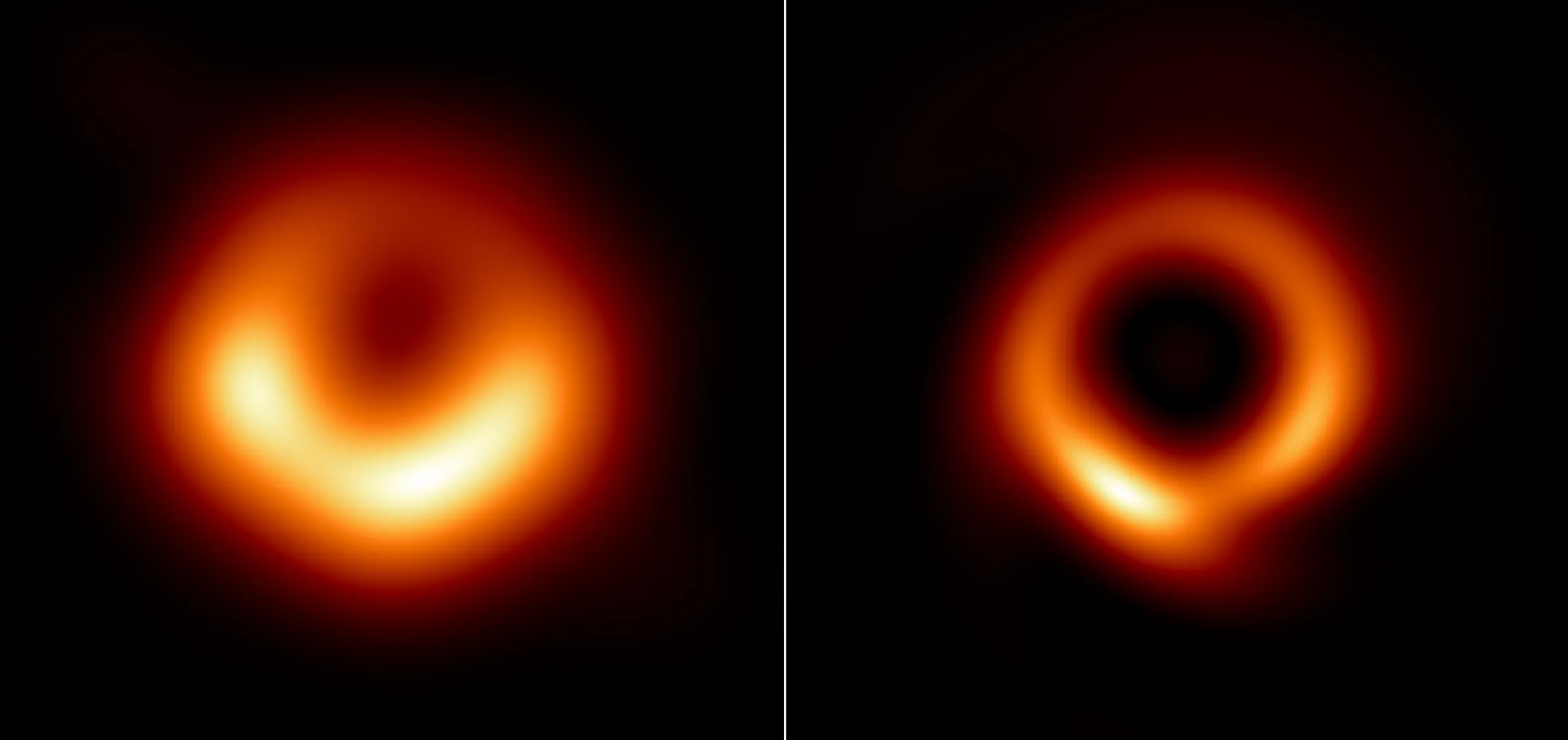

The black hole at the heart of the M87 galaxy as originally seen in 2017 (left) and after recent data processing by a machine learning algorithm (right).

Medeiros (Institute for Advanced Study), D. Psaltis (Georgia Tech), T. Lauer (NSF’s NOIRLab), and F. Ozel (Georgia Tech)

Borrowed Without Permission

Computer scientists, on first hearing the proposal, were aghast. They never intended circuit complexity to describe the evolution of physical systems. The concept just measures the intrinsic difficulty of a computational task. “The point of circuit complexity is to try to capture those rare examples where you can compute something more quickly,” said Aram Harrow, a physicist at the Massachusetts Institute of Technology.

For instance, consider multiplying two numbers. In the usual procedure of long multiplication, you multiply every digit by every other digit. Increase the number of digits, and the number of steps scales up as the square of that number. Yet this turns out to be wasteful; the circuit complexity of multiplication is lower than the grade school method would imply.
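One well-known shortcut is Karatsuba's method, which replaces four half-size multiplications with three. The sketch below is an illustration chosen for this purpose, not something the researchers quoted here used. It tallies single-digit products under the assumption that additions, shifts by powers of 10 and digit counting are free; the function name and the `counter` argument are hypothetical.

```python
def karatsuba(x, y, counter):
    """Multiply non-negative integers x and y by Karatsuba's method.

    counter is a one-element list; counter[0] tallies single-digit products.
    """
    if x == 0 or y == 0:
        return 0
    if x < 10 and y < 10:
        counter[0] += 1
        return x * y
    # Split both numbers around the same power of 10.
    half = max(len(str(x)), len(str(y))) // 2
    base = 10 ** half
    high_x, low_x = divmod(x, base)
    high_y, low_y = divmod(y, base)
    # Three recursive products instead of the four a naive split would need.
    z0 = karatsuba(low_x, low_y, counter)
    z2 = karatsuba(high_x, high_y, counter)
    z1 = karatsuba(low_x + high_x, low_y + high_y, counter) - z0 - z2
    return z2 * base * base + z1 * base + z0

x = int("3141592653" * 26)   # two arbitrary 260-digit numbers
y = int("2718281828" * 26)
tally = [0]
product = karatsuba(x, y, tally)
digits = len(str(x))
print(product == x * y, tally[0], "digit products versus", digits * digits)
```

For numbers of only a few digits, the bookkeeping makes this slower than long multiplication. The advantage shows up only as the numbers grow, when the count rises roughly as the number of digits to the power 1.585 rather than squared.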

Computer scientists couldn’t see what any of this had to do with physics. Circuit complexity for them is a theoretical tool for evaluating algorithms, not a physical quantity. Suppose someone gave you an algorithm that yielded the digits 3, 1, 4, 1, 5, 9. On the face of it, these digits look like the product of a long, complex algorithm. They have no obvious pattern; they look random, which is a state of maximal complexity. The only algorithm that can produce a random series of digits is one that has those digits preprogrammed into it. Only because someone told you years ago do you recognize that those digits are not random after all, but rather the start of π, and therefore the output of a simple algorithm.

Without that helpful tip, the only way to ascertain the circuit complexity would be trial and error: trying out every possible circuit, looking for one that reproduced the digits. In fact, it wouldn’t be enough to find just one — you’d need to find each and every circuit and then take the absolute shortest. “It’s very hard to ‘feel’ or estimate the complexity of these functions,” said Adam Bouland, a computer scientist at Stanford.
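The trial-and-error search can be sketched on a toy system. The example below assumes a three-bit classical register and a made-up gate set of NOT and controlled NOT gates, not a real quantum circuit. It tries circuits in order of length, so the first one that matches the target is guaranteed to be a shortest one.

```python
from collections import deque
from itertools import permutations

N_BITS = 3
STATES = range(2 ** N_BITS)

def not_gate(i):
    """Flip bit i. A gate is stored as a tuple mapping each input state to its output."""
    return tuple(s ^ (1 << i) for s in STATES)

def cnot_gate(control, target):
    """Flip the target bit only when the control bit is 1."""
    return tuple(s ^ (1 << target) if (s >> control) & 1 else s for s in STATES)

GATES = [not_gate(i) for i in range(N_BITS)] + [
    cnot_gate(c, t) for c, t in permutations(range(N_BITS), 2)
]

def circuit_complexity(target):
    """Fewest gates from GATES whose composition equals target, or None if unreachable."""
    identity = tuple(STATES)
    depth = {identity: 0}
    queue = deque([identity])
    while queue:                      # breadth-first: shorter circuits are tried first
        current = queue.popleft()
        if current == target:
            return depth[current]
        for gate in GATES:
            nxt = tuple(gate[s] for s in current)   # run current circuit, then gate
            if nxt not in depth:
                depth[nxt] = depth[current] + 1
                queue.append(nxt)
    return None

def swap_bits_0_1():
    """Target operation: exchange the values of bit 0 and bit 1."""
    out = []
    for s in STATES:
        b0, b1 = s & 1, (s >> 1) & 1
        out.append((s & ~3) | (b0 << 1) | b1)
    return tuple(out)

print(circuit_complexity(swap_bits_0_1()))  # → 3
```

With three bits the search visits barely a thousand distinct operations. Each added bit multiplies the number of candidates enormously, which is why exhaustive search stops being practical almost immediately.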

Scott Aaronson, a computer scientist at the University of Texas, Austin who spent years cajoling his physics friends to think about computational complexity, felt dubious now that it had happened. “I’ve been banging the drum for a long time about complexity theory being potentially relevant to fundamental physics, but then once Lenny got involved, then I was in the very odd position of trying to put on the brakes,” he recalled.

The Code to Be Broken

Although computer scientists could see Susskind’s point that complexity grows and a black hole’s interior volume also grows, they doubted there was a real connection. Either some other quantity was equivalent to interior volume, or the AdS/CFT duality was wrong and the search for such a quantity was a wild goose chase.

To investigate further, Bouland, Bill Fefferman at the University of Chicago and Umesh Vazirani at the University of California, Berkeley dissected Susskind’s proposal, studying both sides of the holographic duality. On one side, they analyzed the black hole and its interior volume. On the other, they took up the hot plasma to which it is supposedly equivalent.

Start with the hole. This was supposed to be the easy bit. Researchers had been assuming all along that, although circuit complexity seems like a theoretical abstraction, the interior volume of a black hole as Susskind defined it is a measurable quantity. Building contractors measure the volume of spaces all the time.

But astronauts plummeting into a black hole aren’t in any condition to break out a tape measure. Once they cross the event horizon, they are moving at the speed of light toward certain doom. “They don’t have much time in there before they hit the singularity, so they can’t possibly feel the entire space,” Bouland said.

Adam Bouland, a computer scientist at Stanford University, explored the connections between black holes and computational complexity.

Mary Bender

He and his co-authors realized that you don’t need to jump into a black hole. The black hole is governed by the laws of gravity, so if you can simulate those laws on a computer with enough precision, you get as much information as you would taking the plunge for real. So they imagined a simulation that includes a team of astronauts who enter the hole from different directions. They beam laser signals to one another, and each will see some of the others’ signals, but not all of them, depending on the volume of the interior. Although no single person has time to assemble the data, you — as the physicist running the simulation — can do that for them. “We can gain a God’s-eye view over the space-time which is not accessible to anyone within it,” Bouland said. It turned out that, despite the researchers’ initial worries, the black hole’s interior volume is eminently calculable.

Then they turned their attention to the plasma. They conceived of it cryptographically, as a so-called block cipher. Block ciphers go back to the 1970s and are the core of most modern encryption schemes. With such a cipher, you reshuffle the message characters multiple times using a code key, thus hiding the text behind multiple layers of misdirection. Code breakers are reduced to brute force: guessing the key to see whether they can recover meaningful text. But they succeed only if they guess the key precisely; because of all the reshuffling, even a single error will yield gibberish. So breaking the code is computationally hard.
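The all-or-nothing behavior of a key can be seen in a toy cipher. The sketch below uses a Feistel network, one common block-cipher construction, with a round function improvised from SHA-256. That round function, the round count and the function names are assumptions made for illustration; this is not a secure cipher and not the construction the researchers analyzed. Strictly speaking it mixes bits rather than reshuffling characters, but the layering of key-dependent rounds is the same idea.

```python
import hashlib

ROUNDS = 8

def _round_value(key, round_index, half):
    """Key-dependent mixing for one round (an illustrative choice, not a standard)."""
    digest = hashlib.sha256(key + bytes([round_index]) + half).digest()
    return digest[: len(half)]

def _xor(a, b):
    return bytes(x ^ y for x, y in zip(a, b))

def encrypt_block(block, key):
    """Scramble an even-length block of at most 64 bytes through ROUNDS layers."""
    n = len(block) // 2
    left, right = block[:n], block[n:]
    for r in range(ROUNDS):
        left, right = right, _xor(left, _round_value(key, r, right))
    return left + right

def decrypt_block(block, key):
    """Undo the layers in reverse order; this works only with the exact key."""
    n = len(block) // 2
    left, right = block[:n], block[n:]
    for r in reversed(range(ROUNDS)):
        left, right = _xor(right, _round_value(key, r, left)), left
    return left + right

message = b"ATTACK AT DAWN!!"
scrambled = encrypt_block(message, b"quanta")
print(decrypt_block(scrambled, b"quanta"))   # the right key recovers the message
print(decrypt_block(scrambled, b"quantb"))   # a key one character off does not
```

A near-miss key gives no hint that it is close, which is what forces a code breaker back to guessing.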

A block cipher doesn’t seem anything like a plasma, let alone a black hole, but the reshuffling of code characters is analogous to the churning of particles in the plasma. Bouland and his co-authors demonstrated their mathematical equivalence. Furthermore, decrypting a message encoded with a block cipher is equivalent to inferring the circuit complexity of a quantum state.

Putting the two sides of the AdS/CFT duality together, the researchers were faced with an apples-and-oranges problem. The black hole volume is fairly straightforward to calculate, but the circuit complexity is anything but. If the two quantities are equivalent, an easy calculation would hand you the answer to a hard one. The entire field of theoretical computer science is built on the principle that computational tasks fall into distinct classes of complexity. Hard is hard, easy is easy, and never the twain shall meet.

The bottom line is that computer scientists couldn’t brush off Susskind’s conjecture as the misappropriation of their concept of circuit complexity. In fact, the black hole volume paradox was now as much a problem for them as for physicists, because it threatened to collapse gradations of computational difficulty.

The Hard Problems

To resolve this paradox, researchers needed to ensure that hard stays hard. Something about the supposedly easy volume calculations has to be secretly hard. Bouland and his co-authors considered two options.

First, maybe black holes aren’t so straightforward to simulate after all. If they’re not, then you can’t calculate their interior volume so easily. But that would violate the entire conception of a computer. A computer is defined as a universal device capable of simulating anything in nature efficiently. Computer scientists regard this generality — which goes by the somewhat unwieldy name of the quantum extended Church-Turing thesis — as a deep principle on a par with any law of physics. It reflects, ultimately, the reductionist structure of nature. By recapitulating this structure, a computer can do anything nature can. “A world that didn’t have this kind of programmability would also be one that didn’t break up into little parts interacting by simple rules,” Harrow said.

As profound as this thesis is, a violation is not entirely implausible. Scientists have been here before. The original extended Church-Turing thesis, formulated for ordinary computers, is now widely thought to be wrong, because an ordinary computer apparently can’t efficiently simulate everything in nature. In particular, to efficiently simulate a quantum system, you need a quantum computer.

Maybe history is repeating itself. Maybe the physics governing black holes, the quantum theory of gravity, is beyond the power even of quantum computers. If so, you might well learn things by jumping into a black hole that you couldn’t learn just by simulating it. In effect, a black hole would be a computer as powerful in comparison to a quantum computer as a quantum computer is compared to a classical one. “You can jump into a black hole and learn things fast that a quantum computer would take a very long exponential time to compute,” Susskind suggested. Then you would need a quantum gravitational extended Church-Turing thesis.

While this is possible, most theorists think quantum gravity should still be quantum, and thus within reach of quantum computers. Susskind, Aaronson and other luminaries debated this scenario for much of last year and now think any violation would be, at the least, very hard to engineer.

So they are inclined to accept Bouland, Fefferman and Vazirani’s other option: that the act of translating from black hole to plasma, or vice versa, is computationally taxing. The black hole may itself be comparatively easy for a computer to analyze, and the same may go for the plasma, but your computer could spend a near-eternity mapping a property on one side to its equivalent on the other. The translation software doing the mapping “would involve something that is exponentially hard to compute, even for a quantum computer,” Aaronson said. When the mapping is so convoluted, a hard problem will always be hard, whether you try to solve it directly or use the AdS/CFT duality, hoping it will be easier.

Bill Fefferman, a computer scientist at the University of Chicago, found that translating between equivalent descriptions of a black hole is computationally difficult.

Department of Computer Science, University of Chicago

The AdS/CFT duality has been reliably blowing people’s minds for over 25 years. It’s hard to visualize how such different systems as a black hole and a hot plasma could be equivalent. Now it seems that the difficulty is not just a failure of the human imagination, but a feature of the mathematics.

As a result of all this, computer scientists have come around to Susskind’s view that circuit complexity is a perfectly legitimate physical quantity. They hadn’t liked it because it was hard, verging on impossible, to measure or compute. But if the black-hole-to-plasma translation is hard, then any quantity that is equivalent to black hole volume is going to be hard to compute. The difficulty of calculating circuit complexity is not a strike against it. To the contrary, it is precisely what you would expect. When the translation is hard, a measurable physical quantity on one side is necessarily “unfeelable” on the other. “The unfeelability is simply a reflection of the extreme difficulty of making the dictionary from one to the other,” Susskind said. “I think physicists have not really realized the implications of this.”

Susskind is also gratified that his sharpest critics have become his closest allies. “I was amused,” he said. “I watched them go through their contortions. They’re very good scientists. And in the end the conclusion was no, complexity is the only possible thing that it could be.”

The Pace of Space

A second potential problem with Susskind’s conjecture is that the circuit complexity of a hot plasma might not grow at the right rate. It seems intuitive, even trivial, that circuit complexity would grow with time. With each passing moment, more happens to the hot plasma. So it stands to reason that ever more operations would be required to reproduce its present state.

The trouble, though, is that circuit complexity is being pressed into a task for which it was not originally intended. The operations occurring in the hot plasma are uncontrolled random interactions, not the predictable logic operations of a computer algorithm. So theorists can’t be sure what will happen. The plasma might undergo a million interactions, creating an increasingly complex quantum state, and then the next interaction might abruptly leave it in a simple state — one that could have been created using a mere 1,000 interactions. It wouldn’t matter that the plasma had undergone a million and one interactions; the complexity is defined by the number of interactions it needs to undergo to reach the end point.

It would be like setting out to explore your neighborhood, turning left at some intersections and right at others, and eventually arriving at a hole-in-the-wall restaurant you’d never seen before. Your sense of accomplishment would turn to chagrin when you realized it was just across the street from your house. The distance from your house to the restaurant depends on their relative positions, not on how much walking you’ve done.
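The neighborhood stroll is easy to simulate. In the sketch below, which assumes a square street grid and a walker who picks a direction at random at every corner, the number of steps stands in for the interactions the plasma has undergone and the distance from home stands in for its complexity. The function names are invented for this illustration.

```python
import random

def wander(steps, seed=0):
    """Take `steps` random one-block moves on a street grid; return the final (x, y)."""
    rng = random.Random(seed)
    x = y = 0
    for _ in range(steps):
        dx, dy = rng.choice([(1, 0), (-1, 0), (0, 1), (0, -1)])
        x += dx
        y += dy
    return x, y

def blocks_from_home(position):
    """Shortest walking distance back to the start, counted in blocks."""
    x, y = position
    return abs(x) + abs(y)

steps = 1000
distance = blocks_from_home(wander(steps))
print("walked", steps, "blocks, ended", distance, "blocks from home")
```

On a flat grid, a random walker typically ends up only about as far from home as the square root of the number of steps, so shortcuts are the norm. The open question is whether the space of quantum states is so much roomier that shortcuts become vanishingly rare.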

Susskind’s original argument for why this shouldn’t happen — why the complexity should grow in a continuous linear trend — was that the space of possibilities is, to quote Douglas Adams, vastly, hugely, mind-bogglingly big. Susskind thought it very unlikely that the system would stumble into a simpler state. But turning this intuition into a solid argument has been tough.

In one of several approaches that theorists have taken, Fernando Brandão, a quantum computing scientist at Caltech, and his co-authors studied what happens when a system undergoes one random interaction after another. It enters states that are uniformly spread through the space of possibilities, forming a set known as a design. It turns out that a chaotic system will naturally create a sequence of designs that approximate a truly random distribution with increasing refinement. Because randomness is maximal complexity, getting closer to randomness means that the system grows ever more complex, and at nearly the same rate at which the black hole interior grows bigger.

But Brandão’s approach and others make some debatable simplifications, and not all match the black hole perfectly, so a full proof remains on theorists’ to-do list.

A New Second Law

Not letting the lack of a rigorous proof stop them, Susskind and Brown suggested in 2018 that the steady growth of complexity qualifies as a new law of nature, the second law of quantum complexity — a quantum analogue of the second law of thermodynamics. The second law of thermodynamics holds that closed systems increase in entropy until they reach thermal equilibrium, the state of maximal entropy. According to Susskind and Brown, the same happens with complexity. A system increases in complexity for eons after it reaches thermal equilibrium. But it does eventually plateau, reaching “complexity equilibrium.” At that point, a quantum system has explored every possible state it is capable of and will finally lose any sense of progress.

The eventual plateauing of circuit complexity led Susskind to revisit his original motivation for considering circuit complexity — namely, the growth of black hole interiors. General relativity predicts that they grow forever, but the fun has to end sometime. That means general relativity itself must eventually fail. Theorists already had plenty of reasons to suspect that black holes ultimately need to be described by a quantum theory of gravity, but the cessation of volume growth is a new one.

In 2021 Iliesiu, Márk Mezei of Oxford University, and Gábor Sárosi of CERN studied what that means for black holes. They used a standard quantum physics method known as the path integral, which has the nice feature of being agnostic to whatever the full quantum theory of gravity is, be it string theory or one of its competitors. The theorists found that quantum effects accumulate like barnacles on a ship’s hull and eventually arrest the growth of the interior. At that point, the black hole’s interior geometry changes. This is an additional milestone in black hole evolution, with no obvious relation to events that theorists already knew about, such as the final evaporation and disappearance of the object.

The Five Stages of Quantum Systems

So far, all of this concerns black holes. But the black holes really just reveal a more general principle about matter. Gradually emerging from all this work is a picture of the full life cycle of quantum systems — the chaotic ones, which means most of them, including the universe as a whole. According to this picture, they go through five distinct stages.

The first is initialization. The system starts simply: just a bunch of particles or other building blocks, acting independently.

Then comes thermalization. The particles bounce around and collide with one another, eventually reaching thermal equilibrium. Their shenanigans also begin to link the particles through quantum entanglement. In a process that Susskind calls “scrambling,” information is disseminated through the system until it no longer resides in localized places, much as a butterfly flapping its wings in Brazil can affect weather over the whole globe. “Operators that are initially local have spread over the entire system in a butterfly-effect-like way,” said Nick Hunter-Jones, a theoretical physicist at the University of Texas, Austin.

Next is complexification. Here, the system is in thermal equilibrium but has not stopped evolving. It keeps getting more complex, but in a way that is almost invisible to standard measures such as entropy. Theorists rely instead on circuit complexity, which expresses the increasingly intricate linkages among entangled particles. “Complexity is really like a microscope into the entanglement structure of the system,” Hunter-Jones said. This stage lasts exponentially longer than thermalization.

Then the system reaches complexity equilibrium, where complexity hits a ceiling. Although the system continues to change, it can no longer be said to evolve — it has no sense of directedness, but wanders among equal states of maximal complexity.

The last stage is called recurrence, where the system stumbles back into its original simple condition. For this to happen by accident is highly improbable. But eternity is a long time, so it ultimately does happen, after a period of time that is not merely exponential, but an exponential of an exponential. The whole process then repeats.

In short, quantum systems that reach thermal equilibrium are like the happy couples in romantic comedies. The film typically ends when the couple gets married, as if that were the end of one’s love life. In reality, it’s just the start.