Old Problem About Mathematical Curves Falls to Young Couple

Nadzeya Makeyeva for Quanta Magazine

Introduction

A basic fact of geometry, known for millennia, is that you can draw a line through any two points in the plane. Any more points, and you’re out of luck: It’s not likely that a single line will pass through all of them. But you can pass a circle through any three points that don’t all lie on one line, and a conic section (an ellipse, parabola or hyperbola) through any five.
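To make the five-point claim concrete, here is a minimal sketch that finds a conic through five given points. It assumes the general conic equation A x² + B xy + C y² + D x + E y + F = 0 and solves for the six coefficients by exact elimination; the function names `conic_through` and `evaluate_conic` are illustrative, not from any library.

```python
from fractions import Fraction

def conic_through(points):
    """Return coefficients (A, B, C, D, E, F) of a conic
    A x^2 + B xy + C y^2 + D x + E y + F = 0 through five points."""
    # Each point gives one linear equation in the six unknown coefficients.
    rows = [[Fraction(v) for v in (x * x, x * y, y * y, x, y, 1)]
            for x, y in points]
    pivots = []
    r = 0
    # Gauss-Jordan elimination to reduced row echelon form.
    for c in range(6):
        if r == len(rows):
            break
        p = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue
        rows[r], rows[p] = rows[p], rows[r]
        pivot = rows[r][c]
        rows[r] = [v / pivot for v in rows[r]]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
    # Five equations in six unknowns always leave at least one free column.
    free = next(c for c in range(6) if c not in pivots)
    coeffs = [Fraction(0)] * 6
    coeffs[free] = Fraction(1)
    for row, c in zip(rows, pivots):
        coeffs[c] = -row[free]
    return coeffs

def evaluate_conic(coeffs, x, y):
    """Value of the conic's defining polynomial at (x, y)."""
    A, B, C, D, E, F = coeffs
    return A * x * x + B * x * y + C * y * y + D * x + E * y + F

sample_points = [(1, 0), (0, 1), (-1, 0), (0, -1), (1, 1)]
conic = conic_through(sample_points)
print(all(evaluate_conic(conic, x, y) == 0 for x, y in sample_points))
```

Five points give five linear conditions on six coefficients, and since rescaling all six coefficients leaves the curve unchanged, that is just enough to pin down a conic. For special configurations the conic found this way may be degenerate, such as a pair of lines.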

More generally, mathematicians want to know when you can draw a curve through arbitrarily many points in arbitrarily many dimensions. It’s a fundamental question, known as the interpolation problem, about algebraic curves, which are among the most central objects in mathematics. “This is really about just understanding what curves are,” said Ravi Vakil, a mathematician at Stanford University.

But curves that live in higher dimensions, despite having been studied with state-of-the-art tools for hundreds of years, are tricky beasts. In two-dimensional space, a curve can be cut out by a single equation: A line might be written as y = 3x − 7, a circle as x² + y² = 1. In spaces of three or more dimensions, however, a curve gets much more complicated, and it is often defined by so many equations in so many variables that you cannot possibly hope to fully understand its geometry. As a result, a curve’s most basic properties can be exceedingly difficult to grasp, including the seemingly simple notion of whether it passes through some collection of points in space.

For centuries, mathematicians have been proving cases of the interpolation problem: Can you, for instance, put a curve with certain specified properties through 16 points in three-dimensional space, or a billion points in five-dimensional space? That work has not only allowed them to answer important questions in algebraic geometry, but also helped inspire developments in cryptography, digital storage and other areas well beyond pure mathematics.

Still, Vakil said, it’s not enough to understand interpolation for most curves. Mathematicians want to know it for all of them.

Merrill Sherman/Quanta Magazine

Now, in a proof posted online earlier this year, two young mathematicians at Brown University, Eric Larson and Isabel Vogt, have dealt the problem its final blow, solving it completely and systematically. The paper marks the culmination of nearly a decade of work, during which they gradually chipped away at the question, solved important related problems about what curves look like and how they behave, and also got married.

“It’s really a remarkable story,” said Sam Payne, a mathematician at the University of Texas, Austin, “for [people] that young and that early in their mathematical development to latch on to such a deep, hard problem, and then to be so persistent.”

Embedding Curves

The solution to the interpolation problem builds on work that dates back to the 19th century — work that answers an even more basic question. What algebraic curves are out there at all?

A curve is a one-dimensional object that sits in a higher-dimensional space. While it’s not usually clear how to describe a curve using specific equations, mathematicians can characterize it based on certain numerical properties instead. The first of these is the dimension of the space that the curve lives in. The second is the degree of the curve, which is the number of times it intersects with a hyperplane — a subspace whose dimension is one less than that of the space. For instance, a circle in the two-dimensional plane has degree 2, because when it’s sliced with a one-dimensional line, the line generally hits it at two points. Meanwhile, the degree of a curve embedded in 20-dimensional space is the number of times it intersects with a 19-dimensional hyperplane. You can think of the degree as a kind of measure of how twisted up the curve is.
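The degree-2 claim for the circle can be checked directly. The sketch below assumes the unit circle x² + y² = 1 and a non-vertical line y = m·x + b, and counts intersection points over the complex numbers, which is the setting in which the count is reliably two (a tangent line gives one point counted twice). The function name is illustrative.

```python
import cmath

def circle_line_intersections(m, b):
    """Points where the line y = m*x + b meets the unit circle
    x^2 + y^2 = 1, allowing complex coordinates."""
    # Substituting y = m*x + b gives a quadratic in x:
    # (1 + m^2) x^2 + 2 m b x + (b^2 - 1) = 0
    a2, a1, a0 = 1 + m * m, 2 * m * b, b * b - 1
    root = cmath.sqrt(a1 * a1 - 4 * a2 * a0)
    xs = [(-a1 + root) / (2 * a2), (-a1 - root) / (2 * a2)]
    return [(x, m * x + b) for x in xs]

# The line y = 3x - 7 misses the circle in the real plane,
# yet it still meets it in two complex points.
for x, y in circle_line_intersections(3, -7):
    print(x, y)
```

The substitution always produces a quadratic, which is why the count is two regardless of where the line sits.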

The third number that mathematicians use to describe a curve is called its genus. Because a curve is a one-dimensional object that is defined in terms of complex numbers, each of its points can also be written as a pair of real numbers, rather than one complex number. This means that from a topological point of view, a curve actually looks like a two-dimensional surface — and that surface can have holes. (A typical example is the surface of a doughnut.) A curve’s genus, then, is how many holes it has.

Before mathematicians could even start to contemplate what curves of a given genus and degree might look like, they needed to figure out when such curves could exist in the first place. Even that turned out to be a big challenge.

In the 1870s, the mathematicians Alexander von Brill and Max Noether (father of the famous mathematician Emmy Noether) formulated a prediction using only three properties of a curve: its genus (g), or number of holes; its degree (d), or twistiness; and the number of dimensions (r) of the space it lives in. They conjectured that you can embed a curve of a given genus in a space of a given number of dimensions if and only if the degree of that curve is sufficiently large, that is, if the curve is sufficiently floppy. They wrote out a precise inequality in terms of g, d and r, and argued that only if that inequality held would a curve of your choosing be possible.

But their argument fell short of an actual proof. That wouldn’t come for more than a century, when in 1980 Phillip Griffiths and Joe Harris used modern techniques in algebraic geometry to show that Brill and Noether’s prediction was indeed true, establishing what is now called the Brill-Noether theorem. (Since then, mathematicians have produced around half a dozen different proofs of the theorem and have developed a rich theory around it.)
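The article does not spell out the inequality. In its standard textbook form, the Brill-Noether condition says that a general curve of genus g admits a suitable degree-d map to r-dimensional projective space exactly when the number ρ = g − (r + 1)(g − d + r) is at least zero. A minimal sketch of that check follows; the function names are illustrative, and the sketch ignores the finer distinction between a map and an embedding.

```python
def brill_noether_number(g, r, d):
    """rho(g, r, d) = g - (r + 1) * (g - d + r)."""
    return g - (r + 1) * (g - d + r)

def satisfies_brill_noether(g, r, d):
    """True when the Brill-Noether inequality rho >= 0 holds."""
    return brill_noether_number(g, r, d) >= 0

# Genus 0 in 3-space: degree 3 (the twisted cubic) is the smallest allowed.
print(satisfies_brill_noether(0, 3, 3), satisfies_brill_noether(0, 3, 2))
# Genus 4 in 3-space: degree 6 is allowed, degree 5 is not.
print(satisfies_brill_noether(4, 3, 6), satisfies_brill_noether(4, 3, 5))
```

For fixed g and r the value of ρ grows with d, which matches the article's description that the degree has to be sufficiently large.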

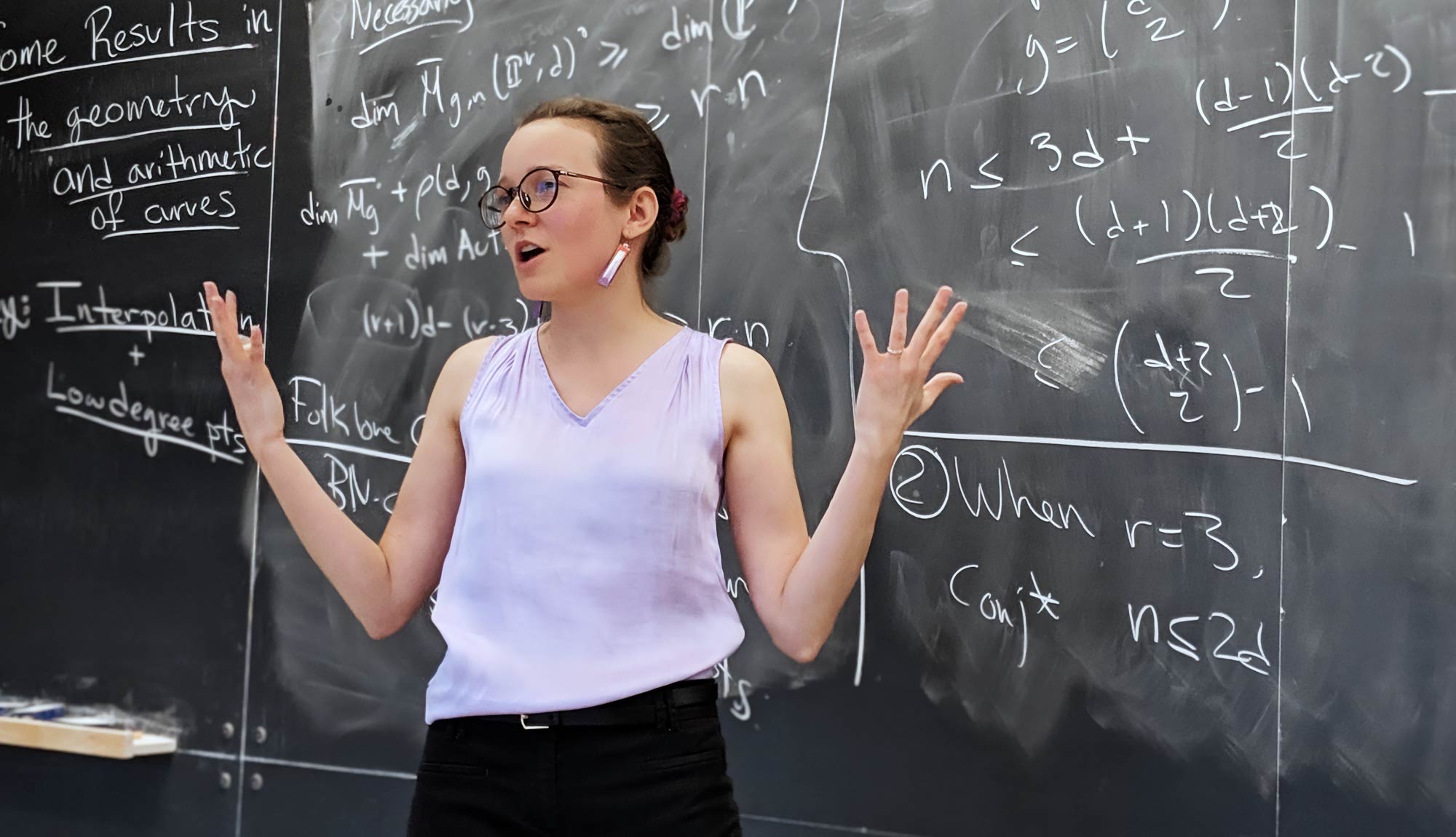

Isabel Vogt, a mathematician at Brown University, works at the intersection of algebraic geometry and number theory. Much of her work focuses on the geometry of algebraic curves.

Padmavathi Srinivasan

The result finally made it possible for mathematicians to return to the interpolation problem — that is, figuring out how many random points in r-dimensional space a curve of genus g and degree d could pass through. (Here, the curve is said to be “general,” meaning that it doesn’t get embedded in space in a special way.) Based on Brill and Noether’s work, they had an educated guess about what the answer to that question should be. As with the Brill-Noether theorem, it came in the form of a particular inequality that the curve’s parameters needed to satisfy — this time written not just in terms of g, d and r, but also in terms of n, the number of points.

But unlike in the Brill-Noether theorem, there were clear exceptions to this rule, cases where the geometry of a curve restricted how many points it would otherwise have been expected to pass through. “That’s already an indication that this is a hard theorem, this is a deep theorem, this requires a lot of work,” Payne said.
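For readers who want the inequality itself: as stated in Larson and Vogt's paper, to the best of our reading, the expected condition is (r − 1)·n ≤ (r + 1)·d − (r − 3)·(g − 1). The sketch below turns that into the expected maximum number of points. It assumes r ≥ 2 and that the Brill-Noether condition already holds, and it does not account for the exceptions; the function name is illustrative.

```python
def expected_max_points(d, g, r):
    """Largest n with (r - 1) * n <= (r + 1) * d - (r - 3) * (g - 1).
    Assumes r >= 2; ignores the known exceptional cases."""
    return ((r + 1) * d - (r - 3) * (g - 1)) // (r - 1)

# Sanity checks against classical facts:
print(expected_max_points(1, 0, 2))  # a line in the plane: 2 points
print(expected_max_points(2, 0, 2))  # a conic in the plane: 5 points
print(expected_max_points(3, 0, 3))  # a twisted cubic in 3-space: 6 points
```

The first two values reproduce the counts for lines and conics from the start of the article, which is a useful check that the formula is being read correctly.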

That’s the problem that Larson and Vogt got interested in. They were inspired in part by Harris, who was one of their professors when they were undergraduate students together at Harvard University, where they met in 2011. Harris later became Larson’s doctoral adviser and Vogt’s co-adviser when, as graduate students at the Massachusetts Institute of Technology, they started working on interpolation in earnest.

Breaking the Problem

Larson began his involvement with the interpolation problem while he was working on another major question in algebraic geometry known as the maximal rank conjecture. When, as a graduate student, he set his sights on this conjecture — which had been open for more than a century — it seemed like “a really dumb idea, because this conjecture was like a graveyard,” Vakil said. “He was trying to chase something which people much older than him had failed at over a long period of time.”

But Larson kept at it, and in 2017, he presented a full proof that established him as a rising star in the field.

The key to that proof involved working out various cases of the interpolation problem. That was because a big part of Larson’s approach to the maximal rank conjecture (which is also about algebraic curves) was to break a curve of interest into multiple curves, study their properties, then glue them back together in just the right way. And to glue those simpler curves together, he had to make each of them pass through the same group of points, which in turn meant solving an interpolation problem. “Interpolation gives you a machine for building these [more complicated] curves,” Larson said.

Vogt was already working on interpolation. In the first paper she wrote in graduate school, she proved all cases of interpolation (including all exceptions) in three-dimensional space; the following year, she teamed up with Larson to solve the problem in four-dimensional space as well. While the couple has since collaborated on other projects, “this is how we got started working together,” Vogt said. That same year — which was also the year Larson posted his proof of maximal rank — they got married. Since then, they’ve often found themselves discussing ideas after dinner, working through problems on the chalkboards they have in their home.

The interpolation problem asks if a certain type of curve can pass through a given collection of random points. To settle it, the duo would have to show that the curve could wiggle in space in a particular way. Consider, for instance, three points on a line. If you move one point slightly away from the line but keep the other two points fixed, you can’t shift the line in any way that would allow it to pass through the new configuration of points. Trying to hit all three would force the line to bend, so that it would no longer be a line. And so a line can interpolate through two points but not three.
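The three-point example can be checked with a few lines of exact arithmetic. This sketch, with illustrative function names, builds the unique line through two points and tests whether a third point lies on it before and after a small nudge.

```python
from fractions import Fraction

def line_through(p, q):
    """Coefficients (a, b, c) of the line a*x + b*y + c = 0 through p and q."""
    (x1, y1), (x2, y2) = p, q
    a, b = y2 - y1, x1 - x2
    return a, b, -(a * x1 + b * y1)

def on_line(line, point):
    """True if the point satisfies the line's equation exactly."""
    a, b, c = line
    return a * point[0] + b * point[1] + c == 0

line = line_through((0, 0), (2, 2))      # two fixed points determine the line
print(on_line(line, (1, 1)))             # the third point starts on the line
print(on_line(line, (1, Fraction(11, 10))))  # after a small nudge it does not
```

Once two points are fixed, the line has no freedom left, so any movement of the third point breaks the incidence.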

The mathematicians wanted to figure out something similar for more complicated curves in higher-dimensional spaces — to shift them at certain points, and study how they moved.

To do so, they needed to look at a structure called the normal bundle of the curve, which essentially controls how the curve can wiggle around. The interpolation question could then be rewritten as a problem about computing properties of the normal bundle of a curve.

But normal bundles get prohibitively difficult to study for the more complicated curves that Larson and Vogt were concerned with. So they used a similar strategy to the one Larson used in the proof of the maximal rank conjecture. Given a curve, they broke it into pieces, but delicately, just so. “They took the problem, and they broke it, but in just the right way so they could see exactly what was going on,” Vakil said.

Take a simple example. Say you have a hyperbola in the plane, a single curve that looks like a pair of mirror-image arcs facing away from each other. You can “deform” this curve until it breaks into two simpler curves, in this case a pair of lines that cross each other in an X shape. Some aspects of the geometry of the hyperbola are still reflected in the geometry of those lines. But since the lines are simpler, they’re easier to work with, and it’s easier to analyze their normal bundles.
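One concrete way to picture this deformation, chosen here for illustration and not taken from the article, is the family of curves x² − y² = t. For t > 0 each member is a hyperbola, and at t = 0 the equation factors as (x − y)(x + y) = 0, the pair of crossing lines. The sketch tracks a point at a fixed height on the hyperbola and measures how far it sits from the X shape as t shrinks.

```python
import math

def hyperbola_point(t, y):
    """Point on the right-hand branch of x^2 - y^2 = t (t >= 0) at height y."""
    return math.sqrt(t + y * y), y

def distance_to_cross(x, y):
    """Distance from (x, y) to the nearer of the lines y = x and y = -x."""
    return min(abs(x - y), abs(x + y)) / math.sqrt(2)

# As t shrinks to 0, the hyperbola closes in on the two crossing lines.
gaps = [distance_to_cross(*hyperbola_point(t, 1.0))
        for t in (1.0, 0.1, 0.001, 0.0)]
print(gaps)
```

The gap shrinks steadily and vanishes at t = 0, where the point lands exactly on one of the two lines.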

That said, you can’t simply look at the normal bundles of the individual lines and immediately translate that to an understanding of the normal bundle of the hyperbola. That’s because at the point where the two lines meet, the normal bundle misbehaves, in a sense. Instead, mathematicians have to study the normal bundle with certain modifications.

Of course, Larson and Vogt weren’t looking at hyperbolas and lines, but at much more intricate situations. They would start by splitting a complex curve into two pieces: a line and a simpler (but still complicated) curve that met that line at either one or two points. They would then break the more complicated curve into two, and repeat the process again, and again, and again, until they reduced everything to truly simple “base” curves, “the sort of thing that you can work out with your bare hands,” Vogt said. Throughout that process, they had to keep track of the normal bundles of the pieces — and all the modifications to those normal bundles that piled up — to prove what they needed to prove about the original normal bundle.
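The repeated splitting can be followed with simple bookkeeping on degree and genus. The toy sketch below is only an illustration of that arithmetic and is not Larson and Vogt's actual procedure: it ignores the ambient dimension, the normal bundle modifications, and the real choice of base cases. It relies on one standard fact: attaching a line to a curve at k points raises the degree by 1 and the genus by k − 1, so peeling a line off reverses that. The function names and the stopping rule (stop at a single line) are hypothetical.

```python
def peel_line(d, g, k):
    """Remove a line that meets the rest of the curve at k points.
    Degree drops by 1 and genus drops by k - 1."""
    if k not in (1, 2):
        raise ValueError("the line meets the rest at one or two points")
    if d <= 1 or g - (k - 1) < 0:
        raise ValueError("nothing left to peel")
    return d - 1, g - (k - 1)

def toy_degeneration(d, g):
    """List the (degree, genus) pairs seen while peeling lines off.
    Hypothetical rule: use two-point lines while genus remains,
    then one-point lines, stopping at a single line."""
    steps = [(d, g)]
    while d > 1:
        k = 2 if g > 0 else 1
        d, g = peel_line(d, g, k)
        steps.append((d, g))
    return steps

print(toy_degeneration(5, 2))
```

Even in this stripped-down form, each step leaves a strictly simpler curve, which is what lets an inductive argument eventually reach cases that can be handled directly.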

But these ways of breaking the curves up weren’t enough. They didn’t work for all the kinds of curves covered by the Brill-Noether theorem.

Larson and Vogt had to introduce a new method for breaking their curves up — a method that didn’t involve one of the pieces being a line. Figuring that out was a challenge, not only because it simply might not do what they wanted it to at a given step in their argument, but also because they had to watch out for the exceptions where the interpolation statement didn’t hold true. “Your argument has to be sufficiently complicated, because you can’t ever [end up with] an exception” as your base case, Vogt said. “That would be really bad.”

As a graduate student in 2017, the mathematician Eric Larson solved the maximal rank conjecture, a major open problem about algebraic curves.

Lori Nascimento

Eventually they found a way to do this. “It’s technically extremely difficult. It’s a very, very demanding construction argument,” Harris said. “Frankly, I think it requires somebody with the exceptional skills of Larson and Vogt to carry it out.”

At the same time, they developed methods for dealing with all the modifications to the normal bundle that piled up over the course of that argument. “It’s an amazing feat, keeping track of all these data, and being able to carry it through,” said Gavril Farkas, a mathematician at the Humboldt University of Berlin.

“Eric is really good at this thing,” Vogt said. Izzet Coskun, a mathematician at the University of Illinois who frequently collaborates with Larson and Vogt, agreed. “Eric is a little bit scary,” he said. “Most of us, we see a set of 12 inequalities, we give up and our eyes glaze over … but he doesn’t give up. There’s nothing too complicated for him.”

Ultimately, Larson and Vogt proved that curves will always interpolate through the expected number of points, with the exception of four special cases. They provided geometric reasons for why those four types of curves interpolate through an unexpected number of points. With that, they’d completed the problem once and for all.
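The article does not name the four special cases. As we recall them from the abstract of Larson and Vogt's paper, they are the (degree, genus, dimension) triples (5, 2, 3), (6, 4, 3), (7, 2, 5) and (10, 6, 5); treat that list as our recollection and check it against the paper itself. The sketch below records the triples and computes, for each, the point count that the general inequality (r − 1)·n ≤ (r + 1)·d − (r − 3)·(g − 1) would have predicted. The names are illustrative.

```python
# (d, g, r) triples recalled from the paper's abstract; an assumption here.
EXCEPTIONAL_CASES = {(5, 2, 3), (6, 4, 3), (7, 2, 5), (10, 6, 5)}

def expected_points(d, g, r):
    """Largest n allowed by (r - 1) * n <= (r + 1) * d - (r - 3) * (g - 1)."""
    return ((r + 1) * d - (r - 3) * (g - 1)) // (r - 1)

def expected_count_is_attained(d, g, r):
    """False exactly for the four exceptional (d, g, r) triples."""
    return (d, g, r) not in EXCEPTIONAL_CASES

for d, g, r in sorted(EXCEPTIONAL_CASES):
    print((d, g, r), "predicted count (not attained):", expected_points(d, g, r))
```

In each of these four cases the curve passes through fewer general points than the printed prediction, for the geometric reasons the authors identify.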

“They make the arguments seem very natural. Like, it seems very unsurprising,” said Dave Jensen, a mathematician at the University of Kentucky. “Which is odd, because this is a result that other people tried to prove and were unable to.”

“It’s sheer perseverance. It’s more than that. It’s brilliant, actually, to be able to finish it,” Farkas said. “It’s quite something to see.”

A Family Legacy

While this proof might mark the end of one narrative thread, the story is far from over — from both a mathematical and a personal standpoint.

There’s no lack of questions you can ask about curves. And Larson and Vogt’s work provides a recipe of sorts for getting ahold of these central but elusive mathematical objects. “I think that a lot of the classical problems now are more approachable,” Coskun said. “Things that we would have thought there’s no way that you can even start to think about that … now you can ask.”

Meanwhile, Larson’s younger sister, Hannah Larson, is also a mathematician — currently a Clay fellow after earning her doctorate from Stanford University this spring — working on related questions about algebraic curves and Brill-Noether theory. “She is a machine,” said Vakil, who was her doctoral adviser. “She can do anything.”

She recently developed a new proof of the Brill-Noether theorem that Vakil called state-of-the-art. And she’s also been working, both independently and in collaboration with her brother and Vogt, on proving an analogue of the Brill-Noether theorem for certain special curves. “They’re a really impressive family,” Jensen said.

“It’s so fun to have something that we can do together like this,” Hannah Larson said of working with her brother and sister-in-law.

Like her brother, Hannah was inspired to study the material as an undergraduate, after taking a class taught by Harris. But she also credits Eric and Isabel for some of her interest in the subject as well. “When you hang out with somebody and you see how much fun they’re having doing math, or a particular kind of math, it made me want to try it too,” she said.

“What’s really neat [is that] they get along ridiculously well,” Vakil said. “People should not get along as well as the three of them get along.”

They’re now continuing to forge ahead in illuminating what different kinds of curves look like, how they behave, and what that might mean for other mathematical problems. “So this story is not yet complete in any way,” Hannah Larson said.