A Computer Scientist Who Tackles Inequality Through Algorithms

Constanza Hevia for Quanta Magazine


When Rediet Abebe arrived at Harvard University as an undergraduate in 2009, she planned to study mathematics. But her experiences with the Cambridge public schools soon changed her plans.

Abebe, 29, is from Addis Ababa, Ethiopia’s capital and largest city. When residents there didn’t have the resources they needed, she attributed it to community-level scarcity. But she found that argument unconvincing when she learned about educational inequality in Cambridge’s public schools, which she observed struggling in an environment of abundance.

To learn more, Abebe started attending Cambridge school board meetings. The more she discovered about the schools, the more eager she became to help. But she wasn’t sure how that desire aligned with her goal of becoming a research mathematician.

“I thought of these interests as different,” said Abebe, a junior fellow of the Harvard Society of Fellows and an assistant professor at the University of California, Berkeley. “At some point, I actually thought I had to choose, and I was like, ‘OK, I guess I’ll choose math and the other stuff will be my hobby.’”

After college Abebe was accepted into a doctoral program in mathematics, but she deferred enrollment to attend an intensive one-year math program at the University of Cambridge. While there, she decided to switch her focus to computer science, which allowed her to combine her talent for mathematical thinking with her strong desire to address social problems related to discrimination, inequity and access to opportunity. She went on to earn a doctorate in computer science at Cornell University.

Today, Abebe uses the tools of theoretical computer science to help design algorithms and artificial intelligence systems that address real-world problems. She has modeled the role played by income shocks, like losing a job or government benefits, in leading people into poverty, and she’s looked at ways of optimizing the allocation of government financial assistance. She’s also working with the Ethiopian government to better account for the needs of a diverse population by improving the algorithm the country uses to match high school students with colleges.

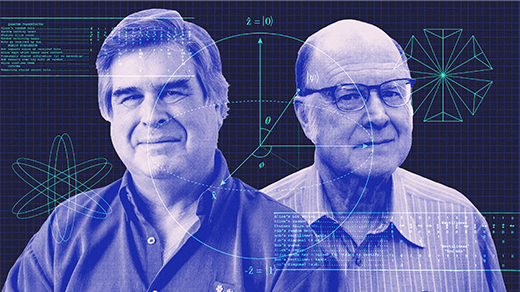

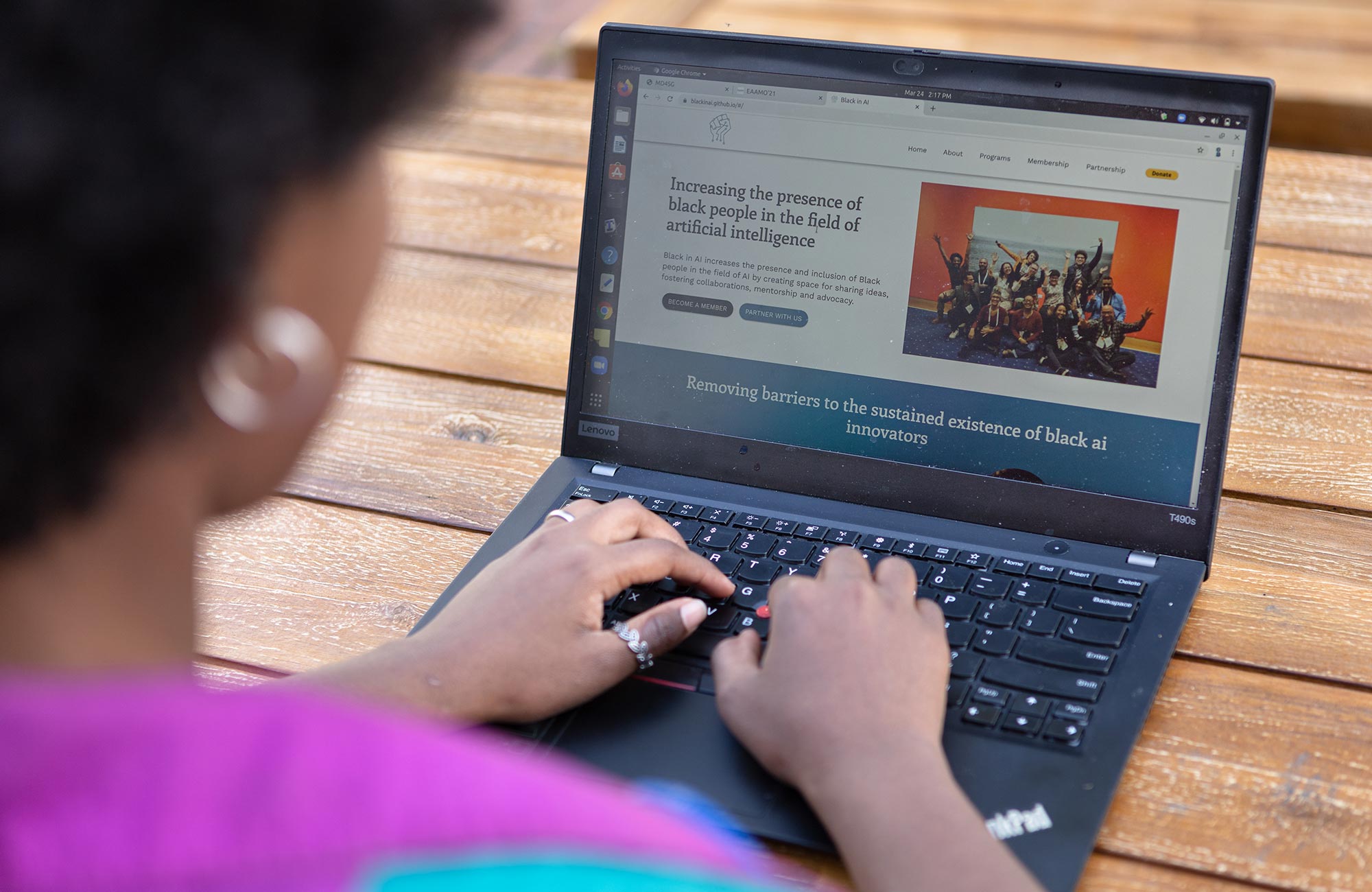

Abebe is a co-founder of the organizations Black in AI — a community of Black researchers working in artificial intelligence — and Mechanism Design for Social Good, which brings together researchers from different disciplines to address social problems.

Quanta Magazine spoke with Abebe recently about her childhood fear that she’d be forced to become a medical doctor, the social costs of bad algorithmic design, and how her background in math sharpens her work. This interview is based on multiple phone interviews and has been condensed and edited for clarity.

You’re currently involved in a project to reform the Ethiopian national educational system. The work was born in part from your own negative experiences with it. What happened?

In the Ethiopian national system, when you finished 12th grade, you’d take this big national exam and submit your preferences for the 40-plus public universities across the country. There was a centralized assignment process that determined what university you were going to and what major you would have. I was so panicked about this.

Why?

I realized I was a high-scoring student when I was in middle school. And the highest-scoring students tended to be assigned to medicine. I was like 12 and super panicked that I might have to be a medical doctor instead of studying math, which is what I really wanted to do.

What did you end up doing?

I thought, “I may have to go abroad.” I learned that in the U.S., you can get full financial aid if you do really well and get into the top schools.

So you went to Harvard as an undergraduate and planned to become a research mathematician. But then you had an experience that changed your plans. What happened?

I was excited to study math at Harvard. At the same time, I was interested in what was going on in the city of Cambridge. There was a massive achievement gap in Cambridge's elementary schools. Many students who were Black, Latinx, low-income, immigrants or students with disabilities were performing two to four grades below their peers in the same classroom. I was really interested in why this was happening.

You eventually switched focus from math to computer science. What about computer science made you think that it was a place you could work on social issues that you care about?

It’s an inherently outward-looking field. Let’s take a government organization that has income subsidies it can give out. And it has to do so under budget constraints. You have some objective you’re trying to optimize for and some constraints around fairness or efficiency. So you have to formalize that.

From there, you can design algorithms and prove things about them. So you can say, “I can guarantee that the algorithm does this; I can guarantee that it gives you the optimal solution or at least it’s this close to the optimal solution.”
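As a generic illustration of such a guarantee (a textbook example, not one of Abebe's models), suppose the agency must choose which households to fund, each with a hypothetical integer subsidy cost and benefit score, without exceeding its budget. That is the 0/1 knapsack problem, and a classic approximation algorithm takes the better of two candidates: greedily funding households in order of benefit per unit cost, or funding only the single most beneficial household that fits. The better of the two is provably at least half the optimal total benefit. The sketch assumes nonnegative benefits and includes an exact dynamic-programming solver for comparison.

```python
from typing import List, Tuple


def greedy_half_approx(costs: List[int], benefits: List[float], budget: int) -> Tuple[float, List[int]]:
    """Return (total benefit, chosen indices); guaranteed to be >= 1/2 of the optimum."""
    # Only households that fit within the budget on their own are candidates.
    feasible = [i for i in range(len(costs)) if costs[i] <= budget]

    # Candidate (a): scan by benefit per unit cost, funding each household that still fits.
    order = sorted(
        feasible,
        key=lambda i: benefits[i] / costs[i] if costs[i] > 0 else float("inf"),
        reverse=True,
    )
    chosen, spent, total = [], 0, 0.0
    for i in order:
        if spent + costs[i] <= budget:
            chosen.append(i)
            spent += costs[i]
            total += benefits[i]

    # Candidate (b): the single most beneficial household that fits.
    if feasible:
        best = max(feasible, key=lambda i: benefits[i])
        if benefits[best] > total:
            return benefits[best], [best]
    return total, chosen


def optimal(costs: List[int], benefits: List[float], budget: int) -> float:
    """Exact 0/1 knapsack value via dynamic programming over integer budgets."""
    best = [0.0] * (budget + 1)
    for c, b in zip(costs, benefits):
        # Iterate budgets downward so each household is funded at most once.
        for cap in range(budget, c - 1, -1):
            best[cap] = max(best[cap], best[cap - c] + b)
    return best[budget]
```

On costs `[5, 4, 3]`, benefits `[10, 7, 6]` and a budget of 7, the greedy rule funds only the first household (benefit 10) while the optimum funds the other two (benefit 13), still within the promised factor of two. Candidate (b) matters on inputs like costs `[1, 10]`, benefits `[2, 10]` and a budget of 10, where pure greedy would stop at a benefit of 2.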

Does your math background still help?

Math and theoretical computer science force you to be precise. Ambiguity is a bug in mathematics. If I give you a proof and it’s vague, then it’s not complete. On the algorithmic side of things, it forces you to be very explicit about what your goals are and what the input is.

Within computer science, what would you say is your research community?

I’m one of the co-founders and an organizer for Mechanism Design for Social Good. We started in 2016 as a small online reading group that was interested in understanding how we can use techniques from theoretical computer science, economics and operations research communities to improve access to opportunity. We were inspired by how algorithmic and mechanism design techniques have been used in problems like improving kidney exchange and the way students are assigned to schools. We wanted to explore where else these techniques, combined with insights from the social sciences and humanistic studies, can be used.

The group grew steadily. Now it’s massive and spans over 50 countries and multiple disciplines, including computer science, economics, operations research, sociology, public policy and social work.
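One well-known algorithm from school-choice redesigns, including those in Boston and New York City, is Gale-Shapley deferred acceptance. Below is a minimal sketch of its student-proposing version; the student and school names, priorities and capacities are hypothetical, and deployed systems add tie-breaking and other rules not shown here. Students propose to schools in order of preference, each school tentatively holds its highest-priority applicants up to capacity, and rejected students move on to their next choice.

```python
from typing import Dict, List


def deferred_acceptance(
    student_prefs: Dict[str, List[str]],
    school_priorities: Dict[str, List[str]],
    capacities: Dict[str, int],
) -> Dict[str, str]:
    """Student-proposing deferred acceptance; returns {student: school}."""
    # rank[school][student] = position in that school's priority list (lower is better).
    rank = {s: {st: r for r, st in enumerate(order)} for s, order in school_priorities.items()}
    next_choice = {st: 0 for st in student_prefs}  # index of each student's next proposal
    held: Dict[str, List[str]] = {s: [] for s in capacities}  # tentatively accepted students
    free = list(student_prefs)  # students not currently held by any school

    while free:
        st = free.pop()
        prefs = student_prefs[st]
        if next_choice[st] >= len(prefs):
            continue  # list exhausted: the student stays unassigned
        school = prefs[next_choice[st]]
        next_choice[st] += 1
        if st not in rank[school]:
            free.append(st)  # school does not rank this student; try the next choice
            continue
        held[school].append(st)
        held[school].sort(key=lambda x: rank[school][x])
        if len(held[school]) > capacities[school]:
            free.append(held[school].pop())  # reject the lowest-priority student held

    return {st: s for s, sts in held.items() for st in sts}


matching = deferred_acceptance(
    student_prefs={"a": ["X", "Y"], "b": ["X", "Y"], "c": ["X"]},
    school_priorities={"X": ["b", "a", "c"], "Y": ["a", "b", "c"]},
    capacities={"X": 1, "Y": 2},
)
```

Here school X has one seat and prioritizes b, so b is placed at X, a is bumped to Y, and c, who listed only X, stays unassigned.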

The term “mechanism design” may not be immediately familiar to a lot of people. What does it mean?

Mechanism design is like algorithm design, except you're aware that the input data could be strategically manipulated. So you're trying to create something that's robust to that.
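A standard textbook illustration, not one taken from the interview, is the sealed-bid second-price (Vickrey) auction: the highest bidder wins but pays the second-highest bid, which makes bidding one's true value a dominant strategy. The sketch below uses hypothetical bidders and values and checks that none of a handful of misreports improves a bidder's payoff over telling the truth.

```python
from typing import Dict, Tuple


def second_price_auction(bids: Dict[str, float]) -> Tuple[str, float]:
    """Highest bid wins; the winner pays the second-highest bid (0 if unopposed)."""
    # Sort bidders by bid, highest first; ties are broken by name for determinism.
    ranked = sorted(bids.items(), key=lambda kv: (-kv[1], kv[0]))
    winner = ranked[0][0]
    price = ranked[1][1] if len(ranked) > 1 else 0.0
    return winner, price


def utility(bidder: str, true_value: float, bids: Dict[str, float]) -> float:
    """A bidder's payoff: true value minus price if they win, otherwise zero."""
    winner, price = second_price_auction(bids)
    return true_value - price if winner == bidder else 0.0


if __name__ == "__main__":
    values = {"ana": 10.0, "ben": 7.0, "cy": 4.0}
    truthful = utility("ben", values["ben"], values)
    # Ben tries several misreports; none beats bidding his true value.
    for fake in [0.0, 5.0, 9.0, 12.0, 20.0]:
        lied = utility("ben", values["ben"], {**values, "ben": fake})
        assert lied <= truthful
```

Overbidding to 12 lets Ben win, but he then pays Ana's bid of 10 for an item he values at 7, so manipulation only hurts him.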

When you see a social problem that you want to work on, what’s your process for getting started?

Let’s say I’m interested in income shocks and what impact those have on people’s economic welfare. First I go and learn from people from other disciplines. I talk to social workers, policymakers and nonprofits. I try to absorb as much information as I can and understand as best I can what other experts find useful.

And I let this very bottom-up process determine what types of questions I should tackle. So sometimes that ends up being like, there’s some really interesting data set and people are like, “Here’s what we’ve done with it, but maybe you can do more.” Or it ends up being a modeling question, where there’s some phenomenon that the algorithmic side of my work allows us to capture and model, and then I ask questions around some sort of intervention.

Does your work address any issues tied to the COVID-19 pandemic?

My income-shocks work is extremely timely. If you’re losing a job, or a lot of people are getting sick, those are shocks. Medical expenses are a shock. There’s been this massive global disruption that we all have to deal with. But certain people have to deal with more of it and different types of it than others.

How did you start to dig into this as a research topic?

We were first interested in how to best model welfare when we know individuals are experiencing income shocks. We wanted to see whether we could provide a model of welfare that captures people’s income and wealth, as well as the frequency with which they may experience income shocks and the severity of those shocks.

Once we created a model, we were then able to ask questions around how to provide assistance, such as income subsidies.

And what did you find?

We find, for example, that if the assistance is a wealth subsidy, which gives people a one-time, upfront payment, rather than an income subsidy, which is a month-to-month commitment, then the set of individuals you should target can be completely different.

These types of qualitative insights have been useful in discussions with individuals working in policy and nonprofit organizations. Often in discussions around poverty-alleviation programs, we hear statements like, "This program would like to assist the greatest number of people," but we ignore that there are a lot of decisions that have to be made to translate such a statement into a concrete allocation scheme.
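The research model itself isn't spelled out here, so the following is only a toy simulation built on assumptions of our own. Each hypothetical household has starting wealth, a per-period net income (income minus regular expenses) and shocks of given sizes in given periods; welfare is measured crudely as the number of periods, within a fixed horizon, before wealth first drops below zero. Comparing a one-time wealth subsidy with an income subsidy of the same total cost, spread evenly over the horizon, shows how the two forms of assistance can favor different households.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Household:
    """Hypothetical household; every field is an illustrative assumption."""
    wealth: float      # starting savings
    net_income: float  # income minus regular expenses, per period
    shocks: Dict[int, float] = field(default_factory=dict)  # period -> shock size


def periods_survived(h: Household, horizon: int, upfront: float = 0.0, per_period: float = 0.0) -> int:
    """Toy welfare measure: periods completed before wealth first goes negative."""
    w = h.wealth + upfront
    for t in range(1, horizon + 1):
        w += h.net_income + per_period - h.shocks.get(t, 0.0)
        if w < 0:
            return t - 1
    return horizon


def best_target(households: Dict[str, Household], budget: float, horizon: int, kind: str) -> str:
    """Name of the household whose survival improves most under the given subsidy."""
    def gain(h: Household) -> int:
        base = periods_survived(h, horizon)
        if kind == "wealth":  # the whole budget as a one-time, upfront transfer
            return periods_survived(h, horizon, upfront=budget) - base
        # "income": the same total budget spread evenly over every period
        return periods_survived(h, horizon, per_period=budget / horizon) - base
    return max(households, key=lambda name: gain(households[name]))


households = {
    "early_shock": Household(wealth=0.0, net_income=0.0, shocks={1: 2.0}),
    "chronic_deficit": Household(wealth=0.0, net_income=-0.5, shocks={5: 10.0}),
}
```

With a budget of 3 over a horizon of 6 periods, the upfront transfer does the most good for the household hit by an early shock, while the monthly version helps the household with a steady shortfall more. The numbers are made up; the point is only that the best target can change with the form of assistance.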

You also published a paper exploring how different types of big, adverse life events relate to poverty. What did you find?

Predicting what factors lead someone into poverty is very hard. But we can still get qualitative insights about what things help you predict poverty better than others. We find that for male respondents, interactions with the criminal justice system, like being stopped by the police or being a victim of a crime, seem to be very predictive of experiencing poverty in the future. Whereas for female respondents, we find that financial shocks like income decreases, major expenses, benefit decreases and so on seem to hold a lot more predictive power.

You are also the co-founder of the Equity and Access in Algorithms, Mechanisms, and Optimization conference, which is being held for the first time later this year and which engages lots of the types of questions we’ve been talking about. What is its focus?

We are providing an international venue for researchers and practitioners to come together to discuss problems that impact marginalized communities, like housing instability and homelessness, equitable access to education and health care, and digital and data rights. It is inspiring to see the investment folks make to identify the right questions, provide holistic solutions, think critically about unintended consequences, and iterate many times over as needed.

You also have that ongoing work with Ethiopia’s government on its national education system. How are you trying to change the way this assignment process works?

I’m working with the Ethiopian Ministry of Education to understand and inform the matching process of seniors in high school to public universities. We’re still in the beginning stages of this.

Ethiopia has over 80 ethnic groups and an incredibly diverse population, so there are diversity considerations. Students differ in gender and ethnic group, and they come from different regions.

You might say, "We're still going to try to make sure that everyone gets one of their top three choices." But we want to make sure that you don't end up in a school where everyone is from the same region or everyone is the same gender.
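The Ministry's actual rules aren't described here, so this is a hedged sketch of one simple approach rather than the process being designed. Students are processed in order of exam score (a serial dictatorship), each takes their best remaining choice, and a hypothetical cap limits how many seats at any one university can go to students from a single region. Student names, regions, scores, capacities and the cap value are all assumptions.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Tuple

# (name, exam score, region, ranked university choices); all hypothetical.
Student = Tuple[str, float, str, List[str]]


def assign_with_region_caps(
    students: List[Student],
    capacity: Dict[str, int],
    region_cap: int,
) -> Dict[str, Optional[str]]:
    """Serial dictatorship by score, with a per-university cap on seats per region."""
    seats_left = dict(capacity)
    # counts[university][region] = seats already given to that region there
    counts: Dict[str, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    result: Dict[str, Optional[str]] = {}
    # Highest scores choose first; ties keep input order (sorted is stable).
    for name, _score, region, choices in sorted(students, key=lambda s: -s[1]):
        result[name] = None  # stays None if no listed choice is still open to them
        for uni in choices:
            if seats_left[uni] > 0 and counts[uni][region] < region_cap:
                result[name] = uni
                seats_left[uni] -= 1
                counts[uni][region] += 1
                break
    return result


students = [
    ("s1", 95.0, "north", ["U1", "U2"]),
    ("s2", 90.0, "north", ["U1", "U2"]),
    ("s3", 85.0, "south", ["U1", "U2"]),
    ("s4", 80.0, "east", ["U1", "U2"]),
]
capped = assign_with_region_caps(students, {"U1": 2, "U2": 2}, region_cap=1)
uncapped = assign_with_region_caps(students, {"U1": 2, "U2": 2}, region_cap=2)
```

With a cap of one student per region at each university, s2 moves from U1 to U2 so that U1 is not filled entirely from the north. A rule this simple does not guarantee that everyone still lands in a top-three choice; balancing both goals is the harder design problem.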

What are the costs of getting the matching process wrong?

I mean, in any of the social problems that I work on, the cost of getting something wrong is super high. With this matching case, once you match to something, that’s probably where you’re going to go because the outside options might not be good. And so I’m deciding whether you end up close to home versus really, really far away from home. I’m deciding whether you end up in a region or in a school that has studies, classes and research that align with your work or not. It really, really matters.