Statistics Postdoc Tames Decades-Old Geometry Problem

Olena Shmahalo/Quanta Magazine

Introduction

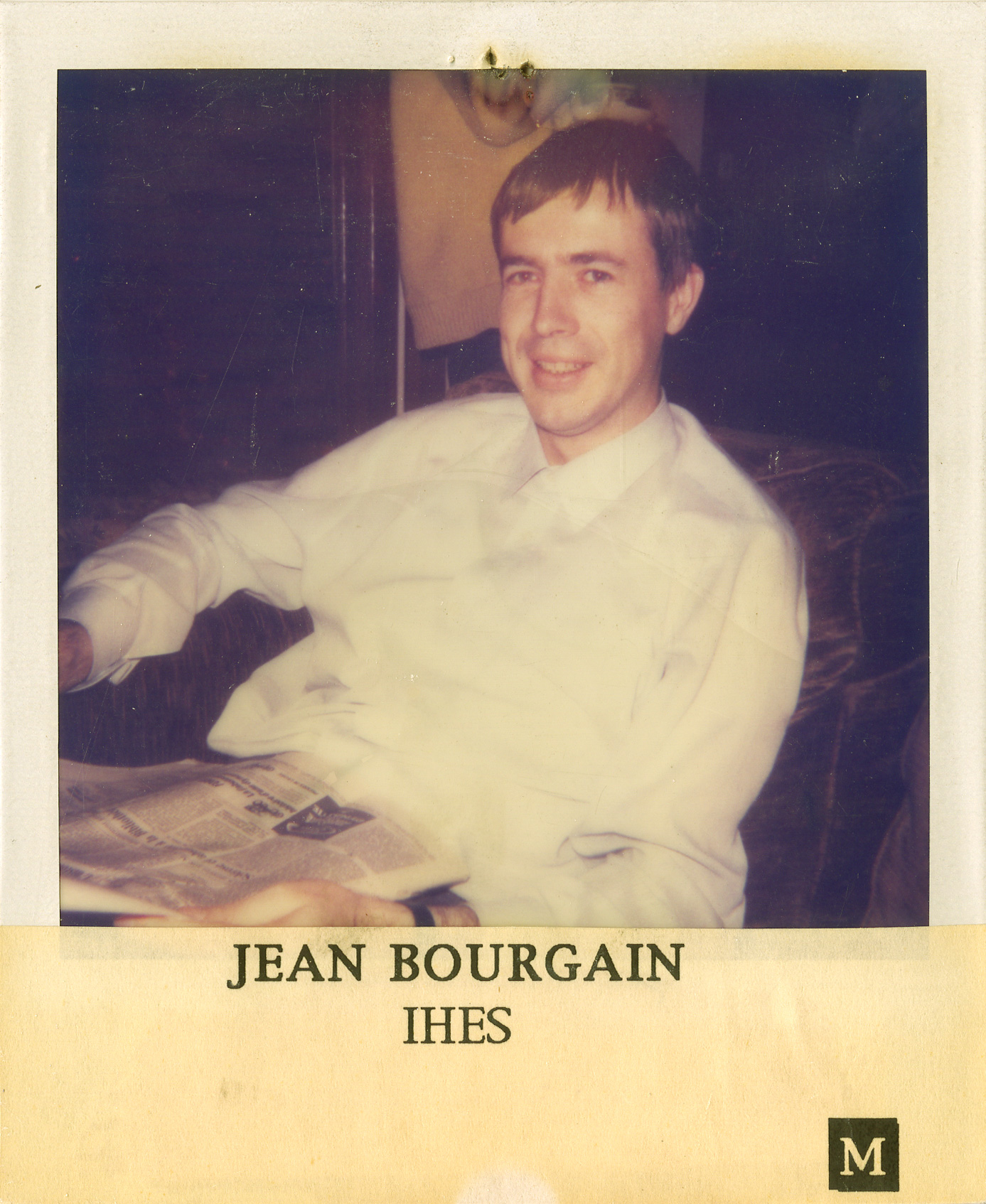

In the mid-1980s, the mathematician Jean Bourgain thought up a simple question about high-dimensional shapes. And then he remained stuck on it for the rest of his life.

Bourgain, who died in 2018, was one of the preeminent mathematicians of the modern era. A winner of the Fields Medal, mathematics’ highest honor, he was known as a problem-solver extraordinaire — the kind of person you might talk to about a problem you’d been working on for months, only to have him solve it on the spot. Yet Bourgain could not answer his own question about high-dimensional shapes.

“I was told once by Jean that he had spent more time on this problem and had dedicated more efforts to it than to any other problem he had ever worked on,” wrote Vitali Milman of Tel Aviv University earlier this year.

In the years since Bourgain formulated his problem, it has become what Milman and Bo’az Klartag of the Weizmann Institute of Science in Israel called the “opening gate” to understanding a wide range of questions about high-dimensional convex shapes — shapes that always contain the entire line segment connecting any two of their points. High-dimensional convex shapes are a central object of study not just for pure mathematicians but also for statisticians, machine learning researchers and other computer scientists working with high-dimensional data sets.

Bourgain’s problem boils down to the following simple question: Suppose a convex shape has volume 1 in your favorite choice of units. If you consider all the ways to slice through the shape using a flat plane one dimension lower, could these slices all have extremely low area, or must at least one be fairly substantial?

A Polaroid taken of Jean Bourgain in the 1980s by the Institute of Advanced Scientific Studies in France.

IHES

Bourgain guessed that some of these lower-dimensional slices must have substantial area. In particular, he conjectured that there is some universal constant, independent of the dimension, such that every shape contains at least one slice with area greater than this constant.

At first glance, Bourgain’s conjecture might seem obviously true. After all, if the shape were extremely skinny in every direction, how could it have enough substance to form one unit of volume?

“Come on — how hard can it be?” Ronen Eldan, a high-dimensional geometer at the Weizmann Institute remembers thinking when he first heard of the problem. “And then, the more you think about it, the more you understand how delicate it really is.”

The difficulty is that high-dimensional shapes often behave in ways that defy our human, low-dimensional intuition. For example, in dimensions 10 and up, it is possible to build a cube and a ball such that the cube has larger volume than the ball, but every slice through the center of the cube has smaller area than the corresponding slice through the center of the ball.

“The beauty of high-dimensional geometry is exactly that it doesn’t look anything like dimension two,” said Sébastien Bubeck of Microsoft Research in Redmond, Washington.

Bourgain’s slicing conjecture is a vote for high-dimensional tameness — a guess that high-dimensional shapes conform to our intuition in at least some ways.

Now, Bourgain’s guess has been vindicated: A paper posted online in November has proved, not quite Bourgain’s full conjecture, but a version so close that it puts a strict limit on high-dimensional weirdness, for all practical purposes.

Bourgain, said Klartag, “would have dreamt” of achieving a result this strong.

The new paper, by Yuansi Chen — a postdoctoral researcher at the Swiss Federal Institute of Technology Zurich who is about to join the statistical science faculty at Duke University — gets at the Bourgain slicing problem via an even more far-reaching question about convex geometry called the KLS conjecture. This 25-year-old conjecture, which asks about the best way to slice a shape into two equal portions, implies Bourgain’s conjecture. What’s more, the KLS conjecture lies at the heart of many questions in statistics and computer science, such as how long it will take for heat to diffuse through a convex shape, or how many steps a random walker must take from a starting point before reaching a truly random location.

Yuansi Chen atop Chäserrugg, a mountain in Switzerland, in December 2020.

Courtesy of Yuansi Chen

Random walks are pretty much the only effective methods available for sampling random points, Eldan said. And for a wide range of different computer science problems, he said, “the most important subroutine in the algorithm is, you want to sample a random point.”

Chen’s new result gives instant improvements to the known running times of algorithms for tasks such as computing the volume of a convex shape or sampling from an assortment of machine learning models. Chen’s work doesn’t quite prove the full KLS conjecture. But when it comes to computer science applications, Bubeck said, “you don’t need the full conjecture to get the full impact.”

Chen is not a convex geometer by training — instead, he is a statistician who became interested in the KLS conjecture because he wanted to get a handle on random sampling. “No one knows Yuansi Chen in our community,” Eldan said. “It’s pretty cool that you have this guy coming out of nowhere, solving one of [our] most important problems.”

Hidden Dumbbells

Like the Bourgain slicing problem, the KLS conjecture (named after its creators, Ravi Kannan, László Lovász and Miklós Simonovits) asks a simple question: Suppose you want to cut a convex shape — maybe an apple without dimples — into two equal-size portions, and you’re planning to put one aside for later. The exposed surface is going to turn brown and unappetizing, so you want to make it as small as possible. Among all the possible cuts, which will minimize the exposed surface?

It’s not too hard to answer this question, at least approximately, if you are limited to straight cuts. But if you’re allowed to make curved cuts, all bets are off. In dimension two, mathematicians know that the best cut will always be a straight line or an arc of a circle. But in dimension three, the best cut is understood only for a few simple shapes, and for higher-dimensional shapes, mathematicians usually don’t even have a hope of finding the optimal cut.

Samuel Velasco/Quanta Magazine

Since the optimal curved cut is so hard to pin down, Kannan, Lovász and Simonovits wondered how much worse things would be if you only allowed straight cuts. In 1995, they conjectured that this restriction will never make things too much worse: There is some universal constant such that the surface area of the best flat cut is at most that constant times the surface area of the best overall cut.

“It was a brilliant insight,” said Santosh Vempala of the Georgia Institute of Technology, even though Kannan, Lovász and Simonovits couldn’t prove their conjecture. Instead of establishing a universal constant, the best they could do was establish a factor that works out to roughly the square root of the dimension the shape lives in. So for a 100-dimensional convex shape, for example, they knew that the best straight cut will expose at most about 10 times as much surface area as the very best cut.

Exposing 10 times as much surface area might not sound so great. But since many attributes of high-dimensional shapes grow exponentially as the dimension grows, a square root’s worth of growth is modest by comparison. “It’s already an indication that there is a nice phenomenon in high dimensions,” Bubeck said. “Things are not as crazy as they could be.”

But researchers were eager to improve on this result, and not just from academic interest: They knew that the KLS factor encapsulates a world of information about how random processes behave within a convex shape. That’s because the smaller the best cut is, the harder it is for a random process to spread around the shape quickly.

Think of a dumbbell, with two massive balls connected by a narrow bridge. The fact that you can divide it into two equal pieces with just a small cut precisely captures the notion that the bridge is a bottleneck. A heat source or a random walker in one of the two balls will usually take a long time to reach the other ball, since it has to find its way through the bottleneck.

Samuel Velasco/Quanta Magazine

Of course, a dumbbell is not convex. A convex shape cannot have a disproportionately small flat cut like the one in the dumbbell, but perhaps it could have a disproportionately small curved cut. The KLS conjecture essentially asks if a high-dimensional convex shape can contain a hidden, twisty sort of dumbbell that slows down random mixing.

Kannan, Lovász and Simonovits’ square root bound put a limit on how extreme these hidden dumbbells could be. And in 2012, Eldan lowered their bound to the cube root of the dimension by introducing a technique called stochastic localization that, roughly speaking, envisions tilting the convex shape and sliding its points around in one direction after another until they have piled up in a particular region. It’s easy to prove the KLS conjecture for a highly concentrated mass, which is about as different from a dumbbell as it gets. By showing that the tilting process hadn’t changed things too much, Eldan was able to calculate a KLS bound for the original shape. “It’s a very, very beautiful process,” Bubeck said.

A few years later, Vempala and Yin-Tat Lee of the University of Washington refined Eldan’s stochastic localization to lower the KLS factor even further, to the fourth root of the dimension. And for a brief, glorious moment, they thought they had done something much stronger. If the dimension is called d, then the square root is d1/2, the cube root is d1/3, and the fourth root is d1/4. By introducing a new technique called bootstrapping, Lee and Vempala thought that they could lower the KLS bound all the way down to d raised to a power of 0 plus a little fudge factor. Since d0 always equals 1, Lee and Vempala’s bound was more or less a constant.

Yin-Tat Lee, top, and Santosh Vempala made a significant improvement to the KLS bound in 2016.

Yin-Tat Lee, left, and Santosh Vempala made a significant improvement to the KLS bound in 2016.

Courtesy of University of Washington; Courtesy of the College of Computing at Georgia Tech

“This is amazing,” Bubeck remembers saying when Lee told him of the result. “In my feeling it was a big deal, worthy of the highest praise,” he said.

Lee and Vempala posted their paper online. But within a few days, Klartag found a gap that undermined their proof of the d0 bound. Lee and Vempala quickly posted a revised draft that only claimed a d1/4 bound. And for several years, researchers thought that perhaps this was the end of the KLS story.

That’s because Eldan and Klartag had previously shown that any KLS bound instantly translates into a Bourgain slicing bound — for instance, Lee and Vempala’s d1/4 bound means that in the Bourgain slicing problem, there is always a slice whose surface area is at least about 1/d1/4. But mathematicians already knew several ways to prove a 1/d1/4 bound for Bourgain slicing. So maybe, they thought, Lee and Vempala had reached the natural endpoint of the KLS question.

“I was starting to feel, ‘Yeah, maybe this is the truth,’” Eldan said.

“There was the feeling that strong people worked on that method, and whatever could be exploited was exploited,” Klartag said. But that was before Yuansi Chen came along.

Bounding Slices

When Lee and Vempala posted their revised paper, they preserved in it their ideas about how a proof of the roughly d0 bound might work. Only one piece of their proof, they explained, had fallen through.

Their paper caught the eye of Chen, then a statistics graduate student at the University of California, Berkeley, who was studying the mixing rates of random sampling methods. Random sampling is a key ingredient in many types of statistical inference, such as Bayesian statistics, a framework for updating beliefs based on new evidence. “You deal with this [random] sampling every day if you want to do Bayesian statistics,” Chen said.

Lee and Vempala’s paper introduced Chen to the idea of stochastic localization. “I thought it was one of the most beautiful proof techniques I had seen for a while,” Chen said.

Chen dived into the literature and spent several weeks trying to fill the gap in Lee and Vempala’s proof, but to no avail. Periodically over the next few years, some idea for how to modify stochastic localization would pop into his head and he would ponder it for a few hours before giving up. Then finally, one of his ideas bore fruit: There was a way, he realized, not to prove the missing statement in Lee and Vempala’s proof, but to get around the need for such a strong statement at all.

Through what Chen called “some little tricks” but Vempala called an “elegant and important new insight,” Chen figured out how to make Lee and Vempala’s bootstrapping method work. This method takes a recursive approach to lowering the KLS bound, by showing that if you can make the bound fairly small, then there’s a way to make it even smaller. Applied repeatedly, this bootstrapping approach achieves the approximately constant bound for the KLS conjecture, and also for the Bourgain slicing problem.

When Chen posted his work online, “I immediately basically stopped everything I was doing and checked this paper,” Klartag said. Researchers were wary, given the previous incorrect proof and the fact that most of them had never heard of Chen. But his contribution turned out to be easy to verify. “This paper is 100% correct,” Klartag said. “There’s no question about it.”

Chen’s result means that the best 50-50 cut of a convex shape isn’t that much smaller than the best flat cut — in other words, high-dimensional convex shapes don’t contain hidden dumbbells with very narrow bridges. From a pure math perspective, “it is a big deal, because it was such a gaping hole in our understanding,” Bubeck said.

And from a practical standpoint, it means that a random walk is guaranteed to mix through a convex shape much faster than researchers could previously prove. Among other things, this understanding will help computer scientists to prioritize among different random sampling techniques — to figure out when the most basic random walk is best, and when a more sophisticated but computationally expensive algorithm will perform better.

In the end, considering how many people tried and failed to prove the d0 bound, the proof was surprisingly simple, Vempala said. Bourgain, he speculated, would probably have thought, “How did I miss that?”

Bourgain would have been thrilled by this development, mathematicians agree. Just a few months before his death in 2018, he contacted Milman, inquiring if there had been any progress. “He wanted to know the answer before he would leave,” Milman wrote.

Correction: March 2, 2021

This article has been revised to clarify that Yuansi Chen’s main appointment at Duke University is with the Department of Statistical Science.