What's up in natural language processing

Latest Articles

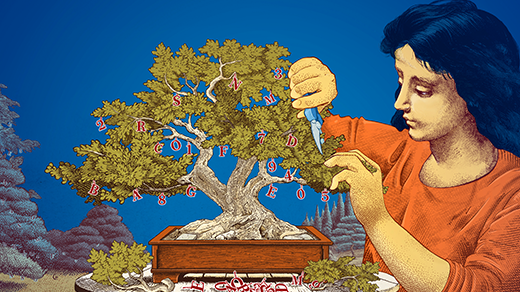

To Make Language Models Work Better, Researchers Sidestep Language

We insist that large language models repeatedly translate their mathematical processes into words. There may be a better way.

Where Does Meaning Live in a Sentence? Math Might Tell Us.

The mathematician Tai-Danae Bradley is using category theory to try to understand both human and AI-generated language.

Why Do Researchers Care About Small Language Models?

Larger models can pull off greater feats, but the accessibility and efficiency of smaller models make them attractive tools.

The Physicist Working to Build Science-Literate AI

By training machine learning models with enough examples of basic science, Miles Cranmer hopes to push the pace of scientific discovery forward.

The Poetry Fan Who Taught an LLM to Read and Write DNA

By treating DNA as a language, Brian Hie’s “ChatGPT for genomes” could pick up patterns that humans can’t see, accelerating biological design.

Chatbot Software Begins to Face Fundamental Limitations

Recent results show that large language models struggle with compositional tasks, suggesting a hard limit to their abilities.

Can AI Models Show Us How People Learn? Impossible Languages Point a Way.

Certain grammatical rules never appear in any known language. By constructing artificial languages that have these rules, linguists can use neural networks to explore how people learn.

Debate May Help AI Models Converge on Truth

How do we know if a large language model is lying? Letting AI systems argue with each other may help expose the truth.

The Computer Scientist Who Builds Big Pictures From Small Details

To better understand machine learning algorithms, Lenka Zdeborová treats them like physical materials.