An Applied Mathematician With an Unexpected Toolbox

“When it’s advanced, any topic becomes mathematical,” said Lek-Heng Lim, an applied mathematician at the University of Chicago.

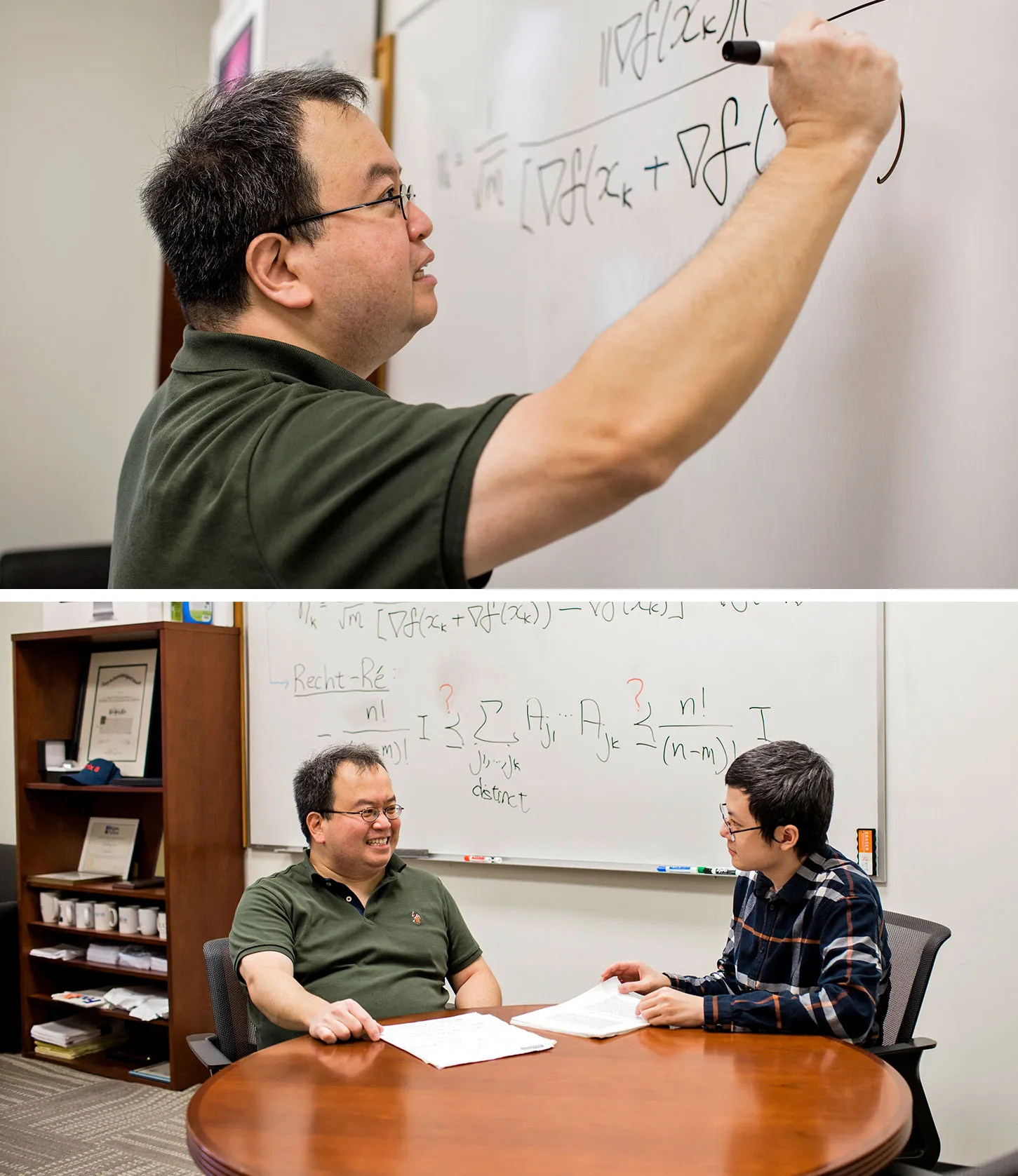

Kristen Norman for Quanta Magazine

Introduction

Lek-Heng Lim longs for a renaissance that would reunite pure and applied mathematics. He points out that the distinction, which in modern math appears to be elemental, is in fact recent. “This break between pure and applied math happened in the last 80 years,” Lim said. “I would argue for a return to the old days.”

Lim’s research moves us closer to this reunion. He studies machine learning and other applied subjects using tools that have been developed in pure math fields like algebra, geometry and topology.

Lim is now a professor at the University of Chicago, but growing up in Singapore he “wasn’t so interested in math,” he said. Then in high school, he got to talking with a physics teacher, who was studying for a master’s degree, about the teacher’s research.

The conversation piqued his interest in gauge theory. The subject is “certainly physics,” he said, “but it’s very mathematical, with physical quantities modeled as mathematical objects.” The conversation put him on a journey to becoming a mathematician.

“Most of it went over my head, but some of the terms stayed with me,” Lim said. “When I encountered them later in my education, it felt like meeting an old friend.”

In 2022, Lim was awarded a Guggenheim fellowship. “Lek-Heng is an exceptional mathematician,” wrote Sayan Mukherjee, a professor of statistics at Duke University, in recommending him for the Guggenheim. “He is the strongest applied mathematician of his generation working at the interface of numerical methods, algebra and algorithms for data science.”

The interview has been condensed and edited for clarity.

“I’m content just to fill potholes in my knowledge,” Lim said.

Kristen Norman for Quanta Magazine

Is it fair to describe the relationship between pure and applied math as ever-evolving?

Yes. It’s a bit unfortunate, the fact that we are even discussing the relationship between pure and applied math. That means they are separate entities.

Look at the olden days. Look at Gauss, Fermat or Euler. Even people as late as von Neumann or Hilbert. They don’t seem to make that distinction. To them, everything is pure math, everything is applied math.

Gauss’s work is not just quadratic reciprocity and Gaussian curvature. It’s also things like least squares problems and trying to find trajectories of planets. Essentially, he invented linear regression. That’s very important in statistics.

Look at Hilbert’s famous list of 23 problems. Some of them have deep roots in applied math and dynamical systems. Some of them have roots in pure math and logic.

Von Neumann was interested in quantum mechanics, mathematical logic, numerical analysis, game theory and operator algebra.

Of course both areas are now so broad that it’s impossible for anyone to know everything. There are certain things that a pure mathematician, I think, ought to know in applied math. And applied mathematicians have a lot to gain, frankly speaking, by increasing their awareness of modern tools in geometry, topology and algebra.

In a 2020 paper, you connected deep neural networks with topology. How?

It used to be that a computer found it very hard to do something that a human can do easily: recognizing, say, that a coffee mug isn’t a cat. Even a young child can do it relatively easily. But a computer didn’t have that kind of capacity.

That started to change in about 2012. Deep neural networks were key, meaning neural networks with many layers. What happened, I guess, was that the layers mean something. That’s my take.

I studied this with my Ph.D. student Greg Naitzat, who is now at Facebook. The idea was: Let’s take, for instance, the set of all cat images and the set of all images that aren’t cats. We’re going to view them as [topological shapes, or manifolds]. One is the manifold of cats and the other is the manifold of non-cats. These are going to be intertwined in some complicated way. Why? Because there are certain things that look very much like cats that are not a cat. Mountain lions sometimes get mistaken for cats. Replicas. The big thing is, two manifolds are intertwined in some very complex manner.

How do these elucidate neural networks?

We carried out experiments to show that these manifolds get simplified. Originally, it was two complex shapes that were intricately intertwined, but it gets simplified. How do I measure this simplification in the shapes? Well, there’s a tool that’s a backbone of computational topology. This allows us to measure the shape of these objects.

What is this tool?

It’s persistent homology.

First, homology is essentially a way to classify different holes of different types of geometric objects up to deformation. Holes that look very different in geometry look identical from the perspective of homology.

What if I only have points sampled from a manifold rather than knowledge of the entire manifold? Like, for instance, the image of a cat: What’s the difference between the image of a cat you see on a computer screen and the actual cat itself? An image has pixels, so if you zoom in far enough, you’re just going to see discrete dots. In that case, how do I talk about homology?

Kristen Norman for Quanta Magazine

Lim with his student Zehua Lai. Together they proved that a long-standing machine learning conjecture is false.

Kristen Norman for Quanta Magazine

Persistent homology is a gadget invented to address this problem: How do I discuss homology when you have points sampled from a manifold instead of the manifold itself?

At every point, take a little ball around the point. I see where two balls overlap, where three balls overlap, and so on. From this data, it can give you an estimate of the homology of the underlying manifold. This allows us to talk about the homology of a manifold when we only have a discrete-points sample from it.

I use this to measure the shape of the manifold as it passes through the layers of a neural network. Ultimately, I can show that it reduces to the simplest possible form.

Do these results help us understand what’s going on in neural networks?

There’s this term called explainable AI. Essentially, your neural network or machine learning model is going to give you an answer, and you want to know how it arrived at that answer.

You can view a neural network as a device for simplifying the topology of the manifolds under study. Now you can explain how it arrives at that.

What made you realize that tools from pure math would be useful in your applied math research?

I’m curious about things that people don’t normally consider as legitimate topics for applied math. As a result of that, I can see the relevance of certain tools that would not be immediate to somebody who’s traditionally trained in applied math.

What’s another example of your use of such tools?

My Ph.D. student Zehua Lai and I showed a long-standing conjecture in machine learning is false.

Modern machine learning problems often involve fitting a huge number of parameters with a massive amount of data. GPT-4, the next iteration of the engine underlying ChatGPT, is rumored to have 1 trillion to 100 trillion parameters. No computer in existence could handle these parameters all at once. So in each step, algorithms pick a small random subset of parameters (whatever the computer can handle) and just work with those instead.

“I’m curious about things that people don’t normally consider as legitimate topics for applied math,” Lim said.

Kristen Norman for Quanta Magazine

Picking a small random subset is called sampling. Now the question is: In subsequent steps of the algorithm, should it pick parameters that we have already picked before in earlier steps, or should we exclude those? In other words, should it sample parameters with replacement or without replacement? This is a question that we always need to consider when our algorithm involves randomization, so it’s a very fundamental and important question.

About 10 years ago Ben Recht and Chris Ré showed that it’s better to sample without replacement than with replacement, provided that a certain analogue of a particular inequality holds. Over the years, people proved various cases of this inequality. We showed that, in general, the inequality does not hold.

How did you do that?

Ultimately, the way to answer this question was to use a tool from algebraic geometry called the noncommutative Positivstellensatz. It is a mouthful. It’s a German word that means, essentially, the location of the positive points of a polynomial.

The noncommutative Positivstellensatz is a more complicated version of something called a Positivstellensatz. It’s for polynomials where the variables do not commute — in which a term like xyx2 cannot be simplified to x3y. Such noncommutative polynomials are very useful when we want to plug in matrices for x and y.

People who are interested in randomized algorithms probably will not know about the Positivstellensatz, because it’s something in algebraic geometry. Even in algebraic geometry, it’s not something that’s standard knowledge.

What do you find most satisfying about doing math research?

I’m content to just satisfy my curiosity. There are people who want to solve big conjectures. They like to build skyscrapers, so to speak.

I’m content to just fill in potholes in my knowledge. When it’s advanced, any topic becomes mathematical. Whether it’s economics, social science, psychology, anything I can think of, ultimately, will be mathematical.

As an applied mathematician, you get the freedom to seek out other fields you’re curious about. If you’re somebody who’s curious about a lot of stuff, this can be very satisfying.