Playing Hide-and-Seek, Machines Invent New Tools

Introduction

Programmers at OpenAI, an artificial intelligence research company, recently taught a gaggle of intelligent artificial agents — bots — to play hide-and-seek. Not because they cared who won: The goal was to observe how competition between hiders and seekers would drive the bots to find and use digital tools. The idea is familiar to anyone who’s ever played the game in real life; it’s a kind of scaled-down arms race. When your opponent adopts a strategy that works, you have to abandon what you were doing before and find a new, better plan. It’s the rule that governs games from chess to StarCraft II; it’s also an adaptation that seems likely to confer an evolutionary advantage.

So it went with hide-and-seek. Even though the AI agents hadn’t received explicit instructions about how to play, they soon learned to run away and chase. After hundreds of millions of games, they learned to manipulate their environment to give themselves an advantage. The hiders, for example, learned to build miniature forts and barricade themselves inside; the seekers, in response, learned how to use ramps to scale the walls and find the hiders.

These actions showed how AI agents could learn to use things around them as tools, according to the OpenAI team. That’s important not because AI needs to be better at hiding and seeking, but because it suggests a way to build AI that can solve open-ended, real-world problems.

“This was an impressive use of a tool, and tool usage is incredible for AI systems,” said Danny Lange, a computer scientist and vice president of AI at the video game company Unity Technologies who wasn’t involved with the hide-and-seek project. “These systems figured out so quickly how to use tools. Imagine when they can use many tools, or create tools. Would they invent a ladder?”

To extrapolate even further: Could they invent something that’s useful in the real world? Recent studies have probed ways to teach AI agents to use tools, but in most of them, tool use itself is the goal. The hide-and-seek experiment was different: Rewards were associated with hiding and finding, and tool use just happened — and evolved — along the way.

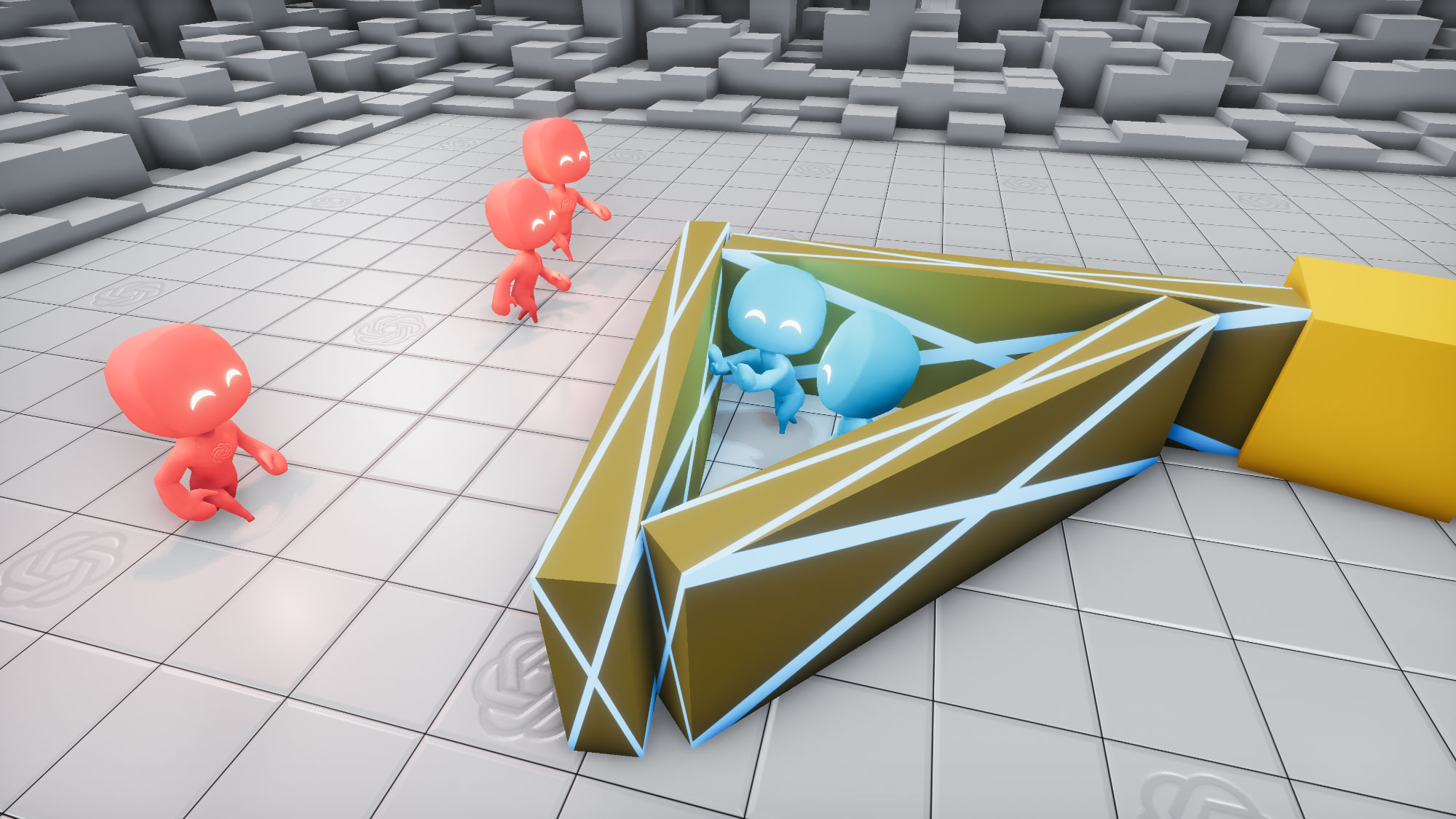

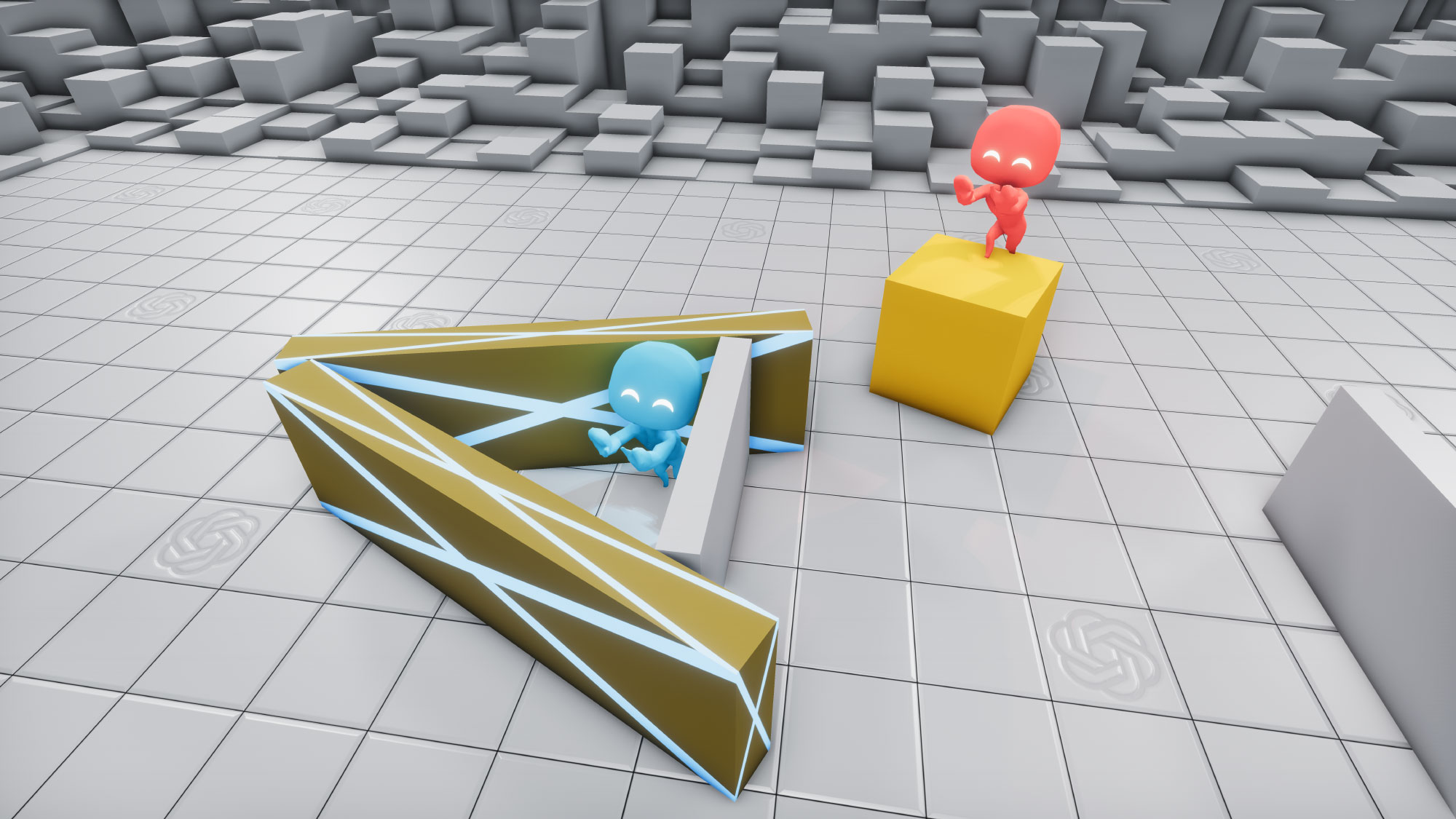

Because the game was open-ended, the AI agents even began using tools in ways the programmers hadn’t anticipated. They’d predicted that the agents would hide or chase, and that they’d create forts. But after enough games, the seekers learned, for example, that they could move boxes even after climbing on top of them. This allowed them to skate around the arena in a move the OpenAI team called “box surfing.” The researchers never saw it coming, even though the algorithms didn’t explicitly prohibit climbing on boxes. The tactic conferred a double advantage, combining movement with the ability to peer nimbly over walls, and it showed a more innovative use of tools than the human programmers had imagined.

While hiders could successfully evade seekers behind walls, the seekers surprised their programmers by learning how to “surf” boxes and break the hiders’ defenses.

OpenAI

“We were definitely surprised by things like that, like box surfing,” said Bowen Baker, one of three OpenAI researchers who designed and guided the project. “We were not expecting that to happen, but it was exciting when it did.”

Fort building and box surfing may not solve any pressing challenges outside hide-and-seek, but the bots’ ability to creatively use available objects as tools suggests that they may be able to solve complex problems in a similar manner. In addition, the emergence of advantageous traits like tool use seems to echo a more familiar course of adaptation: The evolution of human intelligence.

“We aren’t doing evolution in this environment,” Baker said, “but we do kind of see some analogous patterns happening.”

First Moves

Games have long been a useful test bed for artificial intelligence. That’s partly because they offer a clear way to assess whether an AI system has achieved a goal — did it win, or not? But games are also useful because competition drives players to find ever-better strategies to win. That should work for AI systems, too: In a competitive environment, algorithms learn to avoid their own mistakes and those of their opponents to optimize strategy.

OpenAI’s agents played millions of hide-and-seek games in virtual arenas.

OpenAI

The relationship between games and AI runs deep. In the late 1940s and early 1950s, computer scientists including Claude Shannon and Alan Turing first described chess-playing algorithms. Four decades later, an IBM researcher named Gerald Tesauro unveiled a backgammon AI program that learned the game through self-play, which means it improved by competing against older versions of itself.

Self-play is a popular way to test “reinforcement learning” algorithms. Instead of scanning through all possible moves — as some chess programs do — an algorithm using reinforcement learning prioritizes decisions that give it an advantage over its opponent. Chess programs that start with random moves, for example, soon discover how to arrange pawns or use other pieces to protect the king. Self-play can also produce wildly inventive strategies that no human player would ever attempt. To wit: In shogi, a Japanese game similar to chess, human players typically shy away from moving their king to the middle of the board. An AI system, however, recently used exactly this maneuver to trounce human competitors. (For some games, like rock-paper-scissors, no amount of self-play will ever transcend dumb luck.)

Self-play worked for Tesauro’s backgammon-playing AI, which after more than 1 million bouts was making moves that rivaled those of the best human players. And it worked for AlphaGo, an AI program that in 2017 defeated Ke Jie, the world’s top-ranked player in Go, an ancient Chinese board game. Players take turns placing black or white stones on a square grid; the goal is to surround more territory than your opponent.

Analyses of AlphaGo’s moves showed that during self-play, the AI followed a process of learning — and then abandoning — increasingly sophisticated moves. At first, like a human beginner, it attempted to quickly capture as many of its opponents’ stones as possible. But as training continued, the program improved by discovering successful new maneuvers. It learned to lay the groundwork early for long-term strategies like “life and death,” which involves positioning stones in ways that prevent their capture. “It sort of mirrored the order in which humans learn these,” said the computational neuroscientist Joel Leibo of DeepMind, the London-based AI research company behind AlphaGo.

Bolstered by these successes, programmers began tackling video games, which often involve multiple players and run in continuous time, rather than move by move. As of October, a DeepMind AI program called AlphaStar ranked above 99.8% of human players in the complicated video game StarCraft II. And in April 2019, an AI team called OpenAI Five trounced a professional human team in the online battle game Dota 2. Conquests like these have boosted the complexity of AI’s self-taught strategies.

However, these kinds of games are limited to restricted, virtual environments, said Igor Mordatch, who worked on the hide-and-seek project while at OpenAI but now works for Google Brain, the tech giant’s AI research team. “It’s hard to imagine a Dota or Go agent kind of jumping out of the game and starting to solve real-world tasks,” he said.

Of course, not everyone is convinced that OpenAI’s approach could jump out, either. Some experts in the field see the new demonstration as little more than an extension of what’s already emerged in other arenas, like the new strategies AI teams have invented to defeat human experts in recent esports competitions. Doing the same thing in hide-and-seek won’t necessarily push the field forward, they argue. “I am rather skeptical about its usefulness,” said Wojciech Jaśkowski, a computer scientist who develops intelligent automation systems at Nnaisense, a Swiss AI firm. “It’s nice to know that this is possible, but does it surprise us after Dota and StarCraft? I see no groundbreaking methods here.”

But Mordatch maintains that the hide-and-seek demonstration is different. It gave its AI players room to grow — instead of just learning to win the game, they taught themselves to make their own tools to do so.

Millions of Lessons

The rules of the game were simple. Each AI team had between one and three agents, and games lasted 240 moves. The setting was a (virtual) square arena with boxes, ramps and walls that agents could push around and that hiders could lock in place. The arena was based in a 3D physics simulator that prevented real-world impossibilities like agents walking through walls or two agents occupying the same space.

Seekers were frozen in place for the first 96 moves, giving hiders a chance to, well, hide. Each agent was programmed to maximize its point totals, and points were awarded to all the members of a team at once. Hiders gained a point if they were all concealed, and they lost a point if one of them was seen by a seeker. Seekers gained a point if they saw at least one hider, and they lost a point if they couldn’t find any hiders by the end of the round. The point system helped both sides learn better strategies, Baker said. Instead of getting points for specific tasks, like moving a box or climbing a ramp, the teams were rewarded for how well they used such tasks to accomplish their goals — to find, or avoid being found.

At first, gameplay was crude. The agents scurried around — sometimes forward, sometimes backward — like confused toddlers. (To make the AI agents easy to observe, and to match the playful spirit of the game, the programmers made their creations look like little jelly monsters with oversize heads, wide smiles and bright eyes. The bots were adorable bumblers.)

The experiment went through six phases. First, motivated only by the incentive to gain points, the agents learned to chase or run away. (This was a sort of pre-tool phase.) Then, after about 25 million games, the hiders learned to make forts out of the boxes and walls. That advantage didn’t last, however. After another 75 million games, seekers learned to push a ramp to the edge of a fort, climb up and jump in. Hiders had to adopt a new strategy. In the fourth phase, 10 million games later, hiders retaliated by shoving ramps to the outer edges of the playing field — where they wouldn’t be useful — and locking them in place.

As impressive as the agents’ ingenuity was, the development of these first four strategies didn’t surprise the OpenAI team. After those 10 million games, the researchers suspected that the program had run its course. But the AI kept on changing — and learning.

Evolving Beyond Hide-and-Seek

After almost 390 million games came the fifth phase and the introduction of box surfing. During these rounds seekers learned they could still use the locked ramps by moving a box close to one, climbing the ramp and jumping onto the box. (The boxes were too high to scale without a ramp.) Once on a box, a bot could move it around the arena while remaining on top of it. This allowed it to effectively ride around, or surf, on the box, searching for hiders. This gave seekers the advantage of height and mobility. In the sixth and final phase of the game — which emerged after 458 million rounds — the hiders finally learned to lock the boxes beforehand, preventing the surfing.

The OpenAI researchers see these unexpected but advantageous behaviors as proof that their system can discover tasks beyond what was expected, and in a setting with real-world rules. “Now you see behavior … on a computer that replicates behavior you see in a real, living being,” said Lange. “So now your head starts spinning a bit.”

The team’s next step is to see if their findings scale up to more complicated tasks in the real world. Lange thinks this is a realistic goal. “There’s nothing here that prevents this from sort of going on a path where tool usage gets more and more complex,” he said. More complex problems in the virtual world could suggest useful applications in the real world.

One way to increase complexity — and see how far self-learning can go — is to increase the number of agents playing the game. “It will definitely be a challenge to deal with if you want tens, hundreds, thousands of agents,” said Baker. Each one will require its own independent algorithm, and the project will require much more computational power. But Baker isn’t worried: The simple rules of hide-and-seek make it an energy-efficient test of AI.

And he says an AI system that can complete increasingly complex tasks raises questions about intelligence itself. During their post-game analysis of hide-and-seek, the OpenAI team devised and ran intelligence tests to see how the AI agents gained and organized knowledge. But despite the sophisticated tool use, the results weren’t clear. “Their behaviors seemed humanlike, but it’s not actually clear that the way that knowledge is organized in the agent’s head is humanlike,” Mordatch said.

Some experts, like the computer scientist Marc Toussaint at the University of Stuttgart in Germany, caution that these kinds of AI projects still haven’t answered a pivotal open question. “Does such work aim to mimic evolution’s ability to train or evolve agents for one particular niche? Or does it aim to mimic human and higher animals’ ability for in situ problem solving, generalization [and] dealing with never-experienced situations and learning?”

Baker doesn’t claim that the hide-and-seek game is a reliable model of evolution, or that the agents are convincingly humanlike. “Personally, I believe they’re so far from anything we would consider intelligent or sentient,” he said.

Nevertheless, the way the AI agents used self-play and competition to develop tools does look a lot like evolution — of some variety — to some researchers in the field. Leibo notes that the history of life on Earth is rich with cases in which an innovation or change by one species prompted other species to adapt. Billions of years ago, for example, tiny algaelike creatures pumped the atmosphere full of oxygen, which allowed for the evolution of larger organisms that depend on the gas. He sees a similar pattern in human culture, which has evolved by introducing and adapting to new standards and practices, from agriculture to the 40-hour workweek to the prominence of social media.

“We’re wondering if something similar had happened — if the history of life itself is a self-play process that continually responds to its own previous innovations,” Leibo said. In March, he was part of a quartet of researchers at DeepMind who released a manifesto describing how cooperation and competition in multi-agent AI systems leads to innovation. “Innovations arise when perturbations push parts of the system away from stable equilibria into new regimes where previously well-adapted solutions no longer work,” they wrote. In other words: When push comes to shove, shove better.

They saw it happen when AlphaGo bested the best human players at Go, and Leibo says the hide-and-seek game offers another robust example. The bots’ unexpected use of tools emerged from the increasingly difficult tasks they created for each other.

Baker similarly sees parallels between hide-and-seek and natural adaptation. “When one side learns a skill, it’s like it mutates,” he said. “It’s a beneficial mutation, and they keep it. And that creates pressure for all the other organisms to adapt.”