Introduction

Mathematicians and computer scientists had an exciting year of breakthroughs in set theory, topology and artificial intelligence, in addition to preserving fading knowledge and revisiting old questions. They made new progress on fundamental questions in the field, celebrated connections spanning distant areas of mathematics, and saw the links between mathematics and other disciplines grow. But many results were only partial answers, and some promising avenues of exploration turned out to be dead ends, leaving work for future (and current) generations.

Topologists, who had already had a busy year, saw the release of a book this fall that finally presents, comprehensively, a major 40-year-old work that was in danger of being lost. A geometric tool created 11 years ago gained new life in a different mathematical context, bridging disparate areas of research. And new work in set theory brought mathematicians closer to understanding the nature of infinity and how many real numbers there really are. This was just one of many decades-old questions in math that received answers — of some sort — this year.

But math doesn’t exist in a vacuum. This summer, Quanta covered the growing need for a mathematical understanding of quantum field theory, one of the most successful concepts in physics. Similarly, computers are becoming increasingly indispensable tools for mathematicians, who use them not just to carry out calculations but to solve otherwise impossible problems and even verify complicated proofs. And as machines become better at solving problems, this year has also seen new progress in understanding just how they got so good at it.

Preserving Topology

It’s tempting to think that a mathematical proof, once discovered, would stick around forever. But a seminal topology result from 1981 was in danger of being lost to obscurity, as the few remaining mathematicians who understood it grew older and left the field. Michael Freedman’s proof of the four-dimensional Poincaré conjecture showed that certain shapes that are similar in some ways (or “homotopy equivalent”) to a four-dimensional sphere must also be similar to it in other ways, making them “homeomorphic.” (Topologists have their own ways of determining when two shapes are the same or similar.) Fortunately, a new book called The Disc Embedding Theorem lays out in nearly 500 pages the inescapable logic of Freedman’s surprising approach and firmly establishes the finding in the mathematical canon.

Another recent major result in topology involved the Smale conjecture, which asks whether the four-dimensional sphere’s basic symmetries are essentially all the symmetries it has. Tadayuki Watanabe proved that the answer is no (more kinds of symmetries exist), and in doing so he kicked off a search for them, with new results appearing as recently as September. Also, two mathematicians developed “Floer Morava K-theory,” a framework that combines symplectic geometry and topology; the work establishes a new set of tools for approaching problems in those fields and, almost in passing, proves a new version of a decades-old problem called the Arnold conjecture. Quanta also explored the origins of topology itself with a column in January and an explainer devoted to the related subject of homology.

Olena Shmahalo/Quanta Magazine

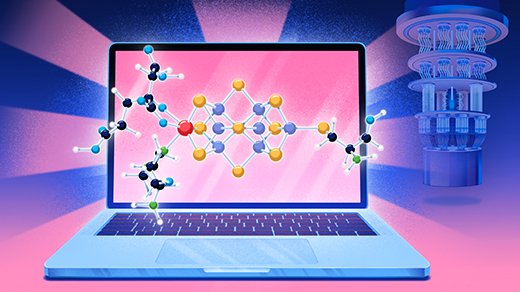

Opening AI’s Black Box

Whether they’re helping mathematicians do math or aiding in the analysis of scientific data, deep neural networks, a form of artificial intelligence built upon layers of artificial neurons, have become increasingly sophisticated and powerful. They also remain mysterious: Traditional machine learning theory says their huge numbers of parameters should result in overfitting and an inability to generalize, but clearly something else must be happening. It turns out that older and better-understood machine learning models, called kernel machines, are mathematically equivalent to idealized versions of these neural networks, suggesting new ways to understand — and take advantage of — the digital black boxes.

But there have been setbacks, too. Related kinds of AI known as convolutional neural networks have a very hard time telling whether two objects are the same or different, and there’s a good chance they always will. Likewise, recent work has shown that gradient descent, an algorithm useful for training neural networks and performing other computational tasks, is fundamentally hard from a computational standpoint, meaning some tasks may be forever beyond its reach. Quantum computing, despite its promise, also suffered a big setback in March when a major paper describing how to create error-resistant topological qubits was retracted, forcing once-hopeful scientists to realize that such a machine may be impossible. (Scott Aaronson highlighted, in a column and video, just why quantum computers are so difficult to work with, and even to talk about.)

The Nature of Infinity

How many real numbers exist? It’s been a provocative, and unsolved, question for more than a century, but this year saw major developments toward an answer. David Asperó and Ralf Schindler published a proof in May that combined two previously antagonistic axioms: A variation of one of them, known as Martin’s maximum, implies the other, named (*) (pronounced “star”). The result means both axioms are more likely to be true, which in turn suggests that the number of real numbers is bigger than initially thought, corresponding to the cardinal number $\aleph_{2}$ rather than the smaller (yet still infinite) $\aleph_{1}$. This would violate the continuum hypothesis, which states that no size of infinity exists between $\aleph_{0}$, corresponding to the set of all natural numbers, and the continuum of real numbers. But not everyone agrees, including Hugh Woodin, the original creator of (*), who has posted new work that suggests the continuum hypothesis is right after all.
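
The competing claims can be written compactly. What follows is only a sketch in standard set-theoretic notation, not a statement quoted from the Asperó and Schindler paper; the symbol $2^{\aleph_0}$ for the size of the continuum and the bars for cardinality are notation introduced here for illustration.

```latex
\[
\aleph_0 = |\mathbb{N}| \;<\; |\mathbb{R}| = 2^{\aleph_0},
\qquad
\text{continuum hypothesis: } 2^{\aleph_0} = \aleph_1,
\qquad
\text{Martin's maximum or } (*)\text{: } 2^{\aleph_0} = \aleph_2 .
\]
```

In this notation, the continuum hypothesis says the real numbers have the very next size of infinity after the natural numbers, while the two axioms place exactly one size of infinity, $\aleph_1$, in between.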

This wasn’t the only decades-old problem revisited with modern techniques. In 1900, David Hilbert posed 23 significant unsolved problems, and this year saw mathematicians post partial answers to the 12th problem, about the building blocks of certain number systems, and the 13th, about the solutions to seventh-degree polynomials. February also saw the announcement that the unit conjecture is false, meaning that multiplicative inverses actually exist in more complicated structures than mathematicians thought. And in January Alex Kontorovich explored perhaps the greatest unsolved problem in mathematics, the Riemann hypothesis, in an essay and video.

Expanding Mathematical Bridges

Often, a great mathematical advance not only answers a major question, but also opens a new avenue for attacking other problems. Laurent Fargues and Jean-Marc Fontaine created a new geometric object around 2010 that helped their own research. But when combined with Peter Scholze’s ideas surrounding perfectoid spaces, the Fargues-Fontaine curve took on expanded significance, further connecting number theory and geometry as part of the decades-old Langlands program. “It’s some kind of wormhole between two different worlds,” said Scholze.

Other ruminations on the Langlands program included an interview with Ana Caraiani, whose work has helped strengthen and improve similar connections between disparate areas of math, and an examination of the Galois groups of symmetries at the heart of the original Langlands conjectures.

Math and Computers Join Forces

Real-world systems are notoriously complicated, and partial differential equations (PDEs) help researchers describe and understand them. But PDEs are also notoriously difficult to solve. Two new kinds of neural networks — DeepONet and the Fourier neural operator — have emerged to make this work easier. Both have the power to approximate operators, which can transform functions into other functions, effectively allowing the nets to map an infinite-dimensional space onto another infinite-dimensional space. The new systems solve existing equations faster than conventional methods, and they may also help provide PDEs for systems that were previously too complicated to model.
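
To make the word "operator" concrete, here is a toy sketch of one: a routine that takes a function and returns another function, its running integral. It is not DeepONet or the Fourier neural operator, and nothing is learned here; the name `antiderivative_operator`, the grid size and the trapezoidal rule are all assumptions chosen for illustration. A neural operator would aim to approximate a map of this kind from example input and output functions.

```python
import math


def antiderivative_operator(f, n_points=1001, x_max=1.0):
    """Map a function f on [0, x_max] to an approximation of
    F(x) = integral of f from 0 to x (hypothetical helper for illustration)."""
    h = x_max / (n_points - 1)
    xs = [i * h for i in range(n_points)]

    # Cumulative trapezoidal rule: one running total per grid point.
    cumulative = [0.0]
    for i in range(1, n_points):
        cumulative.append(cumulative[-1] + 0.5 * h * (f(xs[i - 1]) + f(xs[i])))

    def F(x):
        # Look up the nearest grid point, clamped to the grid.
        i = min(n_points - 1, max(0, round(x / h)))
        return cumulative[i]

    return F  # the output is itself a function


# Input function: cos. Its exact antiderivative from 0 is sin.
F = antiderivative_operator(math.cos)
print(F(1.0), math.sin(1.0))
```

The input and the output both live in infinite-dimensional spaces of functions; the grid is only a finite stand-in that makes the map computable.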

In fact, computers have proved helpful to mathematicians in various ways this year. In January, Quanta reported on new algorithms for quantum computers that would allow them to process nonlinear systems, where interactions can affect themselves, by first approximating them as simpler, linear ones. Computers also continued to push mathematical research forward when a team of mathematicians used modern hardware and algorithms to prove that there are no more types of special tetrahedra than the ones discovered 26 years ago, and — more dramatically — when a digital proof assistant named Lean verified the correctness of an inscrutable modern proof.

Math Meets Physics, Again

Physics and mathematics have always overlapped, inspiring and advancing each other. The concept of quantum field theory, a catchall that physicists use to describe frameworks that involve quantum fields, has been enormously successful, but it rests on shaky mathematical ground. Bringing mathematical rigor to quantum field theory would help physicists work in and expand that framework, but it would also give mathematicians a new set of tools and structures to play with. In a four-part series, Quanta examined the main issues currently getting in mathematicians’ way, explored a smaller-scale success story in two dimensions, discussed the possibilities with QFT specialist Nathan Seiberg, and explained in a video the most prominent QFT of all: the Standard Model.