What's up in deep learning

Latest Articles

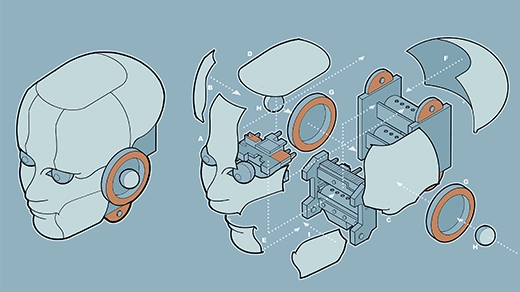

Why Do Humanoid Robots Still Struggle With the Small Stuff?

The last decade has seen vast improvements in humanoid robots, but graduating to widespread use might require going back to the fundamentals.

Novel Architecture Makes Neural Networks More Understandable

By tapping into a decades-old mathematical principle, researchers are hoping that Kolmogorov-Arnold networks will facilitate scientific discovery.

What Is Machine Learning?

Neural networks and other forms of machine learning ultimately learn by trial and error, one improvement at a time.

How AI Revolutionized Protein Science, but Didn’t End It

Three years ago, Google’s AlphaFold pulled off the biggest artificial intelligence breakthrough in science to date, accelerating molecular research and kindling deep questions about why we do science.

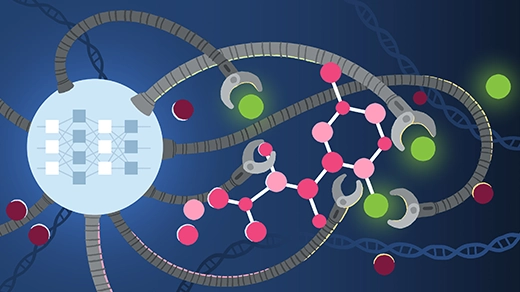

New AI Tools Predict How Life’s Building Blocks Assemble

Google DeepMind’s AlphaFold3 and other deep learning algorithms can now predict the shapes of interacting complexes of protein, DNA, RNA and other molecules, better capturing cells’ biological landscapes.

AI System Beats Chess Puzzles With ‘Artificial Brainstorming’

By bringing together disparate approaches, machines can reach a new level of creative problem-solving.

Mathematicians Solve Long-Standing Coloring Problem

A new result shows how much of the plane can be colored by points that are never exactly one unit apart.

Machines Learn Better if We Teach Them the Basics

A wave of research improves reinforcement learning algorithms by pre-training them as if they were human.

The Physics Principle That Inspired Modern AI Art

Diffusion models generate incredible images by learning to reverse the process that, among other things, causes ink to spread through water.