The AI Revolution in Math Has Arrived

Nash Weerasekera for Quanta Magazine

Introduction

The tipping point came in the summer of 2025. That July, several artificial intelligence models solved five out of six problems at the International Mathematical Olympiad, an annual challenge for some of the world’s best high school students. But while mathematicians were shocked — few had expected the programs to get that good that quickly — the impressive results didn’t necessarily mean that AI would make important strides in research math. After all, Olympiad problems are challenging puzzles with known answers, not open questions.

Nevertheless, the results made people pay attention. Mathematicians who had dismissed AI models as too error-prone to be useful started playing around with them. Those early adopters found, to their surprise, not only that the models were good at puzzles, but that they could help break genuinely new ground. Soon, mathematicians were using AI to discover and prove new results, accomplishing in a day what would have once taken them weeks or months. “2025 was the year when AI really started being useful for many different tasks,” said Terence Tao, a prominent mathematician at the University of California, Los Angeles.

While no single new result is a world-beating breakthrough, some of them are on par with discoveries published in professional mathematical journals. In some cases, algorithms formulate a conjecture, prove it, and verify the proof with minimal human intervention. In others, extensive chats with large language models such as ChatGPT, Claude, or Gemini lead to novel proof strategies.

“This guy’s got a shovel. This guy’s got a pickax. Together we can bore a tunnel,” Tao said. There’s “a lot of throwing things at the wall to see what sticks.”

Though Tao is perhaps the most prominent exponent of AI’s utility in mathematics, others agree.

Even by solving easy problems, said Daniel Litt of the University of Toronto, AI “is changing how mathematics is done.”

Soon, “it will look and feel altogether different from the way mathematics was traditionally done,” Tao said. Where before mathematicians studied one problem at a time, “with these tools you can solve thousands of problems at once and start doing statistical studies.” Though nobody I spoke with thinks AI will replace mathematicians, Tao added that “there are a lot of institutional changes, cultural changes, we will have to make.”

Terence Tao is excited by the opportunities that AI models afford mathematicians. Soon, math “will look and feel altogether different from the way mathematics was traditionally done,” he said.

Reed Hutchinson/UCLA

Those changes will be contested, in math as in other academic disciplines wrestling with AI’s impact. As AI models become a powerful new tool, they risk causing mathematicians to lose direct experience with mathematical understanding, said Akshay Venkatesh of the Institute for Advanced Study. Like Tao, Venkatesh is a recipient of the Fields Medal, math’s most prestigious prize. Both agree that AI’s impact will be significant, but Venkatesh is more cautious about it: “There are valuable things in our culture which we should try to keep,” he said.

Some mathematicians are now leaving academia to work at big tech firms, like OpenAI and Google, or to join math-focused AI startups such as Harmonic, Logical Intelligence, Axiom Math, and Math Inc. “One reason there is so much interest in AI for mathematics in the corporate world is that people are recognizing that the key to general intelligence is combining the insights you get from machine learning and the precision you get from mathematics,” said Jeremy Avigad, the director of the Institute for Computer-Aided Reasoning in Mathematics at Carnegie Mellon University.

By the start of 2026, shock at the power of AI had turned into something more like wonder. A February challenge called First Proof gave entrants a week to have their AI models solve 10 research-level questions in various areas of math. Mathematicians had chosen the questions so that they were unlikely to have appeared in the algorithms’ training data. With varying levels of autonomy, the models succeeded in solving over half the problems. If the Olympiad results represented the moment AI entered an ambitious college math program, the First Proof results were arguably the moment it finished graduate school. In a blog post analyzing the results, Litt wrote: “It’s very likely that this technology is bigger than the computer.”

Creative Evolution

Though the summer of 2025 marked an inflection point in the capabilities of AI, it didn’t come out of nowhere. Pushmeet Kohli, Google DeepMind’s vice president of science, said DeepMind has been trying to solve math problems with AI since 2018. François Charton, now at Axiom, first started trying to use machine learning to solve mathematical problems back in 2019.

But in those early years, it was a niche area. At first, Charton and a handful of others used AI to solve problems whose solutions were already known, just to see if they could get the new techniques to work. By 2024, they were beginning to forge ahead. They looked for problems where there was a rich set of data to analyze, and then used AI to construct mathematical objects with quantifiable properties — like optimal arrangements of points that could fit on a grid without forming an isosceles triangle.

In January 2025, Tao and Javier Gómez-Serrano of Brown University began working with two mathematicians at DeepMind, Adam Wagner and Bogdan Georgiev, on an AI system called AlphaEvolve. AlphaEvolve works by using Gemini to write programs in Python code that might be hundreds of lines long. It then “evolves” these programs using so-called genetic algorithms to attempt to find optimal solutions to math problems. The four mathematicians used AlphaEvolve on a new problem every day or two for a few months.
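To give a feel for what "evolving" candidates means, here is a toy sketch in Python. It is not AlphaEvolve: it mutates sets of grid points directly instead of evolving programs written by Gemini, and it uses only mutation and selection. The function names, the 4-by-4 grid, the population size, and the choice to count collinear triples with two equal distances as isosceles are all assumptions made for illustration.

```python
import random
from itertools import combinations

def has_isosceles(points):
    """True if some three points have two equal pairwise distances.

    Assumption: degenerate (collinear) triples count as isosceles here.
    """
    for a, b, c in combinations(points, 3):
        d_ab = (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2
        d_ac = (a[0] - c[0]) ** 2 + (a[1] - c[1]) ** 2
        d_bc = (b[0] - c[0]) ** 2 + (b[1] - c[1]) ** 2
        if d_ab == d_ac or d_ab == d_bc or d_ac == d_bc:
            return True
    return False

def evolve(n=4, generations=200, population=20, seed=0):
    """Toy evolutionary search for a large isosceles-free set on an n-by-n grid."""
    rng = random.Random(seed)
    grid = [(x, y) for x in range(n) for y in range(n)]
    pop = [frozenset()]
    for _ in range(generations):
        children = []
        for parent in pop:
            child = set(parent)
            # Mutation: sometimes drop a point, then always try adding one.
            if child and rng.random() < 0.3:
                child.remove(rng.choice(sorted(child)))
            child.add(rng.choice(grid))
            if not has_isosceles(child):
                children.append(frozenset(child))
        # Selection: keep only the largest valid sets found so far.
        survivors = set(pop) | set(children)
        pop = sorted(survivors, key=len, reverse=True)[:population]
    return max(pop, key=len)

best = evolve()
```

Calling `evolve()` returns one valid point set. The loop only ever keeps sets that pass `has_isosceles`, so the result is guaranteed to be isosceles-free, though not guaranteed to be the largest possible.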

As they did so, they also learned how to improve the prompts they gave AlphaEvolve. One key takeaway: The model seemed to benefit from encouragement. It worked better “when we were prompting with some positive reinforcement to the LLM,” Gómez-Serrano said. “Like saying ‘You can do this’ — this seemed to help. This is interesting. We don’t know why.”

By late May, the team had tried AlphaEvolve on 67 different problems in several areas of mathematics. On 23 of them, AlphaEvolve improved, in a small way, on the best known solutions. On 36 of the 67, it did as well as what had already been done, and on the remaining handful, it couldn’t match the best known result. The mathematicians shared their findings in a November 2025 paper, “Mathematical Exploration and Discovery at Scale.” Gómez-Serrano noted that any one of their results might have been obtained by an expert in a given area who worked at it for a few months. But without being experts in many of these fields, “we were able to obtain comparable results in the span of a day or two,” he said.

As Tao put it, current AI models are “very good at scouring big lists of problems for low-hanging fruit. It’s tedious and thankless and not something humans want to do.” He cautioned that models are achieving “scattered successes among a big sea of unreported failures.” But the successes are notable.

Gómez-Serrano estimates that he now spends about two-thirds of his time using AI. It is, he said, “getting to the point where it is useful and usable. This is the beginning of the new way we will do mathematics.”

Mistaken Identities

In previous years, AI’s extra power seemed to come from its ability to resurface long-forgotten proofs buried in obscure references. Igor Pak of UCLA noted that ChatGPT is currently “fantastic in finding the right references, right literature, finding connections that Google Scholar — which doesn’t work semantically — can’t.”

Then, over the course of 2025, said Johannes Schmitt of the Swiss Federal Institute of Technology Zurich, something shifted. “It started becoming useful to talk to LLMs, not because they would give you the full answer,” he said, but because “they became good conversation partners.”

Johannes Schmitt has recently noticed a rapidly growing role for AI in mathematics: as a conversation partner.

Aitor Iribar-López

The LLMs he spoke with inevitably made lots of mistakes, leading some mathematicians to dismiss them outright. Many researchers, he said, decide that if “everything it says is kind of wrong, I will just not talk to it.” But others — he puts himself in this camp — have a higher tolerance for “the pain of talking to this bullshitting model. They say, I can still get something out of this conversation; even if not every idea is good, I can ignore the bad ones and take the good ones.” And the mistakes, Schmitt noted, are weird ones: There is virtually no way that a person with any training in mathematics would make such a plethora of basic errors while also succeeding in coming up with subtle, original, and correct ideas.

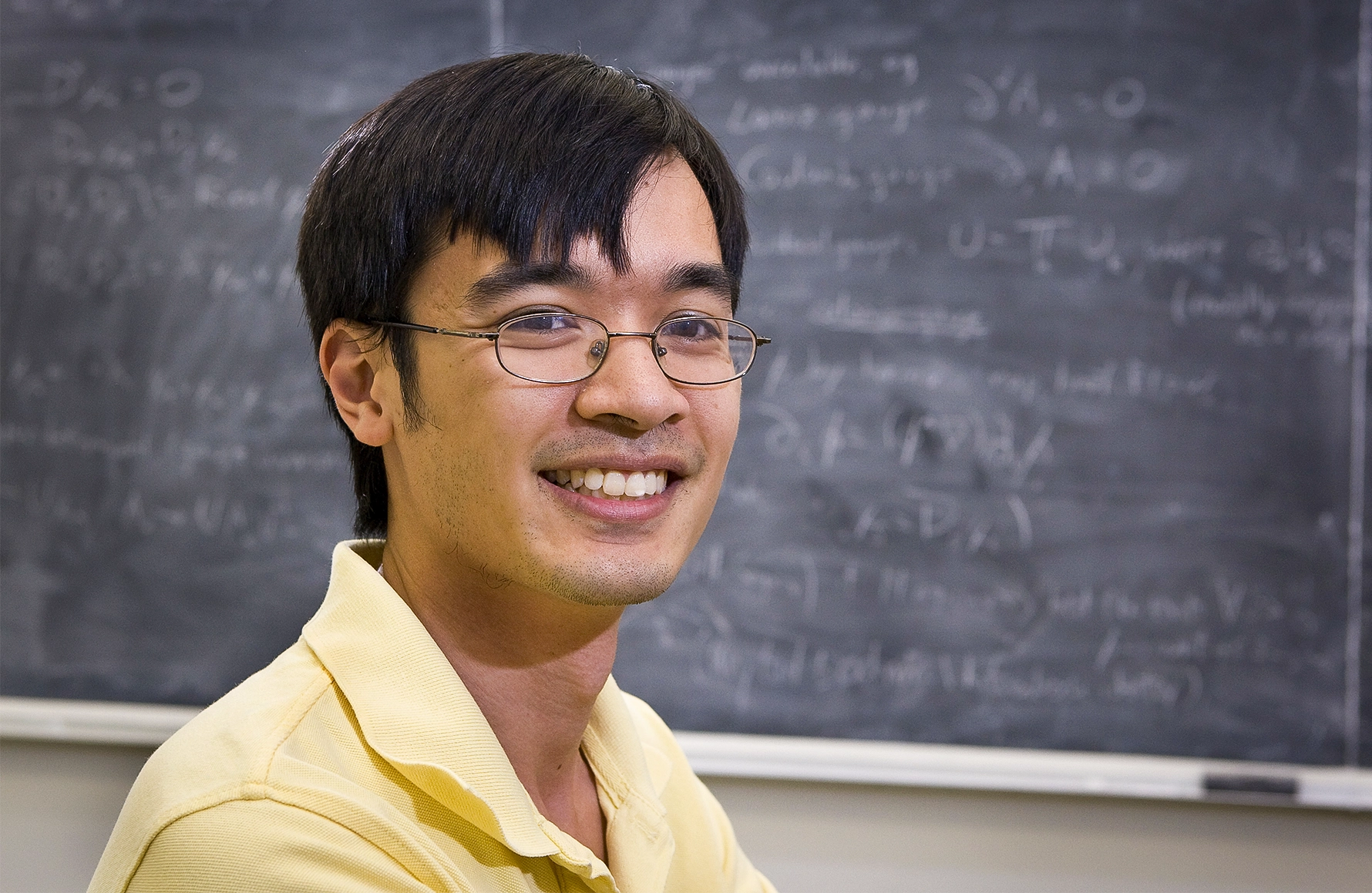

UCLA’s Ernest Ryu, who works largely in a branch of applied math called optimization theory, also started paying more attention to LLMs after the Olympiad results. Whereas AlphaEvolve was trying to optimize particular quantities, Ryu wanted to prove things about the conditions under which optimization algorithms work.

In the summer of 2025, he noticed that LLMs’ mathematical capabilities had dramatically improved. He started using them to help prepare lecture notes, mostly to fill in gaps in his memory of the details of a particular proof. At times, he said, “it would find an error in my reasoning, sometimes major, sometimes minor. Sometimes it would find a simpler proof than I had in my notes.”

He had a sense that AI models were “exhibiting signs of life.” He remembers feeling skeptical but optimistic. To make up his own mind about what LLMs can and can’t do, he decided to try an experiment. One evening in October, after his young son went to sleep, he set out to solve an open problem in optimization theory that he’d attempted a few times in the past. This time he used ChatGPT. “It’s not the most important problem, but I know 10 people who would very much appreciate a solution,” he said.

Ryu’s problem was first proposed in 1983 by a Russian mathematician named Yurii Nesterov. Nesterov was trying to find the minimum of functions that take many variables as inputs and output a single value that behaves “nicely” in a particular mathematical way. If you think of the outputs as forming an elevation map, you want to prove that you eventually converge on the lowest point, and don’t end up endlessly bouncing around in search of it.

This sort of problem arises quite often in applied math, especially in machine learning, where it is central to training neural networks. Say you start somewhere on your map. A widely used technique known as gradient descent uses basic tools from calculus to figure out which way is downhill and how steep the hill is at the point where you’re standing. Take a step downward in the steepest direction each time, and you will eventually get to the very bottom.
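A minimal sketch of gradient descent, assuming a made-up two-variable "elevation map" f(x, y) = (x - 1)^2 + 4(y + 2)^2 whose lowest point sits at (1, -2). The function names, starting point, step size, and iteration count are illustrative choices, not anything taken from Ryu's work.

```python
def gradient_descent(grad, x0, step, iters):
    """Repeatedly take a small step against the gradient, i.e. downhill."""
    x = list(x0)
    for _ in range(iters):
        g = grad(x)
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x

def grad_f(p):
    """Gradient of the assumed map f(x, y) = (x - 1)^2 + 4 * (y + 2)^2."""
    x, y = p
    return [2 * (x - 1), 8 * (y + 2)]

lowest = gradient_descent(grad_f, x0=[5.0, 5.0], step=0.1, iters=200)
```

On this simple bowl-shaped map, `lowest` ends up very close to (1, -2). A step size that is too large would make the iterates bounce or diverge instead, which is why the step size matters.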

But although gradient descent will get you to the right answer, it sometimes gets you there very slowly. So mathematicians have long looked for variations that converge to the right answer more quickly. Nesterov developed one technique for doing so, in which the size of each step downhill depends not only on how steep the function is at a given point, but also on the path you’ve already taken to get there. If you’ve been taking bigger steps in the past, you’ll continue to do so.
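The idea of letting past steps influence the next one can be sketched as follows. This uses one common textbook form of Nesterov's accelerated method, with an assumed momentum schedule of (k - 1)/(k + 2) and a made-up test function f(x, y) = (x - 1)^2 + 4(y + 2)^2. Which exact variant Ryu's proof covers is not stated here, so treat the details as illustrative.

```python
def nesterov_descent(grad, x0, step, iters):
    """Gradient steps taken from a look-ahead point that carries past momentum."""
    x_prev = list(x0)
    x = list(x0)
    for k in range(1, iters + 1):
        momentum = (k - 1) / (k + 2)  # assumed textbook schedule
        # Keep moving in the direction of the previous step before measuring the slope.
        look_ahead = [xi + momentum * (xi - pi) for xi, pi in zip(x, x_prev)]
        g = grad(look_ahead)
        x_prev = x
        x = [li - step * gi for li, gi in zip(look_ahead, g)]
    return x

def grad_f(p):
    """Gradient of the assumed map f(x, y) = (x - 1)^2 + 4 * (y + 2)^2."""
    x, y = p
    return [2 * (x - 1), 8 * (y + 2)]

accelerated = nesterov_descent(grad_f, x0=[5.0, 5.0], step=1 / 8, iters=500)
```

On this example the iterates overshoot and oscillate around x = 1 before settling, which is exactly the behavior that makes convergence of the points themselves hard to prove in general.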

It seems intuitively obvious that this will get you to the bottom of the hill more quickly. But what if you go too fast and overshoot? You might risk endlessly oscillating around the true minimum, and never attaining it. Nesterov couldn’t prove that his algorithm would eventually converge to the optimal value. And for 42 years, no one else could either.

When Ryu asked ChatGPT, “it kept giving me incorrect proofs,” he said. “But the lead-up to the inevitable error had interesting steps, correct partial results that seemed potentially useful.” As the LLM made incremental progress, he would check its answers, keep the correct parts, and feed them back into the model with a new prompt. “I had to play the role of the verifier,” Ryu said. “With ChatGPT, I felt like I was covering a lot of ground very rapidly, much more quickly than I could do on my own. That’s what kept me going.”

Within about 12 hours of work spread over three days, he had arrived at a proof of a simplified version of the problem. After a few more days, he finally proved that Nesterov’s method converges. It wasn’t, Ryu said, “the most creative thing, not the most complicated. But certainly it wasn’t that easy.” While it’s not a life-changing result, he added, “it’s something that could be published in a top optimization journal without the AI component. It’s a good result.”

“It’s a concrete instance where the use of ChatGPT really accelerated the discovery,” he said. And he thinks the capabilities of LLMs are only going to continue to improve. “If you look at the velocity of the improvement, that’s staggering. If we continue one year later, two or three model releases down the line, we are going to get really, really impressive, substantial discoveries assisted by AI. It’s going to come.”

A few months after sharing his paper on Nesterov’s method, Ryu took a leave of absence from UCLA to take a job at OpenAI, where he is now a member of the technical staff.

Order in the Court

Over the course of 2025 and into the first months of 2026, AI has been used to prove increasingly abstract results.

In September 2025, more than 100 mathematicians from around the world gathered at Brown University for a special program on algebraic combinatorics. Nicolás Libedinsky and David Plaza had come from Chile, José Simental from Mexico, Geordie Williamson from Australia, and Jordan Ellenberg from Wisconsin.

All of them were interested, for different reasons, in computing a quantity called the d-invariant, which appears in many areas of math. To understand what the d-invariant is, it helps to first look at a well-studied object in one of these areas, called the permutation group. This object is a way of describing the different ways one can shuffle a set of items, like cards in a deck.

It starts out simple. If you have a deck with just one card, you can’t shuffle it. So the permutation group $S_1$ has one element. $S_2$ has two elements: If you have two cards, they can appear in two possible orders. $S_3$ becomes a little more complicated; there are six different ways to order a deck of three cards.

Mark Belan/Quanta Magazine

The different ways of ordering the cards can be arranged into a network of vertices and edges called a graph. The starting arrangement, 123, goes at the bottom. Each edge of the graph (drawn as an arrow) represents a swap of two cards:

As the number of cards n gets larger, $S_n$ grows very quickly, making this graph next to impossible to draw for groups after $S_4$. ($S_{60}$ has about as many elements as there are atoms in the observable universe.)
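The growth can be checked directly. A deck of n cards has n factorial orderings, which is a standard fact. The short Python sketch below, whose names are illustrative, lists the orderings for small decks and counts the digits in the size of the group for 60 cards.

```python
from itertools import permutations
from math import factorial

def orderings(n):
    """List every ordering of a deck of n cards labeled 1..n."""
    return list(permutations(range(1, n + 1)))

# Sizes of the permutation groups for decks of 1 to 4 cards.
sizes = {n: len(orderings(n)) for n in range(1, 5)}

# Listing all orderings of 60 cards is hopeless, but counting them is easy.
digits_in_s60 = len(str(factorial(60)))
```

Here `sizes` comes out as 1, 2, 6, and 24 for decks of one to four cards, and the number of orderings of 60 cards has 82 digits.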

Mathematicians want to understand the structure of these graphs both as objects in and of themselves and as tools for analyzing other things.

Consider again the graph of the permutation group $S_3$, which has six elements, or permutations. We want to explore the relationship between these permutations. One way to do so is to look at all the ways to get from one permutation to another by following the arrows. A given permutation is “smaller” than another one (using a definition of size called the Bruhat order) if it is possible to travel along the arrows from the first permutation to the second. So 213 is smaller than 321.

We can then look at the “Bruhat interval” between the two permutations — the set of all the different permutations that lie between them when you follow the graph’s arrows. For example, the interval between 213 and 321 (seen below in red) includes 231 and 312. (If you can’t get from one permutation to another by following the arrows, like from 213 to 132, then neither one is smaller than the other, and the interval between them is not defined.)
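For small decks, a Bruhat interval can be computed by brute force. The sketch below assumes that an arrow leads from one ordering to another whenever swapping two cards moves a smaller card behind a larger one, so that the deck becomes more disordered. The function names are hypothetical, and this approach is only practical for very small groups.

```python
from itertools import combinations

def upward_swaps(p):
    """Orderings reached by one swap that puts a larger card ahead of a smaller one."""
    out = []
    for i, j in combinations(range(len(p)), 2):
        if p[i] < p[j]:
            q = list(p)
            q[i], q[j] = q[j], q[i]
            out.append(tuple(q))
    return out

def reachable(start):
    """Every ordering that can be reached from start by following arrows."""
    seen = {start}
    stack = [start]
    while stack:
        p = stack.pop()
        for q in upward_swaps(p):
            if q not in seen:
                seen.add(q)
                stack.append(q)
    return seen

def bruhat_interval(low, high):
    """All orderings between low and high, or None if low is not below high."""
    above_low = reachable(low)
    if high not in above_low:
        return None
    return {p for p in above_low if high in reachable(p)}

interval = bruhat_interval((2, 1, 3), (3, 2, 1))
undefined = bruhat_interval((2, 1, 3), (1, 3, 2))
```

Here `interval` contains 213, 231, 312, and 321, matching the example in the text, while `undefined` is `None` because no chain of arrows leads from 213 to 132.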

The d-invariant associated with two permutations is, loosely speaking, a measure of the complexity of their Bruhat interval’s underlying structure. The same quantity appears in a number of mathematical questions that otherwise seem unrelated, making it of great interest to mathematicians.

In bigger permutation groups, it’s hard to say in any general way what the Bruhat interval between two given permutations looks like. “Intervals are just super complicated things,” Libedinsky said. He, Simental, Plaza, Williamson, and Ellenberg — all hoping for different reasons to find the biggest possible d-invariant for a given permutation group — set out to get AI to help them.

They ended up finding something else entirely.

In October 2025, Ellenberg asked Wagner at DeepMind to use AlphaEvolve (which is not publicly available) to analyze the structures of the Bruhat intervals of dozens of permutation groups. It ran overnight. “In the morning, we were like, this program is really doing something interesting,” Williamson said. “And then I remember a flurry of emails back and forth that day.”

The LLM talked to itself while performing calculations. “I’m about to propose something truly outlandish, a ‘Crazy Ivan’ maneuver for this problem,” it mused, referring to a sharp turn submarines sometimes take to detect their adversaries, popularized in the Tom Clancy novel The Hunt for Red October.

Ultimately, AlphaEvolve generated something like 50 lines of Python code in its attempts to find intervals with large d-invariants. As the mathematicians tried to figure out what this code was doing, Ellenberg realized that if the number of cards in the deck was a power of 2 (like 16, which is $2^4$), then the program became much shorter, only about five lines long. “You can analyze it very explicitly,” Williamson said. “It’s doing something very beautiful.”

Clockwise from top left: Jordan Ellenberg, José Simental, Geordie Williamson, Nicolás Libedinsky, and David Plaza uncovered a surprising new structure in a well-studied mathematical object thanks to AI.

Lauren Justice for Quanta Magazine; Imelda Paredes Zamorano/Instituto de Matemáticas UNAM; Sydney University; Esteban Román; Fernanda Fuentes

As they related in a preprint on January 3, 2026, AlphaEvolve had found that the Bruhat intervals in these particular permutation groups had a surprisingly special structure. When the researchers studied the intervals, they found that they formed higher-dimensional cubes called hypercubes. “If you look at what AlphaEvolve was thinking, I was super surprised,” Libedinsky said. “If it was a human, it would be an extremely creative human.”

AlphaEvolve had answered a question they didn’t know they had. “We didn’t ask AlphaEvolve to find big hypercubes,” Ellenberg said. “We asked it to find something else, and we thought about it and realized it was a gigantic hypercube which we had not anticipated was there.”

As Williamson put it, “It’s a structure that’s been sitting there for 50 years in front of our nose. We just hadn’t noticed it.”

Older machine learning methods had previously enabled such serendipitous mathematical discoveries, too — uncovering patterns no one had thought to look for. But in the past, Williamson said, it was a “real engineering effort. … You need to know how to code, spend a lot of time looking at details of neural network training. It was basically extremely difficult for a mathematician with no significant machine learning background to do this.”

With LLMs, “I can suddenly do an experiment in 20 minutes that two years ago would have taken me two weeks,” he said. Though “most of the time it doesn’t work,” AI can now be used like never before “to discover the world that has riches beyond our imagination.”

Around Sphere

Though Bruhat intervals seem like purely combinatorial objects, they also play an important role in a particularly abstract area of math called algebraic geometry, which Ravi Vakil, a mathematician at Stanford University and the current president of the American Mathematical Society, specializes in.

Algebraic geometry is the study of shapes defined by polynomial equations like $x^3 + 2x^2y + xz = 5$, which involve a sum of terms built from variables raised to whole-number exponents. The degree of the equation is the largest total exponent appearing in any single term, in this case 3.

Ravi Vakil and his colleagues recently came up with a novel proof idea while chatting with a bespoke version of Gemini. “Who is that idea due to?” he asked. “Is it due to us? Is it due to the model?”

Rod Searcey

Vakil and his colleagues, Balázs Elek of the University of New South Wales and Jim Bryan of the University of British Columbia, were interested in studying how spheres can be embedded in special spaces called flag varieties. (Flag varieties appear in the Bruhat team’s paper as well.) Each embedding — a way of associating each point on the sphere to a point within the flag variety — can be defined by a polynomial equation.

There are lots of ways to embed the sphere. Mathematicians represent each embedding as its own point in a separate high-dimensional space. They then study the embeddings defined by polynomials of different degrees by analyzing the different spaces they form.

As the degree increases, mathematicians want to understand how these spaces change. They knew that when the degree gets arbitrarily large — as it goes to infinity — the space resembles the space of all continuous embeddings, not just those defined by polynomials. But when does this resemblance come to pass?

Vakil and his colleagues had found examples that suggested, to their surprise, that it happens very quickly. “There was some consistency that was not supposed to happen until you reached infinity, and it already happened,” he said.

So, together with Freddie Manners and George Salafatinos, who were then working for DeepMind, they set out to prove it using two specialized modules built atop Google Gemini: DeepThink, which is publicly available, and a system developed by Salafatinos, called FullProof, which is not. They started with a simpler case. “The proof it gave was very elegant, correct, beautifully written. We could follow it line by line,” Vakil said. “It made clear a structure that was not obvious at the time. From that, we realized how the whole argument and significant generalization should potentially work.”

Vakil and his colleagues then went back to the AI model, sketching a proof of the general case and asking it to fill in the details. As they reported in a preprint on January 12, 2026, it succeeded. “To me,” Vakil said, “the real thing was the first thing” — DeepMind’s proof of the simpler case. “The clarity of the argument gave us a new idea.” But he wonders: “Who is that idea due to? Is it due to us? Is it due to the model?”

However one ascribes credit, Vakil said, “I believe I would have come up with the proof given enough time.”

But then he hesitated. “I think so. I’m not sure. I don’t know. Maybe I would have done it in a clunky way. Very possibly, the paper wouldn’t have happened without the assistance.”

And finally: “We needed to go back and forth. AI models will help us do mathematics by letting us do things we did not have time to do before.”

This is perhaps a paradigmatic example of how AI can be useful today. A group of expert mathematicians, with help from a big tech company, figures something out faster than they likely would have otherwise — and they are sure it is correct, because they can check it line by line.

All Ye Need To Know

In asking what AI is doing to mathematical research, we shouldn’t only look at the successes. Litt cautioned that “there is a lot of pollution of the commons by AI-generated nonsense.” Joel David Hamkins of the University of Notre Dame said he is “despairing of this ocean of slop that is overwhelming our journal systems.”

Mathematicians are pinning their hopes on formal proof as the way to navigate this ocean of slop. They’re converting proofs into a language that computers can understand, and then using computer programs to verify that all the logic in the proof pans out. “AI without validation is too unreliable to be of use in any serious application,” Tao said.
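To show what a machine-checkable proof looks like at the smallest scale, here is a sketch in Lean 4, one of the proof assistants used for this kind of work. The theorem name is made up for illustration. `Nat.add_comm` is a lemma from Lean's core library, and real formalizations of research mathematics are vastly longer than this.

```lean
-- The order of addition does not matter for natural numbers.
-- Lean accepts this only because the cited lemma proves exactly the stated claim.
theorem add_comm_sketch (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- A concrete arithmetic fact, checked by direct computation.
example : 2 + 2 = 4 := rfl
```

If either statement were false, or the justification did not match it, the proof checker would reject the file instead of accepting it.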

Currently, formalizing mathematical proofs in this way is a time-consuming, intricate process that itself takes substantial mathematical knowledge and is a bit of a craft. And so mathematicians are increasingly turning to “autoformalization,” in which AI models translate mathematical statements into formal, logical ones and then prove them. “For the first time,” Tao said, “it does feel like we could formalize a significant fraction of mathematics through AI.”

The other major challenge that many mathematicians see as a consequence of AI’s increasing ability to do math is how it will affect the way students learn. Even the most ardent proponents of AI are concerned. Ken Ono, a professor at the University of Virginia who recently took a leave of absence to become the “founding mathematician” at Axiom, told me he sees “a rosy picture about how AI can help mathematics research, but I am deeply concerned about the role of AI in the future of work and training at all levels.”

Tao said, “Many of the problems we assign, AI can solve instantly. This can discourage a lot of the students from building up their mental muscles.”

Hamkins agreed. “I used to assign quite a bit of homework. I just can’t do it anymore,” he said; a substantial fraction of the assignments students turn in are written by AI. “I don’t want to read it. I don’t want to be the AI cop.” Though homework was highly pedagogically valuable, now “everything has to be in-class quizzes and work. It’s a problem for the entire academic profession.”

As another mathematician at a leading research university told me, “There is a serious risk that, in parallel with accelerating the progress of serious mathematical researchers, AI prevents us from making more mathematical researchers.”

Even with the rapid changes of the past year, none of the mathematicians I spoke to in reporting this piece fear that the subject will become obsolete. Tao gave the analogy of mathematicians trying to climb “a big mountain range with lots of tall mountains and lots of foothills.” Humans can only climb one step at a time, but they can plan a route to the top of a mountain like Everest. Meanwhile, Tao said, current AIs are like jumping robots. They can sometimes “parkour their way to the top of a 6-foot wall” that a human couldn’t climb. But they can’t do long-term strategic planning. Those 6 feet might become 10 feet, or 100, Tao imagines, but “the little jumping robots are nowhere near the Mount Everests of math.”

Pak thinks that certain Everests — such as a major problem in number theory about whether sums like π + e can be written as fractions — will remain unresolved for centuries. “I’m really doubtful AI can make any dents there at all,” he said. “This is not something that AI would be able to do. But I’m quite positive that if humanity survives, eventually we will figure it out.”

Of course, a lot depends on how the capabilities of AI algorithms change and improve in coming years. Even the most astute and careful observers can’t say for sure how the models will develop. Few see signs of stagnation. “Things are moving very fast. I don’t see any sign they are slowing down,” Litt said. The first few months of 2026 have already seen a steady stream of new results from big companies like Google and OpenAI and small ones like Axiom, as well as from academics and even hobbyists.

“My expectation is surely in 20 years we are going to see AI tools generating mathematics that in many measurable ways are better than every human mathematician,” Litt said. “I would be shocked if that doesn’t happen.”

But as Venkatesh told me, “In the end, there are infinitely many ways to formulate any piece of math.” The choices we make, he said, are governed by human values and shaped by the fact that mathematics is not only a science but also an art.

That balance between science and art is in large measure what gives math its beauty — one of the “valuable things in our culture” that Venkatesh wants to retain. If AI pushes mathematics away from its artistic heritage, the discipline will be diminished, even if more theorems are proved each month. After all, no poet talks seriously about doing statistical regression on sonnets to find the optimal ones.

The best hope for AI is that it will help mathematicians find and prove things that would otherwise have remained mysteries. Most mathematicians agree that that’s what computers have done for the past 80 years. But the scale of the change now underway has left many feeling unsettled.

The world’s biggest annual mathematics conference is held in early January. In 2026, in Washington, D.C., nervous jokes about being made obsolete by AI were plentiful, even if, on the record, everyone insisted that AI will be a helpmate to human mathematicians. Williamson, who has been working with AI for years and is very excited by it, was chosen to deliver a series of prestigious lectures about AI and math to the entire conference. He told the audience that it’s a mistake to react to AI developments with ignorance and fear.

But he said he understands where the fear comes from. He sees mathematics as a “craft that people have spent their lives — dedicated their lives — towards. There is some possibility that its value may be greatly diminished in the future.”