Anil Seth Finds Consciousness in Life’s Push Against Entropy

In his laboratory at the University of Sussex, the neuroscientist Anil Seth monitors brain activity for clues to the origins of consciousness.

Tom Medwell for Quanta Magazine


Anil Seth wants to understand how minds work. As a neuroscientist at the University of Sussex in England, Seth has seen firsthand how neurons do what they do — but he knows that the puzzle of consciousness spills over from neuroscience into other branches of science, and even into philosophy.

As he puts it near the start of his new book, Being You: A New Science of Consciousness (available October 19): “Somehow, within each of our brains, the combined activity of billions of neurons, each one a tiny biological machine, is giving rise to a conscious experience. And not just any conscious experience, your conscious experience, right here, right now. How does this happen? Why do we experience life in the first person?”

This puzzle, the mystery of how physical processes in the brain give rise to a self-aware mind with a rich inner life, is what the philosopher David Chalmers called the “hard problem” of consciousness. But as Seth sees it, Chalmers was overly pessimistic. Yes, it’s a challenge, but we’ve been chipping away at it steadily over the years.

“I always get a little annoyed when I read people saying things like, ‘Chalmers proposed the hard problem 25 years ago’ … and then saying, 25 years later, that ‘we’ve learned nothing about this; we’re still completely in the dark, we’ve made no progress,’” said Seth. “All this is nonsense. We’ve made a huge amount of progress.”

Quanta recently caught up with Seth at his home in Brighton via videoconference. The interview has been condensed and edited for clarity.

Why has this problem of consciousness been so vexing, over the centuries — harder, it seems, than figuring out what’s inside an atom or even how the universe began?

When we think about consciousness or experience, it just doesn’t seem to us to be the sort of thing that admits an explanation in terms of physics and chemistry and biology. There’s a suspicion that scientific explanation (by which I mean broadly materialist, reductive explanation, which has been so successful elsewhere in physics, chemistry and biology) just might not be up to the job, because consciousness is intrinsically private.

In this study of “displaced perception” in Seth’s lab, a volunteer wears a virtual reality headset that provides a view of a mannequin’s chest. This setup can trick the brain into “feeling” touches that aren’t on the body.


That leap from the physical to the mental is something that philosophers have grappled with for centuries. René Descartes, for example, famously argued that nonhuman animals were akin to machines, while humans had something extra that made consciousness possible. In your book, you mention the work of a less familiar figure, the 18th-century French scholar Julien Offray de La Mettrie. How did his views differ from those of Descartes, and how do they bear on your own work?

La Mettrie is a fascinating character, a polymath type of figure. I think of him as basically taking Descartes’ ideas and extending them to their natural conclusions, by not being worried about what the [Catholic] Church might say. Descartes was always trying to finesse his arguments in order to avoid being burned alive, or otherwise being subject to harsh clerical treatment. Descartes regarded nonhuman animals as “beast-machines.” (This is a term I re-appropriate and hope to rehabilitate in my book.) The beast-machine for Descartes was the idea that nonhuman animals were machines made of flesh and blood, lacking the rational, conscious minds that bring humans closer to God.

La Mettrie said, “OK, if animals are flesh-and-blood machines, and humans are animals, too, of a certain sort, then humans are machines as well.” So just as there is a beast-machine, or a bête machine, there is also l’homme machine, the “man machine.” He just extended the same basic idea without this artificial division.

How does consciousness play into that picture? How is consciousness related to our nature as living machines, in a way that’s continuous between humans and other animals? In my work — and in the book — I eventually get to the point that consciousness is not there in spite of our nature as flesh-and-blood machines, as Descartes might have said; rather, it’s because of this nature. It is because we are flesh-and-blood living machines that our experiences of the world and of “self” arise.

You’re clearly more drawn to some of the approaches to consciousness that researchers have put forward than others. For example, you seem to support the work that Giulio Tononi and his colleagues at the University of Wisconsin have been doing on “integrated information theory” (IIT). What is integrated information theory, and why do you find it promising?

Well, I find some bits of IIT promising, but not others. The promising bit comes from what Gerald Edelman and Tononi together observed, in the late ’90s, which is that conscious experiences are highly “informative” and always “integrated.”

They meant information in a technical, formal sense, not the informal sense in which reading a newspaper is informative. Rather, conscious experiences are informative because every conscious experience is different from every other experience you have ever had, could ever have, or will ever have. Each one rules out the occurrence of a very, very large repertoire of alternative possible conscious experiences. When I look out of the window right now, I have never experienced this precise visual scene. The experience becomes even more distinctive when combined with all my thoughts, background emotions and so on. And this is what information, in information theory, measures: It’s the reduction of uncertainty among a repertoire of alternative possibilities.
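The sense of “information” Seth describes can be made concrete with a short calculation. In Shannon’s framework, learning which one of N equally likely alternatives occurred removes log2 N bits of uncertainty, and an outcome with probability p carries -log2 p bits of “surprisal.” The Python sketch below is illustrative only: the function names and repertoire sizes are hypothetical choices, not estimates of any real brain’s repertoire of experiences.

```python
import math


def bits_for_uniform_repertoire(n_alternatives: int) -> float:
    """Information (in bits) gained when one of n equally likely alternatives occurs."""
    if n_alternatives < 1:
        raise ValueError("repertoire must contain at least one alternative")
    return math.log2(n_alternatives)


def surprisal(p: float) -> float:
    """Shannon surprisal, -log2 p, of an outcome with probability p."""
    if not 0 < p <= 1:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(p)


def entropy(probs) -> float:
    """Average uncertainty (in bits) of a distribution over alternatives."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


# Hypothetical repertoire sizes: ruling out more alternatives yields more information.
for n in (2, 1024, 2**20):
    print(n, bits_for_uniform_repertoire(n))
```

Each doubling of the repertoire adds one bit, which is the formal sense in which an experience that rules out a vast number of alternatives is highly informative.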

As well as being informative, every conscious experience is also integrated. It’s experienced “all of a piece”: Every conscious scene appears as a unified whole. We don’t experience the colors of objects separately from their shapes, nor do we experience objects independently of whatever else is going on. The many different elements of my conscious experience right now all seem tied together in a fundamental and inescapable way.

So at the level of experience, at the level of phenomenology, consciousness has these two properties that coexist. Well, if that’s the case, then what Tononi and Edelman argued was that the mechanisms that underlie conscious experiences in the brain or in the body should also co-express these properties of information and integration.

Then is integrated information theory an attempt to quantify consciousness — to attach numbers to it?

Basically, yes. IIT proposes a quantity called phi, which measures both information and integration and which, according to the theory, is identical to the amount of consciousness associated with a system.
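Computing phi itself is involved, and the details differ between versions of IIT, so no attempt is made at it here. As a much simpler illustration of what it can mean to put a number on “integration,” the sketch below computes multi-information (also called total correlation): the sum of the entropies of a system’s parts minus the entropy of the whole, which is zero when the parts behave independently. This is not phi; it is closely related to the integration measure Tononi and Edelman used, with Olaf Sporns, in earlier work on neural complexity. The two-unit toy systems and the function names are hypothetical.

```python
import math
from collections import Counter
from itertools import product


def entropy_from_counts(counts: Counter) -> float:
    """Entropy (in bits) of the empirical distribution given by a Counter."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values() if c > 0)


def multi_information(samples) -> float:
    """Total correlation: sum of marginal entropies minus joint entropy (bits).

    samples: list of equal-length tuples, each tuple one observed joint state.
    """
    n_units = len(samples[0])
    joint = entropy_from_counts(Counter(samples))
    marginals = sum(
        entropy_from_counts(Counter(s[i] for s in samples)) for i in range(n_units)
    )
    return marginals - joint


# Two binary units that are independent: all four joint states occur equally often.
independent = list(product((0, 1), repeat=2))
# Two binary units that always agree: only (0, 0) and (1, 1) occur.
coupled = [(0, 0), (1, 1)]

print(multi_information(independent))  # 0.0
print(multi_information(coupled))      # 1.0
```

Note that a system can score well on this measure simply by having its parts move in lockstep, which leaves it with little information overall; measures in this tradition therefore try to capture information and integration at the same time, as Seth describes.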

One thing that immediately follows from this is that you have a nice post hoc explanation for certain things we know about consciousness. For instance, that the cerebellum — the “little brain” in the back of our head — doesn’t seem to have much to do with consciousness. That’s just a matter of empirical fact; the cerebellum doesn’t seem much involved. Yet it has three-quarters of all the neurons in the brain. Why isn’t the cerebellum involved? You can make up many reasons. But the IIT reason is a very convincing one: The cerebellum’s wiring is not the right sort of wiring to generate co-expressed information and integration, whereas the cortex is, and the cortex is intimately related to consciousness.

I should also say that the parts of IIT I find less promising are where it claims that integrated information actually is consciousness, that there’s an identity between the two. Among other things, taking this stance makes phi almost impossible to measure for any nontrivial system. It also implies that consciousness is sort of everywhere, since many systems, not just brains, can generate integrated information.

You also seem fascinated by Karl Friston’s “free-energy principle.” Can you give a layperson-friendly explanation of what this is, and how it can help us understand minds?

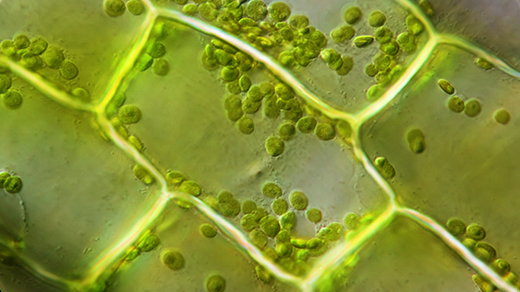

I think the simplest articulation of the free-energy principle is this: Let’s think about living systems — a cell or an organism. A living system maintains itself as separate from its environment. For example, I don’t just dissolve into mush on the floor. It’s an active process: I take energy in, and I maintain myself as a system which maintains its boundaries with the world.

This means that of all the possible states my body could be in — all the possible combinatorial arrangements of my different components — there’s only a very, very small subset of “statistically expected” states that I remain in. My body temperature, for instance, remains in a very small range of temperatures, which is one of the reasons I stay alive. How do I do this? How does the organism do this? Well, it must minimize the uncertainty of the states that it’s in. I have to actively resist the second law of thermodynamics, so I don’t dissipate into all kinds of states.

The free-energy principle is not itself a theory about consciousness, but I think it’s very relevant because it provides a way of understanding how and why brains work the way they do, and it links back to the idea that consciousness and life are very tightly related. Very briefly, the idea is that to regulate things like body temperature — and, more generally, to keep the body alive — the brain uses predictive models, because to control something it’s very useful to be able to predict how it will behave. The argument I develop in my book is that all our conscious experiences arise from these predictive models which have their origin in this fundamental biological imperative to keep living.
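Seth’s point that control is easier when you can predict can be illustrated with a toy thermoregulation loop. This is a minimal sketch under loudly stated assumptions: the linear heat-loss dynamics, the parameter values and all the names are hypothetical, and the controller is ordinary model-based (predictive) control, not Friston’s free-energy formalism, which is considerably more involved.

```python
from dataclasses import dataclass


@dataclass
class ToyBody:
    """Hypothetical body whose core temperature drifts toward a colder environment."""
    temperature: float = 37.0
    ambient: float = 20.0
    leak: float = 0.05  # assumed fraction of the gap to ambient lost per step

    def step(self, heating: float) -> None:
        # Heat loss toward ambient plus whatever heat the regulator adds this step.
        self.temperature += self.leak * (self.ambient - self.temperature) + heating


def predicted_next(temp: float, ambient: float, leak: float) -> float:
    """The regulator's internal model: where temperature will go with no action."""
    return temp + leak * (ambient - temp)


def predictive_heating(body: ToyBody, set_point: float = 37.0) -> float:
    """Choose heating so the *predicted* next temperature lands on the set point."""
    forecast = predicted_next(body.temperature, body.ambient, body.leak)
    return max(0.0, set_point - forecast)  # this toy regulator can heat but not cool


def run(steps: int = 50, use_prediction: bool = True) -> float:
    """Simulate the toy body and return its final temperature."""
    body = ToyBody()
    for _ in range(steps):
        heating = predictive_heating(body) if use_prediction else 0.0
        body.step(heating)
    return body.temperature
```

Calling `run()` keeps the toy temperature at the 37.0 set point, while `run(use_prediction=False)` lets it drift toward the 20.0 ambient. A purely reactive rule that heats in proportion to the error it has already sensed would, in this toy model, settle near 36.2 rather than 37.0; acting on the forecast is what removes that lag.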

In your book, you discuss how things become less mysterious as we understand the science behind them better, and you wonder whether the mystery of consciousness might go away more in the manner of the mystery of “heat” or more like the mystery of “life.” Can you expand on that a little bit?

There may be another connection between consciousness and life, but in this case the connection is more historical than literal. Historically, there is a commonality between the apparent mysteries of “life” and of “heat,” which is that both eventually went away — but they went away in different ways.

Let’s take heat first. Some years ago I read this brilliant book called Inventing Temperature by the philosopher and historian of science Hasok Chang. Until then, I had not realized how complicated and rich and convoluted the story of heat and temperature was. Back in the 17th century, efforts to understand the basis of heat depended on ways to measure hotness and coolness: to come up with a scale of temperature and things like thermometers. But how do you build a thermometer until you have a reliable benchmark, a fixed point of temperature? And how do you get a temperature scale until you’ve got a reliable thermometer? It was a chicken-and-egg problem, and a genuinely hard one at the time. But it was managed: People, bit by bit, bootstrapped reliable thermometers into existence.

Once the ability to measure was in place, the story of heat turned out to be a maximally reductive scientific explanation. Previously, people had wondered whether heat was this thing that flowed between objects. Well, it’s not. Heat turns out to be identical to something else — in this case, the mean molecular kinetic energy of atoms or molecules in a substance. That is what heat is.
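Kinetic theory gives this identity an exact form in the simplest textbook case, an ideal monatomic gas. Strictly speaking, the quantity tied to mean molecular kinetic energy is temperature, while heat names energy transferred between bodies. With m the molecular mass, v the molecular speed, k_B Boltzmann’s constant and T the absolute temperature:

$$\langle E_{\mathrm{kin}} \rangle = \tfrac{1}{2} m \langle v^{2} \rangle = \tfrac{3}{2} k_{B} T$$

At T = 300 K this works out to about 6.2 × 10⁻²¹ joules per molecule (1.5 × 1.381 × 10⁻²³ J/K × 300 K). For liquids, solids and polyatomic molecules the relation between temperature and molecular motion is more involved, so the equation is best read as an illustration of the reduction rather than its general statement.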

Life is very different. Nobody measures how “alive” something is; it didn’t get resolved in that way. But people still wondered what the essence of life really was — whether life required some élan vital, this “spark of life.” Well, it does not. The key to unlocking life was to recognize that it is not just one thing. Life is a constellation concept — a cluster of related properties that come together in different ways in different organisms. There’s homeostasis, there’s reproduction, there’s metabolism, and so on. With life there are also gray areas, things that from some perspectives we would describe as being alive, and from others not — like viruses and oil droplets, and now synthetic organisms. But by accounting for its diverse properties, the suspicion that we still needed an élan vital, a spark of life — some sort of vitalistic resonance — to explain it went away. The problem of life wasn’t solved; it was “dissolved.”


Which of these two ways do you suppose the puzzle of consciousness will play out?

Let’s be optimistic and say that this problem, consciousness, will go away too. We’ll look back in 50 years or 500 years, and we’ll say, “Oh, yeah, we understand now.” Will it be a story much like temperature and heat, where we say that consciousness is identical to something else — let’s say, something like integrated information? IIT is in fact the theory that goes most strongly with the temperature analogy. Maybe it will turn out that way, if Giulio [Tononi] is right.

And what if consciousness ends up being more like life?

This, for me, is the more likely outcome. Here, it’s going to be a case of saying consciousness is not this one big, scary mystery, for which we need to find a humdinger eureka moment of a solution. Rather, being conscious, much like being alive, has many different properties that will be expressed in different ways among different people, among different species, among different systems. And by accounting for each of these properties in terms of things happening in brains and bodies, the mystery of consciousness may dissolve too.

You describe perception in your book as a “controlled hallucination.” This gets us into somewhat philosophical territory. How do we decide what’s “real” and what’s an illusion?

I don’t want to be misinterpreted, as I sometimes have been, especially because of the title of my TED Talk, “Your Brain Hallucinates Your Conscious Reality,” which has led some people to say, “Go and stand in front of a bus and you’ll revise that opinion.” I don’t need to revise it; that’s already my opinion: Buses will hurt you. At the level of macroscopic, classical physics that we and buses inhabit, buses are real, whether you’re looking at them or not.

But the way we experience “bus-ness” — that which we experience as being [the qualities of] a bus — is different from its objective physical existence. Let’s say the bus is red; now, redness is a mind-dependent property. Maybe bus-ness itself is also a mind-dependent property.

I don’t go all the way to what in philosophy you might call some version of idealism: the view that everything is a property of the mental. Some people do. This is where I diverge a little bit from people like [the cognitive scientist] Donald Hoffman. We line up in agreeing that perception is an active construction in the brain and that the goal of perception is not to create a veridical, accurate representation of the real world, but is instead geared toward helping the survival prospects of an organism. We see the world not as it is, but as it’s useful for us to see it.

But he goes further, ending up in a kind of panpsychist idealism in which some degree of consciousness inheres in everything. I just don’t buy it, frankly, and I don’t think you need to go there. He might be right, but it’s not testable. I think you can tell a rich story about the nature of consciousness and perception while retaining a broadly realist view of the world.

Where do you stand on the question of conscious machines?

I don’t think we should even be trying to build a conscious machine. It’s massively problematic ethically because of the potential to introduce vast new forms of artificial suffering into the world. Worse, we might not even recognize it as suffering, because there’s no reason to think that an artificial system having an aversive conscious experience will manifest that fact in a way we can recognize as being aversive. We will suddenly have ethical obligations to systems when we’re not even sure what their moral or ethical status is. We shouldn’t do this without having really laid down some ethical warning lines in advance.


So we shouldn’t build conscious machines — but could we? Does it matter that a conscious machine wouldn’t be biological — that it would have a different “substrate,” as philosophers like to put it?

There’s still, for me, no totally convincing reason to believe that consciousness is either substrate-independent or substrate-dependent — though I do tend toward the latter. There are some things which are obviously substrate-independent. A computer that plays chess is actually playing chess. But a computer simulation of a weather system does not generate actual weather. Weather is substrate-dependent.

Where does consciousness fall? Well, if you believe that consciousness is some form of information processing, then you’re going to say, “Well, you can do it in a computer.” But that’s a position you choose to take — there’s no knock-down evidence for it. I could equally choose the position that says, no, it’s substrate-dependent.

I’m still wondering what would make it substrate-dependent. Living things are made from cells. Is there something special about cells? How are they different from the components of a computer?

This is why I tend toward the substrate-dependent view. This imperative for self-organization and self-preservation in living systems goes all the way down: Every cell within a body maintains its own existence just as the body as a whole does. What’s more, unlike in a computer where you have this sharp distinction between hardware and software — between substrate and what “runs on” that substrate — in life, there isn’t such a sharp divide. Where does the mind-ware stop and the wetware start? There isn’t a clear answer. These, for me, are positive reasons to think that the substrate matters; a system that instantiates conscious experiences might have to be a system that cares about its persistence all the way down into its mechanisms, without some arbitrary cutoff. No, I can’t demonstrate that for certain. But it’s one interesting way in which living systems are different from computers, and it’s a way which helps me understand consciousness as it’s expressed in living systems.

But conscious or not, you’re worried that our machines will one day seem conscious?

I think the situation we’re much more likely to find ourselves in is living in a world where artificial systems can give the extremely compelling impression that they are conscious, even when they are not. Or where we just have no way of knowing, but the systems will strongly try to convince us that they are.

I just read a wonderful novel, Klara and the Sun, by Kazuo Ishiguro, which is a beautiful articulation of all the ways in which having systems that give the appearance of being conscious can screw with our human psyches and minds. Alex Garland’s film Ex Machina does this beautifully. Westworld does it too. Blade Runner does it. Literature and science fiction have addressed this question, I think, much more deeply than much of AI research has — at least so far.