What's up in Computer Science

Latest Articles

Distinct AI Models Seem To Converge On How They Encode Reality

Is the inside of a vision model at all like a language model? Researchers argue that as the models grow more powerful, they may be converging toward a singular “Platonic” way to represent the world.

The Year in Computer Science

Explore the year’s most surprising computational revelations, including a new fundamental relationship between time and space, an undergraduate who overthrew a 40-year-old conjecture, and the unexpectedly effortless triggers that can turn AI evil.

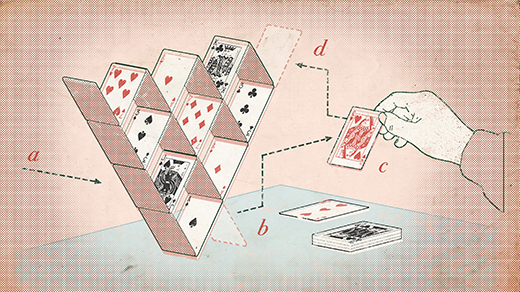

Cryptographers Show That AI Protections Will Always Have Holes

Large language models such as ChatGPT come with filters to keep certain information from getting out. A new mathematical argument shows that such systems can never be completely safe.

‘Reverse Mathematics’ Illuminates Why Hard Problems Are Hard

Researchers have used metamathematical techniques to show that certain theorems that look superficially distinct are in fact logically equivalent.

To Have Machines Make Math Proofs, Turn Them Into a Puzzle

Marijn Heule turns mathematical statements into something like Sudoku puzzles, then has computers go to work on them. His proofs have been called “disgusting,” but they go beyond what any human can do.

In a First, AI Models Analyze Language As Well As a Human Expert

If language is what makes us human, what does it mean now that large language models have gained “metalinguistic” abilities?

The Game Theory of How Algorithms Can Drive Up Prices

Recent findings reveal that even simple pricing algorithms can make things more expensive.

Researchers Discover the Optimal Way To Optimize

The leading approach to the simplex method, a widely used technique for balancing complex logistical constraints, can’t get any better.

How One AI Model Creates a Physical Intuition of Its Environment

The V-JEPA system uses ordinary videos to understand the physics of the real world.