Myriam Wares for Quanta Magazine

Introduction

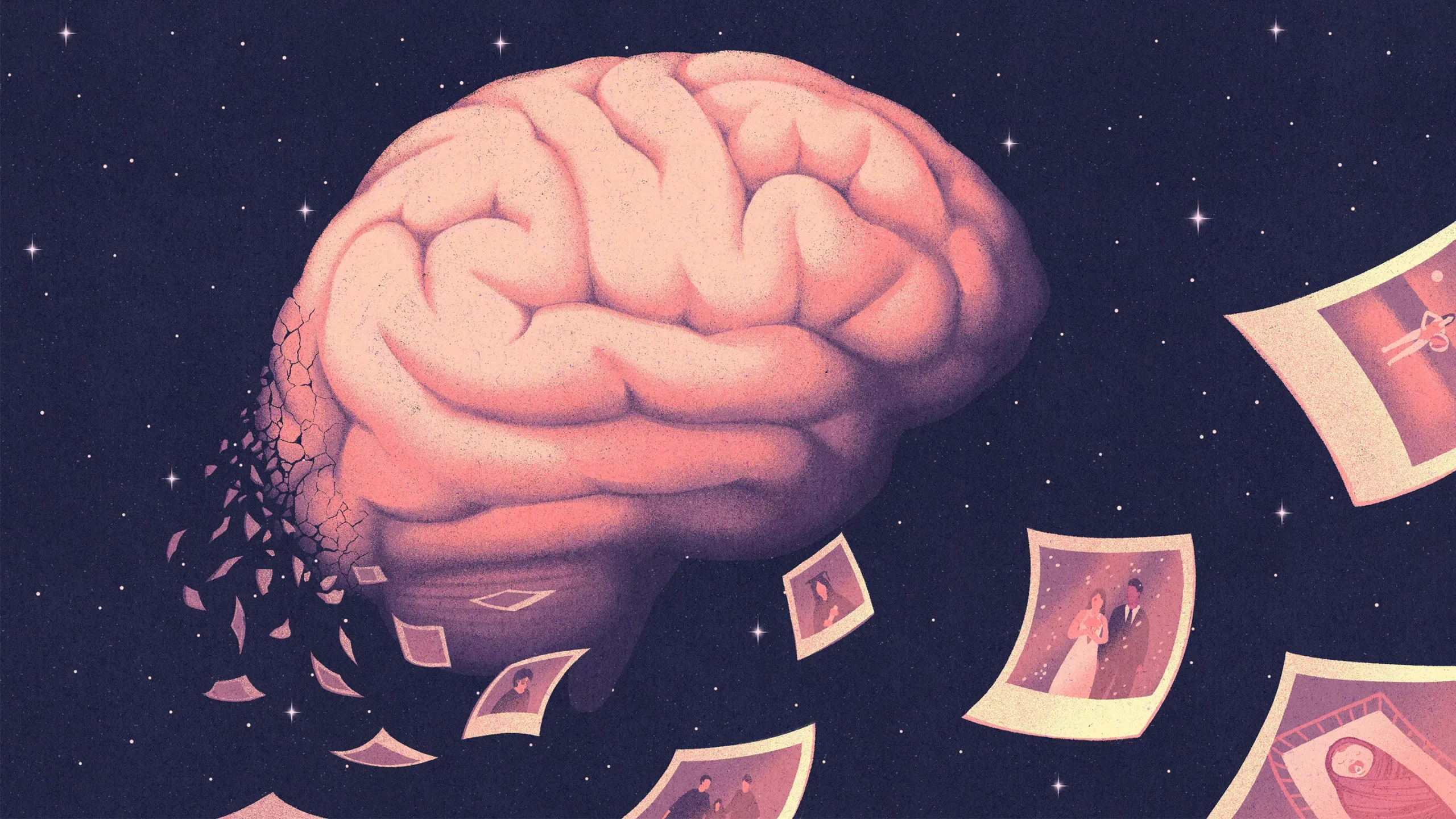

Our memories are the cornerstone of our identity. Their importance is a big part of what makes Alzheimer’s disease and other forms of dementia so cruel and poignant. It’s why we’ve hoped so desperately for science to deliver a cure for Alzheimer’s, and why it is so frustrating and tragic that useful treatments have been slow to emerge. Great excitement therefore surrounded the announcement in September that a new drug, lecanemab, slowed the progression of the disease in clinical trials. If it is approved by the Food and Drug Administration, lecanemab will become only the second Alzheimer’s treatment that counteracts amyloid-beta protein, which is widely supposed to be the cause of the disease.

Yet the effects of lecanemab are so marginal that researchers debate whether the drug will really make a practical difference for patients. The fact that lecanemab stands out as a bright spot speaks to how dismal much of the history of research on treatments for Alzheimer’s has been. Meanwhile, a deeper understanding of the biology at play is fueling interest in the leading alternative theories for what causes the disease.

Speculation about how memory works is at least as old as Plato, who in one of his Socratic dialogues wrote about “the gift of Memory, the mother of the Muses,” and compared its operation to a wax stamp in the soul. We can be grateful that science has vastly improved on our understanding of memory since Plato’s time — out with the wax stamps, in with “engrams” of changes in our neurons. In this past year alone, researchers have made exciting strides toward learning how and where in the brain different aspects of our memories reside. More surprisingly, they have even found biochemical mechanisms that distinguish good memories from bad ones.

Because we are creatures with brains, we often think about memory in purely neurological terms. Yet work published early in 2022 by researchers at the California Institute of Technology suggests that even individual stem cells in developing tissues may carry some records of their lineage’s history. These cells seem to rely on that stored information when they are faced with decisions about how to specialize in response to chemical cues. Advances in biology over this past year unveiled many other surprises as well, including insights into how the brain adapts to extended food insufficiency and how migrating cells follow a path through the body. It’s worth looking back on some of the best of that work before the revelations of the coming year give us a new perspective on ourselves again.

Harol Bustos for Quanta Magazine

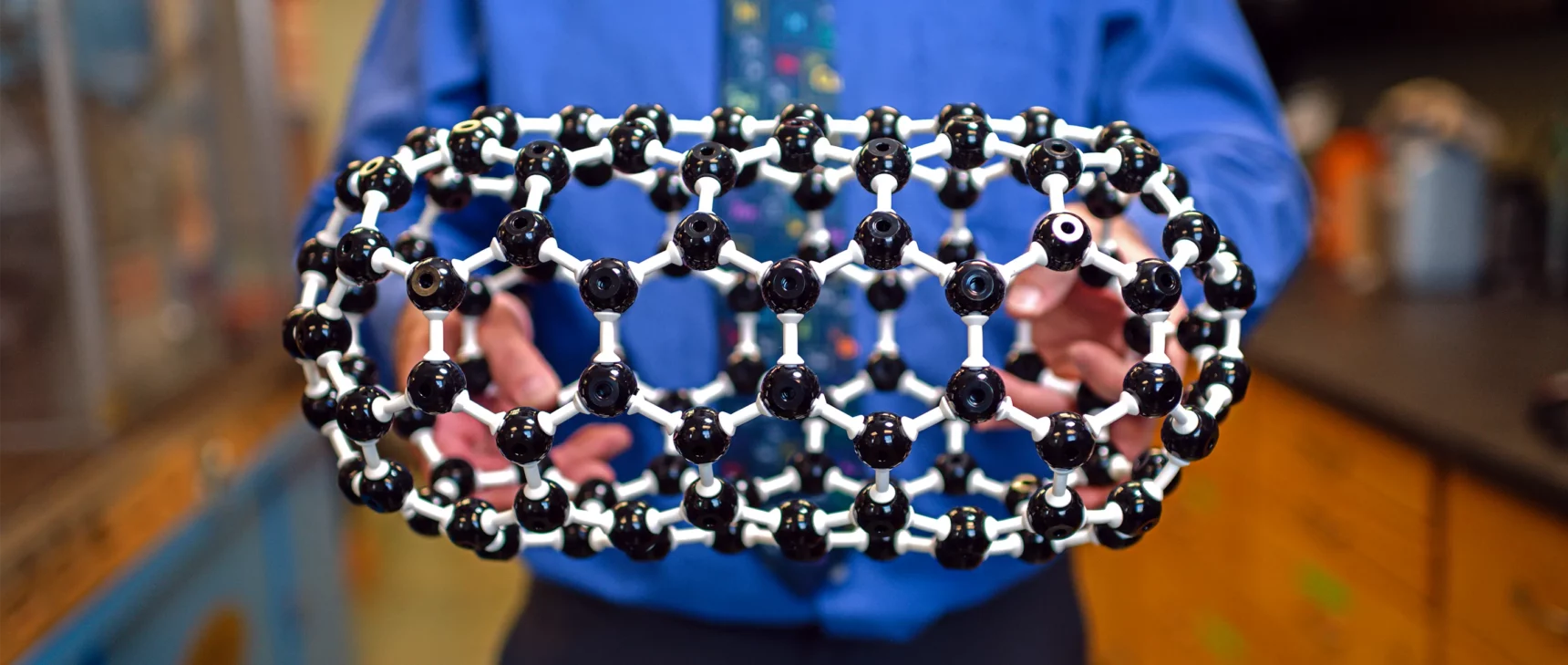

A Turning Point in Alzheimer’s Research?

Many people connected to Alzheimer’s disease, either through research or through personal ties to patients, hoped that 2022 would be a banner year. Major clinical trials would finally reveal whether two new drugs addressing the perceived root cause of the disease worked. Unfortunately, the results fell short of expectations. One of the drugs, lecanemab, showed potential for slightly slowing the cognitive decline of some patients but was also linked to sometimes fatal side effects; the other, gantenerumab, was deemed an outright failure.

The disappointing outcomes cap three decades of research based heavily on the theory that Alzheimer’s disease is caused by plaques of amyloid proteins that build up between brain cells and kill them. Mounting evidence suggests, however, that amyloid is only one component in a much more complex disease process that involves damaging inflammation and malfunctions in how cells recycle their proteins. Most of these ideas have been around for as long as the amyloid hypothesis but are only just beginning to receive the attention they deserve.

In fact, aggregations of proteins around cells are beginning to look like an almost universal phenomenon in aging tissues and not a condition peculiar to amyloid and Alzheimer’s disease, according to work by Stanford University researchers that was announced in a preprint last spring. The observation may be one more bit of evidence that worsening problems with protein management are a routine consequence of aging for cells.

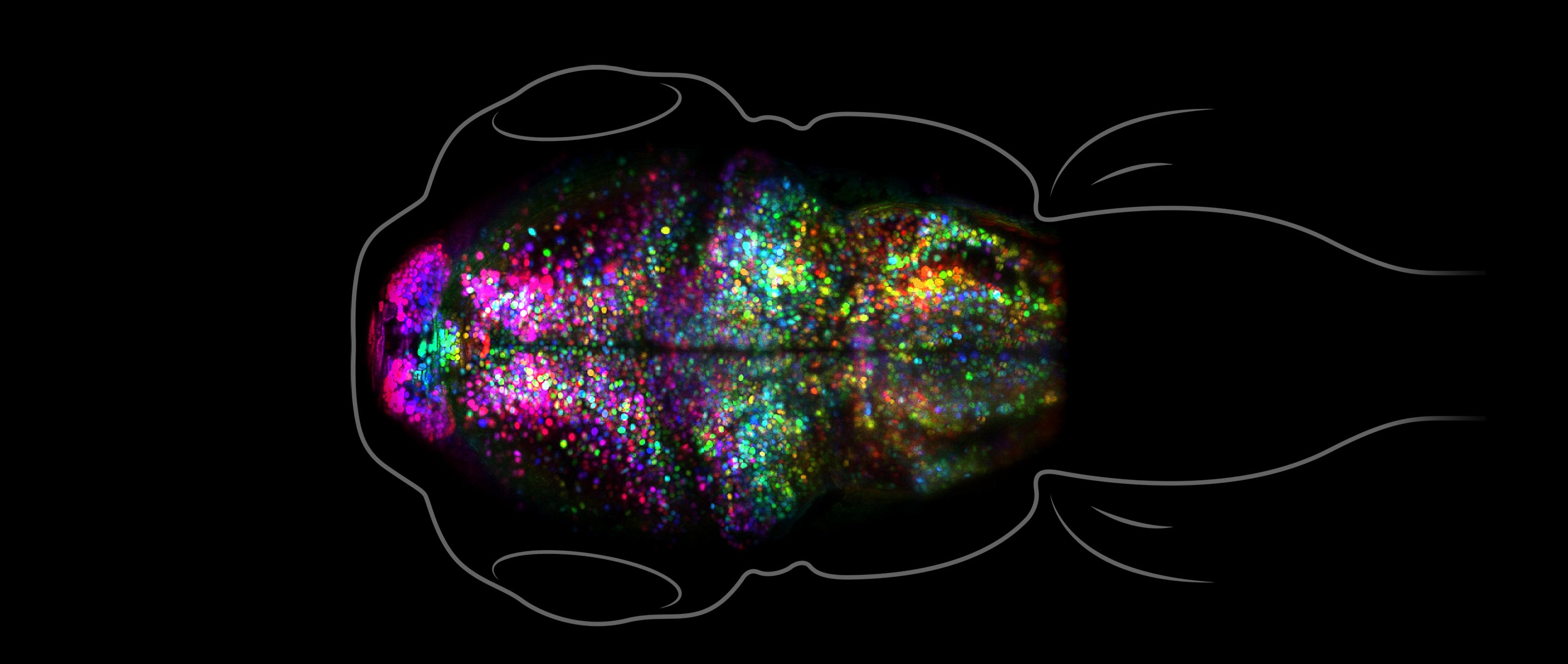

Andrey Andreev, Thai Truong, Scott Fraser; Translational Imaging Center, USC

Watching a Memory Form

Neuroscientists have long understood a lot about how memories form — in principle. They’ve known that as the brain perceives, feels and thinks, the neural activity that gives rise to those experiences strengthens the synaptic connections between the neurons involved. Those lasting changes in our neural circuitry become the physical records of our memories, making it possible to re-evoke the electrical patterns of our experiences when they are needed. The exact details of that process have nevertheless been cryptic. Early this year, that changed when researchers at the University of Southern California described a technique for visualizing those changes as they occur in a living brain, which they used to watch a fish learn to associate unpleasant heat with a light cue. To their surprise, while this process strengthened some synapses, it deleted others.

The information content of a memory is only part of what the brain stores. Memories are also encoded with an emotional “valence” that categorizes them as a positive or negative experience. Last summer, researchers reported that levels of a single molecule released by neurons, called neurotensin, seem to act as flags for that labeling.

Samuel Velasco/Quanta Magazine

Molecular Ecosystems Started Life

Life on Earth began with the first appearance of cells roughly 3.8 billion years ago. But paradoxically, before there were cells, there must have been collections of molecules doing surprisingly lifelike things. Over the past decade, researchers in Japan have been conducting experiments with RNA molecules to learn whether a single type of replicating molecule could evolve into a throng of different replicators, as researchers on the origin of life have theorized must have happened in nature. The Japanese scientists found that this diversification did occur, with various molecules coevolving into competing hosts and parasites that rose and fell in dominance. Last March, the scientists reported a new development: The diverse molecules had started working together in a more stable ecosystem. Their work suggests that RNAs and other molecules in the prebiotic world could likewise have coevolved to lay the foundations of cellular life.

Self-replication is often treated as the essential first step in any origin-of-life hypothesis, but it doesn’t have to be. This year, Nick Lane and other evolutionary biologists continued to find evidence that before cells existed, systems of “proto-metabolism” involving complex sets of energetic reactions might have arisen in the porous materials near hydrothermal vents.

How Body Cells Find Their Place

How does a single fertilized egg cell grow into an adult human body with upward of 30 trillion cells in more than 200 specialized categories? It’s the quintessential mystery of development. For much of the past century, the predominant explanation has been that chemical gradients established in various parts of the developing body guide cells to where they’re needed and tell them how to differentiate into the constituents of skin, muscles, bones, brain and other organs.

But chemicals now seem to be only part of the answer. Recent work suggests that while cells do use chemical gradient clues to guide their navigation, they also follow patterns of physical tension in the tissues that surround them, like tightrope walkers crossing a taut cable. Physical tension does more than tell cells where to go. Other work reported in May showed that mechanical forces inside an embryo also help induce sets of cells to become specific structures, such as feathers instead of skin.

Meanwhile, synthetic biologists, researchers who take an engineering approach to the study of life, made important progress in understanding the kinds of genetic algorithms that control how cells differentiate in response to chemical cues. A team at Caltech demonstrated an artificial network of genes that could stably transform stem cells into a number of more specialized cell types. They haven’t identified what the natural genetic control system in cells is, but the success of their model suggests that whatever the real system is, it probably doesn’t need to be much more complicated.

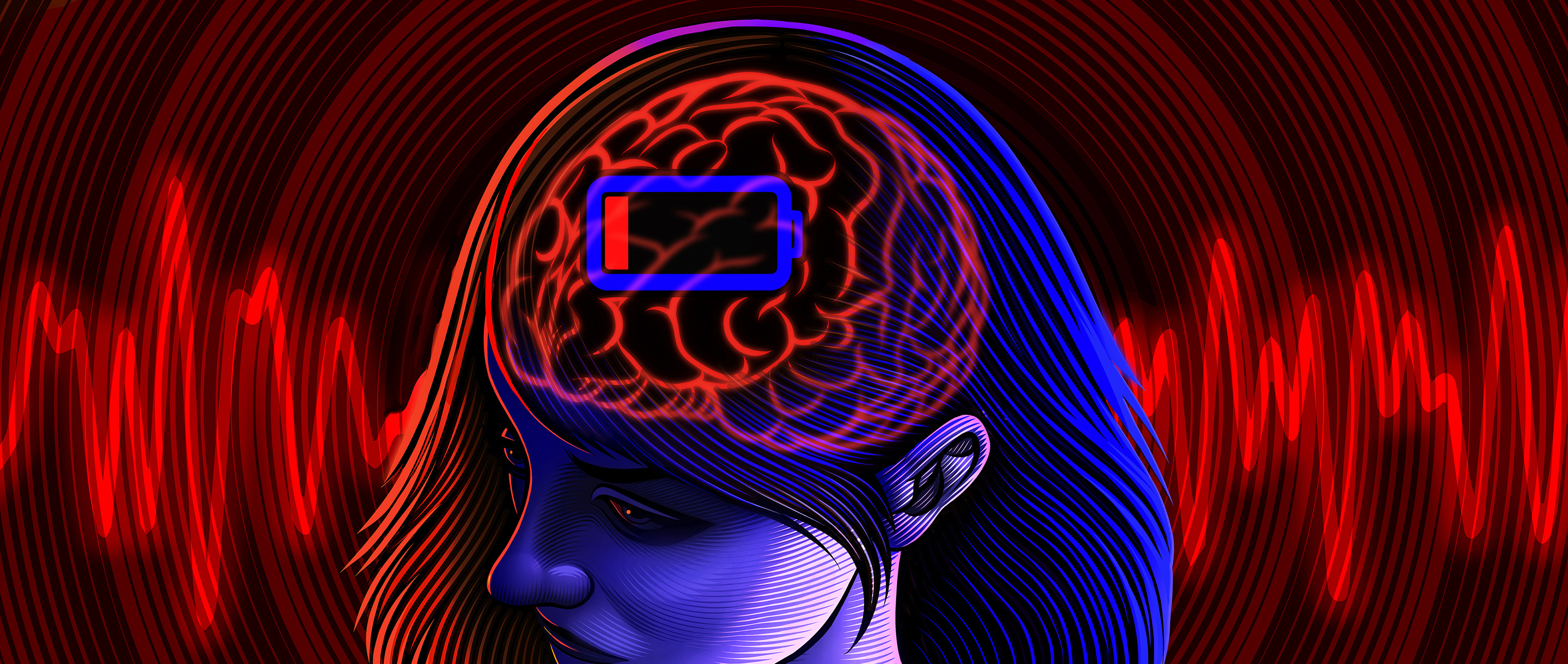

Matt Curtis for Quanta Magazine

What the Low-Energy Brain Sees

The brain is the most energy-hungry organ in the body, so perhaps it’s not surprising that evolution devised an emergency strategy to help brains cope with lengthy periods of food deficiency. Researchers at the University of Edinburgh discovered that when mice have to survive on short rations for weeks on end, their brains start to operate in the equivalent of a “low power” mode.

In this state, neurons in the visual cortex use almost 30% less energy at their synapses. From an engineering standpoint, it’s a neat solution for stretching the brain’s energy resources, but there’s a catch. In effect, the low-power mode reduces the resolution of the animal’s vision by making the visual system process signals less precisely.

An engineering view of the brain also recently improved our understanding of another sensory system: our sense of smell. Researchers have been trying to improve the ability of computerized “artificial noses” to recognize smells. Chemical structures alone go a long way toward defining the smells we associate with various molecules. But new work suggests that our sense of how molecules smell also reflects the metabolic processes that create those molecules in nature. Neural networks that included metabolic information in their analyses came significantly closer to classifying smells the way humans do.

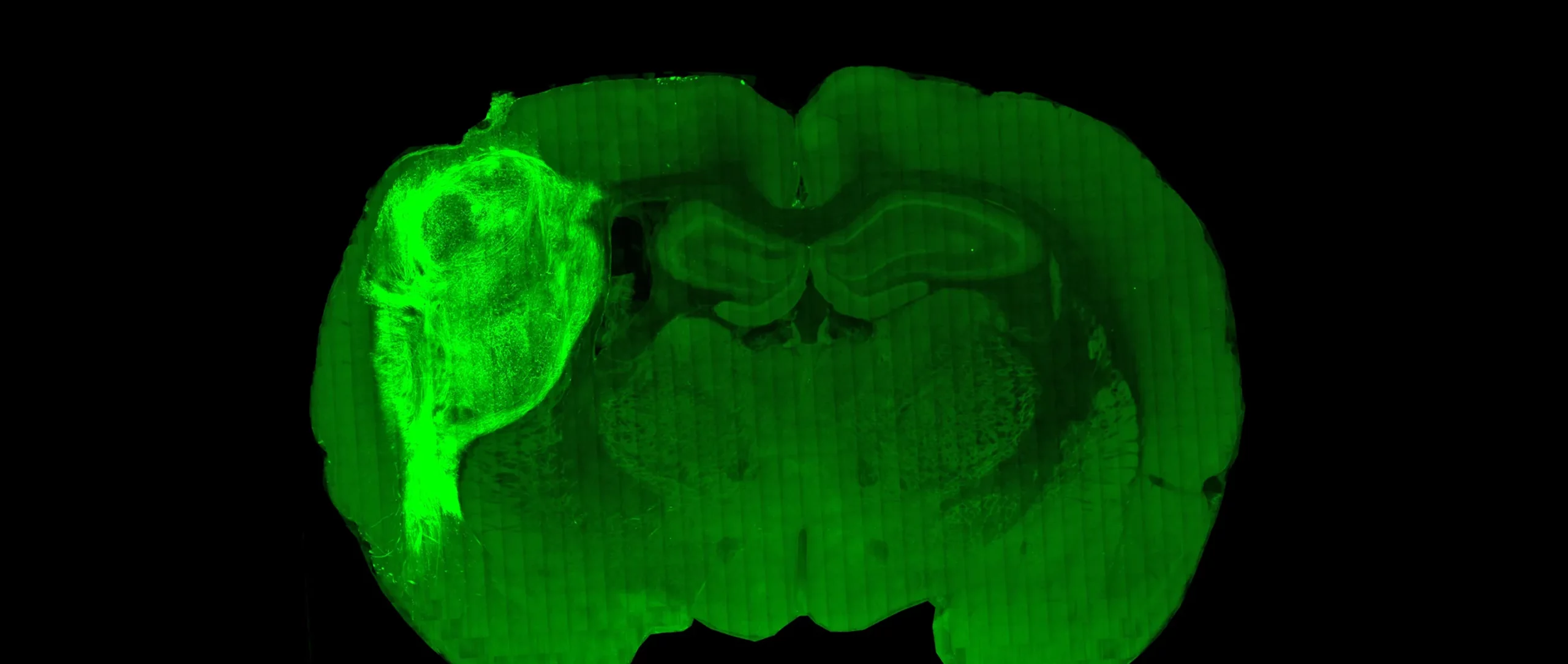

Paşca Lab, Stanford University

Human Brain Cells Connect Inside Animal Brains

A living human brain is still a maddeningly difficult thing for neuroscientists to study: The skull obstructs their view and ethical considerations rule out many potentially informative experiments. That’s why researchers have begun growing isolated brain tissue in the laboratory and letting it form “organoids” with physical and electrical similarities to real brains. This year, the neuroscientist Sergiu Paşca and his colleagues showed how far those similarities go by implanting human brain organoids into newborn laboratory rats. The human cells integrated themselves into the animals’ neural circuitry and took on a role in their sense of touch. Moreover, the transplanted neurons looked healthier than ones growing in isolated organoids, which suggests, as Paşca noted in an interview with Quanta, the importance of providing neurons with inputs and outputs. The work points the way toward developing better experimental models for human brains in the future.