The Computing Pioneer Helping AI See

Alexei Efros studies how machines can understand the visual world.

Peter DaSilva for Quanta Magazine


When Alexei Efros moved with his family from Russia to California as a teenager in the 1980s, he brought his Soviet-built personal computer, an Elektronika BK-0010. The machine had no external storage and overheated every few hours, so in order to play video games, he had to write code, troubleshoot, and play fast — before the machine shut down. That cycle, repeated most days, accelerated his learning.

“I was very lucky that this Soviet computer wasn’t very good!” said Efros, who laughs easily and speaks with a mild Russian accent. He doesn’t play as many games nowadays, but that willingness to explore and make the most of his tools remains.

In graduate school at the University of California, Berkeley, Efros began hiking and exploring the Bay Area’s natural beauty. It wasn’t long before he began combining his passion for computers with his enjoyment of these sights. He developed a way to seamlessly patch holes in photographs — for example, replacing an errant dumpster in a photo of a redwood forest with natural-looking trees. Adobe Photoshop later adopted a version of the technique for its “content-aware fill” tool.

Now a computer scientist at the Berkeley Artificial Intelligence Research Lab, Efros combines massive online data sets with machine learning algorithms to understand, model and re-create the visual world. In 2016, the Association for Computing Machinery awarded him its Prize in Computing for his work creating realistic synthetic images, calling him an “image alchemist.”

Efros says that, despite researchers’ best efforts, machines still see fundamentally differently than we do. “Patches of color and brightness require us to connect what we’re seeing now to our memory of where we have seen these things before,” Efros said. “This connection gives meaning to what we’re seeing.” All too often, machines see what is there in the moment without connecting it to what they have seen before.

But difference can have advantages. In computer vision, Efros appreciates the immediacy of knowing whether an algorithm designed to recognize objects and scenes works on an image. Some of his computer vision questions — such as “What makes Paris look like Paris?” — have a philosophical bent. Others, such as how to address persistent bias in data sets, are practical and pressing.

“There are a lot of people doing AI with language right now,” Efros said. “I want to look at the wholly visual patterns that are left behind.” By improving computer vision, he hopes not only to enable better practical applications, like self-driving cars, but also to mine those insights to better understand what he calls “human visual intelligence”: how people make sense of what they see.

Quanta Magazine met with Efros in his Berkeley office to talk about scientific superpowers, the difficulty of describing visuals, and how dangerous artificial intelligence really is. The interview has been condensed and edited for clarity.

Efros works with his students at the University of California, Berkeley.

Peter DaSilva for Quanta Magazine

How has computer vision improved since you were a student?

When I started my Ph.D., there was almost nothing useful. Some robots were screwing some screws using computer vision, but it was limited to this kind of very controlled industrial setting. Then, suddenly, my camera detected faces and made them sharper.

Now, computer vision is in a huge number of applications, such as self-driving cars. It’s taking longer than some people initially thought, but still, there’s progress. For somebody who doesn’t drive, this is extremely exciting.

Wait, you don’t drive?

No, I don’t see well enough to drive! [Laughs.] For me, this would be such a game changer — to have a car that would drive me to places.

I didn’t realize that your sight prevented you from driving. Can you see the images you work with on a computer monitor?

If I make them big enough. You can see that my fonts are quite big. I was born not seeing well. I think that everybody else is a weirdo for having crazy good vision.

Did your non-weirdo status influence your research direction?

Who knows? There was definitely no sense of “Oh, I don’t see well, so I’m going to make computers that see better.” No, I never had that as a motivation.

To be a good scientist, you need a secret superpower. You need to do something better than everybody else. The great thing about science is that we don’t all have the same superpower. Maybe my superpower has been that, because I don’t see very well, I might have more insight into the vision problem.

Efros in his Berkeley office. “I was born not seeing well. I think that everybody else is a weirdo for having crazy good vision.”

Peter DaSilva for Quanta Magazine

I understood early on the importance of prior data when looking at the world. I couldn’t see very well myself, but my memory of prior experiences filled in the holes enough that I could function basically as well as a normal person. Most people don’t know that I don’t see well. That gave me, I think, this unique intuition that it might be less about the pixels and more about the memory.

Computers only see what’s there now, whereas we see the moment connected to the tapestry of everything we’ve seen before.

Is it even possible to express in words the subtle visual patterns that, for example, make Paris look like Paris?

When you’re in a particular city, sometimes you just know what city you’re in — there’s this je ne sais quoi, even though you’ve never been to that particular street corner. That’s extremely hard to describe in words, but it’s right there in the pixels.

[For Paris], you could talk about how it’s usually six-story buildings, and usually there are balconies on the fourth story. You could put some of this into words, but a lot is not linguistic. To me that’s exciting.

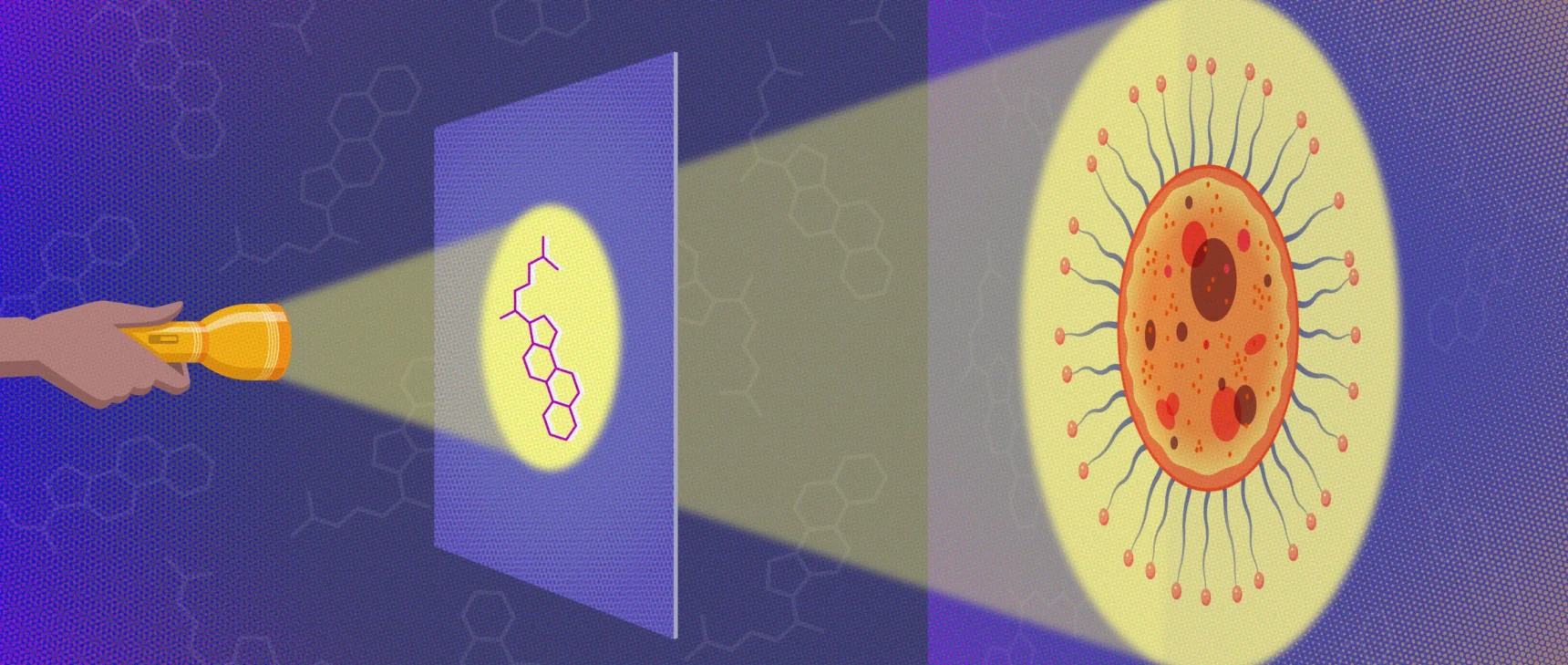

Your recent work involves teaching computers to ingest visual data in ways that mimic human sight. How does that work?

Right now, computers have a ginormous data set: billions of random images scraped off the internet. They take random images, process one image, then take another random image, process that, etc. You train your [computer’s visual] system by going over and over this data set.

The way that we — biological agents — ingest data is very different. When we are faced with a novel situation, it is the one and only time this data will be there for us. We’ve never been in this exact situation, in this room, with this lighting, dressed this way. First, we use this data to do what we need to do, to understand the world. Then, we use this data to learn from it, [to predict] the future.

Since graduate school, Efros has enjoyed hiking and exploring the Bay Area.

Peter DaSilva for Quanta Magazine

Also, the data we see is not random. What you see now is very correlated to what you saw a few seconds ago. You can think of it as video. All of the video’s frames are correlated to each other, which is very different from how computers process the data.

I’m interested in getting our learning approach to be one in which computers see the data as it comes in, process it and learn from it as they go.

I imagine it’s not as simple as having computers look at videos instead of still images.

No, you still need [computers] to adapt. One approach we have is known as test-time training. The idea is that, as you’re looking at a sequence of images like a video, things might be changing, so you don’t want your model to be fixed. Just like a biological agent is always adapting to its surroundings, we want the computer to continuously adapt.

The standard paradigm is that you train first on a big data set, and then you deploy. Dall·E and ChatGPT were trained on the internet circa 2021, and then [their knowledge] froze. After that, they just spew out what they already know. A more natural way, [test-time training], is to have the model absorb the data and learn on the job, rather than having separate training and deployment phases.

There is definitely an issue with computers called domain shift, or data set bias: the idea that, if your training data is very different from the data you’re using when you deploy the system, things are not going to work very well. We’re making some progress, but we’re not quite there yet.
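
To make the contrast between “train, then deploy” and “learn on the job” concrete, here is a toy sketch in Python. It is not Efros’s test-time training method: the published test-time training work adapts an image model by solving a self-supervised task on incoming images, while this sketch uses plain online learning (one gradient step per observation) on a single number. The stream, the brightness values, the learning rate, and every function and class name are hypothetical, chosen only to illustrate a domain shift like the California-to-snow example.

```python
import random


def make_stream(n_before, n_after, seed=0):
    """Hypothetical data stream: mean brightness 0.3 during training
    ("California"), then an abrupt shift to 0.9 at deployment ("snow")."""
    rng = random.Random(seed)
    before = [0.3 + rng.gauss(0, 0.05) for _ in range(n_before)]
    after = [0.9 + rng.gauss(0, 0.05) for _ in range(n_after)]
    return before, after


class FrozenModel:
    """Standard paradigm: fit once on the training data, then deploy unchanged."""

    def __init__(self, train_data):
        self.estimate = sum(train_data) / len(train_data)

    def predict(self):
        return self.estimate

    def observe(self, x):
        pass  # parameters never change after deployment


class OnlineModel(FrozenModel):
    """Same starting point, but keeps learning from each new observation."""

    def __init__(self, train_data, lr=0.1):
        super().__init__(train_data)
        self.lr = lr

    def observe(self, x):
        # One gradient-descent step on the squared error (x - estimate)^2 / 2.
        self.estimate += self.lr * (x - self.estimate)


def deployment_error(model, stream):
    """Mean absolute error, predicting each value before the model sees it."""
    total = 0.0
    for x in stream:
        total += abs(x - model.predict())
        model.observe(x)
    return total / len(stream)


before, after = make_stream(500, 200)
print("frozen:", deployment_error(FrozenModel(before), after))
print("online:", deployment_error(OnlineModel(before), after))
```

After the shift, the frozen model’s error stays close to the size of the shift for the whole stream, while the online model starts just as wrong but closes most of the gap within a few dozen observations. The trade-off is that a model which always adapts can also be pulled off course by noisy or misleading data, which is one reason this remains an open research problem.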


Is the problem similar to how banks warn investors that past performance may not predict future earnings?

That’s exactly the problem. In the real world, things change. For example, if a field mouse ends up in a house, it’ll do fine. You will never get rid of that mouse! [Laughs.] It was born in a field, has never been in a house before, and yet it will find and eat all of your supplies. It adapts very quickly, learns and adjusts to the new environment.

That ability is not there in current [computer vision] systems. With self-driving, if you train a car in California and then you test it in Minnesota — boom! — there is snow. It has never seen snow. It gets confused.

Now people address this by getting so much data that [the system] has basically seen everything. Then it doesn’t need to adapt. But that still misses rare events.

It sounds like AI systems are the way forward, then. Where does that leave humans?

The work coming out of OpenAI both on the text front (ChatGPT) and on the image front (Dall·E) has been incredibly exciting and surprising. It reaffirms this idea that, once you have enough data, reasonably simple methods can produce surprisingly good results.


But ChatGPT made me realize that humans are not as creative and exceptional as we like to see ourselves. Most of the time, the pattern recognizers in us could be taking over. We speak in sentences made from phrases or sentences we have heard before. Of course, we do have flights of fancy and creativity. We’re able to do things that computers cannot do — at least for now. But most of the time, we could be replaced by ChatGPT, and most people wouldn’t notice.

It’s humbling. But it’s also a motivator to break out of those patterns, to try to have more flights of fancy, to not get stuck in cliches and pastiches.

Some scientists have expressed concern about the risks AI poses to humanity. Are you worried?

A lot of researchers that I have great respect for have been warning about artificial intelligence. I don’t want to minimize those words. A lot of those are valid points. But one needs to put things in perspective.

Right now, the biggest danger to civilization comes not from computers but from humans. Nuclear Armageddon and climate change are much more pressing worries. The Russian Federation has attacked its completely innocent neighbor. I was born in Russia, and it’s particularly horrifying that my former countrymen could be doing this. I’m doing all I can to make sure this remains topic number one.

We may think that the AI revolution is the most important event of our lifetime. But the AI revolution will be nothing if we don’t save the free world.

So you don’t worry at all about AI?

No. You know, I love to worry. I am a great worrier! But if Putin destroying the world is here [raises hand to his head] and climate change is here [lowers hand to his shoulders], then AI is down here [lowers hand to his feet]. It’s fractions of a percent of my worry compared to Putin and climate change.